Amazon has launched a public preview of AI agent integration for Amazon WorkSpaces, allowing LLM-driven agents to operate legacy desktop applications inside a secure, virtualized desktop. Through a Model Context Protocol (MCP) interface, agents can “see” and “click” through UIs without requiring modern APIs or expensive application refactoring.

For years, the enterprise AI dream has hit a brick wall: the legacy app. We’re talking about the monolithic, grey-windowed software from 2004 that still runs the world’s logistics, healthcare, and banking systems. These apps don’t have REST APIs. They don’t have webhooks. They are essentially black boxes that require a human to sit in a chair and click a button. Until this week’s preview launch, the only solution was “modernization”, a polite industry term for a multi-million-dollar rewrite that usually fails.

AWS is flipping that strategy. Instead of forcing the software to change, it is giving the AI a desk.

The Death of the API Bottleneck

The technical brilliance of this move isn’t the virtualization; AWS has been refining WorkSpaces for a decade. The brilliance is in the agentic interface. By treating the virtual desktop itself as the interface, AWS sidesteps the need to build, document, and teach the model a custom API schema for every legacy tool in existence.

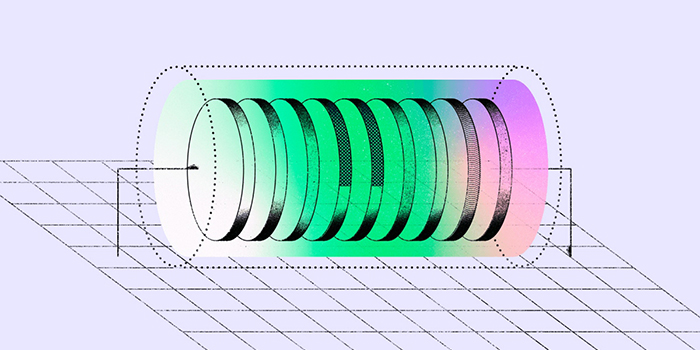

In a traditional setup, if you wanted an AI to refill a prescription in a legacy pharmacy system, you’d need a middleware layer to translate natural language into a specific API call. If that API didn’t exist, you were stuck. Now, the agent uses a Vision-Language Model (VLM) to interpret the screen. It takes a PNG screenshot, locates the “Patient Record” field by its spatial coordinates, and sends a simulated mouse click there. The result is, for all intents and purposes, a “headless” employee.
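The screenshot-to-click step can be sketched in a few lines. This is a minimal illustration, assuming the VLM returns the target element as a bounding box in normalized coordinates (fractions of screen width and height from 0 to 1), as some vision models do. The `NormalizedBox` type, the `bbox_to_click` function, and the action dictionary shape (modeled on the comment in the conceptual loop later in this article) are hypothetical, not part of any published WorkSpaces API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NormalizedBox:
    """Hypothetical VLM output: element bounds as fractions of screen width/height."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def bbox_to_click(box: NormalizedBox, screen_w: int, screen_h: int, reason: str) -> dict:
    """Convert a normalized bounding box into a pixel-space click at the box's center."""
    if not (0.0 <= box.x_min < box.x_max <= 1.0 and 0.0 <= box.y_min < box.y_max <= 1.0):
        raise ValueError(f"invalid normalized box: {box}")
    # Center of the box, scaled to the screenshot's pixel resolution.
    cx = round((box.x_min + box.x_max) / 2 * screen_w)
    cy = round((box.y_min + box.y_max) / 2 * screen_h)
    return {"type": "click", "coords": [cx, cy], "reason": reason}


# Example: the VLM located a "Patient Record" field on a 1920x1080 desktop.
patient_field = NormalizedBox(0.40, 0.25, 0.60, 0.30)
action = bbox_to_click(patient_field, 1920, 1080, "Open the 'Patient Record' field")
print(action["coords"])  # [960, 297]
```

Normalizing first keeps the model's answer independent of the desktop resolution; only the final conversion needs to know the screen size.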

This is a massive shift in the “AI War.” While Microsoft is pushing Copilot deeply into the Office 365 ecosystem, AWS is playing the “infrastructure” game. They aren’t just selling a chatbot; they are selling the environment where the chatbot lives and works.

Deconstructing the Model Context Protocol (MCP) Layer

The linchpin here is the Model Context Protocol (MCP). For those not deep in the weeds, MCP is an open standard for connecting LLM applications to tools and data, which lets developers swap out the “brain” (the LLM) without rewriting the “hands” (the tools the LLM uses). By making WorkSpaces MCP-compliant, AWS ensures that this isn’t a walled garden.
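Under the hood, MCP messages are JSON-RPC 2.0. The sketch below shows the shape of a tool description (a name, a description, and a JSON Schema for its inputs) and of a `tools/call` request. This is what makes the “hands” model-agnostic: any MCP client sends the same request regardless of which LLM chose the action. The tool name `click` and its `x`/`y` arguments are hypothetical; the actual tools exposed by a WorkSpaces MCP endpoint may differ.

```python
import json

# A hypothetical tool, described the way an MCP server advertises tools in a
# `tools/list` result: a name, a human-readable description, and a JSON Schema.
CLICK_TOOL = {
    "name": "click",
    "description": "Click at a pixel coordinate on the remote desktop.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "x": {"type": "integer", "minimum": 0},
            "y": {"type": "integer", "minimum": 0},
        },
        "required": ["x", "y"],
    },
}


def make_tool_call(request_id: int, tool: dict, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` request after a basic required-field check."""
    missing = [k for k in tool["inputSchema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }
    return json.dumps(message)


# Whichever model chose the action, the wire message to the server is identical.
wire = make_tool_call(1, CLICK_TOOL, {"x": 450, "y": 200})
print(wire)
```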

You can point a LangChain agent or a CrewAI swarm at a WorkSpaces MCP endpoint, and the agent immediately gains the ability to interact with any software installed on that image. This reduces the risk of platform lock-in. If a company decides that a newer, more efficient model from Anthropic or a specialized open-source model hosted on Hugging Face is better for its workflow, it can swap the model while keeping the WorkSpaces infrastructure intact.
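One way to picture the swap: the code that drives the desktop depends only on a narrow interface, and each model sits behind it. The sketch below is a minimal illustration using Python’s `typing.Protocol`. The class names and the tool allowlist are hypothetical, and the stubs return canned actions rather than calling any real model API.

```python
from typing import Protocol


class ActionModel(Protocol):
    """Any 'brain' that maps (screen description, goal) to the next action."""

    def next_action(self, screen: str, goal: str) -> dict: ...


class HostedModelStub:
    """Stand-in for a commercial hosted model (hypothetical; no real API call)."""

    def next_action(self, screen: str, goal: str) -> dict:
        return {"tool": "click", "arguments": {"x": 450, "y": 200}}


class OpenWeightsModelStub:
    """Stand-in for a self-hosted open-weights model (hypothetical)."""

    def next_action(self, screen: str, goal: str) -> dict:
        return {"tool": "click", "arguments": {"x": 452, "y": 198}}


ALLOWED_TOOLS = {"click", "type_text"}  # hypothetical tool names


def plan_step(model: ActionModel, screen: str, goal: str) -> dict:
    # The desktop-side code depends only on the ActionModel interface,
    # so swapping the model is a one-line change at the call site.
    action = model.next_action(screen, goal)
    if action.get("tool") not in ALLOWED_TOOLS:
        raise ValueError(f"model proposed an unsupported tool: {action}")
    return action


for model in (HostedModelStub(), OpenWeightsModelStub()):
    print(type(model).__name__, plan_step(model, "search screen", "find patient"))
```

Validating the proposed action at this boundary, rather than inside each model wrapper, means a new model inherits the same guardrails automatically.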

Latency overhead is the biggest question mark. Moving from a direct API call (milliseconds) to a screenshot-analyze-click loop (seconds per step) is a clear regression in speed. However, for a business process that takes a human ten minutes (600 seconds), a thirty-second agentic loop is still roughly a 20x speedup.
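The 20x figure is simple arithmetic: a ten-minute task is 600 seconds, and 600 / 30 = 20. The sketch below makes the assumption behind the 30-second figure explicit by modeling the loop as a number of steps times a per-step cost. The step count and the per-step timings are illustrative guesses, not measured WorkSpaces numbers.

```python
def agent_task_seconds(steps: int, capture_s: float, inference_s: float, action_s: float) -> float:
    """Wall-clock time for a screenshot-analyze-click loop of `steps` iterations."""
    return steps * (capture_s + inference_s + action_s)


def speedup(human_seconds: float, agent_seconds: float) -> float:
    """How many times faster the agent finishes the same task."""
    return human_seconds / agent_seconds


# Hypothetical budget: 10 UI steps, each ~0.5 s capture + 2 s VLM inference + 0.5 s action.
agent_s = agent_task_seconds(steps=10, capture_s=0.5, inference_s=2.0, action_s=0.5)
human_s = 10 * 60  # the ten-minute human task from the text
print(f"agent: {agent_s:.0f} s, speedup: {speedup(human_s, agent_s):.0f}x")  # agent: 30 s, speedup: 20x
```

The same model shows where the speedup erodes: VLM inference dominates each step, so a workflow with many more UI steps, or a slower model, shrinks the advantage quickly.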

Agentic RPA: Why Semantic Vision Beats Brittle Scripts

We need to be clear: this is not the Robotic Process Automation (RPA) of five years ago. Old-school RPA was fragile because many scripts relied on hard-coded X/Y coordinates. If a developer moved a “Submit” button three pixels to the left, the script broke.

Modern AI agents operate on semantic understanding. They don’t look for “Coordinate 402, 115”; they look for “the button that looks like a save icon and is labeled ‘Confirm’.” This makes the workflow far more resilient. If the legacy app gets a UI update, the agent doesn’t crash; it simply looks for the button again.
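The difference can be shown with a toy model of the screen. The sketch below assumes the agent already has a list of detected UI elements with labels, roles, and pixel bounds; the `UIElement` shape and helper names are hypothetical, and a real VLM returns something looser. A fixed-coordinate click stops working once the button moves, while a lookup by label and role still finds it.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class UIElement:
    """A detected on-screen element (hypothetical shape of a VLM or accessibility-tree result)."""
    label: str
    role: str
    x: int  # left edge in pixels
    y: int  # top edge in pixels
    w: int
    h: int

    def contains(self, px: int, py: int) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h

    def center(self) -> tuple[int, int]:
        return (self.x + self.w // 2, self.y + self.h // 2)


def legacy_rpa_click(elements: list[UIElement], px: int, py: int) -> UIElement | None:
    """Old-style RPA: click a hard-coded point and hope the right control is there."""
    return next((e for e in elements if e.contains(px, py)), None)


def semantic_click(elements: list[UIElement], label: str, role: str = "button") -> tuple[int, int] | None:
    """Semantic lookup: find the control by what it is, then click wherever it is now."""
    match = next((e for e in elements if e.role == role and e.label.lower() == label.lower()), None)
    return match.center() if match else None


before = [UIElement("Confirm", "button", 400, 100, 80, 30)]
after_update = [UIElement("Confirm", "button", 520, 140, 80, 30)]  # the UI was redesigned

print(legacy_rpa_click(before, 402, 115))        # the Confirm button
print(legacy_rpa_click(after_update, 402, 115))  # None: the script now misses
print(semantic_click(after_update, "Confirm"))   # (560, 155)
```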

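```
// Conceptual agent loop in a WorkSpaces environment.
// WorkSpaces.captureScreen, VLM.analyze, WorkSpaces.execute and verify_state are
// illustrative names, not a published AWS API.
task_complete = false;
while (!task_complete) {
    screenshot = WorkSpaces.captureScreen();            // observe: grab the current desktop
    action = VLM.analyze(screenshot, current_goal);     // decide: ask the VLM for the next UI action
    // action = { "type": "click", "coords": [450, 200], "reason": "Clicking 'Search' to find patient" }
    WorkSpaces.execute(action);                         // act: send the simulated click or keystroke
    after = WorkSpaces.captureScreen();                 // re-observe once the UI has responded
    task_complete = verify_state(after, current_goal);  // verify against the new screen, not the old one
}
```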
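For readers who want to run the loop, here is a self-contained simulation. Everything in it is a stand-in: `FakeDesktop` replays a scripted list of screens instead of a real WorkSpaces session, and `fake_vlm` is a hypothetical lookup table instead of a vision model. It also adds a step budget (`max_steps`, an assumption, not a documented WorkSpaces setting) so that an agent that never reaches the goal screen stops instead of looping forever.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FakeDesktop:
    """A scripted stand-in for a remote desktop: each executed action advances one screen."""
    screens: list[str]
    index: int = 0
    log: list[str] = field(default_factory=list)

    def capture_screen(self) -> str:
        return self.screens[self.index]

    def execute(self, action: dict) -> None:
        self.log.append(action["reason"])
        self.index = min(self.index + 1, len(self.screens) - 1)


def fake_vlm(screen: str, goal: str) -> dict:
    """Hypothetical policy: pick the next click based on the visible screen."""
    plan = {"login": "Click 'Sign in'", "search": "Click 'Search' to find patient"}
    return {"type": "click", "coords": [450, 200], "reason": plan.get(screen, "Click 'Refill'")}


def run_agent(desktop: FakeDesktop, goal: str, done_screen: str, max_steps: int = 10) -> bool:
    for _ in range(max_steps):  # step budget so a confused agent cannot loop forever
        screenshot = desktop.capture_screen()  # observe
        if screenshot == done_screen:          # verify against the current screen
            return True
        desktop.execute(fake_vlm(screenshot, goal))  # decide, then act
    return desktop.capture_screen() == done_screen  # final check after the last action


desk = FakeDesktop(["login", "search", "record", "refill_confirmed"])
ok = run_agent(desk, "refill prescription", done_screen="refill_confirmed")
print(ok, desk.log)
```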

As Gartner has noted, the sheer volume of legacy technical debt in the Fortune 500 is staggering. By enabling AI agents to operate in a “human-like” environment, AWS is essentially monetizing the inability of enterprises to update their code.

The Security Perimeter of the Headless Desktop

From a cybersecurity perspective, this is a far safer way to do it. If you give an AI agent direct access to a server, one prompt injection could lead to a catastrophic data wipe. By confining the agent to a WorkSpace, AWS applies enterprise-grade isolation: a misbehaving agent can only reach what that single desktop session can reach.

The agent doesn’t have “root” access to the network; it has “user” access to a desktop. Everything is routed through AWS Identity and Access Management (IAM), and every single action is logged in AWS CloudTrail. If an agent goes rogue and starts deleting records, there is a forensic audit trail of exactly what the agent “saw” and “clicked.”
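To make the “what it saw and clicked” trail concrete, the sketch below writes one JSON line per action and ties it to a SHA-256 fingerprint of the screenshot the agent acted on. The record layout and field names are assumptions for illustration only; they are not CloudTrail’s event format, and this sketch does not reflect how WorkSpaces actually records agent actions.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(agent_id: str, step: int, screenshot_png: bytes, action: dict) -> str:
    """One JSON line tying an action to a fingerprint of the screen the agent saw.

    Hypothetical format for illustration; this is not CloudTrail's event schema.
    """
    record = {
        "time": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "step": step,
        # A SHA-256 of the screenshot lets an auditor match the entry to the stored PNG.
        "screenshot_sha256": hashlib.sha256(screenshot_png).hexdigest(),
        "action": action,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    "pharmacy-agent-01",
    3,
    b"fake-png-bytes",
    {"type": "click", "coords": [450, 200], "reason": "Delete record"},
)
print(line)
```

Hashing the image rather than embedding it keeps each log line small while still proving which stored screenshot the action was based on.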

"The risk shift here is critical. We are moving from 'API security'—where you secure the endpoint—to 'Session security,' where you secure the environment. For regulated industries like FinTech or HealthTech, the ability to audit a visual session is far more valuable than a JSON log of API calls." — (Industry Perspective: Senior Cloud Security Architect)

The inclusion of “screenshot storage” for debugging is a nod to the reality of LLM hallucinations. When an agent fails, the developer doesn’t have to guess why; they can simply scroll through the PNG history and see exactly where the agent got confused by the UI.
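Scrolling through the PNG history can be partly automated if each stored screenshot is paired with what the agent expected to see next. The sketch below assumes such a trace exists (the `TraceStep` fields and file names are hypothetical) and returns the first step where the observed screen diverged from the agent’s expectation, which is usually the place to start reading.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TraceStep:
    """One recorded step: the screenshot file, the action, the expected and observed next screen."""
    screenshot: str
    action_reason: str
    expected_screen: str
    observed_screen: str


def first_divergence(trace: list[TraceStep]) -> TraceStep | None:
    """Return the first step whose outcome did not match the agent's expectation."""
    for step in trace:
        if step.expected_screen != step.observed_screen:
            return step
    return None


trace = [
    TraceStep("step-001.png", "Click 'Search'", "search_results", "search_results"),
    TraceStep("step-002.png", "Click first result", "patient_record", "error_dialog"),
    TraceStep("step-003.png", "Click 'Refill'", "refill_form", "error_dialog"),
]
culprit = first_divergence(trace)
print(culprit.screenshot if culprit else "no divergence")  # step-002.png
```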

The 30-Second Verdict

- The Win: Instant AI capability for apps that haven’t been updated since the Bush administration. No API development required.

- The Catch: Higher latency than native APIs and a reliance on VLM accuracy for complex UIs.

- The Strategy: AWS is positioning itself as the “OS for Agents,” making the cloud desktop the primary interface for the autonomous enterprise.

This isn’t just a feature update; it’s a recognition that the “API-first” world failed to account for the sheer weight of legacy software. By giving AI agents their own desktops, Amazon is building a bridge over the modernization gap, allowing companies to automate the present without having to perfectly build the future first.