Myrtle.ai, in partnership with VOLLO, claims to have halved the record latency for financial machine learning inference, sharply reducing the time between data ingestion and trade execution. The result targets high-frequency trading (HFT) environments, where microsecond advantages translate directly into alpha, and it raises the performance ceiling for real-time financial AI.

In the world of quantitative finance, latency is the metric that matters most. We aren’t talking about the slight lag of a webpage loading or the buffering of a 4K stream. We are talking about the “race to zero”: the relentless pursuit of executing trades in the time it takes a photon to travel a few hundred meters of fiber optic cable, where light covers roughly 200 meters per microsecond.

The announcement hitting the wires this week marks a pivotal shift. For years, the industry has been trapped in a trade-off: you could have a highly complex, accurate model that took too long to decide, or a primitive, fast model that missed the nuance of the market. Myrtle.ai and VOLLO claim to have broken that compromise.

The Microsecond War: Why Halving Latency is a Paradigm Shift

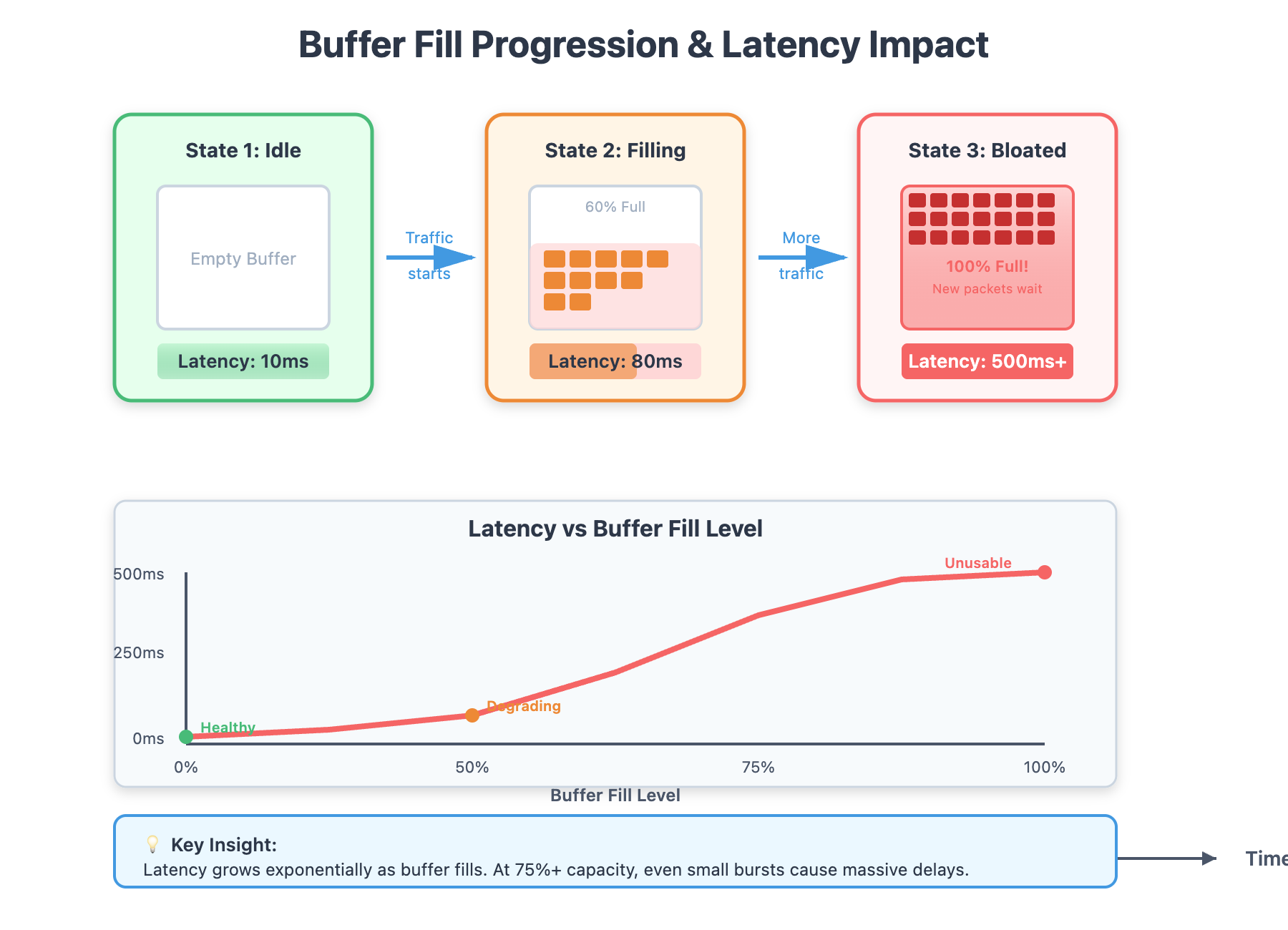

To understand why a 50% reduction in inference latency is a step change for the industry, you have to understand the inference pipeline. When a market tick arrives, the system must preprocess the data, feed it through a neural network (the inference phase), and trigger an order. If your competitor does this in 10 microseconds and you do it in 20, you aren’t just slower. You are irrelevant, because the opportunity is gone before your order arrives.
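The three stages described above can be timed one by one to see where the budget goes. The following is a minimal sketch rather than anything from Myrtle.ai or VOLLO: the functions `preprocess`, `infer`, and `make_order` are hypothetical stand-ins, the tick fields are invented, and a real trading stack would not run in Python. It only illustrates how a per-stage latency breakdown is measured.

```python
import time

def preprocess(tick):
    # Hypothetical feature step: price change since the previous tick, plus size.
    return [tick["price"] - tick["prev_price"], tick["size"]]

def infer(features):
    # Hypothetical stand-in for the neural network: a fixed linear score.
    weights = [0.8, 0.01]
    return sum(w * x for w, x in zip(weights, features))

def make_order(score):
    # Hypothetical decision rule: buy on a positive score, otherwise sell.
    return "BUY" if score > 0 else "SELL"

def timed_pipeline(tick):
    """Run the three stages and record how many nanoseconds each one took."""
    timings = {}
    value = tick
    stages = (("preprocess", preprocess), ("infer", infer), ("order", make_order))
    for name, stage in stages:
        start = time.perf_counter_ns()
        value = stage(value)
        timings[name] = time.perf_counter_ns() - start
    timings["total"] = sum(timings.values())
    return value, timings

order, timings = timed_pipeline({"price": 101.5, "prev_price": 101.0, "size": 10})
print(order, timings)
```

The absolute numbers printed here depend entirely on the machine. What carries over is the habit of attributing latency to individual stages before deciding which one to optimize.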

Most financial ML models have traditionally relied on heavy-duty GPUs. While GPUs are monsters at parallel processing, they suffer from significant “tail latency”—those random spikes in response time caused by memory bottlenecks or kernel scheduling overhead. VOLLO’s architecture addresses this by bypassing the traditional PCIe bottlenecks and optimizing the way tensors are moved through the chip.
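Tail latency is easier to see in numbers than in prose. The sketch below uses made-up latency samples (not measurements from any GPU or from VOLLO) and a simple nearest-rank percentile to show how a couple of spikes barely move the median yet dominate the 99th percentile.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of the data at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]

# Invented latencies in microseconds: 98 fast responses and 2 slow spikes.
latencies_us = [10.0] * 98 + [250.0, 400.0]

median = percentile(latencies_us, 50)
p99 = percentile(latencies_us, 99)
mean = sum(latencies_us) / len(latencies_us)

print(f"median={median} us, p99={p99} us, mean={mean:.1f} us")
# prints "median=10.0 us, p99=250.0 us, mean=16.3 us"
```

A system judged only by its median would look identical with or without the spikes, which is why latency-sensitive shops tend to track high percentiles instead.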

It is a brutal game of efficiency.

By leveraging specialized hardware acceleration and a highly optimized compilation layer from Myrtle.ai, the system minimizes the number of clock cycles required for a single forward pass of the model. This isn’t just a software tweak; it’s a fundamental restructuring of how the model’s weights are stored and accessed in memory.

Stripping the Bloat: How Myrtle.ai and VOLLO Optimize the Inference Path

The secret sauce here isn’t a “better” AI model, but a more efficient way to run it. Myrtle.ai focuses on what is commonly called “graph optimization.” They take a model trained in a framework like PyTorch or TensorFlow and strip away every operation that doesn’t contribute to the final output.

They rely on two techniques known as weight pruning and quantization. In plain English: they identify the neurons in the network that aren’t doing much and delete them, then they convert the high-precision numbers (FP32) into lower-precision formats (INT8 integers or even FP8 floating point). This reduces the memory footprint and allows the NPU (Neural Processing Unit) to crunch numbers faster, typically without a meaningful loss in predictive accuracy.
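To make the two ideas concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not Myrtle.ai’s method: it zeroes individual small weights (magnitude pruning) rather than removing whole neurons, and it uses symmetric INT8 quantization with a single scale factor. The weights and the threshold are invented.

```python
def prune_by_magnitude(weights, threshold):
    """Zero out weights whose absolute value is below the threshold."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]

def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127] using one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]

weights = [0.6, -0.02, 0.3, 0.01, -1.0]   # invented FP32-style weights
pruned = prune_by_magnitude(weights, threshold=0.05)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

print(pruned)  # [0.6, 0.0, 0.3, 0.0, -1.0]
print(q)       # [76, 0, 38, 0, -127]
```

Each restored weight differs from its pruned original by at most half a quantization step (`scale / 2`), which is the rounding error the accuracy claim depends on staying small.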

The integration with VOLLO allows for “Kernel Fusion.” Normally, a model performs a series of separate mathematical operations (an addition here, a multiplication there), each writing its result out to memory and reading it back for the next step. Kernel Fusion collapses these into a single operation, keeping the data on the chip and slashing the time spent waiting for data to move.
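The idea behind fusion can be sketched in a few lines. This is a conceptual illustration only: Python lists stand in for memory buffers, the multiply-add-ReLU chain is an assumed example, and nothing here reflects how VOLLO actually schedules operations on hardware.

```python
def unfused(xs, w, b):
    """Three separate passes, each writing a full intermediate buffer."""
    scaled = [x * w for x in xs]           # pass 1: multiply
    shifted = [s + b for s in scaled]      # pass 2: add
    return [max(0.0, t) for t in shifted]  # pass 3: ReLU activation

def fused(xs, w, b):
    """One pass: multiply, add, and ReLU per element, with no intermediate buffers."""
    return [max(0.0, x * w + b) for x in xs]

xs = [-2.0, -0.5, 0.0, 1.5]
result = fused(xs, w=2.0, b=1.0)

# Both versions compute the same values; only the number of passes differs.
assert unfused(xs, w=2.0, b=1.0) == result
print(result)  # [0.0, 0.0, 1.0, 4.0]
```

The unfused version touches every element three times and allocates two throwaway buffers. On real accelerators those extra round trips to memory, not the arithmetic itself, are usually what fusion removes.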

The 30-Second Verdict: Technical Gains

- Deterministic Latency: Elimination of “jitter,” ensuring every trade executes in a predictable timeframe.

- Throughput Scaling: Ability to handle more simultaneous data streams without linear increases in lag.

- Energy Efficiency: Lower thermal output per inference, reducing the risk of thermal throttling in dense server racks.

“The industry has hit a wall with general-purpose compute. To gain another 10% of performance, we used to require 100% more power. What Myrtle and VOLLO are doing is shifting the focus from raw power to architectural elegance. It’s no longer about how big the hammer is, but how precisely you hit the nail.”

The Hardware Hegemony: Breaking the GPU Bottleneck

For a decade, NVIDIA has held a virtual monopoly on AI compute via the CUDA ecosystem. But CUDA is designed for flexibility and massive batches of data—not the single-stream, ultra-low-latency requirements of a trading desk. The VOLLO approach represents a move toward specialized ML compilers and ASICs (Application-Specific Integrated Circuits) that prioritize latency over throughput.

This creates a fascinating tension in the market. We are seeing a divergence between “Generative AI” (which needs massive VRAM and parallelization) and “Predictive AI” (which needs raw speed and determinism). By optimizing for the latter, Myrtle.ai is effectively building a moat around the financial sector, making general-purpose cloud AI too slow to compete.

Below is a conceptual breakdown of how this architecture compares to traditional GPU-based inference pipelines currently used in mid-tier quant shops.

| Metric | Standard GPU Pipeline | Myrtle.ai + VOLLO | Impact |
|---|---|---|---|
| Precision | FP32 (High) | INT8/FP8 (Optimized) | Faster compute, minimal accuracy loss |
| Memory Access | High-latency VRAM | On-chip SRAM/Tightly Coupled Memory | Eliminates memory bottlenecks |
| Execution | Batch Processing | Single-stream Inference | Immediate reaction to market ticks |
| Latency Profile | Stochastic (Jittery) | Deterministic (Stable) | Consistent execution speed |

Strategic Implications for the Quant Landscape

The rollout of this technology, which we are seeing enter beta environments this week, will likely trigger an arms race. When one firm can suddenly “see” the market and react twice as fast as the rest, the existing strategies of other firms become liabilities. This represents the “predatory” nature of HFT: the fastest player captures the liquidity, leaving the crumbs for the rest.

However, there is a broader ecosystem play here. This isn’t just about trading. Any industry requiring real-time inference—autonomous vehicle collision avoidance, robotic surgery, or grid-scale energy management—could benefit from this architecture. If you can halve the time it takes for a machine to “think” and “act,” you save more than just money; you save lives.

The risk? Platform lock-in. While moving away from NVIDIA is a win for competition, moving into a proprietary VOLLO/Myrtle stack creates a new dependency. Developers will need to learn new toolchains, and the “open source” nature of AI development may take a backseat to proprietary, high-performance binaries.

For those tracking the semiconductor war, this is a signal that the next frontier isn’t just about who can build the smallest transistor, but who can create the shortest path between a data packet and a decision.

The bottom line: Myrtle.ai isn’t just optimizing code; they are compressing time. In the financial markets, that is the ultimate luxury.