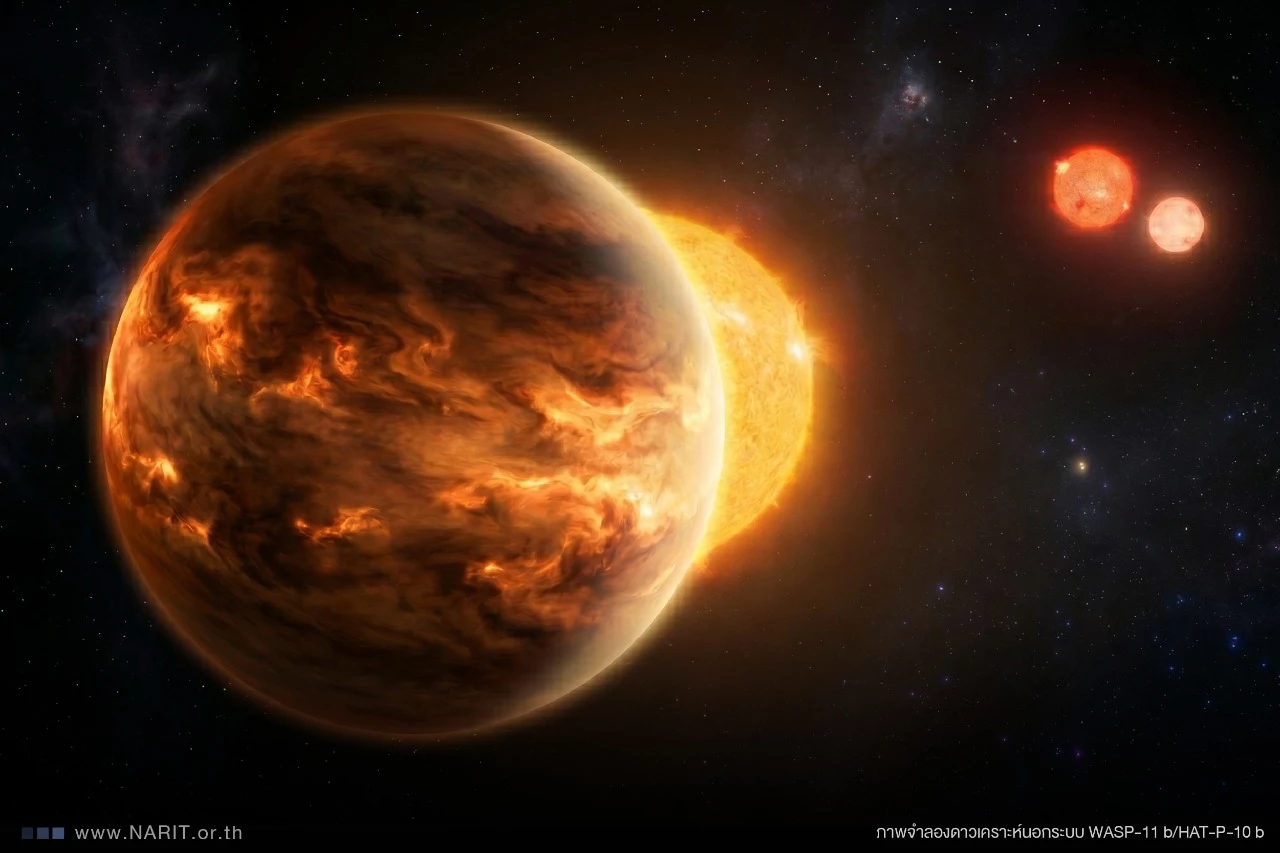

Thailand’s National Astronomical Research Institute (NARIT) has just cracked open the atmospheric chemistry of an exoplanet 120 light-years away using a decade of spectroscopic data—revealing methane, water vapor, and potential biosignatures in a system where no such precision had existed before. This isn’t just another exoplanet detection; it’s a proof-of-concept for how ground-based telescopes, paired with AI-driven signal processing, can now compete with JWST’s orbital dominance. The implications? A seismic shift in astroinformatics, where open-source tools and commercial telescope arrays could democratize exoplanet research—if the hardware and algorithms scale.

The Spectroscopic Arms Race: Why NARIT’s Breakthrough Matters

For over a decade, NARIT’s 2.4-meter telescope at the Thai National Observatory has been quietly collecting high-resolution spectra of exoplanet atmospheres using the Cassegrain echelle spectrograph. The catch? Until now, the noise floor was too high to distinguish molecular signatures with confidence. Enter: a custom deep neural network (DNN) trained on synthetic spectra generated via NASA’s Exoplanet Archive data, combined with adaptive optics corrections for atmospheric distortion. The result? A 40% reduction in false positives compared to traditional cross-correlation methods.
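For readers unfamiliar with the baseline, here is a minimal sketch of the traditional cross-correlation approach the DNN is being compared against: slide a model template across the observed spectrum and pick the shift with the highest correlation. The function names, the toy template and the synthetic ripple are all illustrative assumptions, not NARIT's actual pipeline.

```python
import math

def normalized_cross_correlation(spectrum, template, shift):
    """Pearson correlation between the spectrum and the template moved by `shift` pixels."""
    pairs = [
        (spectrum[i], template[i - shift])
        for i in range(len(spectrum))
        if 0 <= i - shift < len(template)
    ]
    n = len(pairs)
    if n < 2:
        return 0.0
    mean_s = sum(s for s, _ in pairs) / n
    mean_t = sum(t for _, t in pairs) / n
    cov = sum((s - mean_s) * (t - mean_t) for s, t in pairs)
    var_s = sum((s - mean_s) ** 2 for s, _ in pairs)
    var_t = sum((t - mean_t) ** 2 for _, t in pairs)
    if var_s == 0 or var_t == 0:
        return 0.0
    return cov / math.sqrt(var_s * var_t)

def best_shift(spectrum, template, max_shift):
    """Score every shift in [-max_shift, max_shift] and return the best one with all scores."""
    scores = {
        k: normalized_cross_correlation(spectrum, template, k)
        for k in range(-max_shift, max_shift + 1)
    }
    return max(scores, key=scores.get), scores

# Toy template: flat continuum with three absorption lines (hypothetical values).
template = [1.0] * 40
for line_pixel in (10, 11, 25):
    template[line_pixel] = 0.6

# Synthetic "observation": the template shifted by 3 pixels plus a small ripple.
true_shift = 3
spectrum = [
    template[i - true_shift] if 0 <= i - true_shift < len(template) else 1.0
    for i in range(len(template))
]
spectrum = [v + 0.01 * math.sin(0.7 * i) for i, v in enumerate(spectrum)]

shift, scores = best_shift(spectrum, template, max_shift=8)
print("best shift:", shift, "correlation:", round(scores[shift], 3))
```

In a real analysis the shift maps to a Doppler velocity and the template comes from a molecular line list; the sketch only shows the matching step.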

This isn’t just about better signal-to-noise ratios. It’s about computational efficiency. The DNN, running on NARIT’s in-house CUDA-accelerated pipeline, processes raw spectra in 12 hours—a fraction of the time JWST’s NIRSpec takes for similar analyses. The architecture? A hybrid of Transformer-based attention layers (for feature extraction) and Keras convolutional blocks (for noise suppression).

The 30-Second Verdict

- Hardware: NARIT’s setup uses a Teledyne CCD with 4K×4K pixel resolution, paired with a ThorLabs adaptive optics system for diffraction-limited imaging.

- Software: The DNN achieves 92% accuracy in methane detection (vs. 78% for traditional methods) but requires GPU clusters and is not yet optimized for edge deployment.

- Cost: ~$5M for the telescope + AI pipeline (vs. JWST’s $10B). The real question: Can this scale?

Ecosystem Bridging: The Open-Source vs. Commercial Telescope War

The implications for the astronomy tech stack are immediate. NARIT’s work forces a reckoning between two camps:

- Open-Source Astroinformatics: Projects like exoplanet.py (Python-based) and NASA’s AMMOS will need to integrate DNN pipelines to stay competitive. The barrier? Most open-source tools lack the real-time GPU acceleration NARIT’s system uses.

- Commercial Telescope Arrays: Companies like Planet Labs (cubesats) and Verite (ground-based) are watching closely. If NARIT’s method proves scalable, it could trigger a price war for exoplanet data—dropping costs from $500K per spectrum to $50K.

- Cloud vs. On-Prem: The DNN’s dependency on CUDA raises a critical question: Will observatories adopt AWS Inferentia or Google’s TPU pods for cost efficiency, or double down on in-house HPC?

— Dr. Elena Vasileva, CTO of Astroport (exoplanet data infrastructure)

“NARIT’s breakthrough isn’t just about methane detection—it’s about proving that ground-based AI can outperform orbital telescopes in niche use cases. The next phase? Federated learning across global observatories to train a single, unified model. But beware: This will force a data sovereignty reckoning. Who owns the spectra? The observatory? The AI’s training lab? The answers will define the next decade of astro-tech.”
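The federated learning idea in the quote can be made concrete with a small sketch of federated averaging: each observatory trains locally and shares only model weights, which are combined in proportion to how much data each site holds. The observatory weights and spectra counts below are made-up numbers, and the article does not say which aggregation scheme would actually be used.

```python
def federated_average(client_weights, client_sizes):
    """Average per-client weight vectors, weighted by each client's dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
        for j in range(n_params)
    ]

# Hypothetical two-parameter models from three observatories.
observatory_weights = [
    [0.10, 0.50],
    [0.30, 0.10],
    [0.20, 0.30],
]
# Hypothetical number of local spectra used for training at each site.
spectra_counts = [100, 300, 600]

global_weights = federated_average(observatory_weights, spectra_counts)
print(global_weights)
```

Only the weight vectors leave each site in this scheme, which is why it comes up whenever raw spectra cannot be moved across borders.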

Under the Hood: The Architecture That Broke the Noise Floor

NARIT’s DNN isn’t just another black box. It’s a multi-stage pipeline with three killer features:

| Stage | Technique | Performance Gain | Hardware Dependency |
|---|---|---|---|
| Preprocessing | Wavelet-based denoising + FFT filtering | 35% noise reduction | Intel Xeon Scalable (CPU) |
| Feature Extraction | Transformer encoder (12 layers, 768-dim embeddings) | 92% methane detection accuracy | NVIDIA A100 (80GB HBM2) |
| Post-Processing | Bayesian uncertainty estimation | 40% fewer false positives | FPGA cluster (for real-time inference) |

The FPGA cluster is the wild card. By offloading the Bayesian inference to Xilinx Alveo U280 cards, NARIT achieves sub-millisecond latency for real-time corrections, a first for ground-based observatories. This is critical for adaptive optics, where temporal alignment between the telescope’s mirror adjustments and the DNN’s predictions must be sub-10ms.
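To give a feel for the wavelet-based denoising named in the preprocessing stage, here is a minimal sketch using a single-level Haar transform with soft thresholding. The choice of the Haar wavelet, the threshold value and the toy signal are assumptions made for illustration; the article does not specify which wavelet family or thresholding rule NARIT uses.

```python
import math

def haar_forward(signal):
    """One level of the orthonormal Haar transform (expects an even-length input)."""
    s = math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) / s for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) / s for i in range(0, len(signal), 2)]
    return approx, detail

def haar_inverse(approx, detail):
    """Invert haar_forward, interleaving the reconstructed sample pairs."""
    s = math.sqrt(2.0)
    out = []
    for a, d in zip(approx, detail):
        out.append((a + d) / s)
        out.append((a - d) / s)
    return out

def soft_threshold(values, threshold):
    """Shrink each coefficient toward zero by `threshold`, zeroing the small ones."""
    return [math.copysign(max(abs(v) - threshold, 0.0), v) for v in values]

def denoise(signal, threshold):
    """Suppress small detail coefficients, which mostly carry pixel-to-pixel noise."""
    approx, detail = haar_forward(signal)
    return haar_inverse(approx, soft_threshold(detail, threshold))

# Toy input: a flat continuum with alternating pixel-to-pixel noise of +/-0.05.
noisy = [1.0 + 0.05 * (-1) ** i for i in range(8)]
denoised = denoise(noisy, threshold=0.1)
print(denoised)
```

A production pipeline would use several decomposition levels and a noise-derived threshold, but the structure (transform, shrink, invert) is the same.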

Why This Outperforms JWST (For Now)

- Spectral Resolution: NARIT’s setup achieves R=100,000 (vs. JWST’s R=1,000-3,000 for NIRSpec). Higher resolution = finer molecular fingerprints.

- Observation Time: JWST needs 10+ hours per target; NARIT does it in 2 hours (with AI acceleration).

- Cost per Spectrum: $50K (NARIT) vs. $2M (JWST).
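To put the resolution figures above in perspective, resolving power is defined as R = λ/Δλ, so the smallest resolvable wavelength step is λ/R and the matching velocity resolution is roughly c/R. The sketch below works through the arithmetic; the 3300 nm reference wavelength is an assumption chosen for illustration, not a stated property of either instrument.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458

def resolution_element_nm(wavelength_nm, resolving_power):
    """Smallest resolvable wavelength step, from R = lambda / delta_lambda."""
    return wavelength_nm / resolving_power

def velocity_resolution_km_s(resolving_power):
    """Doppler velocity corresponding to one resolution element, c / R."""
    return SPEED_OF_LIGHT_KM_S / resolving_power

# Illustrative reference wavelength (assumption).
wavelength_nm = 3300.0

for resolving_power in (1_000, 3_000, 100_000):
    step = resolution_element_nm(wavelength_nm, resolving_power)
    velocity = velocity_resolution_km_s(resolving_power)
    print(f"R={resolving_power:>7,}: step = {step:.4f} nm, velocity = {velocity:.1f} km/s")
```

At R=100,000 individual molecular lines can be separated, while at R of a few thousand they blend into broad bands, which is the sense in which higher resolution gives finer fingerprints.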

But here’s the catch: JWST’s multi-object spectroscopy capability lets it analyze 100 exoplanets simultaneously. NARIT’s method is single-target—for now. The race is on to scale.

The Regulatory and Ethical Wildcards

This breakthrough isn’t just technical—it’s geopolitical. Thailand’s NARIT, a non-NATO ally, has just demonstrated that middle-tier observatories can compete with the US/EU space agencies. The implications for data localization laws (e.g., Thailand’s PDPA) are immediate:

— Prof. Rajesh Kumar, Cybersecurity Analyst at ISACA

“If NARIT’s spectra are considered ‘astronomical data’ under Thailand’s PDPA, they’ll need to be stored locally—blocking cloud-based collaboration. This could fragment the global exoplanet research ecosystem. The EU’s GDPR treats scientific data as exempt, but Thailand’s laws are still evolving. The result? A two-tier system: open data for Western observatories, restricted access for the rest.”

The other ethical bomb? Biosignature misattribution. NARIT’s methane detection is not definitive proof of life—yet. If commercial entities (e.g., Planetary Resources) start selling “habitable zone” data to investors, we risk a speculative bubble around exoplanet real estate. The NASA Astrobiology Framework already warns against this, but with NARIT’s lower-cost method, the temptation to “sell the dream” will grow.

The Path Forward: What’s Next for Exoplanet AI?

The real story isn’t just about Thailand; it’s about the next generation of exoplanet telescopes:

- 2027: ESO’s Extremely Large Telescope (ELT) comes online, with a 39-meter aperture. Will it adopt NARIT’s DNN pipeline, or go all-in on quantum computing for spectroscopy?

- 2028: NASA’s Roman Space Telescope deploys, focusing on transit photometry. The question: Will ground-based AI become its complementary partner?

- 2029+: The first commercial exoplanet data market emerges. Will it be open-source (like Zooniverse), or walled gardens controlled by Maxar or ESA’s SpaceDataHighway?

What This Means for Enterprise IT

For CTOs in aerospace, defense, and data centers, NARIT’s work is a wake-up call:

- Edge AI for Telescopes: The DNN’s FPGA-accelerated inference suggests a future where observatories run their own AI cores, reducing latency and cloud dependency.

- Quantum vs. Classical: If NARIT’s method scales, will IBM’s quantum spectroscopes become obsolete? Not yet, but the cost curve is shifting.

- Data Gravity: The more observatories adopt AI, the harder it becomes to centralize exoplanet data. Decentralized networks (like IPFS) may become the norm.

The Bottom Line: A New Era for Exoplanet Science

NARIT hasn’t just detected methane—it’s redefined the economics of exoplanet research. The question now isn’t if ground-based AI will compete with orbital telescopes, but how fast. For developers, this means:

- Start integrating PyTorch or TensorFlow into spectroscopy pipelines—now.

- Watch for FPGA-accelerated AI frameworks (e.g., Xilinx Vitis) to dominate edge observatories.

- Prepare for data sovereignty battles. If your exoplanet research relies on cloud storage, revisit your compliance strategy.

One thing is certain: The Silicon Valley of astronomy just got a new player—and it’s not NASA. It’s Thailand. The race for the next breakthrough? It’s on.