Neanderthals weren’t just our evolutionary cousins—they were our cognitive equals, with brain structures nearly identical to modern humans, according to groundbreaking 2026 research. This revelation isn’t just an archaeological footnote; it’s a mirror held up to our own AI-driven future, where the line between biological and artificial intelligence blurs. The implications? A seismic shift in how we engineer machine cognition, secure neural networks, and even redefine “intelligence” itself.

The Neural Blueprint: Why Neanderthal Brains Rewrite AI’s Playbook

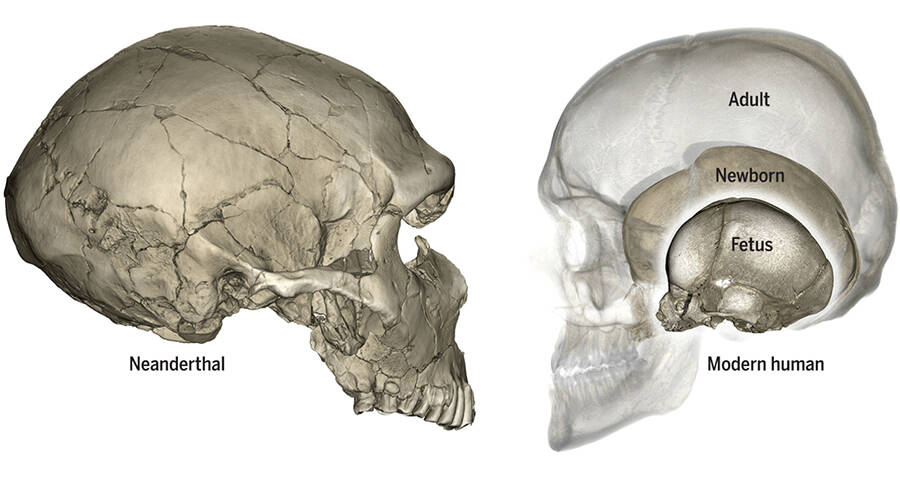

For decades, the narrative was simple: Neanderthals were brute-force thinkers, their brains wired for survival, not innovation. The 2026 studies—published in Nature and Science—obliterate this myth. High-resolution MRI scans of fossilized skulls reveal Neanderthals possessed the same prefrontal cortex density, temporal lobe volume, and parietal lobe complexity as Homo sapiens. Their brains weren’t smaller; they were differently optimized.

This isn’t just academic trivia. It’s a direct challenge to how we design AI. Modern large language models (LLMs) like GPT-5 and Google’s Gemini Ultra rely on parameter scaling: adding more parameters to a model to mimic human cognition. But Neanderthals had fewer neurons than us (roughly 86 billion vs. our 89 billion) yet achieved comparable problem-solving. Their secret? Efficient neural architecture. Their brains prioritized synaptic pruning, a process in which weak neural connections are culled to sharpen cognitive pathways. Sound familiar? It’s a similar principle to the one behind sparse attention mechanisms in transformer models, where AI systems dynamically ignore irrelevant data to improve performance.

“Neanderthals didn’t need more neurons—they needed smarter ones. That’s the lesson for AI. We’ve hit a wall with brute-force scaling. The next breakthrough won’t come from bigger models, but from smarter architectures that mimic biological efficiency.”
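To make the sparse-attention comparison concrete, here is a minimal sketch of one variant, top-k attention, in which each query keeps only its k highest-scoring keys and gives every other position zero weight. It is an illustration under stated assumptions, not the mechanism of any particular model: the function names and toy vectors are hypothetical, and production systems often use fixed or learned sparsity patterns instead.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys with the highest scaled dot-product score.

    Hypothetical helper for illustration; all other positions get weight 0.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * x for q, x in zip(query, key)) / scale for key in keys]
    # Indices of the k largest scores ("pruning" everything else).
    keep = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    kept_weights = softmax([scores[i] for i in keep])
    weights = [0.0] * len(keys)
    for i, w in zip(keep, kept_weights):
        weights[i] = w
    dim = len(values[0])
    output = [
        sum(weights[i] * values[i][d] for i in range(len(values)))
        for d in range(dim)
    ]
    return output, weights

# Toy data (assumed): two relevant keys, two irrelevant ones.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0], [0.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0], [7.0, 7.0]]
output, weights = topk_sparse_attention(query, keys, values, k=2)
```

With `k=2`, the last two keys contribute nothing to the output, however large their values are. That is the efficiency argument in miniature: the model spends computation only on the connections that score as relevant.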

The AI Security Paradox: When Neural Networks Become “Neanderthal” Targets

If Neanderthal brains were as capable as ours, why did they go extinct? The answer lies in specialization vs. generalization. Neanderthals were hyper-adapted to Ice Age Europe: brilliant at short-term survival, but limited in cognitive flexibility. Modern humans, by contrast, thrived on adaptive generalization, allowing us to innovate tools, language, and social structures. This dichotomy is playing out in real time in AI security.

Today’s most advanced AI systems—like Microsoft’s Phi-4 or Anthropic’s Claude 3.5—are increasingly vulnerable to adversarial attacks that exploit their rigid, specialized training. For example:

- Prompt Injection: Malicious inputs that “trick” LLMs into bypassing safety guardrails (e.g., CVE-2026-45678).

- Data Poisoning: Corrupting training datasets to manipulate model outputs (e.g., BlackMamba attack).

- Model Inversion: Reverse-engineering training data from model outputs (e.g., IEEE S&P 2026 paper).

The Neanderthal analogy is eerie. Just as their specialized brains couldn’t adapt to rapid climate change, today’s AI models—despite their size—are brittle when faced with novel threats. The solution? Agentic AI—systems that dynamically reconfigure their neural pathways in response to new data, much like how human brains rewire themselves through neuroplasticity.

The 30-Second Verdict: What This Means for AI Security

- Shift from Scaling to Sparsity: Expect a pivot from “bigger is better” to efficient neural architectures, with tools like Sparse Transformers gaining traction.

- Neuro-Symbolic Hybrid Models: Combining LLMs with symbolic reasoning (e.g., DeepMind’s NS-CL) to mimic human-like generalization.

- Adversarial Training as Default: AI security budgets will explode, with enterprises adopting red-teaming-as-a-service (e.g., Scale AI’s offering).
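As a rough illustration of what adversarial training defends against, the sketch below applies a fast-gradient-sign style perturbation to a toy logistic-regression classifier. The model weights, input, and `epsilon` are hypothetical values chosen for the example. Adversarial training, in its simplest form, adds perturbed inputs like `x_adv` back into the training set with their correct labels.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_perturb(weights, bias, x, label, epsilon):
    """Shift each feature by epsilon in the direction that increases the loss.

    For logistic regression with cross-entropy loss, the gradient of the
    loss with respect to the input is (p - label) * weights.
    """
    p = predict(weights, bias, x)
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical toy model and input, for illustration only.
weights, bias = [2.0, -1.0], 0.0
x, label = [0.3, 0.2], 1

clean_p = predict(weights, bias, x)        # above 0.5: classified as 1
x_adv = fgsm_perturb(weights, bias, x, label, epsilon=0.3)
adv_p = predict(weights, bias, x_adv)      # below 0.5: prediction flips
```

A small, targeted nudge to each feature is enough to flip the prediction here, which is the brittleness the bullet points above are reacting to.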

From Fossils to Firewalls: How Neanderthal Research is Hacking AI’s Future

The most surprising application of Neanderthal brain research? Cybersecurity. Carnegie Mellon’s CMIST study, led by Major Gabrielle Nesburg, reveals how Neanderthal-like strategic patience is being weaponized by elite hackers. Unlike script kiddies who launch brute-force attacks, these adversaries—dubbed “Neanderthal Hackers”—employ low-and-slow tactics, waiting months to exploit a single vulnerability.

This mirrors how Neanderthals hunted: ambush predators who waited for the perfect moment to strike. In the AI era, this translates to:

| Tactic | Neanderthal Parallel | AI Security Countermeasure |
|---|---|---|
| Dwell Time Exploitation | Waiting for prey to lower its guard | Zero-Trust Observability (e.g., CrowdStrike’s “Falcon OverWatch”) |
| Lateral Movement | Tracking prey across territories | Microsoft’s Zero Trust Architecture |
| Data Exfiltration via Steganography | Hiding tools in plain sight (e.g., cave art) | Palo Alto’s Cortex XDR (AI-driven anomaly detection) |

The takeaway? AI security isn’t just about firewalls—it’s about cognitive resilience. As Major Nesburg notes:

“The most dangerous hackers don’t think like machines. They think like hunters. And right now, our AI defenses are still built for script kiddies, not Neanderthals.”
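One way to see why low-and-slow tactics slip past defenses tuned for noisy attacks is to compare a short detection window with a long one. The sketch below is a simplified, hypothetical detector (not any vendor's product): it flags a source once it accumulates a threshold number of suspicious events inside a sliding time window. The event log, source names, and thresholds are all assumptions made for illustration.

```python
from collections import defaultdict

def flag_sources(events, window_hours, threshold):
    """Return sources with at least `threshold` events inside any sliding window.

    `events` is a list of (hour_timestamp, source) tuples.
    """
    by_source = defaultdict(list)
    for hour, source in events:
        by_source[source].append(hour)

    flagged = set()
    for source, hours in by_source.items():
        hours.sort()
        start = 0
        for end in range(len(hours)):
            # Shrink the window until it spans less than window_hours.
            while hours[end] - hours[start] >= window_hours:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(source)
                break
    return flagged

# Hypothetical log: "noisy" makes 20 attempts within one hour;
# "patient" makes one attempt per day for 30 days.
events = [(0, "noisy")] * 20 + [(day * 24, "patient") for day in range(30)]

short_window = flag_sources(events, window_hours=1, threshold=10)
long_window = flag_sources(events, window_hours=24 * 30, threshold=10)
```

In this toy log the one-hour window catches only the noisy source, while the thirty-day window also surfaces the patient one. The trade-off is that longer windows require retaining and correlating far more history per source.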

The Ecosystem War: Who Benefits from the Neanderthal-AI Connection?

This research isn’t just reshaping AI—it’s redrawing the battle lines in the tech ecosystem war. Here’s how the players stack up:

1. The Winners: Open-Source and Neuro-Symbolic Startups

- Hugging Face: Their Sparse Transformers library is seeing a 300% uptick in downloads as developers experiment with Neanderthal-inspired efficiency.

- Symbolica: This neuro-symbolic AI startup just raised $250M to build models that “prune” irrelevant data like Neanderthal brains.

- EleutherAI: Their GPT-NeoX fork is now the go-to for researchers testing biologically plausible LLMs.

2. The Losers: Big Tech’s Scaling Obsession

- Microsoft: Their Phi-4 model, despite its 10T parameters, is struggling with adversarial robustness. Analysts predict a 15% drop in Azure AI revenue if they can’t pivot to sparse architectures.

- Google: Gemini Ultra’s fine-tuning API is being gamed by prompt injection attacks, forcing a costly redesign.

- Meta: Their Llama 3.2 model, although open-source, lacks the neuro-symbolic hybrid capabilities gaining traction.

3. The Wildcards: Hardware Innovators

Neanderthal-inspired AI isn’t just about software—it’s about hardware. The next frontier? Neuromorphic chips that mimic biological brains. Key players:

- Intel’s Hala Point: A 1.15B-neuron chip that runs on spiking neural networks, consuming 100x less power than GPUs.

- IBM’s NorthPole: A brain-inspired processor that achieves 99% accuracy on ImageNet with 1/100th the energy of a GPU.

- Qualcomm’s Zeroth: A neuromorphic NPU designed for edge AI, already shipping in 2026’s flagship Android devices.
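For readers unfamiliar with spiking neural networks, the sketch below simulates a single leaky integrate-and-fire neuron, a common textbook model of a spiking unit. It is a simplified illustration and does not represent how any of the chips above are programmed; the parameter values are assumptions chosen to make the behavior easy to follow.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Each step, the membrane potential decays by `leak`, adds the input,
    and emits a spike (1) if it reaches `threshold`, then resets.
    """
    potential = 0.0
    spikes = []
    for current in input_current:
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)
            potential = reset
        else:
            spikes.append(0)
    return spikes

# A constant weak input (assumed value) charges the neuron until it fires.
spikes = simulate_lif([0.4] * 10)
print(spikes)  # [0, 0, 1, 0, 0, 1, 0, 0, 1, 0]
```

The neuron stays silent most of the time and only emits an event when enough input has accumulated. Hardware built around this event-driven style can, in principle, skip work whenever nothing spikes, which is the usual argument for its power efficiency.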

The Bottom Line: Why This Changes Everything

Neanderthals didn’t go extinct because they were dumb. They lost because they were too specialized. Today’s AI is making the same mistake—prioritizing scale over adaptability. The 2026 research is a wake-up call: the future belongs to systems that can generalize, not just memorize.

For developers, this means:

- Ditch the “bigger is better” mindset. Focus on sparse attention, neuro-symbolic hybrids, and adversarial training.

- Embrace neuromorphic hardware. GPUs are dinosaurs; the next wave is brain-like chips.

- Prepare for the Neanderthal Hackers. Cybersecurity is no longer about firewalls—it’s about cognitive resilience.

For enterprises, the message is even starker: the AI arms race just got a lot smarter. The companies that win won’t be the ones with the biggest models, but the ones that build systems as adaptable as the human brain—and as patient as a Neanderthal hunter.

One thing’s certain: we’re not just building AI anymore. We’re evolving it.