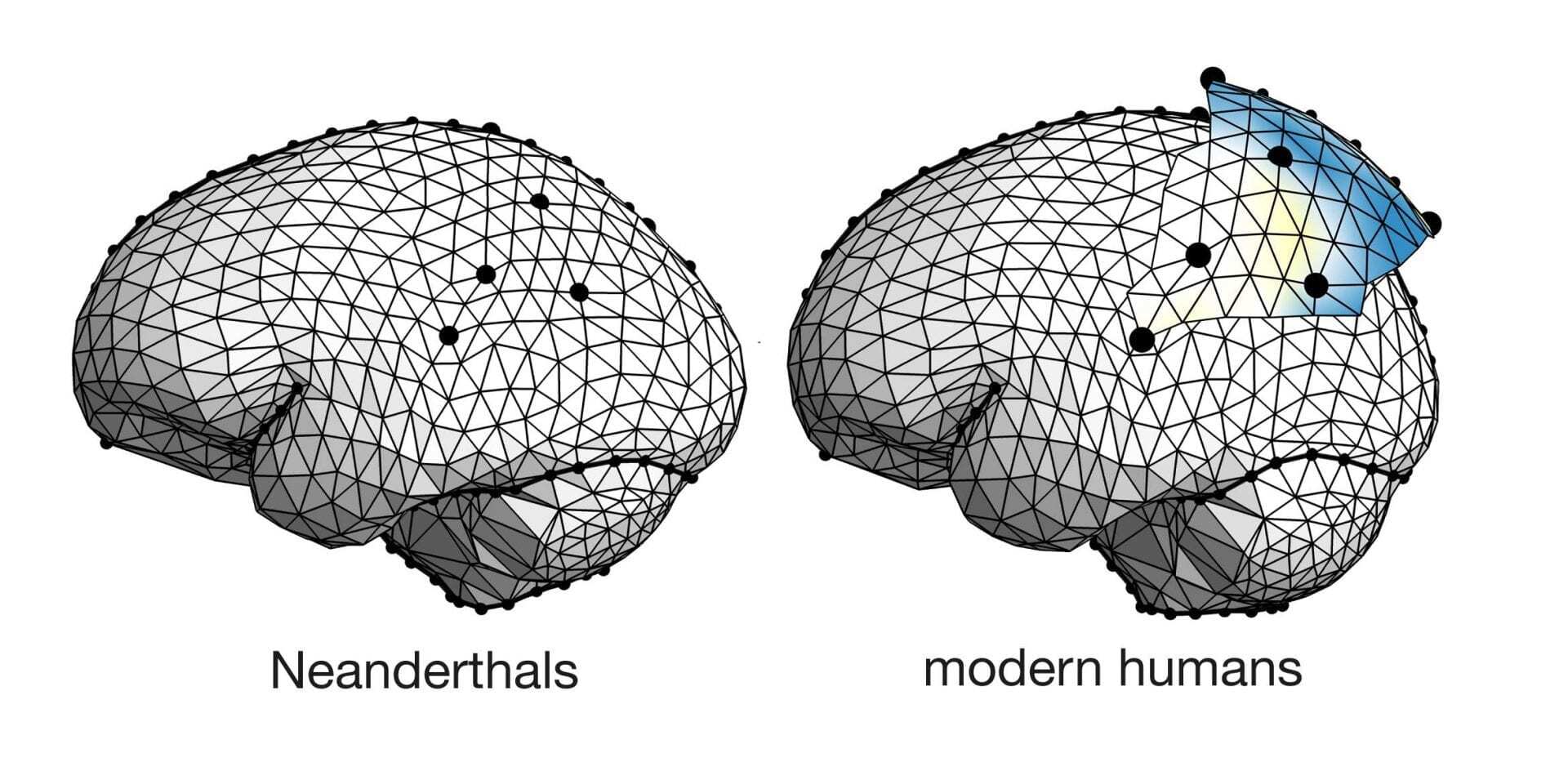

Scientists have debunked the “primitive” Neanderthal myth, revealing that Neanderthal brain volume equaled or exceeded that of modern humans. The critical divergence lay not in raw capacity but in neural architecture and social connectivity (essentially a difference in “network topology” rather than “hardware specs”), which ultimately determined evolutionary survival by the end of the Pleistocene.

For decades, the narrative was simple: Homo sapiens were the “upgraded” version, and Neanderthals were the legacy hardware—clunky, dim-witted, and destined for the scrap heap of history. But as we peel back the layers of paleo-neurology, we uncover a reality that looks less like a software upgrade and more like a pivot in system architecture. It turns out Neanderthals weren’t lacking in “compute power.” If anything, they were overclocked.

This isn’t just a win for anthropology; it’s a masterclass in the difference between scale and efficiency. In the tech world, we see this constantly. You can throw more parameters at a Large Language Model (LLM), but if the attention mechanism is flawed, you just get a more expensive way to hallucinate. The Neanderthal brain is the biological equivalent of a massive GPU cluster with a bottlenecked interconnect.

The Volume Fallacy: Why Raw Compute Wasn’t Enough

When we look at the cranial capacity of Neanderthals, the data is jarring. Their brains weren’t just “comparable”; they were often larger than our own. In engineering terms, they had more raw RAM and a higher theoretical throughput. But volume is a vanity metric. A larger chip doesn’t guarantee a faster boot time if the trace routing is inefficient.

The “surprising” find in recent comparative studies is that the disparity wasn’t in the amount of gray matter, but in the distribution. Neanderthals leaned heavily into the occipital lobe—the visual processing center. They were optimized for high-fidelity sensory input and physical coordination in harsh, low-light environments. They were the ultimate edge-computing devices: highly specialized, incredibly powerful, but lacking the versatility of a general-purpose OS.

Modern humans, conversely, developed a more pronounced prefrontal cortex and parietal lobes. We didn’t build a bigger engine; we redesigned the transmission. This allowed for higher-order abstraction and, crucially, the ability to manage complex social graphs. While the Neanderthal was optimized for the “local node” (the immediate family or small clan), Homo sapiens were building a wide-area network (WAN).

The Hardware Spec Comparison

| Metric | Neanderthal (Legacy Arch) | Modern Human (Current Arch) | Technical Implication |
|---|---|---|---|
| Cranial Volume | High (Often >1500cc) | Moderate (~1350cc) | Raw compute capacity was not the limiting factor. |
| Visual Processing | Hyper-optimized (Large Occipital) | Standardized | Superior sensory input vs. Superior data synthesis. |
| Social Connectivity | Low-latency / Small Cluster | High-latency / Global Mesh | Ability to scale knowledge across distant populations. |
| Cognitive Flexibility | Specialized (Task-specific) | Generalized (Adaptive) | General-purpose intelligence vs. Niche optimization. |

Network Topology and the Social API

The real “killer app” of the modern human brain wasn’t the ability to make a better spear; it was the ability to share the idea of a better spear with a tribe five hundred miles away. This is where the concept of “network topology” becomes critical. Neanderthals operated in isolated silos. Their knowledge transfer was vertical (parent to child) and limited in scope.

Homo sapiens developed a social API that allowed for massive horizontal scaling. We created symbolic language and cultural markers that acted as a protocol for trust between strangers. When two groups of Sapiens met, they didn’t just compete; they traded data. This created a compounding effect of innovation. While a Neanderthal genius might invent a new tool, that invention often died with them or stayed within a tiny cluster. When a Sapiens genius invented something, it was pushed to the “main branch” of the species’ collective knowledge base.

This is effectively the difference between a closed-source proprietary system and an open-source community. The open-source model—though messier and more prone to conflict—evolves at an exponential rate since the feedback loop is global.
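To make the topology argument concrete, here is a minimal sketch of how far a single invention can travel in two toy populations. Everything in it is an illustrative assumption rather than paleoanthropological data: the cluster count, the cluster size, the hypothetical "trade link" between neighboring groups, and the function names `build_clusters` and `reach`.

```python
from collections import deque


def build_clusters(num_clusters, cluster_size, bridges=False):
    """Build an adjacency dict of fully connected clusters.

    With bridges=True, one member of each cluster is linked to one member
    of the next cluster (a hypothetical trade link between groups).
    """
    graph = {}
    for c in range(num_clusters):
        members = [c * cluster_size + i for i in range(cluster_size)]
        for m in members:
            graph[m] = {other for other in members if other != m}
    if bridges:
        for c in range(num_clusters - 1):
            a = c * cluster_size          # first member of cluster c
            b = (c + 1) * cluster_size    # first member of cluster c + 1
            graph[a].add(b)
            graph[b].add(a)
    return graph


def reach(graph, origin):
    """Count the individuals an idea can reach from origin (breadth-first search)."""
    seen = {origin}
    queue = deque([origin])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return len(seen)


# Toy numbers: 10 groups of 15 individuals each.
isolated = build_clusters(num_clusters=10, cluster_size=15)
bridged = build_clusters(num_clusters=10, cluster_size=15, bridges=True)

print(reach(isolated, 0))  # 15: the idea never leaves its home cluster
print(reach(bridged, 0))   # 150: one link per neighboring group reaches everyone
```

The population is identical in both cases. Only nine extra links separate an invention that stays with 15 individuals from one that reaches all 150, which is the point of the "main branch" metaphor.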

“The survival of Homo sapiens wasn’t a victory of intelligence, but a victory of connectivity. We didn’t out-think the Neanderthals; we out-networked them. The cognitive architecture of Sapiens allowed for the creation of shared myths, which functioned as the first social operating system.”

From Paleolithic Brains to Transformer Architectures

As we move further into 2026, this biological lesson is mirrored in the current shift in AI development. For the last few years, the industry was obsessed with “parameter scaling”: the belief that simply making models larger (the Neanderthal approach) would lead to AGI. We saw this with the transition from GPT-3 to GPT-4, where raw scale was widely credited with driving performance.

But we’ve hit a wall of diminishing returns. The current frontier is “architectural efficiency.” We are seeing a pivot toward Mixture-of-Experts (MoE) and sparse activation, where the model doesn’t fire every neuron for every prompt. This is exactly what the modern human brain does. We don’t use our entire cortical capacity to tie a shoelace; we route the task to the most efficient sub-network.
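Sparse activation is easier to see in code than in prose. The sketch below is a toy top-k router, assuming hand-picked gate scores and four trivial "experts" that are plain functions; in a real MoE layer the gate is a learned network and the experts are feed-forward blocks. The names `softmax` and `route_top_k` are hypothetical helpers, not any library's API.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, experts, x, k=2):
    """Run only the k highest-scoring experts and mix their outputs.

    Returns the weighted output and the indices of the experts that ran.
    """
    # Rank experts by gate score; ties keep their original order.
    ranked = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    # Renormalize the gate scores over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top


# Four toy experts; the gate scores are assumed, not learned.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x ** 2, lambda x: -x]
gate_scores = [0.1, 2.0, 2.0, -1.0]

output, active = route_top_k(gate_scores, experts, x=3.0, k=2)
print(active)  # [1, 2]: only two of the four experts are evaluated
print(output)  # 7.5: equal-weight mix of 3.0 * 2 and 3.0 ** 2
```

Experts 0 and 3 are never called for this input, so the cost of the forward pass tracks `k` rather than the total number of experts.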

The Neanderthal brain was a monolithic architecture. It was powerful, but it lacked the modularity required to adapt to rapidly shifting environmental variables. In the AI world, we call this “overfitting.” The Neanderthals were overfitted to a specific, brutal environment. When the environment changed, they couldn’t re-train their weights quickly enough.

We can see this struggle today in the “chip wars.” The move toward Neuromorphic Computing—chips that mimic the brain’s physical structure—is an attempt to move away from the von Neumann bottleneck. We are trying to build hardware that doesn’t just have “more memory” (volume) but better “synaptic routing” (connectivity).

The Evolutionary “Bug” That Became a Feature

It is tempting to view the Neanderthal’s extinction as a failure. In reality, it was a lesson in the dangers of specialization. In a stable environment, the specialist wins. In a volatile one, the generalist survives.

The “surprising” discovery that Neanderthals were cognitively our equals—or perhaps even our superiors in certain domains—forces us to redefine what “intelligence” actually is. Intelligence isn’t the ability to solve a complex problem in isolation; it’s the ability to integrate a solution into a larger system. The Neanderthals had the hardware, but they lacked the network protocol.

For those of us building the next generation of AI, the takeaway is clear: Stop obsessing over the size of the model. Start obsessing over how the data flows. The most powerful system isn’t the one with the most parameters; it’s the one that can most effectively connect a disparate set of ideas into a scalable truth.

The Neanderthals didn’t lose because they weren’t smart enough. They lost because they were running a localized version of the software in a world that had just gone global.