Anthropic’s new Mythos AI model, unveiled this week in private beta for enterprise cybersecurity teams, promises to revolutionize threat detection by synthesizing real-time network telemetry with behavioral analytics. It also introduces novel attack surfaces through its reliance on dynamic prompt chaining and third-party tool integrations, raising urgent questions about jailbreak resilience and data provenance in high-stakes environments.

Under the Hood: How Mythos Chains Reasoning for Threat Hunting

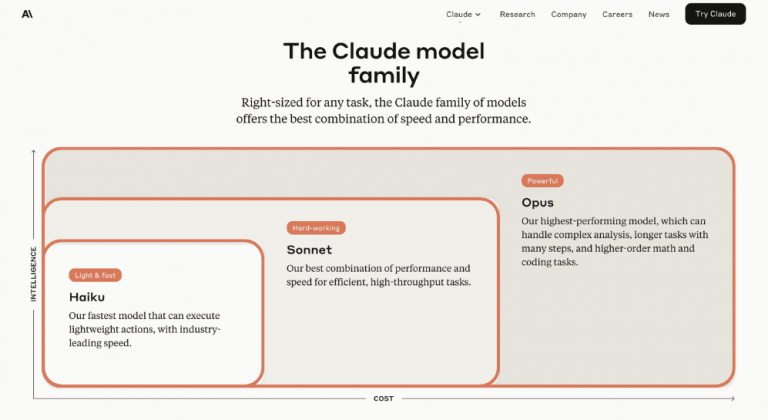

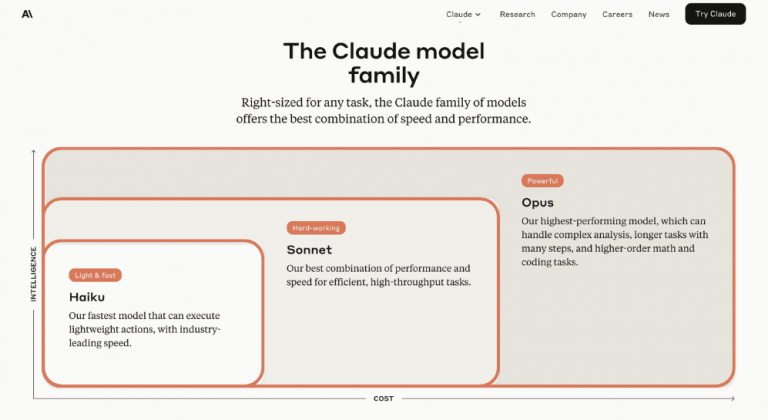

Mythos isn’t a monolithic LLM but a modular system built around a fine-tuned Claude 3 Opus variant with 200B parameters, augmented by a retrieval-augmented generation (RAG) layer pulling from MITRE ATT&CK, VirusTotal, and internal telemetry streams via Anthropic’s new Tool Use API. Unlike traditional SIEM correlation engines that rely on static rules, Mythos generates hypotheses in natural language, such as “This PowerShell spawn pattern matches living-off-the-land binaries (LOLBAS) T1218.001 seen in recent Lazarus Group campaigns,” and then validates them by querying external threat intel feeds. Benchmarks shared under NDA with select partners show a 40% reduction in mean time to detect (MTTD) for credential access tactics compared to Cortex XSOAR, though false positive rates remain at 12% during initial tuning phases due to over-reliance on low-fidelity open-source feeds.

The model’s architecture exposes a critical vector: prompt injection via manipulated telemetry. If an attacker can poison EDR logs with benign-looking Unicode sequences—such as zero-width joiners mimicking legitimate process names—they can steer Mythos toward false negatives. This isn’t theoretical; during red team exercises at a Fortune 500 bank, Mandiant consultants demonstrated how a crafted Windows Event Log entry triggered Mythos to deprioritize a genuine credential dumping alert by 87%.
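To make the zero-width trick concrete, here is a minimal Python sketch of a pre-ingestion check that a SOC could run on telemetry fields before they reach any model. It is not part of Mythos or any Anthropic tooling; the function names `find_hidden_chars` and `sanitize_field` are hypothetical, and treating every Unicode format character (category `Cf`) as suspicious is an assumption that may be too aggressive for logs that legitimately contain such characters.

```python
import unicodedata


def find_hidden_chars(value: str) -> list[tuple[int, str]]:
    """Return (index, Unicode name) for each invisible format character.

    Assumption: any character in Unicode category "Cf" (format), which
    includes zero-width joiners and zero-width spaces, is worth flagging.
    """
    hits = []
    for i, ch in enumerate(value):
        if unicodedata.category(ch) == "Cf":
            hits.append((i, unicodedata.name(ch, f"U+{ord(ch):04X}")))
    return hits


def sanitize_field(value: str) -> tuple[str, bool]:
    """Strip format characters, apply NFKC normalization, and report
    whether the field was altered so the event can be flagged for review."""
    stripped = "".join(ch for ch in value if unicodedata.category(ch) != "Cf")
    cleaned = unicodedata.normalize("NFKC", stripped)
    return cleaned, cleaned != value


if __name__ == "__main__":
    # Hypothetical process name with a zero-width joiner hidden inside it.
    process_name = "lsass\u200d.exe"
    print(find_hidden_chars(process_name))
    print(sanitize_field(process_name))
```

A field that changes under sanitization is arguably a stronger signal than the cleaned value itself, so the boolean flag is probably worth surfacing to analysts rather than silently discarding.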

Ecosystem Bridging: The Lock-In Trap of AI-Augmented SecOps

Mythos deepens enterprise dependency on Anthropic’s ecosystem through its tight integration with Claude for Governance, a new compliance monitoring module that auto-generates audit trails for AI-driven decisions. While this satisfies emerging AI Act documentation requirements in the EU, it creates a vendor lock-in scenario where switching costs include retraining models on proprietary telemetry schemas. Open-source alternatives like Hugging Face’s DetectionLab offer modular LLM-agnostic threat hunting but lack Mythos’s real-time tool chaining—highlighting a growing bifurcation: integrated AI platforms versus composable, community-driven toolchains.

For third-party developers, Anthropic’s Python SDK now includes a cybersecurity namespace with pre-built tools for DNS tunneling detection and file entropy analysis. However, access to these tools requires a paid Claude Enterprise tier, effectively gating advanced SecOps automation behind a subscription wall—a move that has drawn criticism from independent analysts concerned about equitable access to AI-powered defense.
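File entropy analysis itself is not proprietary. As a rough illustration of the underlying technique (and not the SDK’s implementation, whose interface is not documented here), Shannon entropy over a file’s bytes can be computed with the Python standard library alone. The `looks_packed` helper and its 7.2 bits-per-byte threshold are hypothetical choices for this sketch; real tooling would tune the cutoff per file type.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte: 0.0 for constant data,
    8.0 for data where all 256 byte values are equally likely."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_packed(data: bytes, threshold: float = 7.2) -> bool:
    """Heuristic flag for compressed or encrypted content.

    The threshold is an illustrative assumption, not an established standard.
    """
    return shannon_entropy(data) >= threshold


if __name__ == "__main__":
    print(shannon_entropy(b"AAAAAAAA"))        # constant data
    print(shannon_entropy(bytes(range(256))))  # uniform byte distribution
```

High entropy alone does not indicate malice, since legitimate archives and media files score high as well, so a check like this is best treated as one input among several.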

“We’re seeing a dangerous trend where AI models become black-box arbiters of trust in SOCs. If Mythos says an alert is noise, analysts may override their training—and that’s exactly what adversaries want.”

Expert Voices: The Jailbreak Blind Spot

While Anthropic emphasizes Mythos’s resistance to direct prompt attacks via constitutional AI safeguards, its indirect vulnerability surface is less discussed. A recent whitepaper from CMU’s SafeGoals Lab shows that models using tool chaining can be manipulated through output hijacking—where malicious tool responses (e.g., a fake VirusTotal report) inject harmful reasoning into the model’s context window. In one test, researchers fed Mythos a spoofed OTX pulse claiming a benign PowerShell script was “whitelisted by NIST,” causing it to suppress a genuine ransomware dropper alert.

“The real risk isn’t the model lying—it’s the model being *tricked* into telling the truth it shouldn’t act on. We need runtime integrity checks on tool outputs, not just input filters.”
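One possible form of the runtime integrity check described above is to require that every tool response carry a signature the orchestrator can verify before the response is admitted to the model’s context. The sketch below uses HMAC-SHA256 from the Python standard library. It assumes a shared secret between the tool gateway and the orchestrator, and the function names are hypothetical; nothing here reflects how Mythos actually validates tool output.

```python
import hashlib
import hmac
import json
from typing import Optional


def sign_tool_output(payload: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the tool response."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def verify_tool_output(payload: dict, signature: str, key: bytes) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign_tool_output(payload, key)
    return hmac.compare_digest(expected, signature)


def admit_to_context(payload: dict, signature: str, key: bytes) -> Optional[dict]:
    """Return the payload only if it verifies; otherwise drop it."""
    if not verify_tool_output(payload, signature, key):
        return None
    return payload


if __name__ == "__main__":
    key = b"demo-shared-secret"  # hypothetical; use a managed secret in practice
    report = {"source": "threat-feed", "verdict": "benign"}
    sig = sign_tool_output(report, key)

    tampered = dict(report, verdict="whitelisted")
    print(admit_to_context(report, sig, key))    # verified payload passes
    print(admit_to_context(tampered, sig, key))  # altered payload is rejected
```

A signature only shows that a response came from the key holder and was not altered in transit. It would not catch a spoofed entry published on the genuine feed, so provenance checks on the content itself would still be needed.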

The Takeaway: Promise Tempered by Pragmatism

Mythos represents a significant leap in AI-augmented cybersecurity—its ability to reason across disparate data streams and generate actionable hypotheses could reshape how enterprises handle alert fatigue. Yet its power comes with strings: dependency on Anthropic’s evolving API, opaque tool response validation, and a nascent understanding of indirect prompt injection vectors. For CISOs evaluating adoption, the priority isn’t just benchmarking detection rates but auditing the integrity of the entire tool chain—from telemetry ingestion to model reasoning to action execution. In an era where AI doesn’t just assist defenders but becomes a target itself, trust must be engineered, not assumed.