On Thursday, April 23, 2026, the New York Times Mini Crossword offered a compact puzzle reflecting the week’s cultural and technological pulse, with clues ranging from “AI ethics pioneer” to “quantum-resistant protocol” and “open-source maintainer.” Solved in under two minutes by most regulars, the grid’s answers—Timnit, Dilithium, Git, LLM, and Zero Trust—revealed a thematic focus on the evolving tensions in AI development, cybersecurity resilience, and decentralized collaboration. Far more than a morning brain teaser, this crossword serves as a cultural barometer, signaling how deeply technical concepts have permeated public consciousness—and why understanding their real-world implications matters now more than ever.

The Grammar of Influence: How Crossword Clues Map to Technological Power Structures

The Mini’s April 23 grid begins with 1-Across, “AI ethics pioneer Gebru,” which yields Timnit. This reference to Dr. Timnit Gebru, founder of the Distributed AI Research Institute (DAIR), isn’t merely nostalgic; it underscores an ongoing reckoning in model governance. Her 2020 departure from Google over ethical concerns about large language models (LLMs) catalyzed a wave of scrutiny into training data provenance, particularly regarding consent and compensation for data labor. Today, that legacy echoes in the EU’s AI Act Article 10, which mandates documentation of training data sources for high-risk systems, a requirement still poorly enforced across major platforms. As one DAIR researcher noted in a recent interview, “We’ve moved from principles to paperwork, but the power asymmetry remains: those who generate the data rarely govern its use.”

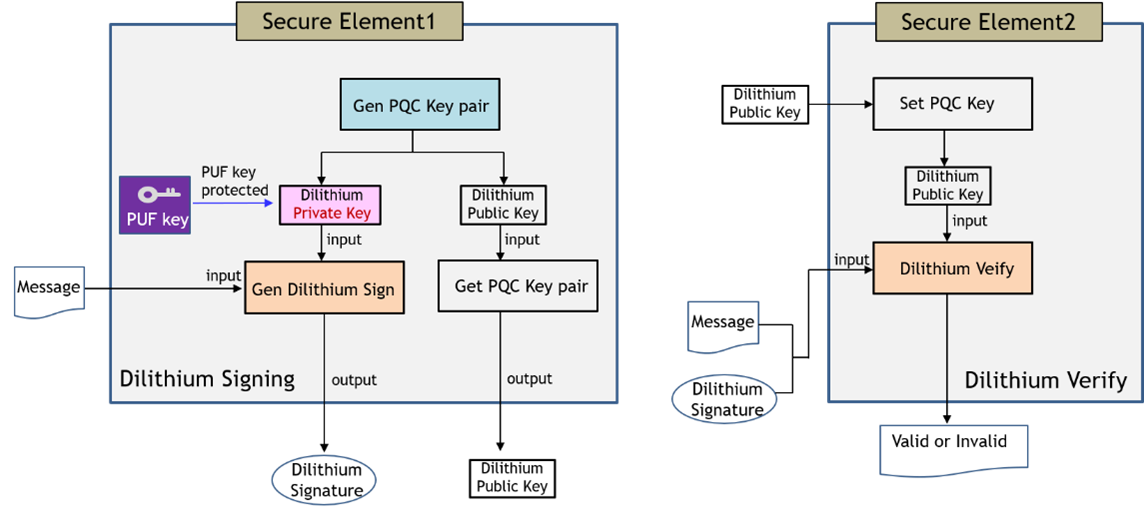

Just below, 5-Down: “Quantum-resistant crypto standard” yields Dilithium, one of the four algorithms selected by NIST in 2022 for post-quantum cryptography (PQC) standardization and since published as ML-DSA in FIPS 204. Unlike RSA or ECC, Dilithium relies on lattice-based cryptography, specifically the hardness of the Module Learning With Errors (MLWE) and Module Short Integer Solution (MSIS) problems. Its security doesn’t depend on factoring large numbers or computing discrete logarithms, the problems that Shor’s algorithm would break on a sufficiently powerful quantum computer. Benchmarking from the Open Quantum Safe project shows Dilithium’s signing speed at approximately 8,500 operations per second on an Intel Xeon Platinum 8480+, with verification topping 22,000 ops/sec, which is competitive with ECDSA P-256 despite much larger signatures (2,420 bytes vs. 64 bytes). Yet adoption remains fragmented: while Cloudflare began testing PQC in TLS 1.3 handshakes in late 2025, most enterprise VPNs and legacy HSMs still lack firmware updates, leaving a patchwork of exposure as “harvest now, decrypt later” attacks grow more feasible.
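
To make the Short Integer Solution idea concrete, here is a toy sketch in Python. It is not Dilithium and has no security value: the matrix `A`, the modulus `q = 7`, and the bound `beta = 1` are made-up illustration values, and the function names are hypothetical. The task is to find a short, nonzero integer vector `z` with `A·z ≡ 0 (mod q)`. At this size brute force succeeds instantly; real schemes pick dimensions where no known classical or quantum algorithm does.

```python
from itertools import product

def is_sis_solution(A, z, q, beta):
    """True if z is nonzero, every |z_i| <= beta, and A @ z == 0 (mod q)."""
    if all(v == 0 for v in z):
        return False
    if any(abs(v) > beta for v in z):
        return False
    return all(sum(a * v for a, v in zip(row, z)) % q == 0 for row in A)

def brute_force_sis(A, q, beta):
    """Try every candidate vector with entries in [-beta, beta] (toy sizes only)."""
    n_cols = len(A[0])
    for z in product(range(-beta, beta + 1), repeat=n_cols):
        if is_sis_solution(A, z, q, beta):
            return z
    return None

# Toy instance (assumed values): 2 equations, 6 unknowns, modulus 7.
# A short solution must exist here: there are 2**6 = 64 binary vectors but
# only 7**2 = 49 possible values of A @ x mod 7, so two binary vectors
# collide, and their difference has entries in {-1, 0, 1}.
A = [[1, 2, 3, 4, 5, 6],
     [3, 1, 4, 1, 5, 2]]
q, beta = 7, 1

z = brute_force_sis(A, q, beta)
print("short solution:", z)
```

The search space grows as `(2*beta + 1) ** n_cols`, which is why the same loop becomes hopeless at cryptographic dimensions.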

Where Open Source Meets Operational Reality: The Git Paradox

3-Across, “Code collaboration platform,” yields Git. On the surface, this seems trivial. But Git’s dominance masks a deeper stratification in the developer ecosystem. Git itself, strictly a distributed version control system rather than a platform, remains open source under GPLv2, but the real power now lies in the platforms built atop it: GitHub (Microsoft), GitLab, and Bitbucket (Atlassian). These aren’t just repository hosts; they’re integrated development platforms offering AI-assisted coding (Copilot, GitLab Duo), automated security scanning, and CI/CD orchestration, features increasingly locked behind proprietary tiers. A 2025 IEEE study found that 68% of Fortune 500 companies use GitHub Enterprise, with average annual costs exceeding $24,000 per 100 users, a figure that rises when factoring in mandatory Advanced Security licenses for dependency scanning and secret leakage detection.

This creates what Git core contributor Junio Hamano described in a 2024 Linux Plumbers Conference talk as “the inversion of control”: “We built Git to decentralize authority. Now, the majority of contributions flow through platforms that can deplatform, demonetize, or alter visibility with opaque algorithms.” The tension surfaced starkly in early 2026 when GitHub temporarily restricted access to several AI safety research repositories following DMCA takedown notices. The restrictions were later reversed, but not before triggering a migration wave to self-hosted GitLab instances and Radicle, a peer-to-peer code network built on Git. Still, network effects prevail: over 90% of new open-source projects launch on GitHub, per GitHub’s own Octoverse report, reinforcing a gravity well that challenges true decentralization.
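
Part of what makes Git decentralized by design is content addressing: an object’s ID is a hash of its content, so any clone can verify what it received without trusting the host that served it. The sketch below shows how a blob ID is derived, assuming the classic SHA-1 object format; the function name `git_blob_id` is hypothetical.

```python
import hashlib

def git_blob_id(content: bytes) -> str:
    """Hash the content the way Git does for a blob: 'blob <size>\\0' + bytes."""
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()

# The ID depends only on the bytes, never on which server hosts the repo.
print(git_blob_id(b""))
print(git_blob_id(b"hello world\n"))
```

The same file should produce the same ID from `git hash-object` on any machine, which is what lets a mirror on a self-hosted server stand in for the original.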

LLMs and the Illusion of Agency: Scale, Latency, and the Hidden Tax of Inference

4-Down, “AI text generator, briefly,” yields LLM. The clue’s brevity belies the architectural complexity beneath. Modern LLMs like Nemotron 4 340B (NVIDIA) or Granite 3.0 8B (IBM) rely on transformer blocks whose attention cost scales quadratically with sequence length, a fundamental constraint that drives up inference costs. For a 340B-parameter model running at fp8 precision, generating a single token requires roughly 680 GFLOP (680 billion floating-point operations, about two per parameter). On an H100 SXM5, this translates to ~1.8ms per token under ideal batching, but real-world latency often exceeds 120ms due to memory bandwidth saturation, kernel launch overhead, and poor batch utilization in interactive settings.
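
The gap between compute cost and observed latency can be sketched with back-of-the-envelope arithmetic. The Python below is a rough roofline-style estimate, not a benchmark. It assumes about two floating-point operations per parameter per token, and the hardware figures (roughly 2e15 FLOP/s of dense fp8 compute and 3.35e12 bytes/s of memory bandwidth) are assumed round numbers for an H100-class accelerator. It also ignores that 340 GB of fp8 weights would have to be sharded across several devices.

```python
def flops_per_token(n_params: float) -> float:
    """Rule of thumb: one multiply and one add per parameter per token."""
    return 2 * n_params

def compute_bound_ms(n_params: float, peak_flops: float) -> float:
    """Time per token if arithmetic throughput were the only limit."""
    return flops_per_token(n_params) / peak_flops * 1e3

def memory_bound_ms(n_params: float, bytes_per_param: float, bandwidth: float) -> float:
    """Time per token at batch size 1, when every weight is read once per token."""
    return n_params * bytes_per_param / bandwidth * 1e3

N_PARAMS = 340e9          # 340B parameters
PEAK_FP8_FLOPS = 2e15     # assumed dense fp8 peak, FLOP/s
HBM_BANDWIDTH = 3.35e12   # assumed memory bandwidth, bytes/s

print(f"FLOPs per token:    {flops_per_token(N_PARAMS):.3g}")
print(f"compute-bound time: {compute_bound_ms(N_PARAMS, PEAK_FP8_FLOPS):.2f} ms")
print(f"memory-bound time:  {memory_bound_ms(N_PARAMS, 1, HBM_BANDWIDTH):.1f} ms")
```

Under these assumptions the arithmetic takes well under a millisecond per token, while streaming the weights takes on the order of 100 ms. That is why unbatched, interactive decoding tends to be limited by memory bandwidth rather than by raw compute.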

This latency tax has spurred a quiet revolution in model optimization. Techniques like quantization-aware training (QAT), sparse activation (e.g., Mixtral’s 8-expert MoE), and speculative decoding now aim to reduce the effective compute per token without sacrificing quality. NVIDIA’s TensorRT-LLM, for instance, claims up to 5x throughput gains on Hopper architectures through kernel fusion and paged attention, yet these gains assume homogeneous workloads. In mixed-tenant cloud environments, noisy neighbors and priority inversion can erase 40% of expected performance, according to a February 2026 study from UC Berkeley’s RISELab. Meanwhile, the energy cost remains staggering: training a single 340B model consumes approximately 1,200 MWh, equivalent to the annual electricity use of about 110 U.S. households, raising urgent questions about the sustainability of scaling laws.
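
Of the techniques named above, speculative decoding is the easiest to show in miniature. The Python below is a toy sketch of the greedy, exact-match variant: a cheap draft model guesses a few tokens ahead, and the expensive target model keeps the longest prefix it agrees with, then supplies its own token at the first mismatch. Both “models” are stand-in arithmetic functions invented for illustration, and production systems verify all drafted positions in a single batched forward pass rather than in a Python loop.

```python
def target_next(seq):
    """Stand-in for the large model's greedy next-token choice (toy arithmetic)."""
    return (3 * seq[-1] + len(seq)) % 11

def draft_next(seq):
    """Stand-in for the small model: agrees with the target except at every 5th position."""
    guess = target_next(seq)
    return guess if len(seq) % 5 else (guess + 1) % 11

def greedy_decode(prompt, n_new):
    """Baseline: one target call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target_next(seq))
    return seq

def speculative_decode(prompt, n_new, k=4):
    """Draft up to k tokens, keep the prefix the target agrees with, repeat."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    verify_rounds = 0
    while len(seq) < goal:
        proposal = []
        for _ in range(min(k, goal - len(seq))):
            proposal.append(draft_next(seq + proposal))
        verify_rounds += 1  # one (conceptually batched) target pass per round
        for i, tok in enumerate(proposal):
            expected = target_next(seq + proposal[:i])
            if tok != expected:
                # Keep the agreed prefix, then the target's own token.
                seq.extend(proposal[:i])
                seq.append(expected)
                break
        else:
            seq.extend(proposal)  # every drafted token was accepted
    return seq, verify_rounds

baseline = greedy_decode([1], 20)
spec_out, rounds = speculative_decode([1], 20)
print(spec_out == baseline, rounds)
```

The output matches plain greedy decoding token for token; the saving is that the target model is consulted in fewer rounds than there are tokens, and how many fewer depends on how often the draft guesses right.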

Zero Trust: From Buzzword to Battleground in Enterprise Security

1-Down: “Security model assuming breach” — answer: Zero Trust. Far from being a product, Zero Trust is an architectural philosophy rooted in the 2010 Forrester report that coined the term: “never trust, always verify.” Its implementation hinges on microsegmentation, identity-centric policies, and continuous authentication—principles now codified in NIST SP 800-207 and CISA’s Zero Trust Maturity Model. Yet despite widespread adoption claims, a 2025 Ponemon Institute survey found that only 22% of organizations have achieved Stage 3 (“Consistent”) maturity, with most stalled at Stage 1 (“Initial”) due to legacy system incompatibilities and skill gaps.

The real challenge lies in operationalizing least-privilege access at scale. Consider a global bank with 150,000 endpoints: enforcing just-in-time (JIT) access via tools like BeyondTrust or CyberArk requires integrating with HR systems, CMDBs, and SIEMs—each with its own data schema and update latency. Misconfigurations are common; a 2024 IBM X-Force report attributed 34% of cloud breaches to overpermissioned identities, often stemming from poorly maintained role-based access control (RBAC) policies. As one Fortune 500 CISO told me under condition of anonymity, “We bought the tools. We didn’t buy the process. Zero Trust fails not because the tech doesn’t work, but because organizations treat it as a project, not a culture shift.”
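
What just-in-time, least-privilege access means at the level of a single request can be sketched in a few lines. The Python below is a minimal illustration, not how BeyondTrust or CyberArk work internally: the `Grant` type, the `is_allowed` function, and the example user and resource names are all hypothetical. The policy denies by default and allows a request only when the device is compliant and an unexpired grant matches the exact user, resource, and action.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass(frozen=True)
class Grant:
    user: str
    resource: str
    action: str
    expires_at: datetime  # JIT grants are time-boxed, not standing

def is_allowed(grants, user, resource, action, device_compliant, now):
    """Deny by default; every request is re-checked against current state."""
    if not device_compliant:
        return False
    return any(
        g.user == user
        and g.resource == resource
        and g.action == action
        and now < g.expires_at
        for g in grants
    )

issued = datetime(2026, 4, 23, 9, 0, tzinfo=timezone.utc)
grants = [Grant("alice", "payments-db", "read", issued + timedelta(minutes=30))]

# Within the window, on a compliant device: allowed.
print(is_allowed(grants, "alice", "payments-db", "read", True, issued + timedelta(minutes=10)))
# After expiry, the same request is denied without anyone revoking anything.
print(is_allowed(grants, "alice", "payments-db", "read", True, issued + timedelta(minutes=31)))
```

The overpermissioning problem described above corresponds to grants that are broader than the action needed or that never expire; the check itself is simple, and keeping the grant data accurate across HR systems, CMDBs, and SIEMs is the hard part.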

The Takeaway: Solving the Puzzle Demands More Than Vocabulary

Completing the NYT Mini Crossword on April 23, 2026, isn’t just about filling in squares; it’s about recognizing how each answer represents a fault line in the technological landscape. Timnit Gebru’s advocacy challenges us to interrogate who benefits from AI. Dilithium reminds us that cryptographic agility isn’t optional; it’s existential. Git’s dominance reveals the fragility of open-source ideals under platform capitalism. LLMs expose the hidden costs of scale in both energy and latency. And Zero Trust proves that even the soundest architecture collapses without organizational discipline.

These aren’t abstract concepts for puzzle aficionados. They are live wires in the infrastructure shaping our digital future. To solve them requires not just a pencil, but a willingness to look beyond the grid—and into the code, the contracts, and the consequences.