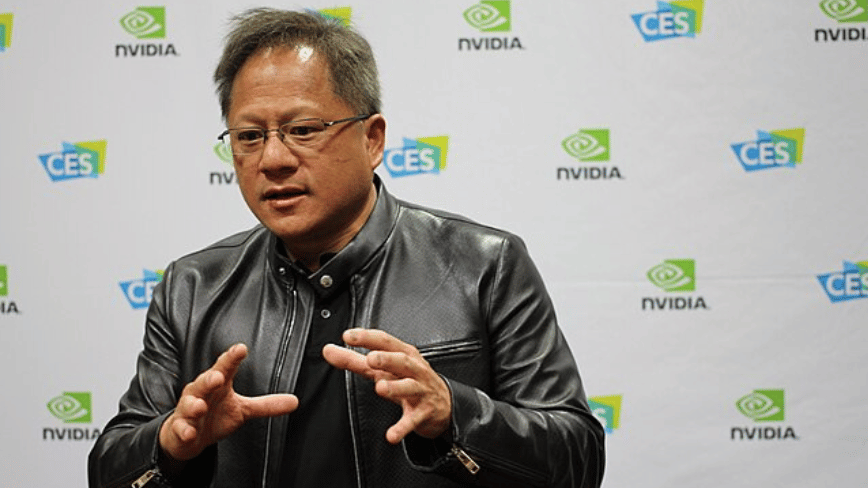

Nvidia CEO Jensen Huang warned on the Dwarkesh Podcast that it would be “a horrible outcome” for US technological competitiveness if Chinese AI lab DeepSeek optimized its V4 foundation model for Huawei’s Ascend 950PR chips instead of Nvidia hardware. The remark signals escalating tensions in the global AI chip race, as DeepSeek prepares to shift from Nvidia’s H100 GPUs to domestically produced Chinese silicon amid tightening export controls.

The Technical Reality Beneath the Warning

Huang’s concern is not merely geopolitical posturing; it stems from measurable performance gaps. Nvidia’s H100 delivers approximately 989 TFLOPS of dense FP16 tensor performance with 80GB of HBM3 memory, while Huawei’s Ascend 950PR, based on the Da Vinci architecture, offers around 600 TFLOPS of FP16 with 64GB of HBM3. Crucially, the 950PR lacks a mature software stack: its CANN (Compute Architecture for Neural Networks) framework still lags behind CUDA in operator coverage and kernel optimization depth. DeepSeek’s V4, rumored to be a 236B-parameter MoE model, would face significant porting costs to move from CUDA-optimized kernels to Ascend’s proprietary IR. Early benchmarks shared by anonymous model engineers at a recent AI Infrastructure Alliance meetup suggest the migration could increase inference latency by 30-40%.
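To put the quoted specs in perspective, the short sketch below works through the arithmetic. It uses only the figures cited above and assumes FP16 weights (2 bytes per parameter) and decimal gigabytes. The function name and the weights-only sizing rule are illustrative assumptions, not anything DeepSeek, Nvidia, or Huawei has published.

```python
import math

# Figures as quoted in the article; treat them as reported, not verified.
H100_FP16_TFLOPS, H100_HBM_GB = 989, 80
ASCEND_FP16_TFLOPS, ASCEND_HBM_GB = 600, 64

PARAMS_BILLION = 236   # rumored V4 parameter count
BYTES_PER_PARAM = 2    # assumption: weights stored in FP16


def min_devices_for_weights(params_billion, bytes_per_param, hbm_gb):
    """Lower bound on accelerators needed just to hold the weights.

    Uses decimal gigabytes and ignores activations, KV cache, and any
    parallelism overhead, so real deployments need more than this.
    """
    weights_gb = params_billion * bytes_per_param
    return math.ceil(weights_gb / hbm_gb)


flops_ratio = ASCEND_FP16_TFLOPS / H100_FP16_TFLOPS
h100_devices = min_devices_for_weights(PARAMS_BILLION, BYTES_PER_PARAM, H100_HBM_GB)
ascend_devices = min_devices_for_weights(PARAMS_BILLION, BYTES_PER_PARAM, ASCEND_HBM_GB)

print(f"Peak FP16 ratio (Ascend / H100): {flops_ratio:.2f}")
print(f"Minimum devices for weights: H100={h100_devices}, Ascend={ascend_devices}")
```

Under these assumptions the weights alone occupy 472GB, which means at least six H100s or eight Ascend 950PRs before any memory is set aside for activations or caching. The peak-FLOPS ratio of roughly 0.61 is a ceiling on paper; it says nothing about how much of that peak each software stack actually achieves.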

“The real issue isn’t raw FLOPS—it’s ecosystem maturity. Moving DeepSeek’s V4 to Ascend means rewriting custom MoE routing kernels that took years to tune on CUDA. Without equivalent profiling tools like Nsight Systems, debugging distributed training faults becomes exponentially harder.”
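For readers unfamiliar with what an MoE routing kernel computes, here is a minimal pure-Python reference for generic top-k gating. It is a teaching sketch only: the function names and the `k=2` default are assumptions, it is not DeepSeek’s routing scheme, and a production kernel would fuse these steps on the accelerator rather than loop over tokens.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts for one token.

    Returns (expert_index, weight) pairs, with weights renormalized
    so that they sum to 1 across the selected experts.
    """
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def dispatch(batch_logits, k=2):
    """Group (token_index, weight) pairs by the expert they are routed to."""
    n_experts = len(batch_logits[0])
    buckets = {expert: [] for expert in range(n_experts)}
    for token, logits in enumerate(batch_logits):
        for expert, weight in route_top_k(logits, k):
            buckets[expert].append((token, weight))
    return buckets


# Two tokens, four experts: hypothetical router scores.
batch = [[2.0, 1.0, 0.1, -1.0], [0.0, 0.5, 3.0, 2.5]]
for expert, tokens in dispatch(batch).items():
    print(expert, tokens)
```

The logic itself is simple. The tuning effort the quote refers to goes into making the gather, scatter, and load balancing around it run efficiently across many devices, and that is the part tied to a specific hardware and software stack.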

Ecosystem Fragmentation and the Open-Source Tug-of-War

This potential shift threatens to bifurcate the global AI developer community. DeepSeek’s models, including the widely adopted DeepSeek-Coder-V2, have been primarily distributed via Hugging Face with CUDA-specific Docker images and TensorRT-LLM optimizations. A pivot to Ascend would require maintaining two software stacks, one for CUDA and another for CANN, which would split community contributions between them. Lock-in risk also grows as Huawei promotes its MindSpore framework as a PyTorch alternative, though adoption remains limited outside China because English documentation is sparse and fewer pre-trained models are available. Conversely, Nvidia’s recent open-sourcing of parts of TensorRT-LLM and its NVLM model family aims to lower switching costs for developers wary of vendor lock-in.

Third-party providers are already feeling the pressure. Companies such as Lamini and Together AI, which offer model fine-tuning as a service, report more inquiries about Ascend compatibility but say demand is still too low to justify the engineering investment without clearer market signals. As the CTO of one Silicon Valley AI startup put it off the record: “We’ll support Ascend when our customers demand it, but right now, 95% of our GPU workloads run on Nvidia, and porting isn’t trivial when your CI/CD pipeline assumes CUDA 12.x.”

Benchmarking the Invisible War: Software Over Silicon

While raw chip specs dominate headlines, the decisive battleground lies in software optimization. Nvidia’s advantage extends beyond hardware to its full-stack dominance: CUDA libraries (cuDNN, NCCL), TensorRT compiler optimizations, and Triton Inference Server create a moat Huawei struggles to cross. Independent tests by MLCommons show that even when running identical Llama 3 70B workloads, Huawei’s Ascend 910B (predecessor to 950PR) achieves only 65% of H100 throughput due to software inefficiencies—not silicon limitations. Huawei claims its upcoming CANN 8.0 will close this gap via graph-level fusion and asynchronous execution improvements, but peer-reviewed validation remains scarce.
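A rough way to read the 65% figure is in terms of fleet size. The sketch below assumes throughput scales linearly with device count, which real clusters rarely achieve, and the helper name is hypothetical.

```python
import math


def devices_to_match(baseline_devices, relative_throughput):
    """Devices needed to equal a baseline fleet's aggregate throughput.

    relative_throughput is per-device throughput as a fraction of the
    baseline device (0.65 for the figure cited above). Assumes perfect
    linear scaling, so the result is optimistic.
    """
    if relative_throughput <= 0:
        raise ValueError("relative_throughput must be positive")
    return math.ceil(baseline_devices / relative_throughput)


for fleet in (8, 64):
    print(fleet, "baseline devices ->", devices_to_match(fleet, 0.65))
```

With the cited ratio, matching an 8-device baseline takes 13 devices and matching a 64-device baseline takes 99, before accounting for interconnect or scheduling losses.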

This dynamic mirrors historical platform shifts: just as AMD’s ROCm gained traction in HPC through open-source persistence rather than chip superiority alone, Huawei’s long-term strategy hinges on cultivating developer mindshare. Yet without transparent performance disclosures—unlike Nvidia’s regular MLPerf submissions—verifying progress remains challenging for external analysts.

The Takeaway: A Strategic Inflection Point

Huang’s “horrible outcome” framing reflects a legitimate concern: a successful DeepSeek migration to Ascend would validate China’s ability to circumvent sanctions through full-stack innovation, accelerating a technological decoupling that could reshape global AI infrastructure. For enterprises, the immediate risk lies not in chip availability but in the cost of software diversification, since maintaining two AI stacks increases operational complexity and testing overhead. Developers should prioritize framework-agnostic tools such as ONNX Runtime and PyTorch 2.0’s torch.compile to hedge against platform volatility. The question is not which chip wins today; it is whether the AI ecosystem can remain unified amid rising technological nationalism.
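As one illustration of that hedging advice, the sketch below selects ONNX Runtime execution providers in preference order, so the same exported model can run on CUDA, CANN, or CPU depending on what the installed build supports. The preference order, the helper names, and the `model.onnx` path are assumptions made for the example. The provider strings are the identifiers ONNX Runtime uses for its CUDA, CANN, and CPU backends.

```python
# Assumed preference order; adjust to the hardware you actually target.
PREFERENCE = [
    "CUDAExecutionProvider",
    "CANNExecutionProvider",
    "CPUExecutionProvider",
]


def pick_providers(available, preference=PREFERENCE):
    """Return the preferred providers that this runtime build offers."""
    chosen = [p for p in preference if p in available]
    if not chosen:
        raise RuntimeError(f"No supported provider among: {available}")
    return chosen


def make_session(model_path):
    """Create an inference session on the best available backend.

    onnxruntime is imported lazily so pick_providers can be used and
    tested on machines where it is not installed.
    """
    import onnxruntime as ort

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)


# Selection logic only; no accelerator or model file required.
print(pick_providers(["CPUExecutionProvider", "CANNExecutionProvider"]))

# Hypothetical usage with a real model file:
# session = make_session("model.onnx")
```

Keeping backend selection in one small function lets a CI pipeline exercise the same model on whichever accelerator a given runner has, without scattering vendor-specific branches through application code.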