The NVIDIA-Google Cloud Alliance: A Full-Stack Power Play for Agentic and Physical AI

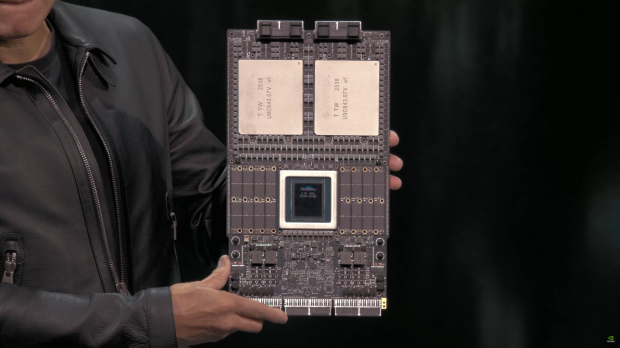

In 50 words: NVIDIA and Google Cloud have co-engineered a full-stack AI platform—spanning Blackwell GPUs, Vera Rubin NVL72 racks, and Gemini Enterprise—to push agentic and physical AI into production. This collaboration delivers 10x cost efficiency, confidential computing, and open-model support, reshaping enterprise AI, robotics, and industrial digital twins.

This week’s Google Cloud Next in Las Vegas wasn’t just another keynote—it was a declaration of war on the last mile of AI deployment. NVIDIA and Google Cloud have spent a decade co-engineering a full-stack AI platform, but their latest collaboration isn’t incremental. It’s a structural shift in how agentic and physical AI move from lab experiments to factory floors, data centers, and even sovereign clouds. The stakes? Nothing less than the next decade of enterprise AI, robotics, and industrial automation.

Why This Isn’t Just Another GPU Announcement

Most AI hardware announcements focus on raw performance—teraflops, memory bandwidth, or token throughput. The NVIDIA-Google Cloud partnership does that, but it also solves three critical bottlenecks that have kept AI stuck in prototype purgatory:

- Cost: The new Vera Rubin NVL72 racks deliver 10x lower inference cost per token and 10x higher token throughput per megawatt than the previous generation. For context, that’s the difference between running a 100B-parameter model at $0.0001 per token versus $0.001—a game-changer for enterprises scaling agentic workflows.

- Scale: The A5X instances can scale to 960,000 NVIDIA Rubin GPUs across multisite clusters, enabling workloads that were previously confined to supercomputers. This isn’t just about training larger models; it’s about running thousands of parallel agentic simulations for robotics or digital twins without hitting a wall.

- Security: Confidential VMs with NVIDIA Blackwell GPUs bring hardware-level encryption to multi-tenant environments, ensuring prompts, models, and data remain encrypted even from cloud operators. This brings GPU confidential computing, already offered on earlier GPU generations, to Blackwell in the cloud, addressing a major compliance hurdle for regulated industries like healthcare and finance.
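To make the cost bullet concrete, here is a minimal Python sketch that applies the illustrative per-token prices quoted above ($0.001 versus $0.0001) to a monthly workload. The token volume and the function name are assumptions made up for this example, not figures published by NVIDIA or Google Cloud.

```python
from decimal import Decimal

# Illustrative per-token prices taken from the example in the text above.
PREVIOUS_GEN_PRICE = Decimal("0.001")
RUBIN_PRICE = Decimal("0.0001")


def monthly_inference_cost(tokens_per_month: int, price_per_token: Decimal) -> Decimal:
    """Return the monthly spend in dollars for a given token volume."""
    return tokens_per_month * price_per_token


# Assumption: a hypothetical agentic workload generating 5 billion tokens a month.
tokens = 5_000_000_000
old_cost = monthly_inference_cost(tokens, PREVIOUS_GEN_PRICE)
new_cost = monthly_inference_cost(tokens, RUBIN_PRICE)

print(f"previous generation: ${old_cost:,.0f}")
print(f"Vera Rubin NVL72:    ${new_cost:,.0f}")
print(f"ratio: {old_cost / new_cost:.0f}x")
```

The sketch uses `Decimal` rather than floats so that very small per-token prices multiply out without rounding noise.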

But the real breakthrough isn’t in the specs—it’s in the integration. Google Cloud’s AI Hypercomputer now runs NVIDIA’s entire software stack, from NeMo for model training to NIM microservices for deployment. This isn’t just a hardware upgrade; it’s a full-stack rearchitecture for agentic AI.

The Agentic AI Stack: How It Actually Works

Agentic AI—systems that can reason, plan, and act autonomously—requires more than just a powerful GPU. It demands a tightly integrated stack spanning hardware, software, and security. Here’s how NVIDIA and Google Cloud are delivering it:

| Layer | NVIDIA Component | Google Cloud Integration | Real-World Impact |
|---|---|---|---|
| Hardware | Blackwell GPUs, Rubin NVL72, ConnectX-9 SuperNICs | A5X/A4X VMs, Confidential VMs, Virgo networking | 10x cost efficiency, 80K-GPU single-site clusters, hardware-encrypted inference |
| Software | NeMo, Nemotron, NIM microservices, Omniverse | Gemini Enterprise Agent Platform, Vertex AI, Kubernetes Engine | Managed RL training, open-model customization, digital twin simulation |
| Security | Confidential Computing, Blackwell encryption | Confidential VMs, Google Distributed Cloud | End-to-end encryption for prompts, models, and data in multi-tenant environments |

One of the most underrated aspects of this collaboration is the managed reinforcement learning (RL) API built with NVIDIA NeMo RL. RL is notoriously difficult to scale—it requires dynamic cluster sizing, failure recovery, and job orchestration—but Google Cloud’s Managed Training Clusters automate these complexities. This means enterprises can focus on agent behavior (e.g., autonomous cybersecurity response or robotics planning) without worrying about infrastructure.
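To see why failure recovery is one of the harder parts of RL at scale, consider this toy Python simulation of a job that resumes from its last checkpoint whenever a node fails. It is a generic sketch, not the NeMo RL API or the Managed Training Clusters interface; every name and parameter here is hypothetical.

```python
import random


def run_training_job(total_steps: int, checkpoint_every: int,
                     failure_rate: float, max_restarts: int, seed: int = 0) -> dict:
    """Simulate a training job that resumes from its last checkpoint after a failure."""
    rng = random.Random(seed)
    last_checkpoint = 0  # last step that was safely persisted
    restarts = 0
    step = 0
    while step < total_steps:
        if rng.random() < failure_rate:
            # Simulated node failure: work since the last checkpoint is lost.
            restarts += 1
            if restarts > max_restarts:
                raise RuntimeError(f"gave up after {max_restarts} restarts")
            step = last_checkpoint
            continue
        step += 1
        if step % checkpoint_every == 0:
            last_checkpoint = step
    return {"steps_completed": step, "restarts": restarts}


result = run_training_job(total_steps=1_000, checkpoint_every=50,
                          failure_rate=0.01, max_restarts=100)
print(result)
```

A managed service would take over this kind of loop, along with cluster resizing and scheduling, so that teams only write the agent logic.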

For developers, the integration of NVIDIA Cosmos Reason 2 into Google Vertex AI is a game-changer. Cosmos Reason 2 is a multimodal model that can process text, images, and sensor data to enable robots and AI agents to “see, reason, and act” in physical environments. This is the foundation for next-gen industrial AI, from automated quality control in factories to real-time logistics optimization.

The Ecosystem War: Who Wins and Who Loses?

This collaboration isn’t just about NVIDIA and Google Cloud—it’s a shot across the bow at the rest of the AI ecosystem. Here’s how it reshapes the competitive landscape:

1. The Open-Source vs. Closed-Source Divide

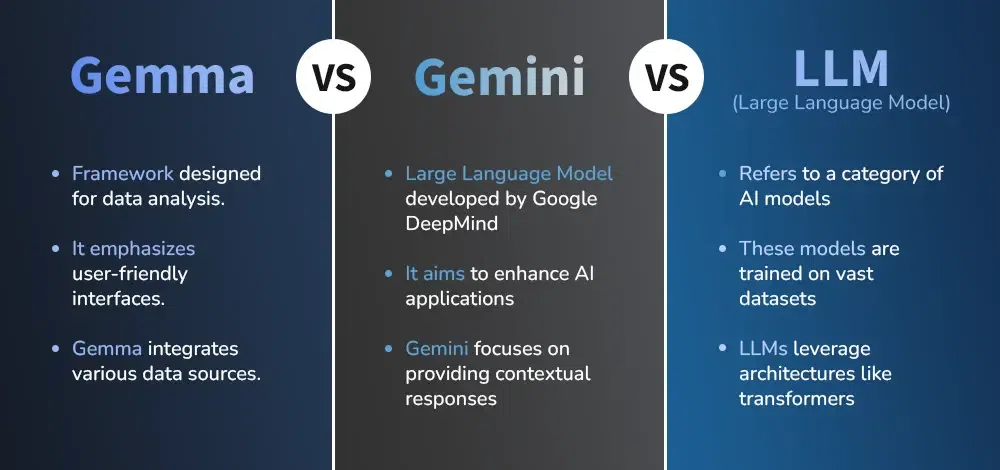

NVIDIA and Google Cloud are hedging their bets by supporting both open and closed models. The Nemotron 3 Super family of open models is available on Gemini Enterprise, alongside Google’s proprietary Gemini models and its open-weight Gemma models. This dual approach gives enterprises flexibility but also risks fragmenting the open-source community.

As Dr. Emily Zhang, CTO of AI startup Factory, noted in a recent interview with IEEE Spectrum:

“The NVIDIA-Google Cloud stack is the first truly production-ready platform for agentic AI, but it’s a double-edged sword. On one hand, it accelerates adoption by providing managed services for open models like Nemotron. On the other, it could push more enterprises toward proprietary solutions like Gemini, especially if they’re already locked into Google Cloud. The real test will be whether open-source alternatives like Meta’s Llama or Mistral can keep up with the performance and cost efficiency of this stack.”

2. The Cloud Wars: AWS and Azure on the Defensive

Amazon Web Services (AWS) and Microsoft Azure have dominated the cloud market for years, but this collaboration positions Google Cloud as the leader in AI-native infrastructure. The key differentiator? Vertical integration. AWS and Azure mix in-house silicon with vendor partnerships (e.g., AWS’s custom Trainium chips or Azure’s collaboration with AMD), whereas Google Cloud and NVIDIA have co-engineered every layer of this stack, from the Blackwell GPUs to the Virgo networking fabric.

This level of integration is why OpenAI is running large-scale inference on NVIDIA GB300 systems on Google Cloud, even though Microsoft is OpenAI’s primary cloud partner. As Rajesh Gopinathan, former CEO of Tata Consultancy Services, told Ars Technica:

“The NVIDIA-Google Cloud alliance is a masterclass in ecosystem lock-in. By controlling the hardware, software, and security layers, they’re making it nearly impossible for competitors to match their performance or cost efficiency. AWS and Azure will have to respond with their own co-engineered stacks, but they’re playing catch-up.”

3. The Developer Dilemma: Lock-In vs. Flexibility

For developers, the NVIDIA-Google Cloud stack offers unparalleled performance but at the cost of flexibility. The NeMo RL API and NIM microservices are optimized for this stack, making it difficult to port workloads to other clouds. This is a deliberate strategy—NVIDIA and Google Cloud are betting that the performance gains will outweigh the risks of lock-in.

However, the partnership also includes support for tools like NVIDIA Isaac Sim and Omniverse, which build on the open OpenUSD standard and are available on the Google Cloud Marketplace. This gives developers a path to build portable AI applications, but only if they stick to open standards.

Physical AI: The Next Frontier

While agentic AI (e.g., autonomous software agents) has dominated headlines, the real long-term play here is physical AI—systems that interact with the physical world, from robots to digital twins. NVIDIA and Google Cloud are positioning themselves as the default platform for this next wave, and their collaboration is already bearing fruit:

- Robotics: NVIDIA Isaac Sim, a robotics simulation framework, is now available on Google Cloud. This allows developers to train, simulate, and validate robots in physically accurate digital twins before deploying them in the real world. For example, a logistics company could use Isaac Sim to optimize a fleet of autonomous forklifts in a virtual warehouse before rolling them out in a real facility.

- Industrial Digital Twins: Companies like Siemens and Cadence are using NVIDIA Omniverse on Google Cloud to build digital twins of factories, power plants, and even entire cities. These digital twins can simulate everything from energy consumption to traffic patterns, enabling real-time optimization.

- Autonomous Systems: NVIDIA NIM microservices for models like Cosmos Reason 2 enable robots to process sensor data, reason about their environment, and take action. This is the foundation for next-gen autonomous vehicles, drones, and industrial robots.

The most compelling use case? Closed-loop AI. Imagine a digital twin of a wind farm that not only simulates energy output but also autonomously adjusts turbine angles in real time based on weather data. Or a robotics system that can detect a defect in a manufacturing line and automatically recalibrate the machinery. These aren’t futuristic concepts—they’re being built today on the NVIDIA-Google Cloud stack.
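The wind farm scenario boils down to a sense, act, simulate loop. The Python sketch below is a deliberately simplified illustration: the power model, the weather feed, and the controller are all invented for this example, it ignores angle wraparound at 360 degrees, and it does not use Omniverse or any NVIDIA or Google Cloud API.

```python
import math


def simulated_power(wind_speed: float, wind_dir_deg: float, yaw_deg: float) -> float:
    """Toy twin model: output falls off with the cosine of yaw misalignment."""
    misalignment = math.radians(wind_dir_deg - yaw_deg)
    return max(0.0, wind_speed ** 3 * math.cos(misalignment))


def control_step(wind_dir_deg: float, yaw_deg: float, max_turn_deg: float = 5.0) -> float:
    """Turn the nacelle toward the wind, limited to max_turn_deg per step."""
    error = wind_dir_deg - yaw_deg
    turn = max(-max_turn_deg, min(max_turn_deg, error))
    return yaw_deg + turn


# Hypothetical weather feed: (wind speed in m/s, wind direction in degrees).
weather_feed = [(8.0, 10.0), (8.5, 20.0), (9.0, 20.0), (9.0, 20.0), (9.0, 20.0)]

yaw = 0.0
for wind_speed, wind_dir in weather_feed:
    yaw = control_step(wind_dir, yaw)                    # act on the latest reading
    power = simulated_power(wind_speed, wind_dir, yaw)   # re-simulate the twin
    print(f"yaw={yaw:5.1f} deg  power={power:7.1f} (arbitrary units)")
```

The rate limit in `control_step` stands in for the physical constraint that a real turbine cannot snap to a new heading instantly, which is why the loop needs several steps to converge.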

What This Means for Enterprise IT

For CIOs and CTOs, the NVIDIA-Google Cloud collaboration presents both opportunities and challenges:

The 30-Second Verdict

- Adopt if: You’re scaling agentic AI, robotics, or digital twins and need a full-stack solution with hardware-level security.

- Avoid if: You’re heavily invested in AWS or Azure and can’t justify the migration costs.

- Watch closely if: You’re in a regulated industry (e.g., healthcare, finance) and need confidential computing for compliance.

Key Considerations

- Cost Efficiency: The 10x improvement in inference cost per token is real, but only if you’re running at scale. Smaller workloads may not see the same benefits.

- Security: Confidential VMs with Blackwell GPUs are a major step forward for multi-tenant security, but they’re still in preview. Expect limited availability in 2026.

- Lock-In: The deeper you integrate with NVIDIA’s software stack (NeMo, NIM, Omniverse), the harder it will be to switch clouds. Plan your exit strategy now.

- Open vs. Closed: If you’re committed to open models, the Nemotron family is a great option, and Google’s open-weight Gemma models are supported as well. If you prefer proprietary models, Gemini is fully supported.
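The "only at scale" caveat can be checked with a quick break-even estimate. This Python sketch assumes a hypothetical one-time migration cost and reuses the article's illustrative per-token prices; none of these numbers are vendor pricing.

```python
def break_even_tokens(migration_cost: float, old_price: float, new_price: float) -> float:
    """Tokens needed before per-token savings repay a one-time migration cost."""
    savings_per_token = old_price - new_price
    if savings_per_token <= 0:
        raise ValueError("new price must be lower than old price")
    return migration_cost / savings_per_token


# Assumptions: a hypothetical $250,000 migration, and the illustrative
# per-token prices used earlier in this article ($0.001 versus $0.0001).
tokens_needed = break_even_tokens(250_000, 0.001, 0.0001)
print(f"break-even after about {tokens_needed / 1e6:,.0f} million tokens")
```

If your monthly volume is a small fraction of the break-even figure, the migration may take years to pay for itself.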

The Bottom Line: A New Era of AI Infrastructure

NVIDIA and Google Cloud aren’t just selling GPUs or cloud instances; they’re selling a platform. This collaboration is the first true full-stack solution for agentic and physical AI, and it’s setting a new standard for performance, cost efficiency, and security. For enterprises, the message is clear: If you want to deploy AI at scale, you’ll need to look beyond individual models or hardware accelerators. The future belongs to those who can integrate hardware, software, and security into a seamless whole.

As for the rest of the industry? They’re playing catch-up. AWS and Azure will need to respond with their own co-engineered stacks, but for now, NVIDIA and Google Cloud have the lead—and they’re not slowing down.

For more details, check out the official Google Cloud blog post or NVIDIA’s Blackwell architecture deep dive.