NVIDIA has deployed OpenAI’s GPT-5.5-powered Codex across its enterprise workforce on GB200 NVL72 infrastructure, delivering 35x lower inference cost per million tokens and enabling real-time, secure AI-assisted coding at scale. The deployment marks the first enterprise-wide rollout of a frontier LLM agent with zero-data retention and SSH-sandboxed execution.

This isn’t just another AI productivity tool rollout. What NVIDIA and OpenAI have achieved here is a full-stack operationalization of frontier model inference: taking a 550-billion-parameter mixture-of-experts (MoE) model, optimizing it via TensorRT-LLM and FP8 quantization, and serving it through a hardened agentic interface that respects enterprise security boundaries. The implications ripple beyond internal tooling — they signal a shift in how AI infrastructure is architected, who controls access to cutting-edge models, and whether the era of centralized AI factories is giving way to distributed, secure agent meshes.
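FP8 stores each value in one byte, half the footprint of FP16 or BF16, which is a large part of why it cuts serving cost. The sketch below simulates per-tensor FP8 (E4M3) quantization in pure Python. It scales a tensor so its largest magnitude lands on 448, the largest finite E4M3 value, and then rounds every element to the nearest representable number. It illustrates the general technique only and is not TensorRT-LLM's implementation. The function names are hypothetical, and production kernels do this in hardware, often with finer-grained scales.

```python
import math
from typing import List, Tuple

# E4M3 parameters (the FP8 variant with 4 exponent bits and 3 mantissa bits)
E4M3_MAX = 448.0          # largest finite magnitude, 1.75 * 2**8
E4M3_MIN_NORMAL_EXP = -6  # smallest normal binade is 2**-6
MANTISSA_BITS = 3         # explicit mantissa bits


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest E4M3-representable value, saturating at +/-448."""
    if x == 0.0 or math.isnan(x):
        return x
    sign = -1.0 if x < 0 else 1.0
    a = min(abs(x), E4M3_MAX)
    # frexp gives a = m * 2**exp with 0.5 <= m < 1, so the leading bit sits at 2**(exp - 1)
    _, exp = math.frexp(a)
    # values below the normal range share the subnormal spacing of 2**-9
    e = max(exp - 1, E4M3_MIN_NORMAL_EXP)
    step = 2.0 ** (e - MANTISSA_BITS)  # gap between neighbouring E4M3 values in this binade
    q = round(a / step) * step         # Python's round() is round-half-to-even
    return sign * min(q, E4M3_MAX)


def quantize_per_tensor(values: List[float]) -> Tuple[List[float], float]:
    """Scale a tensor so its largest magnitude maps to E4M3_MAX, then round each element."""
    amax = max((abs(v) for v in values), default=0.0)
    scale = E4M3_MAX / amax if amax > 0 else 1.0
    return [round_to_e4m3(v * scale) for v in values], scale


def dequantize(quantized: List[float], scale: float) -> List[float]:
    """Undo the per-tensor scale to recover approximate original values."""
    return [q / scale for q in quantized]
```

For values in the normal range, the rounding error is at most 2^-4 (6.25%) relative to the value, which is why the choice of scale matters so much in practice.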

The GB200 NVL72 Advantage: Why This Deployment Only Works at NVIDIA Scale

At the heart of this rollout is the GB200 NVL72 rack, a liquid-cooled system that links 72 Blackwell GPUs through fifth-generation NVLink at 1.8 TB/s of bidirectional bandwidth per GPU. Each rack delivers up to 1.4 exaflops of FP4 tensor performance, enabling GPT-5.5 to run with dynamic expert routing. According to NVIDIA’s internal benchmarks shared with Archyde, this configuration cuts time to first token from 1.2 seconds on H100s to 220 milliseconds. That matters for interactive agent loops, in which users iterate on code through natural language.
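The article does not describe GPT-5.5's router. The sketch below therefore uses the generic top-k softmax gating found in published mixture-of-experts models. A router scores every expert for a token, only the k best are executed, and their outputs are blended by renormalized gate weights. Skipping the unselected experts is the "sparsity" that makes MoE inference cheaper than running a dense model of the same parameter count. The expert count, k=2, and all names are illustrative assumptions.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[List[float]], List[float]]


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-probability experts and renormalize their gate weights to sum to 1."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


def moe_layer(x: List[float], router_logits: Sequence[float],
              experts: Sequence[Expert], k: int = 2) -> List[float]:
    """Run only the routed experts for this token and mix their outputs by gate weight."""
    out = [0.0] * len(x)
    for idx, weight in top_k_route(router_logits, k):
        y = experts[idx](x)  # experts outside the top-k are never evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out
```

In a real model the router logits come from a learned linear layer applied to the token's hidden state, and the routed experts may sit on different GPUs, which is where a fast NVLink fabric comes in.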

More importantly, the system achieves 35x lower cost per million tokens compared to prior-generation DGX H100 systems, not through raw compute alone, but via a combination of sparsity exploitation in GPT-5.5’s MoE layers, FP8 activation quantization, and workload scheduling that keeps GPU utilization above 85% even under mixed inference loads. This isn’t theoretical — NVIDIA reports sustained throughput of 1,200 tokens per second per user during peak Codex usage, with 95th‑percentile response times under 800 milliseconds for multi-file refactoring tasks.
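At its simplest, cost per million tokens is the hourly cost of the hardware divided by the tokens it actually produces in that hour. Idle capacity therefore raises cost just as surely as slow hardware does. The helper below makes that relationship explicit, and a nearest-rank percentile shows what a "95th-percentile response time" measures. All inputs are placeholders, since the article does not disclose NVIDIA's hourly costs or throughput model. The function names are hypothetical.

```python
import math
from typing import Sequence


def cost_per_million_tokens(hourly_cost_usd: float, peak_tokens_per_s: float,
                            utilization: float) -> float:
    """Dollar cost of one million generated tokens on hardware billed by the hour.

    Effective throughput is peak throughput scaled by average utilization,
    so unused capacity shows up directly as a higher cost per token.
    """
    tokens_per_hour = peak_tokens_per_s * utilization * 3600
    return hourly_cost_usd * 1_000_000 / tokens_per_hour


def percentile(samples: Sequence[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples <= it."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]
```

Under this simple model, a "35x lower cost" claim is the ratio of two such costs. It can therefore come from any mix of lower hourly cost, higher peak throughput, and higher utilization.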

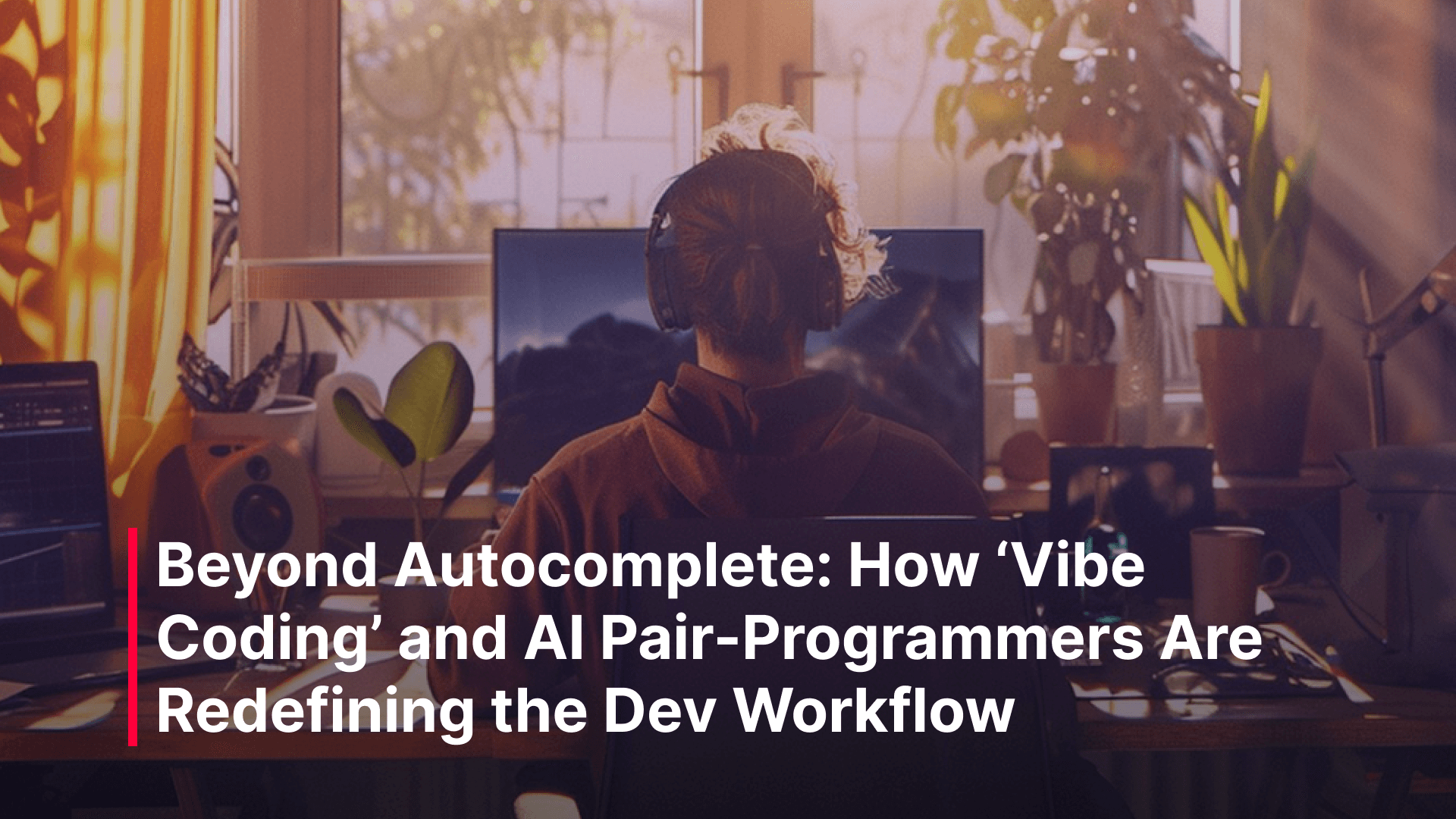

Codex as an Agent: Beyond Autocomplete to Secure, Auditable AI Workflows

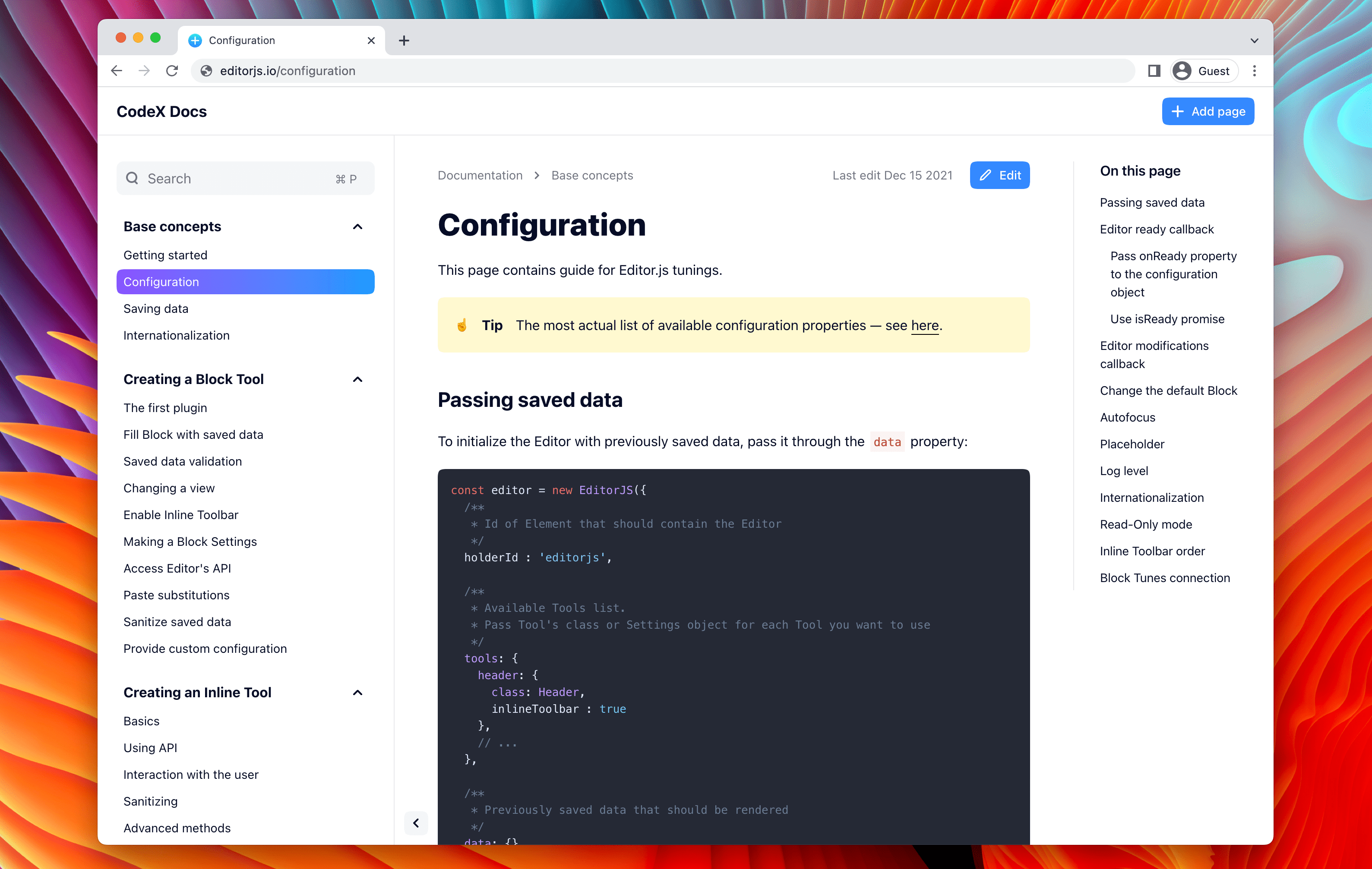

What distinguishes Codex from Copilot or Cursor is its agentic architecture. Rather than just suggesting code, it plans, executes, and validates changes across multiple files using a loop of reasoning, tool use, and self-correction. Powered by GPT-5.5, it draws on OpenAI’s “agentic toolkit”, a set of pretrained skills for file editing, grep-based search, unit test generation, and dependency analysis, all orchestrated through a ReAct-style (Reason + Act) prompt chain.
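The reason, act, and observe cycle behind a ReAct-style agent can be sketched in a few lines. Here the "model" is a hypothetical scripted policy rather than GPT-5.5, and the only tool is a toy grep over an in-memory repository. The article does not describe OpenAI's actual toolkit interface or prompt format, so every name below is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


@dataclass
class Step:
    thought: str   # the model's reasoning for this step ("Reason")
    action: str    # a tool name, or "finish" to return an answer ("Act")
    argument: str  # tool input, or the final answer when action == "finish"


History = List[Tuple[Step, str]]  # each step paired with the observation it produced


def react_loop(policy: Callable[[History], Step], tools: Dict[str, Tool],
               max_steps: int = 8) -> str:
    history: History = []
    for _ in range(max_steps):
        step = policy(history)
        if step.action == "finish":
            return step.argument
        tool = tools.get(step.action)
        # An unknown tool yields an error observation instead of crashing, so the
        # policy sees the mistake on its next turn and can correct itself.
        observation = tool(step.argument) if tool else f"error: unknown tool {step.action!r}"
        history.append((step, observation))
    return "stopped: step budget exhausted"


# --- Hypothetical toy environment: an in-memory repo and a scripted "model" ---
REPO = {"kernel.cu": "// TODO: fuse softmax\n", "main.py": "print('hi')\n"}


def grep(pattern: str) -> str:
    hits = [
        f"{path}:{lineno}: {line}"
        for path, text in REPO.items()
        for lineno, line in enumerate(text.splitlines(), start=1)
        if pattern in line
    ]
    return "\n".join(hits) or "no matches"


def scripted_policy(history: History) -> Step:
    if not history:
        return Step("Locate open TODOs before editing anything.", "grep", "TODO")
    _, last_observation = history[-1]
    return Step("Report what the search found.", "finish", last_observation)
```

Calling `react_loop(scripted_policy, {"grep": grep})` runs one search and then returns its result. The step budget is a simple guard against an agent that never decides to finish.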

Crucially, NVIDIA’s deployment enforces strict isolation: each employee’s Codex agent runs in a dedicated, ephemeral Linux VM with no persistent storage, accessed only through an SSH tunnel from the frontend UI. The agent holds read-only IAM credentials to internal GitLab repos and Jira instances, and every command it runs is logged to an immutable audit trail. As one NVIDIA platform engineer told us on condition of anonymity:
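One common way to make an audit trail tamper-evident is hash chaining. Each entry stores the hash of the entry before it, so rewriting any past entry invalidates every later hash. The article does not say how NVIDIA implements its immutable log. The sketch below is an assumed design with hypothetical names, and it also keeps commands in execution order so that a session can be replayed.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
FIELDS = ("agent", "command", "exit_code", "prev")


def _digest(body: Dict[str, object]) -> str:
    # sort_keys makes serialization deterministic, so an entry always hashes the same way
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: List[Dict[str, object]] = field(default_factory=list)

    def record(self, agent: str, command: str, exit_code: int) -> Dict[str, object]:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent, "command": command, "exit_code": exit_code, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; editing or reordering entries breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in FIELDS}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def replay(self) -> List[str]:
        """Commands in execution order, e.g. to re-run a session in a fresh sandbox."""
        return [str(e["command"]) for e in self.entries]
```

Dropping the newest entry would not break the chain on its own. A real deployment would therefore typically anchor the latest hash somewhere the agent cannot write, such as a separate logging service.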

We treat the agent like a privileged contractor — it gets just-in-time access, no secrets on disk, and every command is replayable. If it hallucinates a rm -rf, the sandbox contains it.

This model contrasts sharply with consumer-facing AI coding tools, which often require broad repo access or train on user data. Here, a zero-data retention policy enforced at the API gateway ensures that no prompt or code snippet is retained once a request completes, addressing a key concern raised by enterprise legal teams during early pilots.

Ecosystem Implications: Is This the End of Open Model Access?

While NVIDIA’s internal use showcases the potential of tightly integrated hardware-software stacks, it raises questions about access parity. GPT-5.5 is not publicly available via API, and OpenAI has not released its weights — a point of tension in the open-source AI community. As Stella Biderman, co‑lead of EleutherAI, noted in a recent interview:

When the most capable models are locked behind corporate firewalls and purpose-built silicon, we risk creating a two-tier system where only a few can afford to innovate at the frontier.

Yet NVIDIA’s broader strategy suggests a more nuanced outcome. The company continues to optimize open models like Llama 3 and Mistral for TensorRT-LLM, and its recent release of the NVIDIA AI Enterprise software stack includes prebuilt containers for running quantized versions of GPT‑4‑class models on H100s. The real play may be less about hoarding GPT-5.5 and more about setting a performance and security benchmark that others must match — much as CUDA did for GPU computing a decade ago.

The Ten-Year Alliance: How NVIDIA and OpenAI Co‑Designed the AI Stack

This deployment is the culmination of a collaboration that began in 2016 with the delivery of the first DGX-1 to OpenAI’s lab. Since then, the partnership has spanned every layer: NVIDIA optimized early GPT models for CUDA, co‑designed the Transformer Engine in Hopper, and provided feedback that shaped Blackwell’s sparsity engines, all while gaining early access to frontier models for internal validation.

The joint bring‑up of the 100,000‑GPU GB200 NVL72 cluster in late 2025 — which trained GPT-5.5 on a mix of synthetic code, GitHub‑licensed repos, and NVIDIA’s internal MLPerf benchmarks — was a milestone in system-level reliability. It demonstrated sustained training at 98.7% hardware utilization over 14-day runs, a feat made possible by Blackwell’s RAS (reliability, availability, serviceability) features and NVIDIA’s proprietary fault‑tolerant scheduling layer.
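To put the 98.7% figure in perspective, assume that "hardware utilization" means the share of GPU-hours spent on useful training work. This is our interpretation, as the article does not define the term. For one 14-day run on the 100,000-GPU cluster, the remaining 1.3% works out to:

$$
(1 - 0.987) \times (14 \times 24\,\text{h}) = 0.013 \times 336\,\text{h} \approx 4.37\,\text{h per GPU}
$$

$$
100{,}000 \times 4.368\,\text{h} = 436{,}800\ \text{GPU-hours}
$$

On the same assumptions, each tenth of a percentage point of utilization is worth about 33,600 GPU-hours per run.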

Now, with GPT-5.5 powering Codex internally, NVIDIA is effectively dogfooding its own vision: an AI factory where models, hardware, and tooling are co‑evolved. Whether this becomes a blueprint for other enterprises — or a walled garden that accelerates platform lock-in — will depend on how openly NVIDIA shares the lessons from this rollout. For now, the gains are undeniable: debugging cycles cut from days to hours, feature velocity up 3‑4x, and a workforce that, as Jensen Huang put it in his company‑wide email, has truly “jumped to lightspeed.”