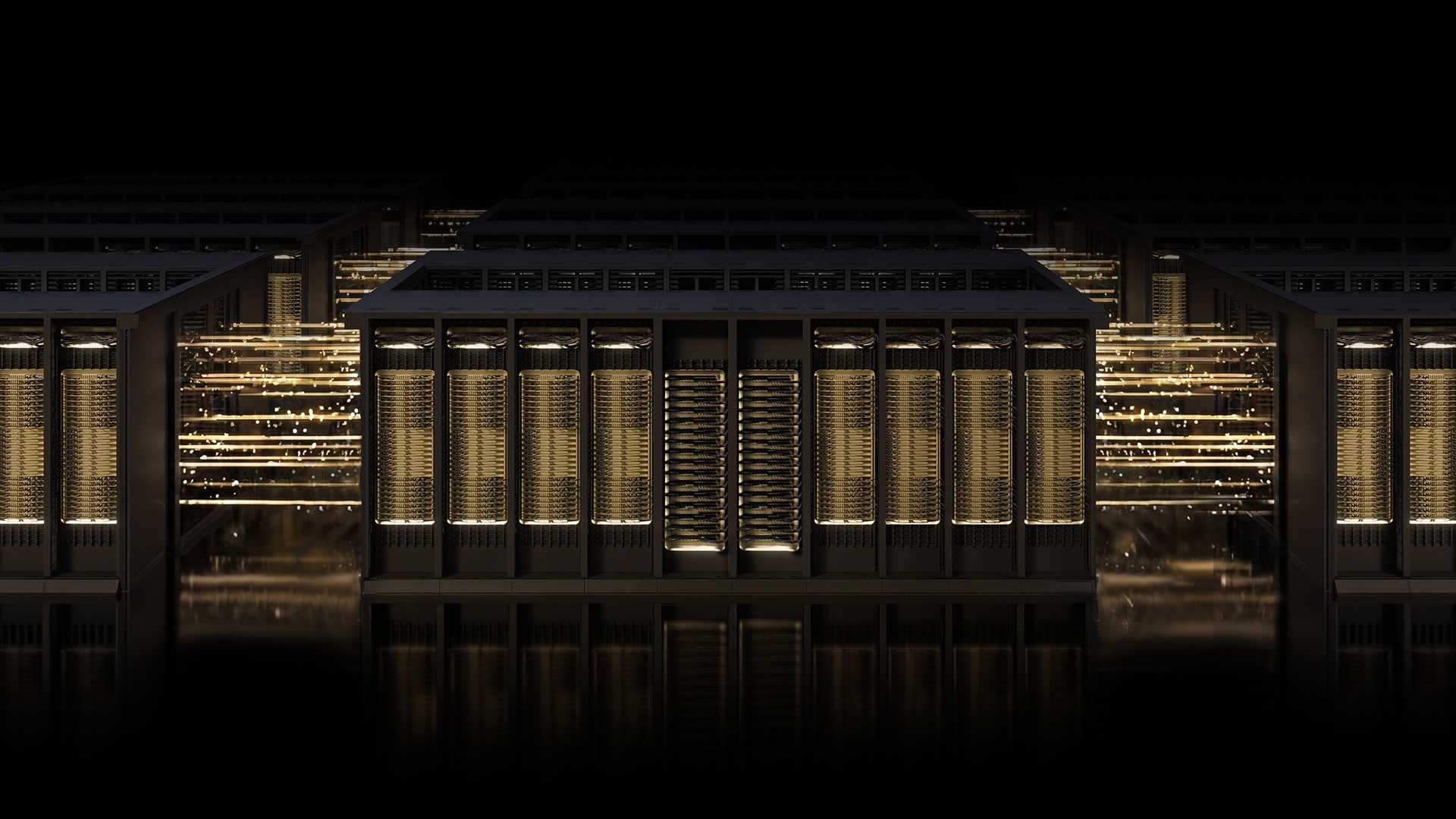

NVIDIA has launched Multipath Reliable Connection (MRC) on its Spectrum-X Ethernet fabric, enabling gigascale AI factories for OpenAI and Microsoft. By optimizing RDMA transport across multiple network paths, MRC eliminates congestion-driven GPU idling, ensuring maximum throughput for frontier LLM training at massive scale.

For the uninitiated, the “AI factory” isn’t a metaphor; it’s a brutal exercise in thermodynamics and packet switching. When you are scaling to hundreds of thousands of GPUs, the network is no longer just a pipe: it is the primary bottleneck. Historically, the industry was split: you either chose the proprietary, low-latency performance of InfiniBand or the ubiquitous, scalable nature of Ethernet. Spectrum-X is NVIDIA’s attempt to end that dichotomy, and the introduction of MRC is the killing blow to the “Ethernet is too slow for AI” argument.

It is a sophisticated play in silicon diplomacy.

The Death of the Single-Lane Bottleneck

To understand why MRC matters, you have to understand the failure of standard RoCE (RDMA over Converged Ethernet). A traditional RDMA connection is essentially a single-lane highway. If a packet hits a congested switch or a link fails, the entire flow stalls. In a training run for a frontier model, this creates “tail latency” spikes. Because training steps are synchronized through collective operations, when one GPU waits for data, the other 32,000 GPUs in the cluster might sit idle, wasting millions of dollars in compute cycles per hour.
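The straggler effect described above can be put into numbers. The sketch below is a toy model rather than a measurement of any real cluster: the function names and the timing figures are hypothetical, and it assumes a fully synchronous step in which every GPU must wait for the slowest one.

```python
def step_time(per_gpu_times_ms):
    """A synchronous step finishes only when the slowest GPU finishes."""
    return max(per_gpu_times_ms)


def idle_gpu_ms(per_gpu_times_ms):
    """Total GPU-milliseconds spent waiting for the slowest participant."""
    slowest = max(per_gpu_times_ms)
    return sum(slowest - t for t in per_gpu_times_ms)


# Hypothetical numbers: 8 GPUs, 7 finish their exchange in 10 ms,
# while one is delayed to 50 ms by a congested path.
times = [10.0] * 7 + [50.0]

print(step_time(times))    # 50.0
print(idle_gpu_ms(times))  # 280.0
```

A single delayed participant stretches the step from 10 ms to 50 ms, and the seven healthy GPUs together waste 280 GPU-milliseconds. Under this model the waste grows linearly with the number of GPUs waiting, which is why tail latency matters more as clusters grow.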

MRC fundamentally rewrites the transport layer. Instead of a single path, it treats the network as a grid. By distributing a single RDMA connection across multiple physical paths, MRC ensures that no single congested switch can throttle the entire training job. It’s the difference between a single-point-of-failure bridge and a redundant city street map.
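To make the contrast concrete, here is a minimal sketch comparing per-flow path selection with spreading one connection's packets across every healthy path. It is an illustration under stated assumptions, not the MRC algorithm: the modulo "hash", the round-robin policy, and the function names are all hypothetical stand-ins.

```python
from collections import Counter


def single_path(flow_id, num_packets, num_paths):
    """Per-flow hashing: every packet of a flow uses the same path."""
    path = flow_id % num_paths  # stand-in for an ECMP-style hash (assumption)
    return Counter({path: num_packets})


def sprayed(num_packets, num_paths, unhealthy=frozenset()):
    """Round-robin the packets of one connection across all healthy paths."""
    healthy = [p for p in range(num_paths) if p not in unhealthy]
    if not healthy:
        raise RuntimeError("no healthy path available")
    load = Counter()
    for seq in range(num_packets):
        load[healthy[seq % len(healthy)]] += 1
    return load


print(single_path(flow_id=7, num_packets=1000, num_paths=4))
print(sprayed(num_packets=1000, num_paths=4))
print(sprayed(num_packets=1000, num_paths=4, unhealthy={2}))
```

In the single-path case one link carries all 1,000 packets; in the sprayed case each of four links carries 250, and marking path 2 unhealthy simply shifts its share onto the remaining three. A real multipath transport also has to handle out-of-order arrival at the receiver, which this sketch ignores.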

The hardware-level failure bypass is where this gets surgical. We are talking about detection and rerouting in microseconds. In the world of IEEE-standardized networking, that speed is the difference between a seamless training run and a catastrophic job crash that requires a full checkpoint reload.

The 30-Second Verdict: MRC vs. Standard RoCE

- Pathing: Standard RoCE is single-path; MRC is multi-path.

- Resilience: Standard RoCE relies on slow software timeouts; MRC uses microsecond hardware bypass.

- Utilization: MRC maximizes GPU bandwidth by load balancing in real time across the fabric.

- Scaling: MRC moves the ceiling from “large clusters” to “gigascale factories” (100k+ GPUs).

Engineering the Multiplanar Moat

The deployment at OpenAI and Microsoft’s Fairwater data center reveals a deeper architectural shift: the multiplanar network. By utilizing Spectrum-X, these entities are building independent network “planes.” Imagine three separate, parallel highways running between the same set of GPUs. If one highway suffers a total blackout, the other two carry the load without the GPUs ever realizing there was a flicker.
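The three-highway picture reduces to simple capacity arithmetic. The sketch below assumes three planes of equal bandwidth and a hypothetical offered load; the plane names and the 400 Gb/s figure are illustrative and are not taken from the Fairwater deployment.

```python
def remaining_capacity(plane_gbps, failed):
    """Sum the bandwidth of every plane that is still up."""
    return sum(bw for name, bw in plane_gbps.items() if name not in failed)


# Hypothetical fabric: three independent planes of 400 Gb/s each.
planes = {"plane-a": 400, "plane-b": 400, "plane-c": 400}
offered_load_gbps = 700

capacity = remaining_capacity(planes, failed={"plane-b"})
print(capacity)                       # 800
print(capacity >= offered_load_gbps)  # True
```

With one plane dark, two thirds of the aggregate bandwidth remains. Whether the GPUs notice depends on how much headroom the fabric was provisioned with, which is why the offered load is an explicit input here.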

This is where the Open Compute Project (OCP) integration becomes a strategic masterstroke. By releasing MRC as an open specification, NVIDIA isn’t just being generous; they are setting the industry standard. If AMD, Broadcom, and Intel adopt the MRC spec, the entire ecosystem begins to align with the way NVIDIA’s SuperNICs and switches handle traffic. It is a classic “open-core” strategy: give away the protocol to ensure your hardware remains the gold standard for implementing it.

    // Conceptual Logic of MRC Pathing
    if (path_congestion > threshold || link_status == DOWN) {
        reroute_packet(next_available_plane);
        trigger_hardware_bypass(microseconds);
    } else {
        distribute_load(multipath_grid);
    }
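The conceptual logic above can be turned into a small runnable model. This is a software sketch of the decision only: in the real system it reportedly happens in hardware, and the `Path` class, the `choose_path` function, and the 0.8 congestion threshold are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    congestion: float  # 0.0 (idle) to 1.0 (saturated); hypothetical metric
    link_up: bool = True


def choose_path(paths, preferred, threshold=0.8):
    """Keep the preferred path if it is healthy, otherwise reroute."""
    current = paths[preferred]
    if current.link_up and current.congestion <= threshold:
        return current  # normal case: stay on the load-balanced choice
    # Reroute: prefer paths that are up and below the congestion threshold.
    candidates = [p for p in paths if p.link_up and p.congestion <= threshold]
    if not candidates:
        # Everything is congested: fall back to any path that is still up.
        candidates = [p for p in paths if p.link_up]
    if not candidates:
        raise RuntimeError("all paths are down")
    return min(candidates, key=lambda p: p.congestion)


paths = [
    Path("p0", congestion=0.95),                 # congested
    Path("p1", congestion=0.20),                 # healthy
    Path("p2", congestion=0.50, link_up=False),  # link failure
]
print(choose_path(paths, preferred=0).name)  # p1
print(choose_path(paths, preferred=2).name)  # p1
```

Both the congested path and the failed link end up rerouted to `p1`, the least-loaded healthy option.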

The Geopolitics of the Fabric

This isn’t just about packets; it’s about platform lock-in. For years, the “Chip Wars” focused on the H100 or the Blackwell B200. But the real war is being fought in the fabric. If you control the network, you control the cluster.

By bridging the gap between Ethernet’s openness and InfiniBand’s performance, NVIDIA is effectively neutralizing the primary argument for moving to alternative networking stacks. When the world’s most powerful LLMs are being trained on MRC-enabled Spectrum-X, any competitor wanting to enter the “gigascale” market must either adopt NVIDIA’s open spec or build a vastly superior alternative from scratch.

As noted by industry analysts monitoring the shift toward AI-native hardware, the move toward open Ethernet standards is often a shield against antitrust scrutiny. By making the protocol open, NVIDIA can claim they are fostering an ecosystem, even while their proprietary hardware remains the most efficient way to run that protocol.

"The transition to AI-native Ethernet isn't just a technical upgrade; it's a fundamental shift in how we perceive the data center. We are moving from a collection of servers to a single, massive, distributed computer."

Operationalizing the Gigascale

For the sysadmins and network engineers tasked with managing these beasts, the real win is the telemetry. Spectrum-X provides fine-grained visibility into traffic paths that was previously the sole domain of proprietary InfiniBand fabrics. Troubleshooting a “stuck” training job in a 50,000-GPU cluster used to be like finding a needle in a haystack while the haystack was on fire. Now, the intelligent fabric control allows admins to pinpoint the exact switch or cable causing the jitter.
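As a rough illustration of what per-path telemetry makes possible, the sketch below flags links whose latency stands out from the rest of the fabric. It does not use any Spectrum-X API: the link names, the latency samples, and the "three times the fabric median" rule are all hypothetical.

```python
from statistics import median


def suspect_links(latency_us_by_link, factor=3.0):
    """Flag links whose median latency exceeds `factor` x the fabric median."""
    per_link = {
        link: median(samples) for link, samples in latency_us_by_link.items()
    }
    fabric_median = median(per_link.values())
    return sorted(
        link for link, m in per_link.items() if m > factor * fabric_median
    )


# Hypothetical per-link latency samples in microseconds.
samples = {
    "leaf1-spine1": [2.0, 2.1, 1.9],
    "leaf1-spine2": [2.2, 2.0, 2.1],
    "leaf2-spine1": [2.0, 40.0, 38.5],  # intermittently slow link
    "leaf2-spine2": [1.8, 2.0, 2.2],
}
print(suspect_links(samples))  # ['leaf2-spine1']
```

Comparing each link against the fabric-wide median rather than a fixed threshold keeps the check meaningful when the baseline latency shifts, for example under heavier overall load.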

| Metric | Legacy Ethernet | Spectrum-X w/ MRC |
|---|---|---|
| Recovery Time | Milliseconds/Seconds | Microseconds |
| Traffic Flow | Static/Hash-based | Dynamic Multipathing |
| GPU Idle Time | High (during congestion) | Near-Zero |
| Interoperability | High (Standard) | High (OCP Open Spec) |

The rollout we’re seeing this week in the latest beta deployments confirms that the “AI Factory” model is now viable. We are no longer talking about “scaling up” a few racks; we are talking about the industrialization of intelligence.

The Takeaway: NVIDIA has successfully weaponized the openness of Ethernet. By solving the RDMA congestion problem through MRC and the OCP, they’ve ensured that the infrastructure of the next decade of AI will be built on their terms. If you’re building a frontier model, you aren’t just buying GPUs—you’re buying into the Spectrum-X ecosystem.

For those diving deeper into the implementation, the official NVIDIA networking documentation and the latest OCP whitepapers are the only maps that matter in this new territory.