Astrophysics is currently facing a systemic paradigm shift as new observational data suggests Dark Matter—the invisible “glue” supposed to hold galaxies together—may be a mathematical artifact rather than a physical particle. This challenge to the $\Lambda$CDM (Lambda Cold Dark Matter) model, accelerated by AI-driven data analysis, is forcing a pivot toward modified gravity theories to explain galactic rotation curves.

For decades, the scientific community treated Dark Matter as the ultimate “software patch” for a buggy universe. We observed that galaxies spin faster than the visible mass should allow, so we simply invented an invisible substance to provide the extra gravity. It was a clean solution on paper, but the hardware—our detectors—has failed to deliver. Despite billions invested in cryogenic detectors and the Large Hadron Collider, we have zero confirmed detections of a WIMP (Weakly Interacting Massive Particle). We are essentially debugging a system where the primary variable doesn’t exist in the source code.
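To make the "spinning too fast" problem concrete, here is a minimal sketch of what Newtonian gravity predicts if all of a galaxy's visible mass sat inside the orbit. The point-mass approximation and the mass of 1e11 solar masses are illustrative assumptions, not measurements of any real galaxy.

```python
import math

G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30   # solar mass, kg
KPC = 3.086e19     # one kiloparsec, m


def keplerian_speed(enclosed_mass_kg: float, radius_m: float) -> float:
    """Circular orbital speed around a point mass: v = sqrt(G M / r)."""
    return math.sqrt(G * enclosed_mass_kg / radius_m)


# Assumed, illustrative visible mass (hypothetical galaxy).
visible_mass = 1e11 * M_SUN

for r_kpc in (10, 20, 40, 80):
    v_km_s = keplerian_speed(visible_mass, r_kpc * KPC) / 1e3
    print(f"r = {r_kpc:3d} kpc  ->  v = {v_km_s:6.1f} km/s")
```

The predicted speed halves every time the radius quadruples. Observed rotation curves instead stay roughly flat at large radii, and that gap is what Dark Matter was introduced to fill.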

This isn’t just a crisis for theorists; it’s a compute problem. The $\Lambda$CDM model requires massive N-body simulations to predict the large-scale structure of the universe. These simulations are computationally expensive, relying on large GPU clusters to track billions of virtual particles across billions of light-years. As we integrate the latest data batches from the JWST’s 2026 observation cycle, the discrepancies between simulation and reality are becoming too loud to ignore.
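For a feel of why N-body work is so expensive, here is a rough sketch of the naive approach: every particle feels every other particle, so the cost grows as the square of the particle count. This is a toy direct-summation kernel written with NumPy, not the tree or mesh method a production cosmology code would use; the function name and the softening value are assumptions made for illustration.

```python
import numpy as np

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2


def direct_sum_accelerations(positions, masses, softening=1e-3):
    """Newtonian acceleration on every particle from every other particle.

    positions: (N, 3) array in metres; masses: (N,) array in kg.
    The (N, N, 3) separation array is what makes this O(N^2).
    """
    # delta[i, j] is the position of particle j relative to particle i.
    delta = positions[None, :, :] - positions[:, None, :]
    # Softening keeps close encounters from producing huge forces.
    dist_sq = np.sum(delta**2, axis=-1) + softening**2
    inv_dist_cubed = dist_sq ** -1.5
    np.fill_diagonal(inv_dist_cubed, 0.0)  # a particle exerts no force on itself
    # a_i = G * sum_j m_j * delta_ij / |delta_ij|^3
    return G * np.einsum("ij,j,ijk->ik", inv_dist_cubed, masses, delta)


# Toy run: 1,000 unit-mass particles already means a million pair interactions.
rng = np.random.default_rng(0)
pos = rng.normal(size=(1000, 3))
mass = np.ones(1000)
acc = direct_sum_accelerations(pos, mass)
print(acc.shape)  # (1000, 3)
```

Doubling the particle count quadruples the work here, which is why real codes fall back on hierarchical approximations and large clusters.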

The $\Lambda$CDM Model is Hitting a Compute Wall

The standard model of cosmology, $\Lambda$CDM, is essentially a high-level abstraction. It works beautifully for the Cosmic Microwave Background (CMB), but it breaks down at the galactic scale. We are seeing “small-scale crises” where the predicted density of dark matter in galactic cores (the “cusp-core problem”) doesn’t match the flat density profiles we actually observe. To fix this, theorists keep adding “epicycles”—increasing the complexity of the model to fit the data, which is the scientific equivalent of adding nested if-else statements to a failing legacy codebase.

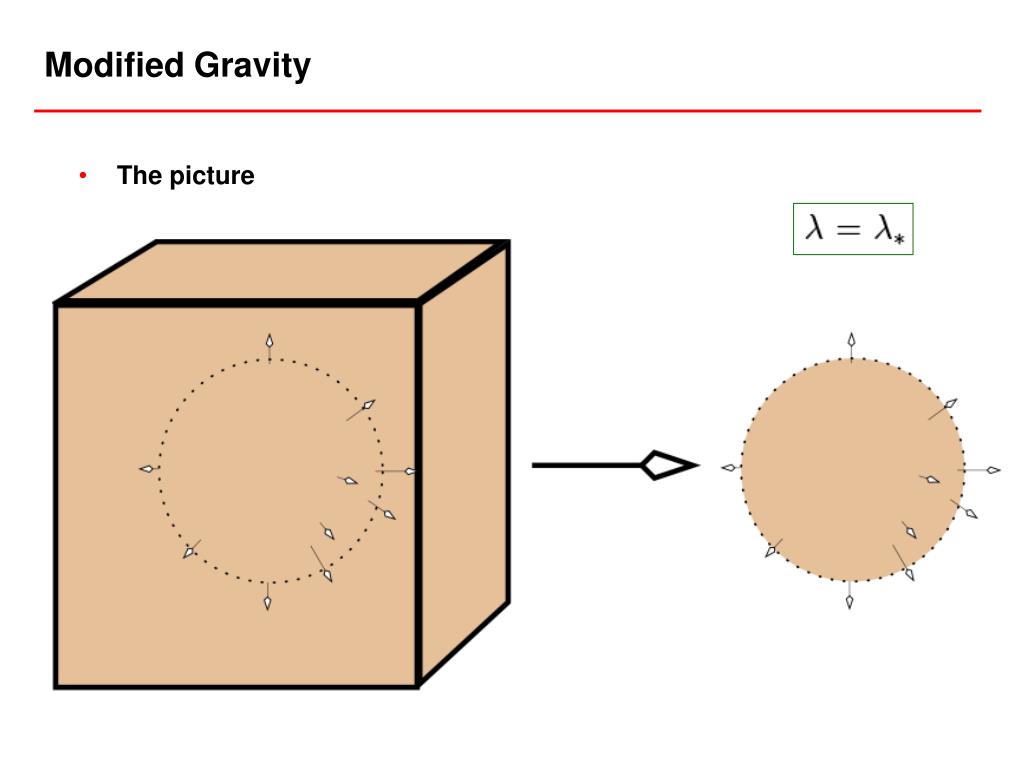

The alternative is MOND (Modified Newtonian Dynamics). Instead of adding invisible matter, MOND suggests that at extremely low accelerations—like those found at the edges of galaxies—the laws of gravity themselves change. It’s a more elegant solution, effectively refactoring the core physics engine rather than adding bloatware to the universe.
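As a sketch of what "the laws of gravity themselves change" means in practice, the snippet below applies one commonly used MOND interpolating function, $\mu(x) = x / (1 + x)$, to a point mass. The choice of interpolating function, the acceleration scale of about 1.2e-10 m/s², and the galaxy mass are assumptions for illustration; other interpolating functions appear in the literature.

```python
import math

G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30   # solar mass, kg
KPC = 3.086e19     # one kiloparsec, m
A0 = 1.2e-10       # assumed MOND acceleration scale, m/s^2


def newtonian_accel(mass_kg: float, radius_m: float) -> float:
    """Standard inverse-square acceleration toward a point mass."""
    return G * mass_kg / radius_m**2


def mond_accel(g_newton: float, a0: float = A0) -> float:
    """Solve g * mu(g / a0) = g_newton for g, with mu(x) = x / (1 + x).

    This reduces to the quadratic g^2 - g_newton * g - g_newton * a0 = 0.
    """
    return 0.5 * g_newton + math.sqrt(0.25 * g_newton**2 + g_newton * a0)


def circular_speed(accel: float, radius_m: float) -> float:
    """Speed of a circular orbit with centripetal acceleration accel."""
    return math.sqrt(accel * radius_m)


mass = 1e11 * M_SUN  # hypothetical galaxy, treated as a point mass
for r_kpc in (10, 40, 160, 640):
    r = r_kpc * KPC
    g_n = newtonian_accel(mass, r)
    v_newton = circular_speed(g_n, r) / 1e3
    v_mond = circular_speed(mond_accel(g_n), r) / 1e3
    print(f"r = {r_kpc:3d} kpc  Newton: {v_newton:6.1f} km/s  MOND: {v_mond:6.1f} km/s")
```

When the Newtonian acceleration is far above the scale `A0`, the two answers agree. Far below it, the MOND acceleration tends to `sqrt(g_newton * A0)`, so the orbital speed levels off at `(G * M * A0) ** 0.25` instead of falling away.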

The 30-Second Verdict: Particle vs. Law

- Dark Matter ($\Lambda$CDM): Assumes a new, undetected particle exists. High compute cost for simulations; zero hardware detection.

- Modified Gravity (MOND): Assumes gravity behaves differently at very low accelerations. Lower compute overhead; struggles to explain the CMB.

- The Bottom Line: We are moving from a “matter-centric” view to a “geometry-centric” view of the cosmos.

The Algorithmic Shift in Galactic Mapping

The real catalyst for this shift isn’t just better telescopes; it’s the application of LLM-style pattern recognition to astronomical catalogs. By employing deep learning architectures to analyze the morphology of thousands of galaxies, researchers are finding that the distribution of visible baryons (normal matter) predicts the rotation curves with striking accuracy, without needing any dark matter “filler.”

This suggests a tight coupling between baryonic matter and gravity that $\Lambda$CDM simply cannot explain without fine-tuning parameters to an absurd degree. When you have to tweak your constants every time you look at a new galaxy, your model isn’t predictive—it’s descriptive. It’s the difference between a machine learning model that generalizes and one that has simply overfit the training data.
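One published form of this baryon-to-gravity coupling is the radial acceleration relation fitted by McGaugh, Lelli and Schombert (2016), which maps the acceleration expected from baryons alone to the acceleration actually observed using a single fitted scale. Treating it as the relation alluded to here is an assumption on our part; the sketch below only shows its shape, with the function name chosen for illustration.

```python
import math

G_DAGGER = 1.2e-10  # fitted acceleration scale, m/s^2


def rar_observed_accel(g_bar: float, g_dagger: float = G_DAGGER) -> float:
    """Radial acceleration relation: g_obs = g_bar / (1 - exp(-sqrt(g_bar / g_dagger))).

    g_bar is the acceleration predicted from visible (baryonic) matter alone.
    """
    x = math.sqrt(g_bar / g_dagger)
    # -expm1(-x) equals 1 - exp(-x) but stays accurate when x is tiny.
    return g_bar / -math.expm1(-x)


for g_bar in (1e-8, 1e-10, 1e-12):
    boost = rar_observed_accel(g_bar) / g_bar
    print(f"g_bar = {g_bar:.0e} m/s^2  ->  observed / baryonic = {boost:.2f}")
```

One free parameter shared by every galaxy is the opposite of per-galaxy tuning: at high accelerations the ratio tends to 1, and at low accelerations the observed value tends to `sqrt(g_bar * g_dagger)`.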

“The persistent null results from direct detection experiments like LUX-ZEPLIN suggest we aren’t looking for a particle, but for a misunderstanding of spacetime curvature. We’ve spent forty years looking for a ghost in the machine, only to realize the machine itself is built differently than we thought.”

The technical implications are staggering. If we move toward a modified gravity framework, the entire pipeline for astrophysical pre-prints and simulation software will need a total rewrite. We are talking about shifting from particle-based simulations to field-based tensors that can handle non-linear gravitational scaling.

Why Quantum Computing is the Only Way Out

The deadlock between $\Lambda$CDM and MOND exists because our current classical compute architectures cannot efficiently simulate the “quantum gravity” regime. We are attempting to solve General Relativity (a smooth, continuous geometry) and Quantum Mechanics (discrete, probabilistic packets) on the same hardware. It’s a fundamental architectural mismatch.

The path forward lies in Quantum Simulation. By using qubits to map the entanglement of spacetime, we may finally find a theory of Quantum Gravity that explains why gravity appears “stronger” at galactic edges without needing a dark matter particle. This isn’t vaporware; it’s the primary driver for current IEEE research into topological quantum computing.

| Metric | $\Lambda$CDM (Dark Matter) | MOND (Modified Gravity) | Quantum Gravity (Emergent) |
|---|---|---|---|
| Detection Status | Null / Theoretical | Observational Correlation | Computational Hypothesis |
| Compute Complexity | $O(N \log N)$ – High | $O(N)$ – Moderate | Exponential (Classical) / Poly (Quantum) |
| Core Assumption | New Particle (WIMP/Axion) | Non-linear Acceleration | Spacetime Entanglement |
| Predictive Power | Excellent (Large Scale) | Excellent (Galactic Scale) | Theoretical (All Scales) |

The Data Pipeline Crisis: From JWST to Local Clusters

The sheer volume of data coming from the James Webb Space Telescope is creating a bottleneck. We are seeing “impossible” early galaxies—massive structures that formed far too quickly for the $\Lambda$CDM model to allow. In software terms, the universe is booting up faster than the OS should allow.

To process this, we’re seeing a shift toward edge computing in space. Instead of beaming raw telemetry back to Earth, we are deploying AI filters on-orbit to identify anomalies in real-time. This reduces the latency between observation and hypothesis testing. If we find more “impossible” galaxies, the $\Lambda$CDM model doesn’t just need a patch—it needs to be deprecated.
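As a stand-in for what such an on-orbit filter does, the sketch below flags measurements that sit far from the rest of a batch using robust statistics (median and median absolute deviation). This is a deliberately simple substitute for a learned model, and the function name, threshold, and sample values are hypothetical.

```python
import numpy as np


def flag_anomalies(values, threshold=5.0):
    """Return a boolean mask marking values far from the median, in MAD units."""
    values = np.asarray(values, dtype=float)
    median = np.median(values)
    mad = np.median(np.abs(values - median))
    if mad == 0.0:
        # No spread in the batch, so nothing can be called an outlier.
        return np.zeros(values.shape, dtype=bool)
    # 1.4826 scales the MAD to match the standard deviation for Gaussian data.
    robust_z = np.abs(values - median) / (1.4826 * mad)
    return robust_z > threshold


# Made-up batch of brightness readings; only the last one stands out.
batch = [1.0, 1.1, 0.9, 1.05, 0.95, 10.0]
print(flag_anomalies(batch))
```

Only the flagged entries would need to be prioritized for downlink, which is the latency saving described above.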

The “obvious” existence of Dark Matter was a convenient fiction that allowed us to keep using Newtonian physics in a regime where it no longer applied. As we refine our tools and our compute, we’re discovering that the universe is far stranger, and far more efficient, than our current models allow. We aren’t missing matter; we’re missing the correct equation.

For those tracking the “chip wars,” the takeaway is clear: the next leap in hardware won’t just be about faster LLMs or better GPUs. It will be about building the machines capable of simulating the fundamental fabric of reality. The race to solve the Dark Matter mystery is, in reality, a race to build the first truly useful quantum simulator.

Keep your eyes on the Nature Cosmology archives over the next six months. If the current trend holds, we are about to witness the largest “version update” to physics since Einstein.