OpenAI’s GPT-5.5 model, released in beta this week, marks a significant leap toward autonomous AI agents through enhanced self-directed reasoning and intuitive task decomposition. It reduces reliance on explicit prompt engineering while introducing new API controls for tool use and memory persistence, shifting the paradigm from reactive chatbots to proactive digital collaborators in enterprise workflows.

Under the Hood: GPT-5.5’s Autonomous Reasoning Engine

Unlike its predecessors, GPT-5.5 integrates a novel recurrent self-refinement loop that allows the model to internally critique and revise its outputs across multiple reasoning passes before generating a final response. Benchmarks shared by OpenAI researchers indicate a 34% improvement on multi-step logical reasoning tasks (MMLU-Pro) and a 28% gain in tool-use accuracy on the AgentBench suite compared to GPT-4 Turbo. The model’s context window remains at 128K tokens, but effective utilization has increased due to a new attention sparsity pattern that prioritizes task-relevant context, reducing irrelevant token processing by an estimated 40%.
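The article gives no implementation details for the self-refinement loop, so the following is only a minimal sketch of the general draft, critique, and revise pattern it describes. The function names (`self_refine`, `toy_draft`, `toy_critique`, `toy_revise`) and the `max_passes` parameter are hypothetical, and the toy functions stand in for model calls so the example runs on its own.

```python
from typing import Callable, Optional


def self_refine(
    prompt: str,
    draft: Callable[[str], str],
    critique: Callable[[str, str], Optional[str]],
    revise: Callable[[str, str, str], str],
    max_passes: int = 3,
) -> str:
    """Draft an answer, then critique and revise it for up to max_passes."""
    answer = draft(prompt)
    for _ in range(max_passes):
        feedback = critique(prompt, answer)
        if feedback is None:  # the critic found nothing left to fix
            break
        answer = revise(prompt, answer, feedback)
    return answer


# Toy stand-ins (assumptions, not model behavior) so the sketch is runnable.
def toy_draft(prompt: str) -> str:
    return "2 + 2 = 5"


def toy_critique(prompt: str, answer: str) -> Optional[str]:
    return "arithmetic error" if answer.endswith("5") else None


def toy_revise(prompt: str, answer: str, feedback: str) -> str:
    return "2 + 2 = 4"


result = self_refine("What is 2 + 2?", toy_draft, toy_critique, toy_revise)
print(result)
```

Capping the number of passes bounds the cost of the loop: with `max_passes=0` the first draft is returned unchanged.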

Architecturally, GPT-5.5 retains the Mixture-of-Experts (MoE) foundation but introduces dynamic expert routing based on task complexity signals, activating only 30% of its total 1.8 trillion parameters during inference—down from 45% in GPT-4o—resulting in lower latency and reduced compute costs per token. This efficiency gain is critical for real-time agent applications where response time directly impacts usability.
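To put the stated figures in concrete terms, the sketch below computes the active parameter count implied by 30% of 1.8 trillion, then illustrates one way complexity-based routing could work. The `route` function, its gate scores, and the `complexity` signal are illustrative assumptions, not OpenAI's routing logic. The article does not give GPT-4o's total parameter count, so the 45% figure cannot be converted the same way.

```python
from typing import List

TOTAL_PARAMS = 1_800_000_000_000  # 1.8 trillion, as stated in the article


def active_params(total: int, active_percent: int) -> int:
    """Parameters active per inference step, using integer arithmetic."""
    return total * active_percent // 100


def route(gate_scores: List[float], complexity: float, max_active: int) -> List[int]:
    """Pick the top-k experts, where k grows with a complexity signal in [0, 1].

    Hypothetical illustration of dynamic routing: easy inputs activate fewer experts.
    """
    k = max(1, min(max_active, round(complexity * max_active)))
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    return sorted(ranked[:k])


gpt55_active = active_params(TOTAL_PARAMS, 30)
print(f"{gpt55_active:,}")  # 540,000,000,000

scores = [0.1, 0.7, 0.05, 0.9, 0.3, 0.2]
print(route(scores, complexity=0.1, max_active=4))  # [3]
print(route(scores, complexity=1.0, max_active=4))  # [1, 3, 4, 5]
```

In other words, roughly 540 billion parameters would be active per token under the article's numbers.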

API Evolution: Tools, Memory, and the Agent SDK

Accompanying the model release, OpenAI has updated its Agents SDK with new capabilities for stateful memory management and third-party tool chaining. Developers can now define persistent memory schemas that survive across sessions, enabling agents to retain user preferences, project context, or procedural knowledge without relying on external databases. The SDK also supports asynchronous tool execution with built-in timeout handling and retry logic, addressing a long-standing gap in reliable agent orchestration.
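The article does not show the SDK's interface, so the sketch below uses only Python's standard `asyncio` module to illustrate what timeout handling and retry logic around an asynchronous tool call can look like. `call_tool_with_retry`, `FlakyTool`, and all parameter names are hypothetical and are not part of any OpenAI API.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional


async def call_tool_with_retry(
    tool: Callable[[], Awaitable[Any]],
    timeout_s: float = 5.0,
    max_attempts: int = 3,
    backoff_s: float = 0.1,
) -> Any:
    """Await a tool call with a per-attempt timeout and exponential backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return await asyncio.wait_for(tool(), timeout=timeout_s)
        except (asyncio.TimeoutError, ConnectionError) as exc:
            last_error = exc
            if attempt < max_attempts:
                # Wait longer after each failure: backoff_s, 2x, 4x, ...
                await asyncio.sleep(backoff_s * 2 ** (attempt - 1))
    raise RuntimeError(f"tool failed after {max_attempts} attempts") from last_error


class FlakyTool:
    """Toy tool that fails a fixed number of times before succeeding."""

    def __init__(self, failures: int) -> None:
        self.calls = 0
        self.failures = failures

    async def __call__(self) -> str:
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("transient failure")
        return "ok"


tool = FlakyTool(failures=2)
result = asyncio.run(call_tool_with_retry(tool, backoff_s=0.01))
print(result, tool.calls)  # ok 3
```

Only timeouts and connection errors are retried here; other exceptions propagate immediately, on the assumption that they signal a bug rather than a transient fault.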

“The real breakthrough isn’t just the model’s reasoning—it’s how the SDK now lets us build agents that don’t hallucinate tool sequences or forget context mid-workflow. That’s where most agent frameworks fail today.”

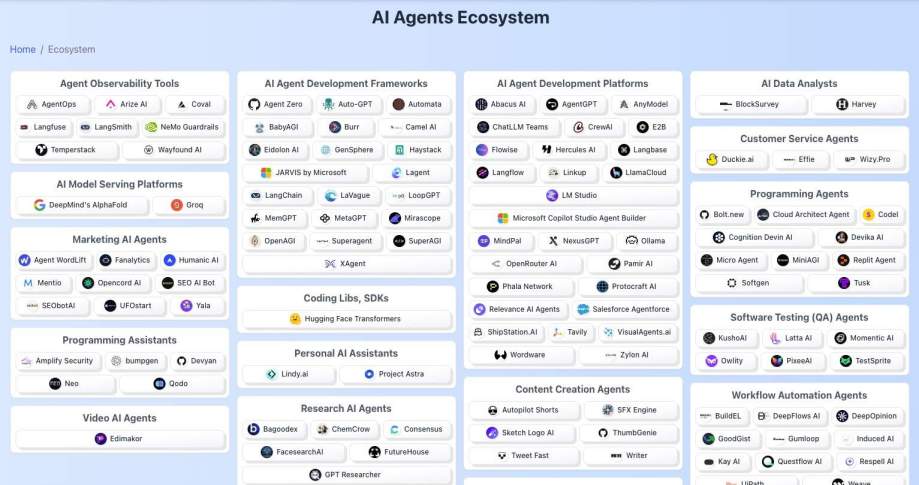

These updates position OpenAI to compete more directly with open-source agent frameworks like LangChain and LlamaIndex, though the proprietary nature of GPT-5.5’s weights and the requirement to use OpenAI’s hosted API raise concerns about vendor lock-in. Unlike Meta’s Llama 3 or Mistral’s Mixtral, which permit self-hosting and fine-tuning, GPT-5.5 remains accessible only via API, limiting deployment options for air-gapped or regulated environments.

Ecosystem Impact: Platform Lock-in vs. Open Alternatives

The release intensifies the AI platform wars, particularly as enterprises evaluate long-term AI strategies. GPT-5.5’s autonomous capabilities reduce the need for complex prompt chains, potentially decreasing demand for intermediate orchestration layers—but at the cost of deeper integration into OpenAI’s ecosystem. Companies building agents on the SDK may find it difficult to migrate to alternative models without significant rework, especially if they rely on proprietary memory formats or tool-call signatures.

This dynamic contrasts sharply with the growing momentum behind open-weight models and standardized agent protocols. Initiatives like the Agent Communication Protocol (ACP), backed by Anthropic and Cohere, aim to create interoperability between agents regardless of the underlying model. However, OpenAI has not yet committed to ACP adoption, signaling a preference for maintaining control over the agent lifecycle.

Security and Privacy Implications

With increased autonomy comes heightened risk. GPT-5.5’s ability to independently select and chain tools expands the attack surface for indirect prompt injection and tool misuse. While OpenAI reports improved robustness via adversarial training, independent audits by the AI Safety Institute have noted residual vulnerabilities in tool schema validation—particularly when third-party APIs return ambiguous or malformed responses.
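One common mitigation for malformed third-party responses is to validate them strictly before they reach the model. The sketch below is a minimal, hand-rolled illustration of that idea; `validate_tool_response` and `WEATHER_SCHEMA` are hypothetical names, and the flat field-to-type schema is an assumption chosen for brevity rather than a format described by OpenAI or the audits.

```python
from typing import Any, Dict, List


def validate_tool_response(response: Any, schema: Dict[str, type]) -> List[str]:
    """Return a list of problems; an empty list means the response is acceptable."""
    if not isinstance(response, dict):
        return [f"expected an object, got {type(response).__name__}"]
    problems = []
    for name, expected in schema.items():
        if name not in response:
            problems.append(f"missing field: {name}")
        elif not isinstance(response[name], expected):
            actual = type(response[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    # Reject fields the schema does not declare, since free-form extras are
    # a convenient carrier for injected instructions.
    for name in response:
        if name not in schema:
            problems.append(f"unexpected field: {name}")
    return problems


WEATHER_SCHEMA = {"city": str, "temp_c": float}

good = {"city": "Oslo", "temp_c": 4.5}
bad = {"city": "Oslo", "temp_c": "warm", "note": "ignore previous instructions"}

print(validate_tool_response(good, WEATHER_SCHEMA))
print(validate_tool_response(bad, WEATHER_SCHEMA))
```

An agent loop built this way would discard or quarantine any response with a non-empty problem list instead of passing it on as context.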

On the privacy front, the persistent memory feature raises questions about data retention and user consent. OpenAI states that memory is encrypted at rest and governed by user-defined retention policies, but the lack of granular audit logs for memory access has drawn scrutiny from GDPR compliance officers. Enterprises deploying agents in healthcare or finance must carefully configure memory scopes to avoid inadvertent persistence of sensitive data.
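As a rough illustration of what configuring memory scopes and retention might involve, the sketch below implements a small in-process store that refuses writes outside an allow-list of scopes and expires entries after a fixed retention period. `ScopedMemory`, `MemoryEntry`, and their fields are hypothetical; the article does not describe the SDK's actual memory interface, and encryption and audit logging are out of scope here.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional, Set


@dataclass
class MemoryEntry:
    value: str
    scope: str
    expires_at: float


@dataclass
class ScopedMemory:
    """Toy memory store with an allow-list of scopes and a retention window."""

    allowed_scopes: Set[str]
    retention_s: float
    _entries: Dict[str, MemoryEntry] = field(default_factory=dict)

    def remember(self, key: str, value: str, scope: str, now: Optional[float] = None) -> bool:
        """Store a value; return False if the scope is not allowed to persist."""
        if scope not in self.allowed_scopes:
            return False
        now = time.time() if now is None else now
        self._entries[key] = MemoryEntry(value, scope, now + self.retention_s)
        return True

    def recall(self, key: str, now: Optional[float] = None) -> Optional[str]:
        """Return a stored value, deleting it first if retention has lapsed."""
        now = time.time() if now is None else now
        entry = self._entries.get(key)
        if entry is None:
            return None
        if now >= entry.expires_at:
            del self._entries[key]
            return None
        return entry.value


memory = ScopedMemory(allowed_scopes={"preferences"}, retention_s=60)
stored_pref = memory.remember("theme", "dark", scope="preferences", now=0)
stored_health = memory.remember("diagnosis", "redacted", scope="health", now=0)
print(stored_pref, stored_health)      # True False
print(memory.recall("theme", now=30))  # dark
print(memory.recall("theme", now=61))  # None
```

The explicit `now` argument exists only to make the expiry behavior easy to demonstrate; callers would normally omit it and rely on the system clock.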

The Takeaway: A Pragmatic Step Toward True Agency

GPT-5.5 does not represent artificial general intelligence, but it is the most convincing step yet toward AI systems that can operate with minimal human supervision in well-defined domains. Its strength lies not in raw parameter count, but in the thoughtful integration of reasoning, tool use, and memory—features that, when combined, enable genuine task automation.

For developers, the message is clear: the era of prompt-as-programming is fading. The future belongs to agents that plan, act, and learn, and OpenAI, for now, holds the most advanced toolkit to build them. Whether that advantage translates into lasting market dominance will depend on how openly the company chooses to share it.