OpenAI has launched GPT-5.5, its most capable model to date, leveraging a massive 10-gigawatt computing capacity milestone to outpace rival Anthropic. The release focuses on agentic workflows and advanced coding, signaling a strategic shift toward enterprise-grade autonomy and a deeper reliance on Nvidia (NASDAQ: NVDA) infrastructure.

The release of GPT-5.5, codenamed Spud, is not merely a software update; it’s a demonstration of raw industrial power. While the AI sector has been plagued by “bubble” narratives, OpenAI’s ability to scale its compute footprint suggests that the barrier to entry for “frontier” models is no longer just algorithmic brilliance, but the sheer volume of electricity and silicon a company can command. By securing 10 gigawatts of capacity ahead of schedule, OpenAI is attempting to commoditize intelligence through scale, forcing competitors like Anthropic to either find more efficient architectures or secure equally massive capital infusions.

The Bottom Line

- Infrastructure Dominance: OpenAI’s 10-gigawatt capacity milestone creates a significant “compute moat,” making it harder for smaller labs to compete on model scale.

- Capex Escalation: The race is driving unprecedented spending, with Microsoft (NASDAQ: MSFT) raising 2026 capital expenditure guidance to $190 billion to support these deployments.

- Enterprise Pivot: GPT-5.5 is positioned as an “agentic” engine, moving beyond chatbots toward autonomous tools that execute complex professional workflows.

The Compute Moat: Scaling Beyond the Algorithm

For years, the debate in Silicon Valley centered on whether “scaling laws” would hit a plateau. The arrival of GPT-5.5 suggests the opposite. OpenAI is no longer just optimizing code; it is building a physical empire. The company’s “Stargate” initiative and its recent achievement of 10 gigawatts of computing capacity indicate a shift toward vertical integration of the AI stack.

The financial filings show what that expansion rests on. This level of scaling requires a symbiotic, almost dependent, relationship with Nvidia (NASDAQ: NVDA). GPT-5.5 runs on Nvidia GB200 NVL72 rack-scale systems, and the financial ripple effects are evident in Nvidia’s recent filings. For the fourth quarter ended January 25, 2026, Nvidia reported record quarterly revenue of $68.1 billion, with Data Center revenue alone hitting $62.3 billion.

Here is the math on the infrastructure war:

| Metric | OpenAI / Microsoft Ecosystem | Market Context (FY2026) |
|---|---|---|
| OpenAI Compute Capacity | 10 Gigawatts | Reached ahead of target |
| Microsoft 2026 CapEx | $190 Billion | Includes $25B increase for components |
| Nvidia Q4 Revenue | $68.1 Billion | Up 73% year-over-year |
| Model Focus | Agentic / Coding (GPT-5.5) | Shift from Chat to Execution |
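
The figures above imply a few ratios that are easy to check. The following is a minimal sketch of that arithmetic in Python, using only the numbers reported in this article. The variable and function names are illustrative, and the prior-year revenue figure is an inference from the stated 73% growth rate, not a reported number.

```python
# Figures as reported in this article, in billions of USD.
NVIDIA_Q4_REVENUE = 68.1        # record quarterly revenue
NVIDIA_Q4_DATA_CENTER = 62.3    # Data Center segment revenue
NVIDIA_YOY_GROWTH = 0.73        # "up 73% year-over-year"
MSFT_CAPEX_2026 = 190.0         # raised 2026 CapEx guidance
MSFT_COMPONENT_INCREASE = 25.0  # portion attributed to component costs


def share(part: float, whole: float) -> float:
    """Return part as a fraction of whole."""
    return part / whole


def implied_prior(current: float, growth: float) -> float:
    """Back out the prior-period value implied by a growth rate."""
    return current / (1.0 + growth)


# How much of Nvidia's quarter came from Data Center.
data_center_share = share(NVIDIA_Q4_DATA_CENTER, NVIDIA_Q4_REVENUE)

# Revenue one year earlier, assuming the 73% figure applies to total revenue.
prior_year_q4 = implied_prior(NVIDIA_Q4_REVENUE, NVIDIA_YOY_GROWTH)

# How much of Microsoft's guidance is the component-cost increase.
component_share = share(MSFT_COMPONENT_INCREASE, MSFT_CAPEX_2026)

print(f"Data Center share of Nvidia Q4 revenue: {data_center_share:.1%}")
print(f"Implied prior-year Q4 revenue: ${prior_year_q4:.1f}B")
print(f"Component increase as share of Microsoft CapEx: {component_share:.1%}")
```

On these inputs, Data Center accounts for roughly 91.5% of Nvidia's quarterly revenue, the implied year-ago quarter is about $39.4 billion, and the component increase is about 13.2% of Microsoft's guidance.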

The Anthropic Friction and the Oligopoly Shift

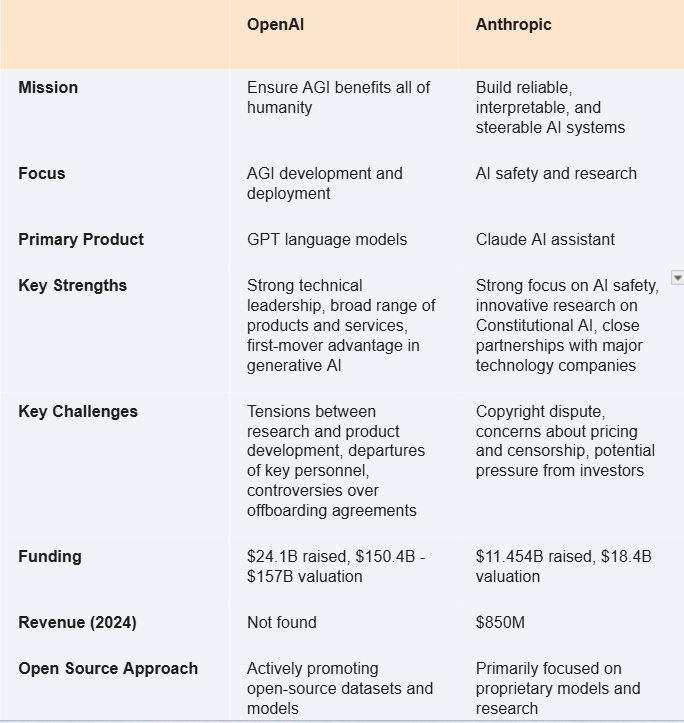

The tension between OpenAI and Anthropic has evolved from a philosophical divide over “AI safety” to a brutal war of attrition over hardware. Sam Altman’s suggestion that GPT-5.5 would be released more widely than Anthropic’s latest offerings points to confidence in distribution and compute availability. While Anthropic has secured significant funding, including a reported $30 billion round at a $380 billion valuation, it has historically lagged in the raw infrastructure race.

Industry analysts suggest an oligopoly is forming. As the cost of training a frontier model moves from millions to billions of dollars, the “startup” phase of AI is ending. We are entering the era of the Hyperscalers. Bloomberg reporting indicates that OpenAI’s aggressive push to secure capacity has forced rivals to play catch-up, often signing compute deals six months behind the market leader.

“This is a new class of intelligence. It’s a massive step towards more agentic AI,” said Sam Altman, CEO of OpenAI.

Market Implications: The “Agentic” Economy

The strategic pivot in GPT-5.5 is the move toward “agentic” behavior—models that don’t just answer questions but perform tasks across different tools. This has immediate implications for the software-as-a-service (SaaS) economy. If an AI agent can debug code, conduct research, and manage data analysis autonomously, the value proposition of mid-tier enterprise software begins to erode.

This shift is why Microsoft (NASDAQ: MSFT) is pouring $190 billion into capital expenditures. The company isn’t just buying GPUs; it is buying the ability to host the world’s autonomous workforce. However, this spending comes with risks. CFO Amy Hood has noted the impact of rising component costs, specifically in memory and storage, which added $25 billion to the company’s 2026 budget.

The broader economic impact is a massive transfer of wealth toward the “physical layer” of AI. While the application layer is crowded, the infrastructure layer—power, cooling, and silicon—is where the actual pricing power resides. According to Reuters analysis of AI trends, the bottleneck has shifted from “how do we build the model” to “how do we power the data center.”

The Trajectory: Compute as the New Currency

As we move further into 2026, the metric of success for AI labs will not be “parameter count,” but “gigawatts secured.” OpenAI’s move to release GPT-5.5 widely is a calculated play to capture the enterprise market before Anthropic can scale its own infrastructure.

For investors, the signal is clear: the “AI bubble” may be a misnomer if the revenue is being captured by the infrastructure providers. As long as Nvidia (NASDAQ: NVDA) continues to post record-breaking data center growth and Microsoft (NASDAQ: MSFT) continues to raise its CapEx, the demand for compute remains the primary driver of the tech economy. The winner of the AI race will not be the company with the best prompt, but the company with the most stable power grid and the most H100s/B200s in the rack.

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial advice.