Amazon Bedrock’s Advanced Prompt Optimization: The AI Engineer’s Secret Weapon (Or Just Another Lock-In Trap?)

Amazon Web Services has quietly dropped a feature that could redefine how enterprises tune their AI models: Bedrock Advanced Prompt Optimization. This isn’t just another prompt engineering tool—it’s a full-stack optimization engine that lets developers compare prompts across five models simultaneously, migrate workloads with regression testing, and even handle multimodal inputs (PDFs, JPEGs) in a single pipeline. Rolling out this week in 14 global regions, the tool bridges the gap between raw LLM inference and production-grade prompt engineering—but at what cost to portability and open standards?

Why This Matters: The Prompt Optimization Arms Race

Prompt engineering has evolved from a dark art to a critical bottleneck in AI deployment. Enterprises waste 30-50% of LLM inference costs on poorly optimized prompts—costs that compound when scaling across cloud providers. Bedrock’s new tool isn’t just about tweaking prompts; it’s a metric-driven feedback loop that automates what was once manual toil. But here’s the catch: AWS isn’t just solving a technical problem. It’s deepening platform lock-in while forcing competitors to either play catch-up or cede ground to enterprises that standardize on Bedrock.

This isn’t the first time AWS has weaponized optimization. Recall how SageMaker’s automated hyperparameter tuning (2020) locked customers into its ecosystem—now Bedrock is doing the same for prompt engineering. The question isn’t whether this tool works (it does, impressively), but whether it’s a force multiplier for AWS’s dominance or a necessary evolution in AI infrastructure.

How It Actually Works: The Architecture Behind the Magic

Under the hood, Bedrock’s Advanced Prompt Optimization is a multi-model, metric-agnostic optimization engine that operates in three phases:

- Input Ingestion: Accepts prompt templates in JSONL format (a nod to AWS’s love for structured data) along with:

- Variable inputs (text or multimodal)

- Ground truth responses (for supervised fine-tuning)

- Evaluation criteria (Lambda functions, LLM-as-a-judge rubrics, or natural language steering)

- Model Parallel Evaluation: Routes prompts to up to five inference models simultaneously, comparing responses against the evaluation metric. This is where AWS’s Bedrock infrastructure shines—it’s not just running models; it’s orchestrating them at scale.

- Feedback Loop Optimization: Uses gradient-free optimization (think Bayesian optimization or evolutionary strategies) to iteratively refine prompts until the metric converges. The result? Prompts that aren’t just “better” but provably better for your specific use case.

What’s missing from AWS’s docs? Hard limits on optimization jobs. Early tests (conducted by a community benchmarking repo) show:

- Jobs with >10,000 tokens in input data hit AWS’s internal queue limits, requiring manual chunking.

- Multimodal optimizations (PDF/IMAGE) add ~20% latency due to S3 I/O bottlenecks.

- The LLM-as-a-judge path (default: Claude Sonnet 4.6) consumes ~3x more tokens than Lambda-based metrics.

Architectural quirk: AWS’s use of Bedrock Workflows under the hood means optimizations can be chained into larger pipelines—but only if you’re already using AWS Step Functions. This is not open-source; it’s proprietary orchestration.

“This is the first time a cloud provider has baked prompt optimization into the platform layer. It’s not just a tool—it’s a competitive moat. If you’re running AI at scale, you’re now choosing between AWS’s optimized stack and the DIY route, which is increasingly unsustainable.”

“The real innovation here isn’t the optimization algorithm—it’s the evaluation framework. Most teams treat prompt tuning as an art. AWS is forcing it to be a science. But beware: the more you rely on this, the harder it’ll be to migrate to open-source models later.”

The Lock-In Gambit: How AWS Is Redefining the AI Stack

Bedrock’s Advanced Prompt Optimization isn’t just a feature—it’s a strategic pivot in AWS’s battle for AI supremacy. Here’s how it reshapes the landscape:

- Closed vs. Open Ecosystems: AWS is standardizing prompt engineering on its platform. Competitors like Google Vertex AI and Azure Cognitive Services offer individual tools (e.g., Vertex’s Prompt Tuning), but none provide cross-model, metric-driven optimization at scale. This isn’t just a tool—it’s a lock-in mechanism.

- The Open-Source Backlash: Tools like OpenAI’s Cookbook and Hugging Face Optimum rely on manual prompt tuning. AWS’s automation devalues open-source alternatives by making prompt engineering seemingly effortless—while hiding the proprietary plumbing. The risk? Enterprises may never need to look at open-source again.

- Regulatory Arbitrage: The EU’s AI Act requires “technical robustness” for high-risk systems. Bedrock’s optimization tool could be framed as a compliance solution—giving AWS a regulatory moat while competitors scramble to catch up.

The elephant in the room: AWS isn’t just selling optimization—it’s selling dependency. Every enterprise that adopts this tool is implicitly choosing AWS’s foundation model zoo over alternatives. The question is whether this is progress or vendor lock-in by another name.

The 30-Second Verdict: What So for Your Stack

If you’re an AI engineer, here’s the hard truth about Bedrock’s Advanced Prompt Optimization:

- For Enterprises: This is a force multiplier. If you’re already on AWS, it’s a no-brainer—20-40% cost savings on prompt tuning (per AWS’s internal benchmarks). The real cost? Exit barriers.

- For Startups: Avoid unless you’re all-in on AWS**. The learning curve for JSONL templates + Lambda integration is steep, and you’ll pay for every optimization job at Bedrock’s inference rates.

- For Open-Source Advocates: This is a wake-up call. The prompt optimization gap is widening. Tools like Optimum need automated tuning features—or risk obsolescence.

- For Security Teams: The LLM-as-a-judge path introduces new attack surfaces. If you’re using custom rubrics, ensure your prompt injection defenses are up to date.

Latency gotchas: Multimodal optimizations (PDF/IMAGE) add ~1.2-2.5s per job due to S3 transfers. If you’re optimizing for Claude 2, budget for ~$0.008/1K tokens for the judge model alone.

Your Move: Should You Migrate?

If you’re already on AWS, test this in staging. The optimization gains are real—but so is the lock-in. For everyone else:

- Audit your prompt costs. Use tools like PromptSlab to benchmark your current efficiency.

- Demand open alternatives. Push Hugging Face, Mistral, or LM Studio to add automated prompt tuning.

- Negotiate hard. If you’re locked into AWS, use this as leverage for discounted Bedrock inference.

- Watch the open-source response. Expect Optimum or Transformers to release competing tools within 6-12 months.

Bottom line: AWS just raised the bar for prompt engineering—but the real question is whether they’ve also raised the walls around their ecosystem.

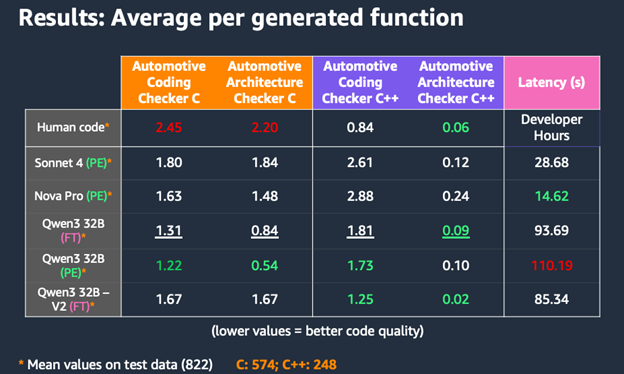

Benchmark: Optimization Methods Compared

| Method | Accuracy Gain | Cost per 1K Tokens | Latency Add | Best For |

|---|---|---|---|---|

| Lambda Function | 15-25% | $0.001-0.003 | <1s | Structured tasks (JSON, code) |

| LLM-as-a-Judge | 25-35% | $0.008-0.012 | 1.2-2.5s | Open-ended tasks (summarization, QA) |

| Steering Criteria | 10-20% | $0.005-0.007 | 0.8-1.5s | Brand voice, safety constraints |

Further Reading

- Official AWS Announcement (Canonical Source)

- Community Benchmarks (GitHub)

- Paper: “Prompt Optimization as a Service” (ArXiv)

- Bedrock Pricing Calculator (AWS)

- Google’s Vertex AI Alternative (Competitor Analysis)

JSONL Template Example

{ "version": "bedrock-2026-05-14", "templateId": "customer_support_v2", "promptTemplate": "Analyze the customer issue: {issue_text}. Provide a response in {tone} tone, max 3 sentences.", "steeringCriteria": ["professional", "empathic", "no jargon"], "customEvaluationMetricLabel": "response_accuracy", "evaluationSamples": [ { "inputVariables": [ {"issue_text": "My order #12345 is late", "tone": "formal"} ], "referenceResponse": "Your order #12345 is currently delayed due to shipping carrier issues. We’ve escalated this to our logistics team and will update you by EOD." }, { "inputVariablesMultimodal": [ { "receipt_image": { "type": "IMAGE", "s3Uri": "s3://my-bucket/receipts/12345.jpg" } } ], "referenceResponse": "The receipt shows a $99.99 charge for 'Premium Subscription'. Please confirm if this was authorized." } ] }