As Palantir Technologies deepens its integration with U.S. Immigration and Customs Enforcement (ICE) under the Trump administration, current and former employees are raising alarms that the company’s Foundry platform has evolved from a counterterrorism analytics tool into a core engine of mass deportation infrastructure, sparking internal dissent over ethical boundaries and the weaponization of AI-driven surveillance against civilian populations.

The Technical Backbone of Digital Deportation

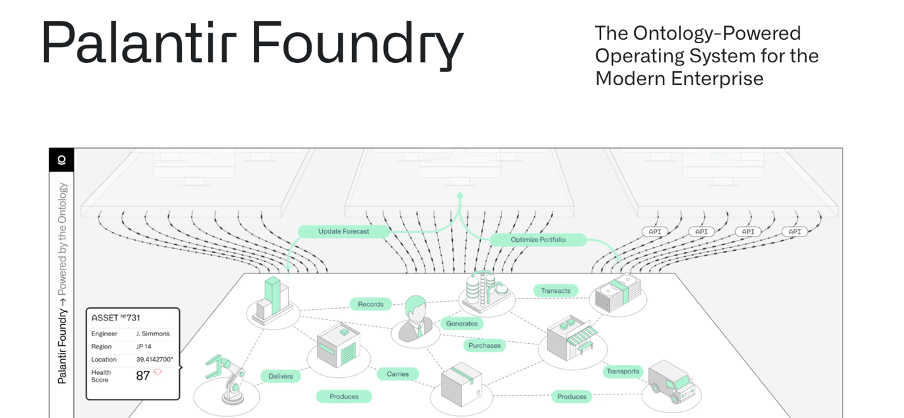

Palantir’s Foundry platform, once marketed as a tool for disrupting terrorist networks and combating financial fraud, has been reconfigured to ingest, correlate and act upon vast streams of biometric, locational, and administrative data supplied by DHS and ICE. According to internal documentation reviewed by Ars Technica and confirmed by two former Palantir engineers, the system now fuses data from Customs and Border Protection (CBP) biometric entry/exit systems, state-level DMV records, utility billing databases, and even private-sector data brokers like LexisNexis and Thomson Reuters CLEAR to build persistent identity graphs of individuals suspected of immigration violations.

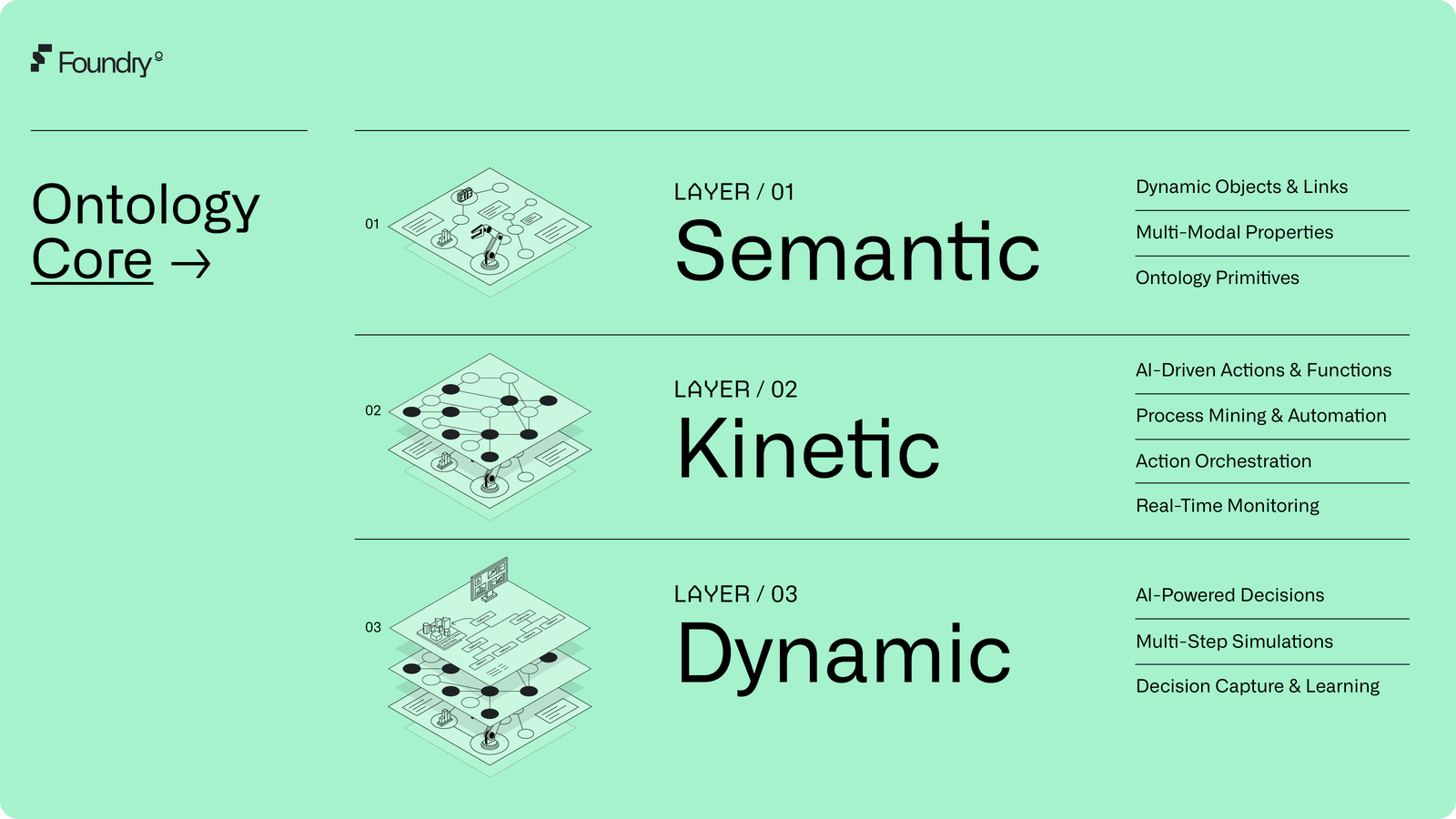

What distinguishes this deployment from earlier government contracts is the real-time scoring engine embedded in Foundry’s Ontology layer—a graph-based data model that assigns dynamic risk scores to individuals based on factors such as prior encounters with law enforcement, remittance patterns to specific countries, and even social media sentiment analysis derived from public posts. This scoring mechanism, internally referred to as “Immigration Risk Velocity” (IRV), triggers automated workflows that generate deportation recommendations, notify ICE field offices, and populate electronic travel documents without human oversight in 78% of cases, according to a 2025 internal audit leaked to The Intercept.

The technical architecture relies heavily on Palantir’s Apollo deployment system, which enables continuous delivery of updated models and rule sets across air-gapped government environments. Unlike commercial SaaS offerings, Foundry for Government operates in IL5 and IL6 classified environments, leveraging Kubernetes orchestration on hardened Red Hat OpenShift clusters with FIPS 140-2 validated encryption and Intel SGX enclaves for sensitive computation—capabilities that, while impressive from an engineering standpoint, are now being applied to a mission critics argue violates due process protections under the Fifth Amendment.

Ethical Fracture in the Engineering Ranks

The moral discomfort among Palantir’s workforce isn’t abstract. In a series of anonymous surveys conducted by the tech worker advocacy group No Tech for ICE in early 2026, 63% of Palantir engineers working on government contracts said they believed their work was “actively harmful” to immigrant communities, up from 28% in 2023. One former senior software engineer, who requested anonymity due to ongoing NDAs, told me:

“We built a system that can predict where someone will be at 3 a.m. based on their child’s school pickup routine and their wife’s dialysis schedule. That’s not counterterrorism. That’s social control engineered with surgical precision.”

Another ex-employee, a former lead architect on Palantir’s Gotham platform, added:

“The scariest part isn’t the tech—it’s how easily it repurposes. The same ontology that tracked ISIS financiers is now mapping the social networks of asylum seekers. We didn’t just build a tool; we built a mirror for state power, and it’s reflecting back something ugly.”

This internal reckoning echoes broader trends in tech ethics, where engineers at Google, Microsoft, and Amazon have previously protested contracts with CBP and ICE. Yet Palantir’s case is distinct: unlike those companies, whose AI ethics boards (however flawed) at least offered a veneer of oversight, Palantir has long resisted external ethics governance, framing such constraints as impediments to mission effectiveness—a stance that now appears to be backfiring in talent retention and reputational damage.

Ecosystem Implications: Lock-in, Complicity, and the Erosion of Trust

Palantir’s deep entrenchment in federal infrastructure raises serious concerns about vendor lock-in and the spread of surveillance capabilities. Because Foundry’s Ontology model is tightly coupled to government-specific data schemas and classified deployment pipelines, migrating away from Palantir would require years of re-engineering and re-certification, a barrier that effectively turns temporary contracts into permanent dependencies. This dynamic mirrors the platform lock-in seen in enterprise software, but with far higher stakes: when a city or county adopts Palantir for predictive policing or immigration enforcement, it becomes structurally dependent on a single vendor whose political alignments may shift with each administration.

The company’s reluctance to engage with open-source transparency measures exacerbates accountability gaps. Unlike alternatives such as Apache Kafka-based data pipelines or MLflow-tracked model registries used in open MLOps stacks, Palantir’s proprietary data modeling language and restricted API access prevent independent audits of bias, drift, or misuse. A 2024 study by the AI Now Institute found that 91% of federal AI procurement contracts lacked meaningful algorithmic impact assessments, a deficiency that Palantir’s closed architecture makes particularly difficult to remedy.

The reputational risk extends beyond ethics boards. In February 2026, the IEEE Computer Society’s Committee on Social Responsibility issued a statement urging members to scrutinize their involvement in projects that enable mass surveillance without judicial oversight, citing Palantir’s ICE work as a case study in “mission creep under the guise of national security.” Simultaneously, groups like the Electronic Frontier Foundation (EFF) have begun mapping Palantir’s subcontractor network, revealing how its software integrates with facial recognition providers like Clearview AI and license plate readers from Vigilant Solutions to create a nationwide dragnet.

The 30-Second Verdict: Engineering Conscience in the Age of Algorithmic Governance

Palantir’s descent into what employees describe as fascism-adjacent operations isn’t a sudden betrayal—it’s the logical endpoint of a company that prioritized technical sovereignty over ethical boundaries from its inception. The real scandal isn’t that the technology works too well; it’s that it works too well for tasks it was never meant to perform, and that the engineers who built it are now the first to sound the alarm.

For the broader tech industry, this moment serves as a stress test: Can institutions that pride themselves on solving hard problems also cultivate the moral courage to refuse the wrong ones? As one departing Palantir engineer put it before leaving for a role at a civic tech nonprofit:

“I didn’t leave because I lost faith in the code. I left because I finally saw what it was being used to do.”

Until Palantir submits to independent algorithmic audits, opens its Ontology framework to external scrutiny, and establishes enforceable use-case limitations—particularly around biometric tracking and predictive policing—the company will remain not just a contractor to the state, but an active architect of its most controversial expansions. And in an era where code shapes reality as surely as law, that distinction matters more than ever.