Google has signed a classified agreement granting the US Department of Defense (DoD) full access to Gemini AI for any lawful government purpose. The deal bypasses typical corporate restrictions on AI use, signaling a strategic pivot back into defense contracting amid intensifying global AI competition.

This is the ghost of Project Maven (the Pentagon drone-imagery contract Google declined to renew in 2018 after employee protests) returning to haunt Mountain View, but this time the corporate guardrails have been dismantled. For years, Google danced a delicate tango between its “Don’t be evil” heritage and the gravitational pull of the military-industrial complex. That dance is over. By granting the Pentagon “full access” with minimal oversight, Google isn’t just selling a tool; it’s integrating its core intelligence layer into the machinery of national security.

The implications are massive.

To understand why the DoD wants Gemini specifically, you have to look past the chatbot interface and into the architecture. We aren’t talking about a simple API wrapper. The Pentagon is eyeing Gemini’s massive context window, which in its most advanced iterations handles up to two million tokens. In plain English: the ability to ingest thousands of pages of intelligence reports, hours of drone footage, and sprawling satellite telemetry in a single prompt without the model “forgetting” the beginning of the data set. This is a major leap over earlier generations of large language models, which, like Gemini, were built on the Transformer architecture but had context windows measured in thousands of tokens and simply could not see dependencies that stretched beyond that limit.
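To put “thousands of pages” in perspective, the back-of-the-envelope sketch below converts a context window into pages of plain text. The page and token ratios are illustrative rules of thumb we are assuming (roughly 500 words per page, roughly 1.3 tokens per English word), not published Gemini figures, and images, audio, and video are tokenized differently.

```python
# Back-of-the-envelope: how many pages of plain text fit in one context window?
# ASSUMPTIONS (illustrative rules of thumb, not Gemini-specific figures):
#   - about 500 words per single-spaced page
#   - about 1.3 tokens per English word (varies by tokenizer and language)
WORDS_PER_PAGE = 500
TOKENS_PER_WORD = 1.3


def pages_that_fit(context_tokens: int,
                   words_per_page: int = WORDS_PER_PAGE,
                   tokens_per_word: float = TOKENS_PER_WORD) -> int:
    """Return the number of whole pages of text that fit in the window."""
    tokens_per_page = words_per_page * tokens_per_word
    return int(context_tokens // tokens_per_page)


if __name__ == "__main__":
    # Window sizes chosen for comparison: older few-thousand-token models vs. million-token windows.
    for window in (8_000, 128_000, 1_000_000, 2_000_000):
        print(f"{window:>9,} tokens ~ {pages_that_fit(window):>5,} pages")
```

Under these assumptions, a two-million-token window holds on the order of 3,000 pages, while an 8,000-token window holds about a dozen; change the two constants to match your own documents and tokenizer.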

The Compute Moat and the Multimodal Edge

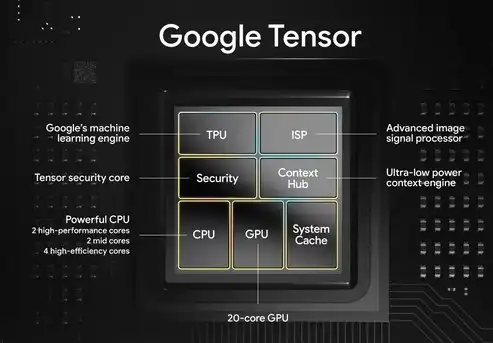

The DoD isn’t just buying a model; it is buying into Google’s vertical integration. While rivals largely rely on third-party silicon, Google’s Tensor Processing Units (TPUs) provide a specialized hardware acceleration layer that reduces inference latency, the time it takes for the AI to “think” and respond. In a tactical environment, a three-second delay in processing a target identification is an eternity.

Gemini is natively multimodal. It doesn’t just translate text to image; it reasons across both at once. Imagine an analyst feeding the system a raw SIGINT (signals intelligence) transcript and a thermal infrared feed of a coastline. Gemini can cross-reference cues in the intercepted communications with anomalies in the footage in real time. This avoids the “translation tax” usually paid when data must be handed off between separate vision and language models.

It is an efficiency play of the highest order.

The 30-Second Verdict: Why This Changes the Game

- Zero-Veto Power: Unlike previous contracts, this one gives Google no “kill switch” if it dislikes how the AI is used, provided the use is “lawful.”

- Data Sovereignty: The DoD likely demands “air-gapped” instances of Gemini, meaning the model runs on government servers physically isolated from the public internet and the commercial cloud.

- Market Lock-in: This creates a massive barrier for open-source alternatives, as the DoD’s workflows will now be optimized for Gemini’s specific tokenization and prompting logic.

The Geopolitical Chessboard: Google vs. Palantir and Azure

This move is a direct shot across the bow of Microsoft and Palantir. For years, Microsoft’s Azure Government has been the gold standard for secure cloud deployments. Palantir, meanwhile, has built its entire brand on being the “software for the warfighter.” By entering the fray with a frontier model like Gemini, Google is attempting to leapfrog the middleware layer and provide the primary intelligence engine.

We are seeing the emergence of “Sovereign AI.” Governments no longer seek to rent intelligence; they want to own the weights of the models they use. While the current deal provides access, the inevitable next step is the fine-tuning of Gemini on classified datasets—creating a version of the AI that knows things the public version cannot even conceive.

“The integration of frontier LLMs into defense frameworks isn’t about automation; it’s about cognitive dominance. The side that can synthesize disparate data streams faster makes the decision that wins the engagement.”

This sentiment, echoed by various cybersecurity analysts and defense strategists, highlights the shift from “AI as a tool” to “AI as a strategic asset.” When you combine Gemini’s reasoning capabilities with the IEEE standards for autonomous systems, you get a framework for decision-support that operates at speeds human analysts cannot match.

Internal Fracture and the Ethics of the “Black Box”

Inside Google, the reaction is predictably volatile. Hundreds of employees are sounding the alarm, fearing that the “lawful government purpose” clause is a loophole wide enough to drive a drone through. The core of the anxiety lies in the “Black Box” problem: the inability to fully explain why a deep-learning model reached a specific conclusion. If an AI suggests a strike based on a multimodal analysis and that analysis is flawed, who is accountable? The engineer? The general? The model?

The tension here is between the academic idealism of Silicon Valley and the cold reality of the “Chip Wars.” Google cannot afford to be the only frontier AI lab not playing in the defense sandbox. If they opt out, they lose not only the revenue but the critical feedback loop that comes from the most demanding users on earth.

The cost of morality is often a loss of market share.

The Technical Trade-off: Accuracy vs. Speed

To make Gemini viable for the DoD, Google likely had to implement aggressive quantization: reducing the numerical precision of the model’s weights (for example, from 16-bit to 8-bit or 4-bit values) so it can run on edge hardware, such as ruggedized laptops in the field, without a massive server farm. This creates a precarious balance: every bit shaved off the weights cuts memory and speeds up inference, but adds rounding error that can push “hallucination rates” up.
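The trade-off is easier to see in code. Below is a minimal sketch of symmetric, per-tensor int8 quantization, the generic textbook technique; it is not Google’s method, since Gemini’s actual precision and deployment format are not public. The sample weights and the 70-billion-parameter model size are hypothetical values chosen only for illustration.

```python
# Minimal sketch of symmetric, per-tensor int8 quantization (generic technique,
# NOT Google's method; Gemini's real weights and formats are not public).


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats; each value is off by at most scale / 2."""
    return [v * scale for v in q]


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Storage for the weights alone (ignores activations and KV cache)."""
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    weights = [0.42, -1.37, 0.003, 0.91, -0.58]  # hypothetical sample weights
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 codes:", q)
    print(f"worst-case rounding error: {worst:.5f} (bound: {scale / 2:.5f})")

    # Hypothetical 70-billion-parameter model, for illustration only.
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gb(70e9, bits):.0f} GB")
```

Halving the bits halves the weight memory, which is what makes a laptop-class deployment plausible at all, but the rounding error grows as the integer grid gets coarser. Production schemes typically use finer-grained scales (per channel or per block) to keep that error in check.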

| Metric | Public Gemini (Cloud) | Defense Gemini (Edge/Air-gapped) | Strategic Impact |
|---|---|---|---|
| Latency | Variable (Network dependent) | Ultra-Low (Local TPU) | Real-time tactical response |
| Context Window | Up to 2M Tokens | Optimized/Compressed | Faster synthesis of local intel |
| Privacy | Standard Encryption | End-to-End / Air-gapped | Prevention of adversarial leakage |
| Oversight | Corporate Safety Filters | Government-defined Parameters | Removal of “refusal” triggers |

By removing the standard safety filters (those annoying “I can’t help with that” responses we see in the consumer version), the DoD gets a raw, unfiltered reasoning engine. This is essential for military utility but terrifying from a safety perspective. We are essentially talking about a “jailbroken” version of the world’s most powerful AI, sanctioned by the state.

As we move further into 2026, the line between “Big Tech” and “Defense Contractor” has effectively vanished. Google is no longer just a search engine or an AI lab; it is now a critical component of US defense infrastructure. The “Don’t be evil” era didn’t just end; it was rewritten in Python and deployed to a secure server in Northern Virginia.

For those tracking the open-source AI movement, this is a warning. The most powerful models are moving behind classified curtains, leaving the public to play with the leftovers while the real intelligence evolution happens in the dark.