A rare comet is currently visible across New Zealand’s skies, offering a once-in-170,000-year viewing window. Astronomers and hobbyists are leveraging high-sensitivity CMOS sensors and automated plate-solving software to track the object before it exits the inner solar system, marking a peak moment for citizen science and deep-sky imaging.

For the casual observer, this is a bucket-list celestial event. For those of us obsessed with the stack, it’s a live-fire stress test for the current state of astronomical imaging and data pipelines. We aren’t just looking at a ball of ice and dust; we are witnessing the intersection of high-quantum-efficiency hardware and the democratization of orbital mechanics.

The comet’s brief residency in Aotearoa’s night sky is a reminder that the window for capture is brutally short. Once it vanishes, the orbital period puts the next viewing opportunity well beyond the horizon of human civilization as we know it. In the tech world, we call this a “hard deadline.”

The Silicon Shift: Why CMOS is Eating the Night

A decade ago, the gold standard for capturing a faint comet was the CCD (Charge-Coupled Device). CCDs offered superior linearity and lower noise, but they were slow, expensive, and required cumbersome cooling systems to prevent thermal noise from obliterating the signal. Today, the shift to BSI (back-side illuminated) CMOS sensors has fundamentally changed the game for the amateur astronomer in New Zealand.

Modern CMOS sensors utilize a design where the wiring is placed behind the photodiode layer, maximizing the surface area available to capture photons. This increase in quantum efficiency means that we can now capture the faint, diffuse coma of a comet with shorter exposure times and significantly less “hot pixel” interference.
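To make the quantum-efficiency argument concrete, here is a minimal sketch of the standard per-pixel SNR budget for a stack of exposures. The function name `stack_snr` and every default rate in it are illustrative assumptions, not measurements of any real sensor or of this comet. Read noise here means the noise added each time a frame is read out.

```python
import math


def stack_snr(qe, read_noise_e, exposure_s, n_frames,
              source_photons_s=2.0, sky_photons_s=5.0, dark_e_s=0.05):
    """Per-pixel SNR of n_frames summed exposures.

    The default photon and dark-current rates are made-up placeholders
    chosen only to illustrate the trend.
    """
    total_s = exposure_s * n_frames
    signal = qe * source_photons_s * total_s   # detected comet electrons
    sky = qe * sky_photons_s * total_s         # detected sky-background electrons
    dark = dark_e_s * total_s                  # thermally generated electrons
    read = n_frames * read_noise_e ** 2        # read-noise variance, paid once per frame
    return signal / math.sqrt(signal + sky + dark + read)


# Same 5 minutes of total integration, split three different ways.
low_qe_stack = stack_snr(qe=0.5, read_noise_e=1.5, exposure_s=5, n_frames=60)
high_qe_stack = stack_snr(qe=0.8, read_noise_e=1.5, exposure_s=5, n_frames=60)
noisy_read_stack = stack_snr(qe=0.8, read_noise_e=8.0, exposure_s=5, n_frames=60)

print(f"QE 0.5, low read noise:  {low_qe_stack:.2f}")
print(f"QE 0.8, low read noise:  {high_qe_stack:.2f}")
print(f"QE 0.8, high read noise: {noisy_read_stack:.2f}")
```

Under these assumed numbers, raising the quantum efficiency lifts the SNR for the same total time, and a high per-frame read noise penalizes the many-short-frames strategy because that cost is paid once per readout.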

The technical win here is the reduction in read noise. When you are hunting for a faint comet against the sky background, the signal-to-noise ratio (SNR) is everything. With amplification integrated on the chip, each readout adds very little noise, which makes “lucky imaging” practical: take hundreds of short exposures, discard the frames blurred by atmospheric turbulence, and median-stack the rest.

It is a brute-force computational approach to optics.
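The select-then-stack idea can be sketched in a few lines. This is a toy version under stated assumptions: frames are small lists of lists that are already aligned on the target, and sharpness is scored with a simple gradient-energy metric, which is one common choice rather than the method of any particular stacking package. The names `sharpness` and `lucky_stack` are hypothetical.

```python
from statistics import median


def sharpness(frame):
    """Gradient energy: sum of squared differences between adjacent pixels.

    Blurred frames have smoother gradients and therefore score lower.
    """
    rows, cols = len(frame), len(frame[0])
    total = 0.0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:
                total += (frame[r][c + 1] - frame[r][c]) ** 2
            if r + 1 < rows:
                total += (frame[r + 1][c] - frame[r][c]) ** 2
    return total


def lucky_stack(frames, keep_fraction=0.5):
    """Keep the sharpest fraction of frames, then median-combine them per pixel."""
    ranked = sorted(frames, key=sharpness, reverse=True)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    kept = ranked[:n_keep]
    rows, cols = len(kept[0]), len(kept[0][0])
    return [[median(f[r][c] for f in kept) for c in range(cols)]
            for r in range(rows)]


# Toy data: a crisp point source versus a fully smeared frame.
sharp = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
blurred = [[1, 1, 1], [1, 1, 1], [1, 1, 1]]
stacked = lucky_stack([sharp, blurred, sharp, blurred], keep_fraction=0.5)
print(stacked)
```

Gradient energy also rewards noise, so a real pipeline would typically measure sharpness on a bright reference star rather than on the whole frame.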

The 30-Second Technical Verdict

- Hardware: Shift from CCD to BSI-CMOS has lowered the barrier for high-SNR deep-sky captures.

- Software: Plate solving has replaced manual star-hopping, allowing for sub-arcsecond targeting.

- Data: The event is being cross-referenced with MPC (Minor Planet Center) data in real time.

Plate Solving and the Death of the Star Map

If you see a photographer in Auckland or Christchurch perfectly centered on this comet, they aren’t using a paper map. They are using plate solving.

Plate solving is essentially a reverse-image search for the universe. The software takes a raw image, identifies the patterns of stars (centroids), and compares them against a known catalog of celestial coordinates. This process, often handled by Astrometry.net or integrated into mounts via ASCOM drivers, allows the telescope to know exactly where it is pointing with mathematical precision.
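Two building blocks of that search can be sketched briefly: finding a star's centroid, and reducing a group of stars to a signature that does not depend on where the camera points, how it is rotated, or its pixel scale. This is a simplified illustration with hypothetical function names. Production solvers use more robust geometric hashes and verification steps, so treat it as the idea rather than the algorithm any given tool runs.

```python
import math


def centroid(cutout, background=0.0):
    """Intensity-weighted centre (x, y) of a small image cutout around one star."""
    total = x_sum = y_sum = 0.0
    for y, row in enumerate(cutout):
        for x, value in enumerate(row):
            weight = max(value - background, 0.0)  # ignore pixels below background
            total += weight
            x_sum += weight * x
            y_sum += weight * y
    if total == 0.0:
        raise ValueError("no signal above background")
    return x_sum / total, y_sum / total


def triangle_signature(p1, p2, p3):
    """Two side-length ratios of a star triangle.

    The ratios are unchanged by translation, rotation, and uniform scaling,
    so the same three stars give the same signature in image and catalog.
    """
    sides = sorted([math.dist(p1, p2), math.dist(p2, p3), math.dist(p1, p3)])
    return sides[0] / sides[2], sides[1] / sides[2]


star = [[0, 0, 0], [0, 10, 10], [0, 0, 0]]
print(centroid(star))

in_image = triangle_signature((0, 0), (3, 0), (0, 4))
in_catalog = triangle_signature((10, 10), (10, 16), (2, 10))  # rotated, shifted, scaled x2
print(in_image, in_catalog)
```

Matching then becomes a lookup: signatures computed from the image are compared against signatures precomputed from the catalog, and agreeing candidates are verified against the remaining stars.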

The algorithm calculates the WCS (World Coordinate System) of the image, transforming pixel coordinates (x, y) into Right Ascension (RA) and Declination (Dec). For a fast-moving object like a comet, this requires constant updates to the tracking rate. Standard sidereal tracking, which follows the stars, isn’t enough. The mount must be programmed with the comet’s apparent rate of motion in RA and Dec, taken from its ephemeris, to prevent the object from streaking across the sensor.
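The pixel-to-sky step can be illustrated with the gnomonic (tangent-plane) projection that many WCS solutions use. The sketch below assumes the simplest possible case: no field rotation and no optical distortion. The parameter names echo the FITS keywords CRPIX, CRVAL, and CDELT, but the function `pixel_to_sky` itself is hypothetical and the numbers are arbitrary.

```python
import math


def pixel_to_sky(x, y, crpix, crval_deg, cdelt_deg):
    """Convert a pixel position to (RA, Dec) in degrees via a TAN projection.

    crpix     : reference pixel (x, y)
    crval_deg : sky position (RA, Dec) of the reference pixel, in degrees
    cdelt_deg : pixel scale (x, y) in degrees per pixel; x is usually negative
                because RA increases toward the left when north is up
    """
    # Offsets from the reference pixel on the tangent plane, in radians.
    xi = math.radians(cdelt_deg[0] * (x - crpix[0]))
    eta = math.radians(cdelt_deg[1] * (y - crpix[1]))

    ra0 = math.radians(crval_deg[0])
    dec0 = math.radians(crval_deg[1])

    # Standard gnomonic deprojection about the tangent point (ra0, dec0).
    denom = math.cos(dec0) - eta * math.sin(dec0)
    ra = ra0 + math.atan2(xi, denom)
    dec = math.atan2(math.sin(dec0) + eta * math.cos(dec0),
                     math.hypot(xi, denom))
    return math.degrees(ra) % 360.0, math.degrees(dec)


# Arbitrary example: 1 arcsecond per pixel, field centred at RA 150, Dec -45.
scale = 1.0 / 3600.0
centre = pixel_to_sky(512, 512, (512, 512), (150.0, -45.0), (-scale, scale))
one_up = pixel_to_sky(512, 513, (512, 512), (150.0, -45.0), (-scale, scale))
print(centre, one_up)
```

A full solution also carries a rotation matrix and distortion terms. The solver fits those from the matched stars, after which the same deprojection applies.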

“The integration of automated plate solving and precise ephemeris data has turned the telescope from a passive lens into a robotic instrument. We are no longer searching for objects; we are commanding the hardware to lock onto them,” said Dr. Elena Rossi, a computational astrophysicist.

The Macro-Scale: Rubin and the Era of Big Data Astronomy

While New Zealanders look up with binoculars, the broader scientific community is preparing for a paradigm shift in how we detect these objects. The Vera C. Rubin Observatory, which is central to the Legacy Survey of Space and Time (LSST), represents the “Big Tech” evolution of this process.

The Rubin Observatory isn’t just a telescope; it is a data factory. With a 3.2-gigapixel camera—the largest digital camera ever constructed—it will scan the entire visible sky every few nights. This creates a temporal map of the universe, allowing astronomers to detect “transients” (objects that move or change brightness) in near real-time.

The sheer volume of data is staggering. We are talking about terabytes of raw imagery per night that must be processed through an automated pipeline to identify potential comets or asteroids. This is where the “tech war” moves from optics to compute. The challenge is no longer just about the glass; it is about the open-source algorithms and GPU-accelerated pipelines required to filter signal from noise at a planetary scale.
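The core idea behind transient detection, difference imaging, fits in a short sketch: subtract a reference image of the same field from tonight's image and flag pixels that stand out from the residual noise. This is not the Rubin pipeline. It is a toy version that assumes the two frames are already aligned and matched in seeing and brightness, and the function name `detect_transients` and the threshold defaults are assumptions.

```python
from statistics import median


def detect_transients(new, reference, k=5.0, min_sigma=1e-9):
    """Return (row, col) of pixels where new - reference exceeds k robust sigmas."""
    diff = [[n - r for n, r in zip(new_row, ref_row)]
            for new_row, ref_row in zip(new, reference)]
    flat = [v for row in diff for v in row]

    # Robust noise estimate: median absolute deviation scaled to a Gaussian sigma.
    centre = median(flat)
    mad = median(abs(v - centre) for v in flat)
    sigma = max(1.4826 * mad, min_sigma)

    return [(r, c)
            for r, row in enumerate(diff)
            for c, v in enumerate(row)
            if v - centre > k * sigma]


# Toy 5x5 field: flat reference, small pattern noise, one new source at (2, 3).
reference = [[100] * 5 for _ in range(5)]
new = [[100 + ((r + c) % 3 - 1) for c in range(5)] for r in range(5)]
new[2][3] = 160
print(detect_transients(new, reference))
```

The median-based noise estimate is used so that the transient itself does not inflate the threshold. At survey scale the same per-pixel arithmetic is what makes the problem a natural fit for GPU acceleration.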

This comet is a precursor to that era. Every amateur photo uploaded to a forum today is a data point that validates the orbital models used by professional agencies.

The Citizen Science Pipeline

The most compelling part of this event isn’t the comet itself, but the ecosystem surrounding it. The flow of information follows a specific technical trajectory:

- Detection: Professional surveys or advanced amateurs spot a deviation in the background stars.

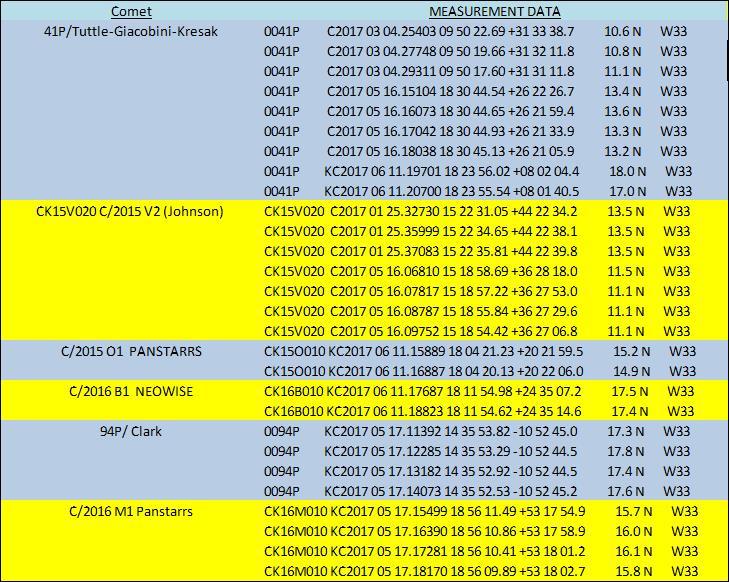

- Verification: Data is sent to the Minor Planet Center (MPC) to confirm the orbit.

- Dissemination: Ephemeris tables (predicted positions) are pushed to apps and telescope controllers.

- Capture: A global network of CMOS sensors captures the object from multiple angles, providing a stereoscopic view of its evolution.
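The dissemination-to-capture handoff can be sketched as follows: a controller receives an ephemeris table and needs a position and a tracking rate for the current moment. This is a minimal illustration using linear interpolation between two rows. The table values below are made up, the function name `interpolate_ephemeris` is hypothetical, and real controllers may use higher-order interpolation.

```python
import math
from bisect import bisect_right


def interpolate_ephemeris(table, t):
    """Interpolate (RA, Dec) at time t and return on-sky rates.

    table : rows of (time_hours, ra_deg, dec_deg), sorted by time
    Returns (ra_deg, dec_deg, ra_rate, dec_rate) with rates in arcsec/hour.
    """
    times = [row[0] for row in table]
    if not times[0] <= t <= times[-1]:
        raise ValueError("time outside ephemeris range")

    # Find the pair of rows that brackets t.
    i = min(max(bisect_right(times, t) - 1, 0), len(table) - 2)
    (t0, ra0, dec0), (t1, ra1, dec1) = table[i], table[i + 1]

    frac = (t - t0) / (t1 - t0)
    d_ra = (ra1 - ra0 + 180.0) % 360.0 - 180.0   # shortest way around the 0/360 wrap
    d_dec = dec1 - dec0

    ra = (ra0 + frac * d_ra) % 360.0
    dec = dec0 + frac * d_dec

    hours = t1 - t0
    # Multiply by cos(Dec) so the RA rate is an angular speed on the sky.
    ra_rate = d_ra * math.cos(math.radians(dec)) * 3600.0 / hours
    dec_rate = d_dec * 3600.0 / hours
    return ra, dec, ra_rate, dec_rate


# Made-up two-row table that crosses the RA = 0 boundary.
table = [(0.0, 359.9, -40.0), (1.0, 0.1, -40.5)]
ra, dec, ra_rate, dec_rate = interpolate_ephemeris(table, 0.5)
print(ra, dec, ra_rate, dec_rate)
```

These rates are what gets added on top of sidereal tracking so the comet stays fixed on the sensor while the background stars trail.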

This is a decentralized observation network. By leveraging the ubiquity of smartphones and affordable astrophotography gear, we have effectively turned the planet into a giant, distributed sensor array.

The comet will be gone in a week. The 170,000-year wait for its return is a cosmic blink, but the technological infrastructure we’ve built to track it is here to stay. We’ve moved past the era of the lonely astronomer with a sketchpad; we are now in the era of the algorithmic sky.

If you’re in New Zealand, get your gear out. Calibrate your dark frames, sync your mounts, and capture the data. In the world of high-stakes astronomy, the only thing worse than a blurry photo is a missed opportunity.