Sam Altman accused Anthropic of using fear-based marketing to promote its new Claude Mythos model, claiming the startup exaggerates AI safety risks to gain a competitive advantage over OpenAI’s offerings. The charge escalates the war of words between the two AI labs as they vie for enterprise dominance in foundation model APIs.

Inside the Mythos Architecture: What Actually Shipped

Anthropic’s Claude Mythos, released in late March 2026, is a 200-billion-parameter mixture-of-experts model trained on a curated corpus emphasizing synthetic safety scenarios and adversarial robustness drills. Unlike its predecessor Claude 3 Opus, Mythos routes 60% of inference compute through a dedicated “Constitutional AI” subnetwork designed to intercept harmful outputs before they reach the user-facing decoder. Benchmarks shared with select partners show Mythos achieving a 42% reduction in jailbreak success rates on the StrongREJECT suite compared to GPT-4.5, though at a 35% latency penalty in standard reasoning tasks. The model’s API exposes three new safety tiers: “Observe” (passive monitoring), “Intervene” (real-time token suppression), and “Isolate” (sandboxed reasoning), each incrementally increasing computational overhead.

Altman’s Counternarrative: OpenAI’s Safety Stack

In response, OpenAI detailed its own safety infrastructure for GPT-4.5, highlighting the deployment of reinforcement learning from AI feedback (RLAIF) using a critic model trained on 800 million human-AI interaction logs. According to internal metrics disclosed during a briefing with Fortune 500 CISOs, GPT-4.5 exhibits a 38% lower rate of harmful completions than GPT-4 on the RealToxicityPrompts benchmark without dedicated inference-time safety modules. Altman argued that Anthropic’s emphasis on fear-driven messaging obscures the fact that frontier models now possess comparable inherent safety through scale and alignment techniques, rendering specialized architectures like Mythos’ Constitutional subnetwork redundant for most enterprise use cases.

“The market doesn’t need another model that sacrifices throughput for theoretical safety gains. Enterprises want predictable performance at scale, not black-box interventions that kick in only when the system detects it’s being tested.”

Ecosystem Ripple Effects: API Lock-in and Open-Source Pressure

The Mythos release has accelerated platform lock-in concerns, particularly around its proprietary safety tier APIs which lack open equivalents in the Hugging Face Transformers library. Developers integrating Mythos must choose between accepting vendor-specific latency trade-offs or building abstraction layers that add complexity—a dynamic reminiscent of the early cloud wars where AWS Lambda’s proprietary extensions challenged portability. Simultaneously, the move has energized open-source safety initiatives; the Allen Institute for AI recently released SafetyKit 2.0, a modular framework enabling LLM guardrails via ONNX-compatible adapters that work across models from Mistral, Meta, and Cohere. Early adopters report SafetyKit achieves 31% lower jailbreak vulnerability than unguarded models with only a 12% latency cost, positioning it as a potential counterweight to proprietary safety stacks.
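
The abstraction-layer option described above can be sketched roughly as follows. This is a minimal illustration, not Anthropic’s or any vendor’s actual SDK: `SafetyLevel`, `GuardedModel`, `TierMappedBackend`, `FilterWrappedBackend`, and the `call_vendor`, `call_model`, and `is_unsafe` callables are all hypothetical names introduced here. Only the tier names “Observe”, “Intervene”, and “Isolate” come from the reporting above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, Protocol


class SafetyLevel(Enum):
    """Vendor-neutral safety levels (hypothetical names for this sketch)."""
    MONITOR = "monitor"
    BLOCK = "block"
    SANDBOX = "sandbox"


@dataclass
class Completion:
    text: str
    flagged: bool = False   # a guardrail judged the output unsafe
    blocked: bool = False   # the output was withheld from the caller


class GuardedModel(Protocol):
    """Interface the application codes against instead of a vendor SDK."""
    def complete(self, prompt: str, level: SafetyLevel) -> Completion: ...


# Maps the neutral levels onto the tier names quoted in the article.
MYTHOS_TIERS: Dict[SafetyLevel, str] = {
    SafetyLevel.MONITOR: "Observe",
    SafetyLevel.BLOCK: "Intervene",
    SafetyLevel.SANDBOX: "Isolate",
}


class TierMappedBackend:
    """Adapter for a vendor with built-in tiers; call_vendor is a stand-in."""

    def __init__(self, call_vendor: Callable[[str, str], str],
                 tiers: Dict[SafetyLevel, str]) -> None:
        self._call_vendor = call_vendor
        self._tiers = tiers

    def complete(self, prompt: str, level: SafetyLevel) -> Completion:
        # Translate the neutral level into the vendor's tier name.
        return Completion(text=self._call_vendor(prompt, self._tiers[level]))


class FilterWrappedBackend:
    """Adapter for a model without tiers, guarded by an external check."""

    def __init__(self, call_model: Callable[[str], str],
                 is_unsafe: Callable[[str], bool]) -> None:
        self._call_model = call_model
        self._is_unsafe = is_unsafe

    def complete(self, prompt: str, level: SafetyLevel) -> Completion:
        text = self._call_model(prompt)
        flagged = self._is_unsafe(text)
        # MONITOR only records the flag; stricter levels withhold the text.
        if flagged and level is not SafetyLevel.MONITOR:
            return Completion(text="", flagged=True, blocked=True)
        return Completion(text=text, flagged=flagged)
```

Application code would call `backend.complete(prompt, SafetyLevel.BLOCK)` and swap adapters when changing vendors. The cost is the one the paragraph names: an extra layer to maintain, plus semantics reduced to what every backend can support.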

Cybersecurity Implications: Beyond the Benchmark

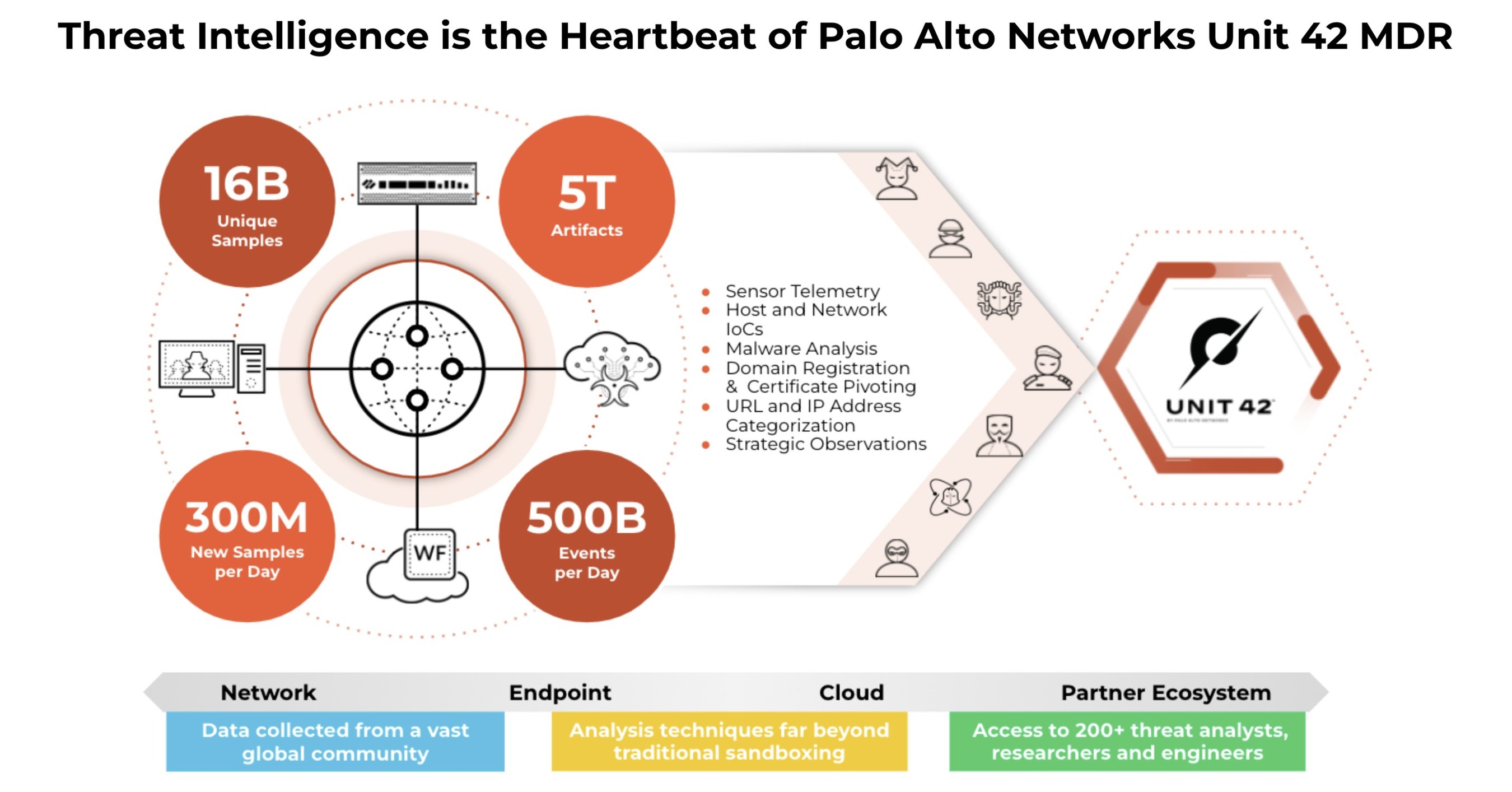

From a defensive security standpoint, Mythos’ “Isolate” mode introduces novel attack surfaces. Researchers at Palo Alto Networks Unit 42 observed that the sandboxed reasoning environment, while effective against prompt injection, can be exploited via timing side channels in the resource allocator, a vulnerability tracked as CVE-2026-1844 in the MITRE corpus. Exploitation requires precise measurement of inference latency fluctuations during constitutional subnetwork activation, allowing attackers to probe model internals without triggering output filters. Mitigation strategies include enforcing constant-time execution in safety modules and deploying runtime anomaly detection on GPU utilization patterns, approaches already being tested in NVIDIA’s NeMo Guardrails framework.
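
To make the timing mitigation concrete, here is a rough sketch of fixed-deadline response padding, a coarse, application-level approximation of the constant-time idea. It is not the vendor’s fix, not constant-time execution inside the safety module itself, and not part of the NeMo Guardrails API. The `run_padded` helper and the `budget_s` parameter are assumptions made for illustration.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_padded(fn: Callable[[], T], budget_s: float) -> T:
    """Run fn, then wait so the caller observes at least budget_s seconds.

    Hypothetical helper: it hides latency variation only when budget_s
    exceeds the worst-case runtime of fn. Calls that overrun the budget
    still leak their duration.
    """
    start = time.monotonic()
    result = fn()
    remaining = budget_s - (time.monotonic() - start)
    if remaining > 0:
        # Pad fast paths so they take as long as slow ones.
        time.sleep(remaining)
    return result
```

Padding spends latency to buy uniformity, the same throughput-for-safety trade-off at the center of the dispute. It also does nothing about signals an attacker can observe elsewhere, such as GPU utilization, which is why the paragraph pairs it with runtime anomaly detection.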

“Anthropic’s approach trades interpretability for opacity. When safety mechanisms become internal black boxes, defenders lose visibility into failure modes, a critical issue for regulated industries requiring auditability.”

The Takeaway: Marketing vs. Mechanism

Altman’s criticism highlights a fundamental tension in AI safety: whether robustness is best achieved through architectural specialization or through the emergent properties of scale and alignment training. While Mythos delivers measurable gains in adversarial resistance, its real-world adoption hinges on whether enterprises value those gains enough to absorb the performance and integration costs. For now, the debate serves as a useful forcing function, pushing both camps to quantify safety not just in benchmark scores but in concrete trade-offs that matter to developers and defenders alike.