Big Tech firms are doubling down on CSAM detection as the EU’s ePrivacy derogation expires, creating a legal vacuum. This clash pits end-to-end encryption (E2EE) against child safety mandates, forcing a shift toward controversial client-side scanning architectures to identify illegal content without breaking privacy protocols.

The expiry of the ePrivacy derogation isn’t just a bureaucratic hiccup; it is a systemic failure of European regulatory agility. For years, this legal carve-out allowed platforms to deploy technology to detect Child Sexual Abuse Material (CSAM) without running afoul of strict privacy laws. Now, as we hit April 2026, the clock has run out. The result is a dangerous paradox: the very tools designed to protect the most vulnerable are now legally precarious, while the bad actors they target operate with total impunity in the shadows of encrypted tunnels.

This is where the “safety vs. privacy” debate stops being a philosophical exercise and starts being an engineering problem.

The Perceptual Hashing Paradox

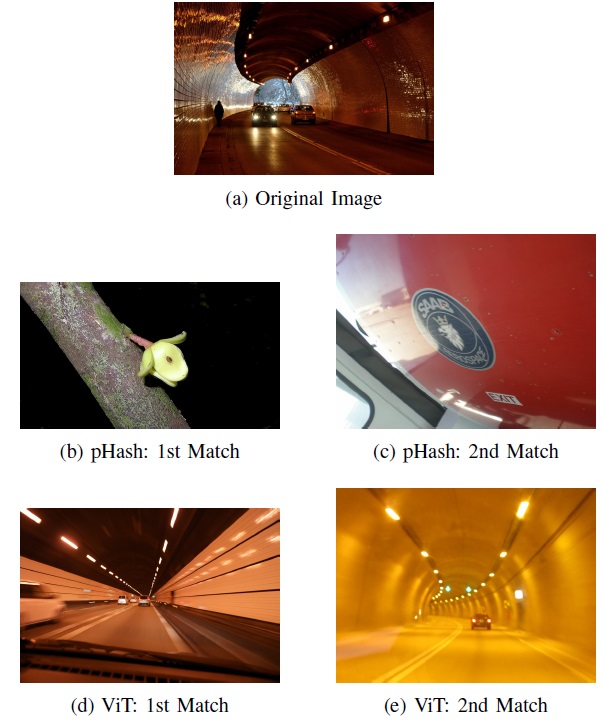

To understand why this is a technical nightmare, you have to understand how detection actually works. We aren’t talking about a human moderator scrolling through a feed. We are talking about Perceptual Hashing. Unlike a cryptographic hash (like SHA-256), where changing a single bit in a file completely alters the resulting hash, a perceptual hash creates a “fingerprint” based on the visual content of an image. If you resize a photo or change the brightness slightly, the pHash remains nearly identical.
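The contrast between the two hash families can be sketched in a few lines. This is a minimal illustration, not any platform's production algorithm: it uses an average hash (aHash), one of the simplest members of the perceptual hash family, rather than the DCT-based pHash, and the 8x8 grayscale grid and the helper names (`average_hash`, `hamming_distance`, `sha256_hex`) are assumptions made for the example.

```python
import hashlib


def average_hash(pixels):
    """Return a 64-bit average hash for an 8x8 grid of grayscale values (0-255).

    Each bit records whether a pixel is brighter than the image's mean,
    so the hash captures the coarse light/dark layout, not exact bytes.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a, b):
    """Count the bits that differ between two integer hashes."""
    return bin(a ^ b).count("1")


def sha256_hex(pixels):
    """Cryptographic hash of the raw pixel bytes, for comparison."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()


# Toy "image": a diagonal gradient (hypothetical data, values stay below 256).
original = [[x * 16 + y * 8 for x in range(8)] for y in range(8)]
# The same image with brightness raised by 10 on every pixel.
brighter = [[p + 10 for p in row] for row in original]

perceptual_diff = hamming_distance(average_hash(original), average_hash(brighter))
crypto_changed = sha256_hex(original) != sha256_hex(brighter)
```

Here `perceptual_diff` is 0 because a uniform brightness shift moves the mean by the same amount as every pixel, while `crypto_changed` is true because the underlying bytes differ. Real systems typically accept a match when the Hamming distance falls under some threshold rather than requiring equality.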

Platforms compare these hashes against massive databases of known CSAM. But there is a catch: in an end-to-end encrypted (E2EE) environment, the server never sees the image. It only sees an encrypted blob of noise. To detect CSAM without breaking the encryption, the industry is pushing toward Client-Side Scanning (CSS).

CSS moves the detection engine from the cloud to the edge. The scan happens on your device—leveraging the NPU (Neural Processing Unit) for low-latency inference—before the data is encrypted and sent. If a match is found, the “hit” is reported to the provider. It is a surgical strike approach to moderation, but it creates a massive security vulnerability.
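The ordering described here (scan first, encrypt second) can be sketched as follows. This is a toy model under stated assumptions, not how any messenger implements it: `scan_then_encrypt`, the on-device `blocklist`, the threshold of 5 bits, and the average-hash fingerprint are all hypothetical, and `placeholder_encrypt` is only a stand-in for a real E2EE layer.

```python
import os


def average_hash(pixels):
    """64-bit average hash of an 8x8 grayscale grid (a simple perceptual hash)."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a, b):
    """Count the bits that differ between two integer hashes."""
    return bin(a ^ b).count("1")


def placeholder_encrypt(plaintext):
    """Stand-in for the messenger's real E2EE layer (assumption for the demo):
    XOR with a random single-use key of the same length."""
    key = os.urandom(len(plaintext))
    return bytes(p ^ k for p, k in zip(plaintext, key))


def scan_then_encrypt(pixels, blocklist, threshold=5):
    """Fingerprint the image locally, compare it with the on-device list,
    and only then encrypt. Returns (ciphertext, matched)."""
    fingerprint = average_hash(pixels)
    matched = any(
        hamming_distance(fingerprint, known) <= threshold for known in blocklist
    )
    plaintext = bytes(p for row in pixels for p in row)
    return placeholder_encrypt(plaintext), matched


# Hypothetical data: one listed image, a brightened copy, and a checkerboard.
listed = [[x * 16 + y * 8 for x in range(8)] for y in range(8)]
edited_copy = [[p + 10 for p in row] for row in listed]
unrelated = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)] for y in range(8)]
blocklist = {average_hash(listed)}

ciphertext, hit = scan_then_encrypt(edited_copy, blocklist)
_, miss = scan_then_encrypt(unrelated, blocklist)
```

In this sketch `hit` is true for the edited copy and `miss` is false for the checkerboard, while the transport only ever carries ciphertext. Note that `scan_then_encrypt` has no notion of what the list represents: it flags whatever fingerprints it is handed.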

| Feature | Cryptographic Hashing (SHA-256) | Perceptual Hashing (pHash) | AI-Based Classification |
|---|---|---|---|
| Sensitivity | Exact match only; fragile. | Resilient to minor edits/resizing. | Detects conceptual themes. |
| Privacy Risk | Low (only matches known files). | Medium (can be used for fingerprinting). | High (requires semantic analysis). |
| Compute Load | Negligible. | Low (suitable for mobile NPUs). | High (requires significant RAM/GPU). |

Client-Side Scanning: The Privacy Trojan Horse

From a Silicon Valley insider’s perspective, CSS is a terrifying precedent. If you build a mechanism that can scan a user’s local gallery for CSAM before it is encrypted, you have effectively built a government backdoor. The only difference between a “child safety” filter and a “political dissident” filter is the hash list stored on the server.

The technical community is rightfully panicked. By implementing CSS, platforms are essentially introducing a “trusted” piece of malware into the secure enclave of the device. This undermines the core value proposition of E2EE, which is that the service provider cannot access the data, regardless of whether they are compelled by a court order or hacked by a state actor.

“The move toward client-side scanning is a fundamental betrayal of the encryption promise. Once the architecture for local inspection exists, the slope toward general surveillance is not just slippery—it’s a vertical drop.”

This sentiment is echoed across the open-source community. While proprietary giants might argue that their “secure silos” prevent abuse, the reality is that any API capable of reporting a hash match can be repurposed. If the EU fails to provide a legal framework that strictly limits the scope of these scans, we are looking at the end of true digital privacy in the EU.

The 30-Second Verdict for Enterprise IT

- The Risk: Potential for “feature creep” where safety tools become surveillance tools.

- The Tech: Shift from server-side analysis to NPU-accelerated local inference.

- The Impact: Increased device-side compute overhead and a potential fragmentation of E2EE standards.

The Regulatory Vacuum and the “Chip War” Influence

The EU’s inaction is particularly galling given the current state of the “chip wars.” We are seeing a massive push toward hardware-level security. With Apple’s Secure Enclave and Google’s Titan M2 chips, the boundary between the OS and the secure hardware has become the primary battleground for data integrity. CSS attempts to sit exactly on that boundary.

If the EU mandates CSS without strict technical guardrails, it is essentially forcing hardware manufacturers to bake surveillance capabilities into the silicon. This creates a market bifurcation. We may witness “Privacy-First” hardware, likely emerging from open-source RISC-V architectures, competing against “Compliant” hardware that satisfies EU mandates but compromises the user.

This also affects the broader ecosystem of third-party developers. If a platform like WhatsApp or Signal is forced to implement CSS, the API surface area for potential exploits increases. Every new “safety” layer is a new attack vector for zero-day vulnerabilities.

For those tracking the technical implementation of these standards, the open-source community on GitHub has already begun prototyping “canary” systems to detect when local scanning is active. This is the digital equivalent of a tripwire, alerting users when their device is performing unauthorized content analysis.

The Path Forward: Zero-Knowledge Proofs?

Is there a middle ground? Some researchers are proposing the use of Zero-Knowledge Proofs (ZKPs). In theory, a device could prove that a piece of content does not match a known blacklist without ever revealing the content itself or the specific hash to the server. This would allow for “privacy-preserving detection.”

However, ZKPs are computationally expensive. Running a complex ZKP for every image sent in a group chat would murder battery life and introduce unacceptable latency, even with the latest ARM-based NPUs. We are currently in a race between mathematical elegance and hardware reality.

The bottom line is this: the EU’s failure to renew the ePrivacy derogation has left a void that Big Tech is filling with a mixture of corporate altruism and technical compromise. We cannot protect children by destroying the architectural foundations of privacy for everyone else. The solution isn’t a backdoor; it’s a better front door, built on transparent, audited and mathematically verifiable code. Until then, the “commitment to child safety” remains a convenient shield for a much more dangerous architectural shift.

For more on the intersection of encryption and law, see the latest analyses at Ars Technica or the Electronic Frontier Foundation.