Elektor Lab Talk highlights the industry shift toward reconfigurable test gear, specifically the Red Pitaya platform. By leveraging an FPGA-based SoC, these tools replace static, expensive lab instruments with software-defined hardware, allowing engineers to customize signal processing and data acquisition in real time for rapid prototyping and industrial research.

For decades, the electronics lab has been a graveyard of “black box” instruments. You bought a high-end oscilloscope or a signal generator from a legacy vendor, paid a premium for a proprietary OS, and accepted that the hardware’s functionality was frozen in silicon the moment it left the factory. If you needed a specific trigger logic or a custom filter, you were out of luck unless you spent five figures on a specialized module.

The discussion in the latest Elektor Lab Talk signals the end of this rigidity. We are seeing a pivot toward “Lab-as-Code.”

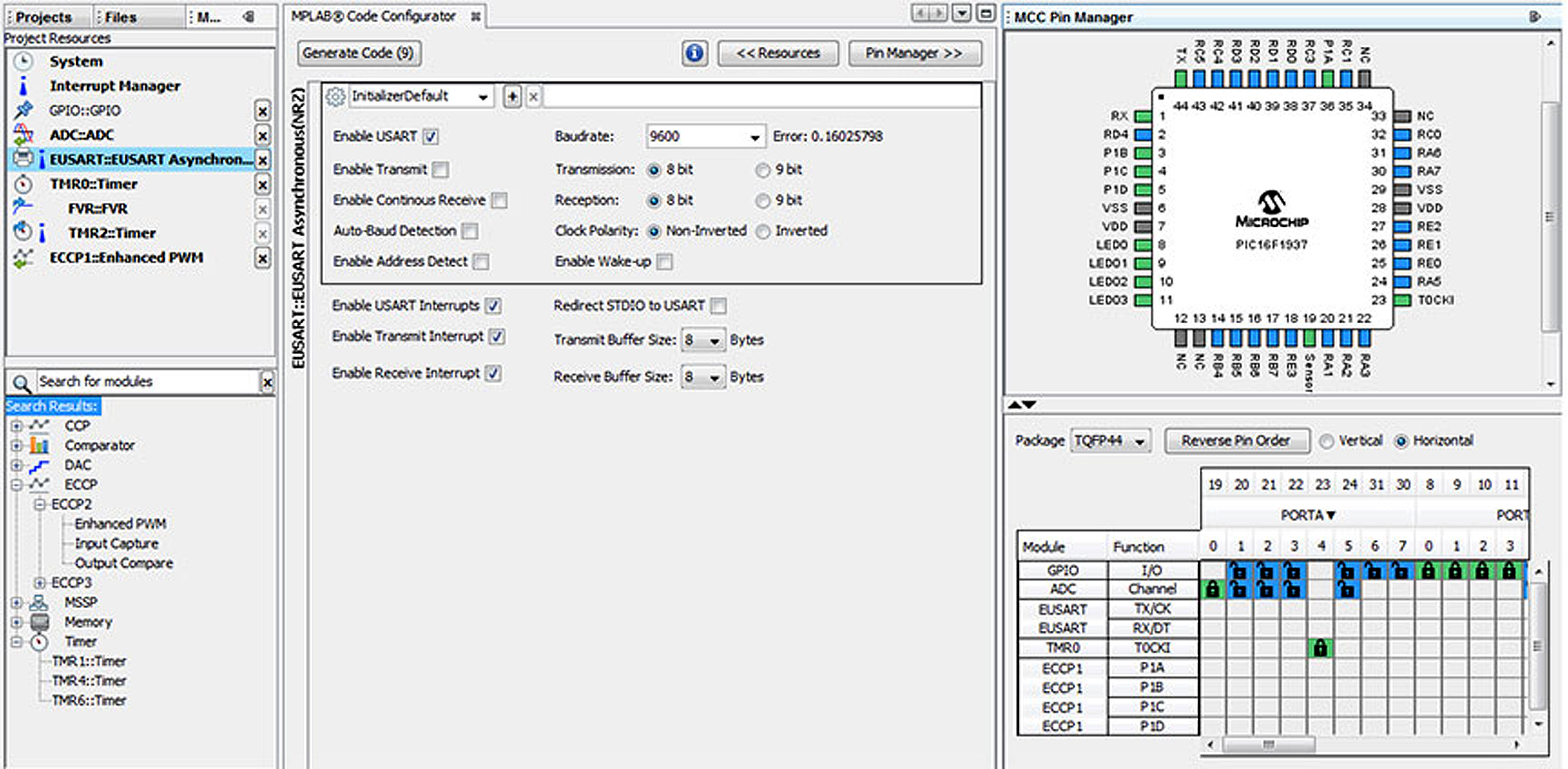

At the center of this shift is the Red Pitaya, a device that isn’t just a tool, but a programmable canvas. For the uninitiated, Red Pitaya utilizes a Xilinx Zynq SoC (System on Chip), which is the secret sauce here. Unlike a standard microcontroller, the Zynq architecture integrates a dual-core ARM Cortex-A9 processor (the Processing System, or PS) with an FPGA (the Programmable Logic, or PL) on a single die. This allows for a symbiotic relationship: the ARM core handles the high-level Linux OS and network stack, while the FPGA handles the raw, nanosecond-level signal processing.

The Zynq Synergy: Why FPGA Logic Trumps Static Silicon

In traditional test gear, the signal path is hard-wired. In a reconfigurable system, the signal path is a software definition. By manipulating the Zynq FPGA fabric, developers can implement custom Digital Signal Processing (DSP) blocks, such as FIR filters or Fast Fourier Transforms (FFT), that execute in parallel hardware rather than sequential software. Because that logic runs on a fixed clock with no operating system scheduler in the path, it avoids most of the “jitter” and latency inherent in CPU-based processing.

It is raw efficiency.
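To make the parallel-versus-sequential point concrete, here is a minimal reference model of a direct-form FIR filter in plain Python. It is only a sketch: the function name `fir_filter` and the 4-tap moving-average coefficients are illustrative choices, not anything taken from the Red Pitaya code base. The inner `sum` performs the multiply-accumulate steps one after another; in the programmable logic, each tap would typically get its own multiplier so that all products are formed in the same clock cycle.

```python
from collections import deque


def fir_filter(samples, taps):
    """Direct-form FIR: y[n] = sum over k of taps[k] * x[n - k]."""
    # Delay line holding the most recent len(taps) inputs, newest first.
    history = deque([0.0] * len(taps), maxlen=len(taps))
    output = []
    for x in samples:
        history.appendleft(x)  # the oldest sample drops off the right end
        # Sequential multiply-accumulate; an FPGA would do these in parallel.
        output.append(sum(h * t for h, t in zip(history, taps)))
    return output


# Hypothetical example: 4-tap moving average applied to a step input.
taps = [0.25] * 4
step = [0.0, 0.0, 1.0, 1.0, 1.0, 1.0, 1.0]
print(fir_filter(step, taps))
# [0.0, 0.0, 0.25, 0.5, 0.75, 1.0, 1.0]
```

A model like this is also useful as a golden reference when checking a hardware implementation in simulation.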

When you move the heavy lifting to the PL (Programmable Logic), you aren’t just speeding up the process; you are fundamentally changing the capability of the device. You can turn a basic oscilloscope into a lock-in amplifier or a software-defined radio (SDR) simply by flashing a new bitstream. This is the antithesis of the traditional hardware lifecycle, where “upgrading” meant buying a new chassis.
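As a rough illustration of what a lock-in amplifier computes, the sketch below mixes the input with a cosine and a sine at the reference frequency and averages the products. It is a software model under stated assumptions: the function name `lock_in`, the sample rate, and the test signal are all hypothetical, and the plain average stands in for the low-pass filter a real design would use.

```python
import math


def lock_in(samples, f_ref, f_s):
    """Estimate (amplitude, phase_rad) of the component at f_ref.

    Works best when the record spans a whole number of reference periods.
    """
    n = len(samples)
    i_sum = 0.0
    q_sum = 0.0
    for k, x in enumerate(samples):
        angle = 2.0 * math.pi * f_ref * k / f_s
        i_sum += x * math.cos(angle)  # in-phase mixer
        q_sum += x * math.sin(angle)  # quadrature mixer
    # Averaging acts as the low-pass filter; the factor 2 undoes the
    # halving that comes from multiplying two sinusoids.
    i_avg = 2.0 * i_sum / n
    q_avg = 2.0 * q_sum / n
    return math.hypot(i_avg, q_avg), math.atan2(-q_avg, i_avg)


# Hypothetical test signal: a 50 Hz tone (amplitude 0.5, phase 0.3 rad)
# buried under a larger 120 Hz interferer, sampled at 1 kHz for 1 s.
f_s = 1000.0
signal = [
    0.5 * math.cos(2.0 * math.pi * 50.0 * k / f_s + 0.3)
    + 1.0 * math.sin(2.0 * math.pi * 120.0 * k / f_s)
    for k in range(1000)
]
amplitude, phase = lock_in(signal, 50.0, f_s)
print(round(amplitude, 3), round(phase, 3))
```

With this input the estimate should land close to an amplitude of 0.5 and a phase of 0.3 rad, even though the interferer is twice as large as the tone of interest.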

However, the “geek-chic” allure of open hardware often masks a brutal reality: the learning curve. Writing VHDL or Verilog for the FPGA is a different beast than writing Python for the ARM core. While the Red Pitaya ecosystem has made strides in providing high-level APIs, the true power remains locked behind the complexity of hardware description languages.

Dismantling the Proprietary Moat

The broader implication here is a direct assault on platform lock-in. Legacy vendors have long relied on “feature gating,” where advanced analysis tools are locked behind expensive software licenses. By moving toward an open-source hardware model, the community is effectively crowdsourcing the R&D of test instrumentation.

“The democratization of FPGA-based instrumentation is shifting the value proposition from the hardware itself to the IP running on it. We are moving away from buying a ‘box’ and toward buying ‘accelerated workflows’.” — Marcus Thorne, Lead Hardware Architect at OpenSignal Labs

This shift mirrors what happened in the server world with the rise of x86 and Linux. When the hardware becomes a commodity, the innovation moves to the software layer. We are seeing this play out in Red Pitaya’s GitHub repositories, where users share custom apps that extend the device’s utility far beyond the original manufacturer’s intent.

The 30-Second Verdict: Open vs. Closed Gear

- Legacy Gear: High precision, zero configuration, extreme cost, zero flexibility.

- Reconfigurable Gear: Moderate precision, high configuration overhead, low cost, infinite flexibility.

- The Winner: For R&D and agile prototyping, the reconfigurable model wins on ROI. For certified calibration labs, legacy gear remains the gold standard.

Benchmarking the Trade-off: Precision vs. Programmability

We need to be honest about the specs. A Red Pitaya is not going to replace a $50,000 Keysight scope in a high-frequency aerospace lab. The analog front-end (AFE) is where the physical limits reside. The ADC (Analog-to-Digital Converter) resolution and the sampling rate are capped by the hardware’s physical components, regardless of how clever your FPGA code is.

But for 90% of engineering tasks—signal monitoring, basic control loops, and sensor interfacing—the “good enough” threshold has been crossed.
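The ADC ceiling mentioned above can be put into numbers with two textbook formulas: the ideal quantization-limited SNR of an N-bit converter for a full-scale sine wave, roughly 6.02·N + 1.76 dB, and the voltage step of one least significant bit. The 14-bit resolution and 2 V peak-to-peak input range below are assumed example values, not figures from the article; check the datasheet of the specific board.

```python
def ideal_snr_db(bits):
    """Ideal quantization-limited SNR for a full-scale sine wave, in dB."""
    return 6.02 * bits + 1.76


def lsb_volts(full_scale_vpp, bits):
    """Size of one ADC code step for a given peak-to-peak input range."""
    return full_scale_vpp / (2 ** bits)


# Assumed example values (not from the article): 14 bits, 2 V peak-to-peak.
BITS = 14
FULL_SCALE_VPP = 2.0

print(f"ideal SNR: {ideal_snr_db(BITS):.2f} dB")
print(f"1 LSB:     {lsb_volts(FULL_SCALE_VPP, BITS) * 1e6:.1f} uV")
```

For these assumed values the result is about 86 dB and about 122 µV per step. Real converters fall short of the ideal figure because of noise and distortion in the analog front-end, which is exactly the limit no FPGA code can remove.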

| Metric | Traditional High-End Scope | Red Pitaya (Reconfigurable) | Impact on Workflow |
|---|---|---|---|
| Logic Path | Fixed ASIC | Programmable FPGA | Custom triggers vs. Preset triggers |
| OS | Proprietary/Closed | Embedded Linux | API integration vs. Manual UI |
| Deployment | Benchtop | Embedded/Remote | Local testing vs. Distributed sensing |
| Cost Entry | $$$$$ | $ | Lowering the barrier for startups |

The “Lab-as-Code” Pipeline and Cybersecurity

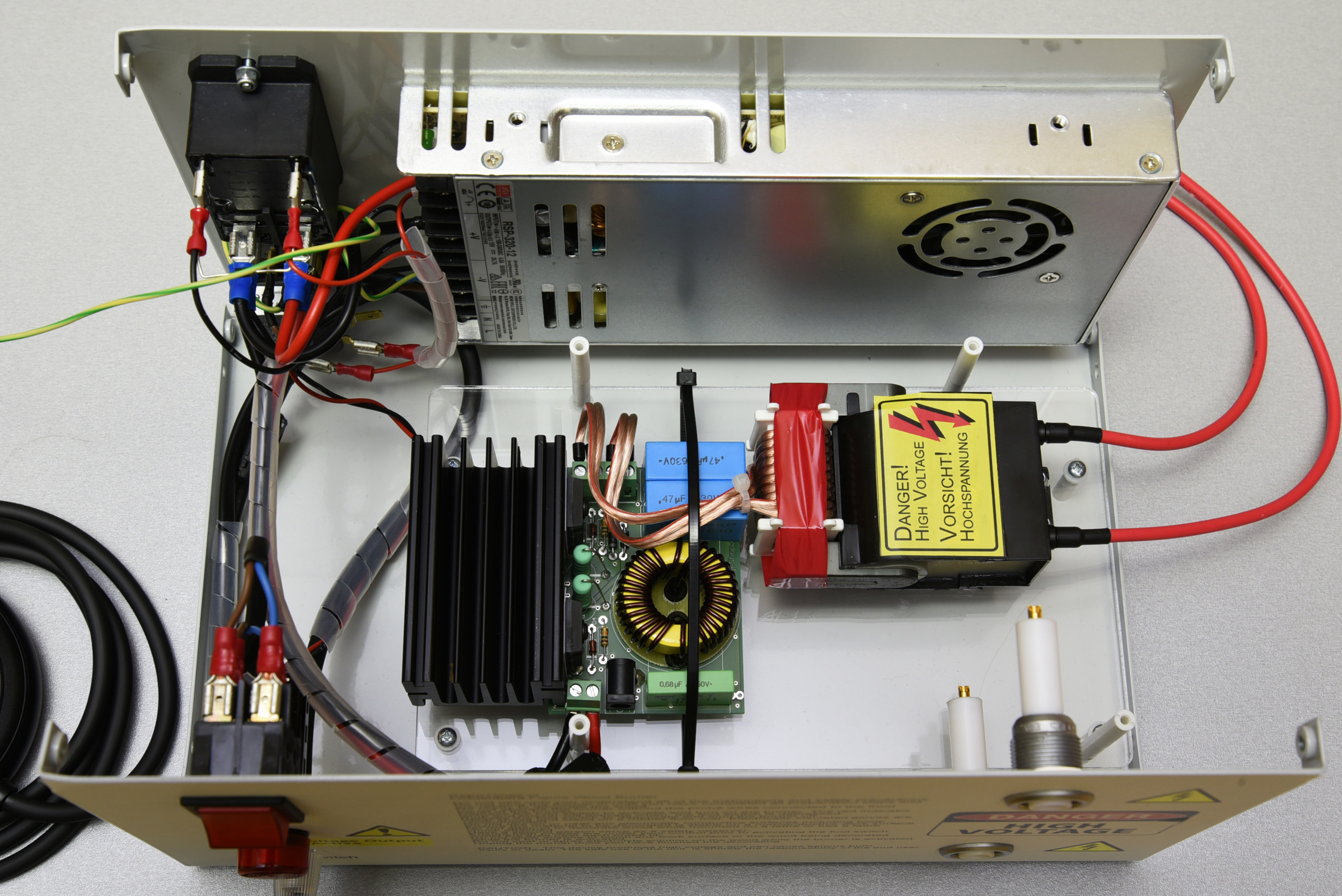

As these devices move from the bench to the field—often connected via Ethernet for remote monitoring—a new vulnerability surface emerges. A reconfigurable test device is, at its core, a Linux server with high-speed access to physical signals. If a Red Pitaya is exposed to the public internet without a hardened SSH configuration or a VPN, it becomes a potent entry point for lateral movement within a corporate network.

Moreover, the ability to remotely flash the FPGA bitstream introduces a terrifying possibility: hardware-level persistence. A sophisticated actor could potentially inject a malicious module into the FPGA fabric that intercepts signals or exfiltrates data, bypassing the ARM processor’s OS-level security entirely. This is why the move toward IEEE standards for hardware security is no longer optional for open-source hardware.
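One modest guard against a tampered bitstream is to check its hash against a list of approved builds before loading it. The sketch below shows only that check; the function names and the idea of an allow-list of SHA-256 digests are assumptions for illustration, and the actual loading step is deliberately left out because it depends on the board image.

```python
import hashlib
import hmac


def bitstream_digest(data: bytes) -> str:
    """Return the SHA-256 digest of a bitstream image as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_approved(data: bytes, approved_digests) -> bool:
    """True if the image matches one of the approved digests."""
    digest = bitstream_digest(data)
    # compare_digest performs a constant-time comparison.
    return any(hmac.compare_digest(digest, known) for known in approved_digests)


# Hypothetical usage: stand-in bytes instead of a real bitstream file.
good_image = b"example bitstream contents"
approved = {bitstream_digest(good_image)}

print(is_approved(good_image, approved))            # True
print(is_approved(good_image + b"\x00", approved))  # False
```

A check like this only helps if the allow-list and the checking code live somewhere the attacker cannot modify; it does not stop someone who already has root on the device.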

The integration of Python and Jupyter Notebooks for controlling these devices is a godsend for productivity, but it necessitates a shift in mindset. We are no longer just “using a tool”; we are managing an edge computing node.
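In practice, controlling the board from a notebook often means sending text commands over a TCP socket. The sketch below uses only the Python standard library. The port number (5000), the `\r\n` terminator, the example command string, and the brace-wrapped reply format are all assumptions here and should be checked against the current Red Pitaya SCPI documentation; the IP address is a placeholder.

```python
import socket


def parse_samples(reply: str):
    """Turn a reply such as '{0.5,-0.25,1.0}' into a list of floats."""
    body = reply.strip().strip("{}")
    return [float(value) for value in body.split(",") if value.strip()]


def scpi_query(host, command, port=5000, timeout=2.0):
    """Send one SCPI command and return the text reply.

    Assumes a line-oriented server that ends each reply with CRLF.
    """
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall((command + "\r\n").encode("ascii"))
        buffer = bytearray()
        while not buffer.endswith(b"\r\n"):
            chunk = sock.recv(4096)
            if not chunk:  # server closed the connection
                break
            buffer.extend(chunk)
    return buffer.decode("ascii").strip()


# Offline demonstration of the parser with a made-up reply string.
print(parse_samples("{0.5,-0.25,1.0}\r\n"))
# [0.5, -0.25, 1.0]

# Against real hardware the call might look like this (placeholder address,
# assumed command name):
# samples = parse_samples(scpi_query("192.0.2.10", "ACQ:SOUR1:DATA?"))
```

Because each call opens a plain, unauthenticated TCP connection, this is exactly the kind of interface that should stay behind a VPN or firewall rather than on a public network.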

What This Means for the Modern Engineer

The takeaway is clear: the skill set required for hardware engineering is merging with software engineering. Knowing how to probe a circuit is no longer enough; you now need to know how to orchestrate that probe via a REST API and process the resulting data stream in a cloud-native environment.

The “reconfigurable” era isn’t just about saving money on gear. It’s about the speed of iteration. When the distance between a theoretical signal processing algorithm and its physical implementation is reduced to a git push and a bitstream flash, the pace of innovation accelerates exponentially. The black box is open, and there is no going back.