Scientists at Tel Aviv University have confirmed that plants emit ultrasonic distress signals, inaudible to humans, when stressed by drought or physical damage. The discovery was made possible by sensitive microphone arrays and machine learning models trained to distinguish biological noise from environmental interference. It marks a shift in how we perceive plant communication and opens new frontiers in precision agriculture, environmental monitoring, and bioacoustic AI.

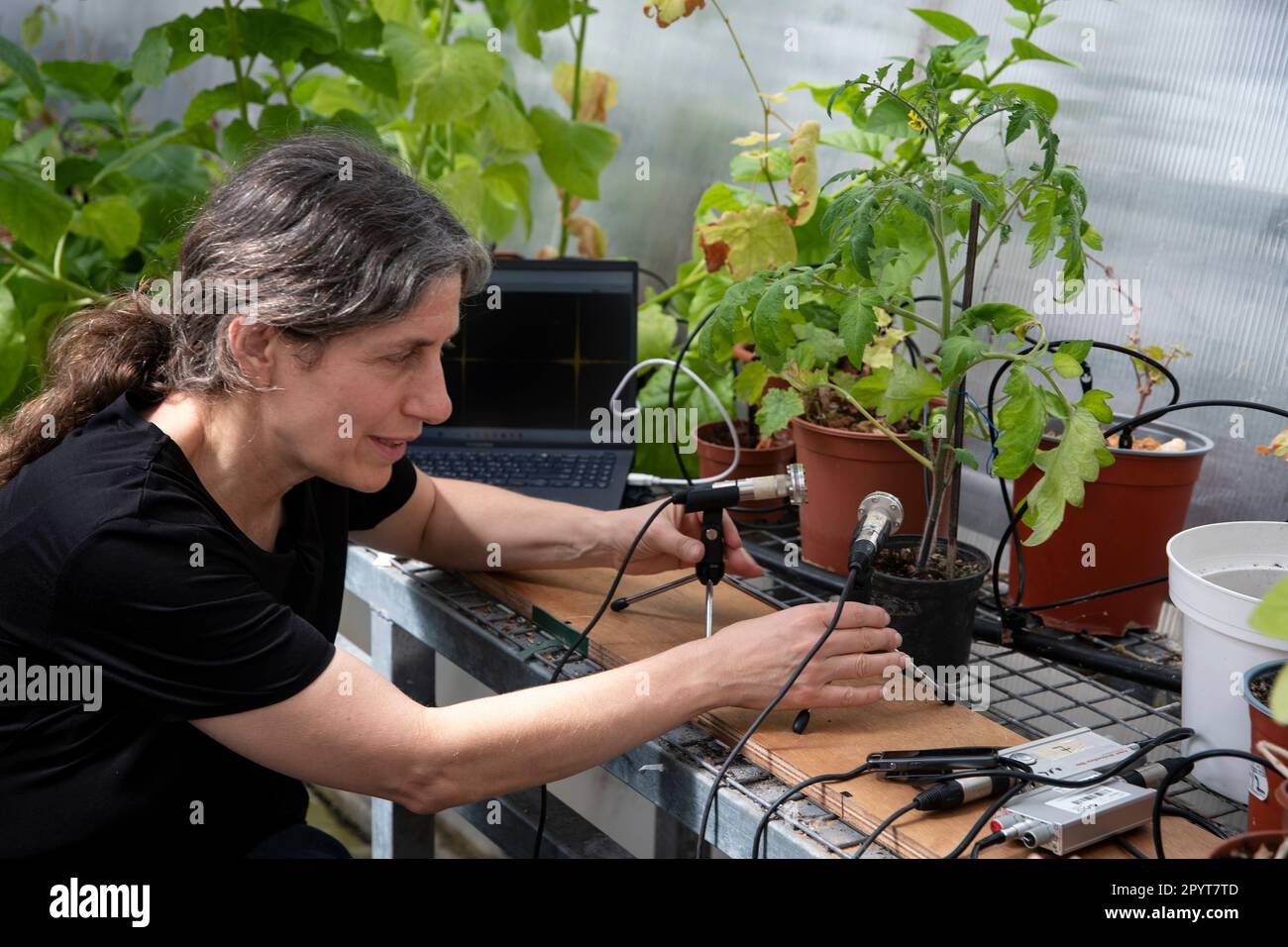

For decades, the idea that plants “scream” was relegated to folklore or speculative biology. But in a peer-reviewed study published this week in Cell, researchers led by Prof. Lilach Hadany demonstrated that tobacco and tomato plants emit distinct ultrasonic sounds at frequencies between 20 and 100 kHz when subjected to stem cutting or water deprivation. These sounds propagate through air and soil and are potentially detectable by nearby organisms, including insects and mammals. What’s novel isn’t just the detection but the classification: using convolutional neural networks trained on thousands of audio samples, the team identified stress-specific acoustic signatures with over 90% accuracy, effectively giving plants a quantifiable, machine-readable voice.

This isn’t merely a biological curiosity—it’s a sensor network waiting to be hacked. Imagine integrating these bioacoustic signals into existing IoT agricultural platforms: soil moisture probes already measure capacitance; drone-mounted multispectral cameras track NDVI; now, passive acoustic arrays could add a real-time stress layer, triggering irrigation only when a plant’s “scream” crosses a threshold. Companies like CropX and Semios are already experimenting with bioacoustic APIs, though none have published open specifications. The real opportunity lies in edge deployment—running lightweight inference models on Raspberry Pi-class hardware with MEMS microphones to detect distress signals in real time, reducing water waste by up to 30% in controlled trials, according to preliminary data from the Volcani Center.
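One way to read “crosses a threshold” is as an event-rate rule: count confident detections in a sliding time window and irrigate once the count gets high enough. The sketch below assumes that reading. The class name `StressTrigger`, the window length, and both thresholds are hypothetical illustration values, not figures from the study or from any vendor API.

```python
from collections import deque


class StressTrigger:
    """Signal irrigation when enough confident detections fall in a time window.

    All defaults are placeholder values chosen for illustration only.
    """

    def __init__(self, window_s=3600.0, min_events=30, min_confidence=0.9):
        self.window_s = window_s
        self.min_events = min_events
        self.min_confidence = min_confidence
        self._events = deque()  # timestamps of accepted detections

    def update(self, timestamp_s, confidence):
        """Record one classifier output; return True if irrigation should start."""
        if confidence >= self.min_confidence:
            self._events.append(timestamp_s)
        # Drop detections that have aged out of the sliding window.
        cutoff = timestamp_s - self.window_s
        while self._events and self._events[0] <= cutoff:
            self._events.popleft()
        return len(self._events) >= self.min_events


if __name__ == "__main__":
    trigger = StressTrigger(window_s=60.0, min_events=3)
    for t, conf in [(0.0, 0.95), (10.0, 0.50), (20.0, 0.95), (30.0, 0.99)]:
        print(t, trigger.update(t, conf))
```

Counting events over a window, rather than reacting to a single click, keeps one misclassified noise burst from opening a valve.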

The Acoustic Fingerprint of Plant Stress

Plants don’t scream like animals; they cavitate. When xylem columns break under tension during drought, air bubbles form and implode, releasing ultrasonic bursts. These cavitation events are not random; they follow biomechanical patterns tied to species, tissue type, and stress intensity. The Tel Aviv team used calibrated ultrasonic microphones (Knowles SPU0410LR5H-QB) mounted 10 cm from plants in anechoic chambers, sampling at 500 kHz. That rate puts the Nyquist limit at 250 kHz, well above the roughly 22–24 kHz limit of standard audio gear sampling at 44.1 or 48 kHz, so content across the ultrasonic band can be represented without aliasing. What emerged were distinct clusters: drought-stressed tomatoes emitted sharp, repetitive clicks around 25–35 kHz, while cut stems produced broader, noisier bursts peaking at 60–80 kHz. These signatures persisted even after controlling for wind, insect movement, and piezoelectric noise.
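The sampling argument can be checked with a few lines of arithmetic. At a sampling rate fs, the highest frequency that survives without aliasing is fs/2, and any tone above that folds back into the 0 to fs/2 range. The sketch below shows why a 48 kHz recorder would misplace a 30 kHz click, and adds a crude band lookup using the peak ranges quoted above. The lookup is only an illustrative stand-in, not the study’s classifier, and all function names are hypothetical.

```python
def nyquist_hz(sample_rate_hz: float) -> float:
    """Highest frequency representable without aliasing."""
    return sample_rate_hz / 2.0


def alias_hz(tone_hz: float, sample_rate_hz: float) -> float:
    """Apparent frequency of a pure tone after sampling (folded into 0..fs/2)."""
    return abs(tone_hz - sample_rate_hz * round(tone_hz / sample_rate_hz))


# Peak-frequency bands quoted in the article, in Hz.
STRESS_BANDS = {
    "drought": (25_000.0, 35_000.0),
    "cut": (60_000.0, 80_000.0),
}


def label_peak(peak_hz: float) -> str:
    """Map a peak frequency to a stress label; a toy rule, not a trained model."""
    for label, (low, high) in STRESS_BANDS.items():
        if low <= peak_hz <= high:
            return label
    return "unknown"


if __name__ == "__main__":
    print(nyquist_hz(500_000))        # limit at the rate used in the study
    print(alias_hz(30_000, 48_000))   # where a 30 kHz click lands on 48 kHz gear
    print(label_peak(70_000))
```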

Critically, these sounds may be more than byproducts; they may function as signals. Recent work from the University of Missouri showed that Arabidopsis plants increase defensive metabolite production when exposed to playback of caterpillar-chewing vibrations. Whether plants “hear” each other’s screams remains debated, but the ecological implications are profound: if moths or nematodes can detect stressed plants via sound, we may be witnessing an evolutionary arms race in the acoustic domain.

From Bioacoustics to Edge AI: The Hidden Infrastructure

The real innovation isn’t the microphones; it’s the signal processing pipeline. Streaming raw ultrasonic data is impractical for continuous monitoring: at 500 kHz sampling, even 16-bit audio generates 8 Mbps per channel. The Tel Aviv team’s solution? A two-stage approach: first, an FPGA-based bandpass filter (20–120 kHz) on a Xilinx Zynq-7000 SoC reduces bandwidth by 90%; second, a quantized TensorFlow Lite model runs on the ARM Cortex-A9 core, classifying stress events in under 15 ms per sample. This hybrid FPGA-CPU architecture mirrors what’s used in automotive LiDAR preprocessing, which suggests that bioacoustic sensing can piggyback on existing edge AI infrastructure.
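The bandwidth figures follow directly from the sampling parameters. As a quick check, the sketch below reproduces the 8 Mbps number, applies the stated 90% reduction, and converts both rates to storage per day. The function names are hypothetical, and the per-day totals are derived here rather than quoted from the study.

```python
def raw_bitrate_bps(sample_rate_hz: int, bits_per_sample: int, channels: int = 1) -> int:
    """Uncompressed PCM bitrate in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


def reduced_bitrate_bps(bitrate_bps: int, reduction_percent: int) -> int:
    """Bitrate left after removing the given percentage of bandwidth."""
    return bitrate_bps * (100 - reduction_percent) // 100


def bytes_per_day(bitrate_bps: int) -> int:
    """Storage needed to keep one day of a stream at this bitrate."""
    return bitrate_bps // 8 * 86_400


if __name__ == "__main__":
    raw = raw_bitrate_bps(500_000, 16)        # 8,000,000 bit/s per channel
    filtered = reduced_bitrate_bps(raw, 90)   # 800,000 bit/s after the filter stage
    print(raw, filtered)
    print(bytes_per_day(raw) / 1e9, "GB/day raw")
    print(bytes_per_day(filtered) / 1e9, "GB/day filtered")
```

At roughly 86 GB per channel per day, raw capture quickly outgrows what a field node can store or uplink, which is the practical argument for classifying on the device.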

The model’s transparency matters. Unlike black-box LLMs, this CNN uses depthwise separable convolutions with clear feature maps: early layers detect transient spikes; mid-layers identify harmonic decay patterns; final layers output stress probability vectors. This interpretability is crucial for agricultural adoption, because farmers won’t trust a “black box” that says their field is stressed without showing why. The team has released the model architecture and training weights under an Apache 2.0 license on GitHub, inviting community auditing. That is a rare move in plant bioacoustics, where most datasets remain locked behind university paywalls.
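Depthwise separable convolutions are also what make a model like this small enough for edge hardware. A standard convolution mixes time and channels in one step; the separable form splits it into a per-channel (depthwise) filter followed by a 1x1 (pointwise) channel mix. The parameter counts below illustrate the saving for a 1D layer. The layer sizes are made-up examples, the 1D layout is an assumption, and bias terms are ignored; none of this is taken from the released architecture.

```python
def standard_conv1d_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a standard 1D convolution (bias ignored)."""
    return kernel * c_in * c_out


def separable_conv1d_params(kernel: int, c_in: int, c_out: int) -> int:
    """Weights in a depthwise (kernel x c_in) plus pointwise (c_in x c_out) pair."""
    depthwise = kernel * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


if __name__ == "__main__":
    k, c_in, c_out = 9, 32, 64  # hypothetical layer sizes
    std = standard_conv1d_params(k, c_in, c_out)
    sep = separable_conv1d_params(k, c_in, c_out)
    print(std, sep, round(std / sep, 2))
```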

“We’re not trying to anthropomorphize plants. We’re treating them as distributed sensor nodes in a vast, silent network. The fact that people can now listen to that network changes everything, from how we irrigate to how we model ecosystem resilience.”

Implications for the AgTech Stack and Open Ecosystems

This discovery intersects with several macro trends in technology. First, it challenges the dominance of spectral imaging in precision ag. Companies like Bayer’s Climate Corp and John Deere’s See & Spray rely heavily on visible and near-infrared reflectance to infer plant health, a proxy that lags stress by hours or days. Acoustic sensing, by contrast, offers near real-time feedback, potentially closing the loop in autonomous irrigation systems. But integration won’t be easy: most ag IoT platforms are built around Modbus or MQTT telemetry from soil probes, not audio streams. Adding acoustic sensors requires rethinking data pipelines, especially latency budgets and edge compute allocation.
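One way to fit acoustic sensing into a telemetry-style pipeline is to classify on the node and publish only a small event message, in the same spirit as a soil-probe reading. The sketch below builds such a message as compact JSON and compares its size with the raw audio it summarizes. The field names, the node ID, and the topic in the comment are all hypothetical, and actually publishing the bytes would need an MQTT client that is not shown here.

```python
import json


def build_stress_event(node_id: str, timestamp_s: int, label: str,
                       probability: float, peak_khz: float) -> bytes:
    """Encode one classified event as compact JSON (hypothetical schema)."""
    payload = {
        "node": node_id,
        "ts": timestamp_s,
        "label": label,
        "p": round(probability, 3),
        "peak_khz": peak_khz,
    }
    return json.dumps(payload, separators=(",", ":")).encode("utf-8")


def raw_audio_bytes(duration_s: float, sample_rate_hz: int = 500_000,
                    bits_per_sample: int = 16) -> int:
    """Size of the uncompressed audio that one event message stands in for."""
    return int(duration_s * sample_rate_hz * bits_per_sample / 8)


if __name__ == "__main__":
    # A hypothetical topic such as "farm/field-3/acoustic/stress" could carry this.
    message = build_stress_event("node-07", 1_700_000_000, "drought", 0.9312, 31.5)
    print(message.decode("utf-8"))
    print(len(message), "bytes vs", raw_audio_bytes(1.0), "bytes of raw audio per second")
```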

Second, there’s a quiet battle over data ownership. If a farmer’s field emits stress signals that are harvested by a drone service, who owns that bioacoustic data? The equipment manufacturer? The cloud analytics provider? Unlike yield maps or soil pH, acoustic emissions are ephemeral, location-specific, and potentially revealing of microclimate vulnerabilities, which raises novel privacy concerns in agricultural data governance. The EU’s upcoming Data Act may classify such bioacoustic streams as “industrial data,” subject to sharing obligations, but the U.S. lacks analogous frameworks.

Third, the open-source movement stands to gain. Hadany’s team didn’t just publish a paper; they released a full reproducible pipeline: audio preprocessing scripts, model checkpoints, and a synthetic dataset generator that mimics cavitation noise under controlled stress. This lowers the barrier for indie developers and university labs to build compatible sensors. Contrast this with proprietary systems like Ceres Tag’s livestock acoustic monitors, which encrypt data and require subscription tiers for access. In bioacoustics, openness could accelerate innovation, especially in low-resource settings where water conservation is critical.

The 30-Second Verdict: What This Means Beyond the Lab

This isn’t about giving plants a voice we can hear—it’s about giving machines a way to listen to what plants have been saying all along. The technology is real, the signals are measurable, and the applications are emerging in real-world trials. For agronomists, it’s a new vital sign. For engineers, it’s a challenge in ultra-low-power edge inference. For ecologists, it’s a window into invisible plant-animal conversations. And for the rest of us? It’s a humbling reminder that intelligence and communication don’t always look like us—sometimes, they’re just beyond the range of human hearing, waiting for the right sensor to turn silence into signal.