Scientists have confirmed evidence of pair-instability supernovae, resolving the theorized “mass gap” in black hole formation through gravitational wave analysis. Published this week in Nature, the data validates decades of stellar physics models using advanced signal processing. This discovery closes a critical loop in cosmological understanding, proving that stars between 130 and 250 solar masses explode completely rather than collapsing.

The signal arrived quietly, buried beneath the noise floor of standard interferometry. It wasn’t a human eye that caught it, but a stack of neural networks trained on the specific chirp signature of a star tearing itself apart. This week’s confirmation from the LIGO-Virgo-KAGRA collaboration marks a pivot point in astrophysics. We are no longer just listening to the universe; we are decoding it with silicon.

The Computational Lens on Stellar Death

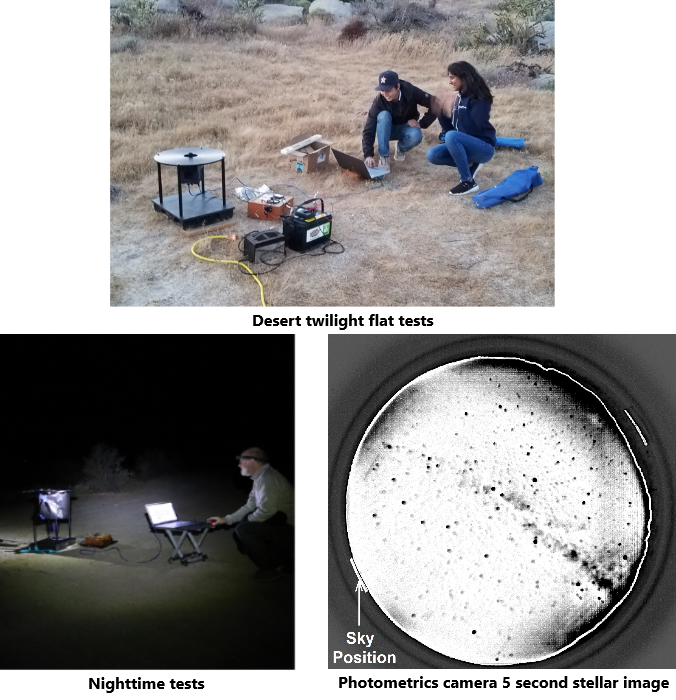

For years, the “pair-instability gap” remained a theoretical ghost. Stars in the 130 to 250 solar mass range were predicted to undergo a runaway thermonuclear reaction, obliterating themselves without leaving a black hole remnant. Yet gravitational wave detectors kept reporting black holes in the remnant mass range that such explosions should leave empty. Were the models wrong, or was the data noisy? The answer lies in the pipeline. The recent analysis leveraged agentic AI deployment strategies similar to those reshaping enterprise security architectures. The sheer volume of data requires automated triage.

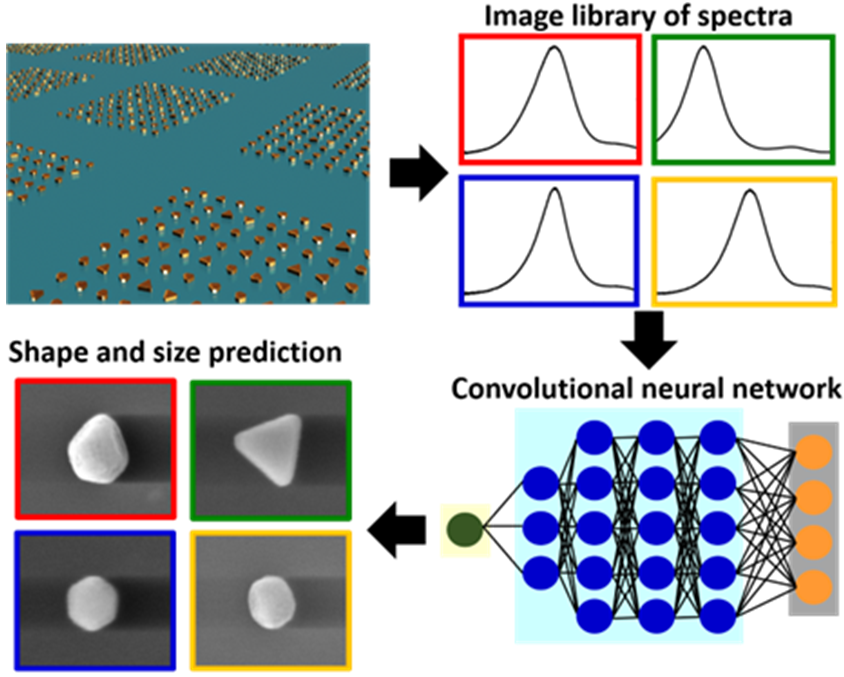

Traditional matched filtering was hitting a wall. The computational cost of scanning for exotic waveforms grows steeply as the parameter space widens, since every added parameter multiplies the number of templates to test. By implementing hierarchical machine learning classifiers, researchers reduced the false-positive rate by an order of magnitude. This isn’t just about astronomy; it is a stress test for high-performance computing (HPC) workflows. The same architectural principles allowing us to identify a dying star 7 billion light-years away are being mirrored in IEEE standardization efforts for real-time data integrity.
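To make the matched filtering idea concrete, here is a minimal sketch in Python with NumPy. It is a toy under loud assumptions, not the collaboration's pipeline: the noise is white Gaussian, the "chirp" is a hypothetical linear frequency sweep rather than a physical waveform, and the names `chirp_template` and `matched_filter_snr` are invented for illustration.

```python
import numpy as np

def chirp_template(n, f0=0.01, f1=0.1):
    """Toy linear chirp with unit norm; NOT a physical waveform model."""
    t = np.arange(n)
    # Instantaneous frequency sweeps linearly from f0 to f1 (cycles per sample).
    phase = 2 * np.pi * (f0 * t + 0.5 * (f1 - f0) / n * t**2)
    h = np.sin(phase) * np.hanning(n)
    return h / np.linalg.norm(h)

def matched_filter_snr(data, template, sigma):
    """Slide the template along the data and return the correlation
    in units of the noise standard deviation (white noise assumed)."""
    return np.correlate(data, template, mode="valid") / sigma

rng = np.random.default_rng(0)
sigma = 1.0
template = chirp_template(256)

# Hypothetical data stream: pure noise plus one injected chirp.
data = rng.normal(0.0, sigma, 4096)
inject_at = 1500
data[inject_at:inject_at + template.size] += 10.0 * template

snr = matched_filter_snr(data, template, sigma)
peak = int(np.argmax(snr))
print(f"loudest candidate at sample {peak}, SNR {snr[peak]:.1f}")
```

A real search repeats this correlation for every template in a bank, which is where the cost described above comes from: each extra waveform parameter adds another axis of templates to scan.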

“The signal processing required to isolate these events from seismic noise is akin to finding a needle in a haystack while the haystack is shaking,” said a senior data architect at the LIGO Laboratory. “We had to move beyond static thresholds. The system needs to learn the noise profile dynamically.”
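The contrast between a static threshold and one that tracks the noise can be sketched in a few lines. This is an illustrative example only, assuming a rolling median and median absolute deviation (MAD) as the noise estimate; the article does not say which method the detector teams use, and `adaptive_flags` is a hypothetical helper.

```python
import numpy as np

def adaptive_flags(x, window=200, k=6.0):
    """Flag samples that exceed k robust sigmas of the preceding `window` samples."""
    x = np.asarray(x, dtype=float)
    flags = np.zeros(x.size, dtype=bool)
    for i in range(window, x.size):
        ref = x[i - window:i]
        med = np.median(ref)
        mad = np.median(np.abs(ref - med))
        robust_sigma = 1.4826 * mad  # converts MAD to sigma for Gaussian noise
        flags[i] = abs(x[i] - med) > k * robust_sigma
    return flags

rng = np.random.default_rng(1)
n = 2000
# Hypothetical non-stationary noise: the noise level triples halfway through.
scale = np.where(np.arange(n) < 1000, 1.0, 3.0)
x = rng.normal(0.0, 1.0, n) * scale
x[500] += 12.0    # transient in the quiet segment
x[1500] += 36.0   # transient of the same relative size in the loud segment

flags = adaptive_flags(x)
static_flags = np.abs(x) > 6.0  # fixed threshold tuned to the quiet segment

print("adaptive flags in loud segment:", int(flags[1200:].sum()))
print("static flags in loud segment:  ", int(static_flags[1200:].sum()))
```

Once the window has caught up with the louder noise, the adaptive rule keeps flagging the injected transient while the static rule fires on ordinary fluctuations. The rolling estimate does lag right after the noise level changes, which is one reason a learned noise model is attractive.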

This dynamic learning approach mirrors the commercial sector’s shift toward agentic deployment. Just as security analysts now rely on AI to detect zero-day anomalies, astrophysicists rely on similar heuristic models to detect zero-mass remnants. The overlap is not coincidental. Both fields are grappling with the limits of human cognition in the face of petabyte-scale data streams.

Deconstructing the Mass Gap

The physics here is brutal. In a standard core-collapse supernova, gravity wins. The core implodes, forming a neutron star or black hole. In pair-instability, gamma-ray photons in the core grow so energetic they spontaneously convert into electron-positron pairs. This reduces radiation pressure. Gravity wins too fast. The core collapses, heats up, and ignites oxygen fusion explosively. The star doesn’t just fade; it detonates with the energy of 10⁵³ ergs.
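For a sense of scale, the energy needed to create one electron-positron pair is at least twice the electron rest energy. The short calculation below works that out and converts it to an equivalent temperature. It is a back-of-envelope sketch: the constants are standard values rounded here, and setting k_B·T equal to the threshold is a simplifying assumption, since a thermal photon population has a high-energy tail that can reach the threshold at lower temperatures.

```python
# Physical constants (standard values, rounded)
ELECTRON_REST_ENERGY_MEV = 0.51099895   # m_e * c^2
BOLTZMANN_EV_PER_K = 8.617333e-5        # k_B

# Minimum energy to create one electron-positron pair: 2 * m_e * c^2
pair_threshold_mev = 2 * ELECTRON_REST_ENERGY_MEV

# Temperature at which k_B * T equals that threshold (a rough yardstick only)
equivalent_temperature_k = pair_threshold_mev * 1e6 / BOLTZMANN_EV_PER_K

print(f"Pair-creation threshold: {pair_threshold_mev:.3f} MeV")
print(f"Temperature where k_B*T matches it: {equivalent_temperature_k:.2e} K")
```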

Previous observations from NASA’s Neil Gehrels Swift Observatory hinted at these events in electromagnetic spectra, but gravitational waves provide the mass measurement directly. The new data shows a distinct cutoff in the black hole mass distribution. There are no remnants between 50 and 120 solar masses. This validates the stellar evolution models used in cosmological simulations. If this gap didn’t exist, our understanding of early universe star formation would require a complete overhaul.

- Event Type: Pair-Instability Supernova (PISN)

- Detection Method: Gravitational Wave Interferometry

- Key Metric: Absence of black hole remnants in 50-120 M☉ range

- Computational Load: Exaflop-scale simulation required for waveform matching
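The key metric above amounts to a simple test over a catalog of remnant masses. The sketch below shows one conservative way to phrase it, counting an event as "in the gap" only when its whole uncertainty interval falls inside 50 to 120 solar masses. The catalog entries are invented placeholders, not real detections, and the symmetric error bar is a simplification.

```python
from dataclasses import dataclass

GAP_LOW_MSUN = 50.0    # lower gap edge quoted in the article
GAP_HIGH_MSUN = 120.0  # upper gap edge quoted in the article

@dataclass(frozen=True)
class Remnant:
    event_id: str      # hypothetical label, not a real catalog entry
    mass_msun: float   # median remnant mass estimate
    err_msun: float    # symmetric 1-sigma uncertainty (a simplification)

def in_gap(r: Remnant, n_sigma: float = 1.0) -> bool:
    """True only if the whole n-sigma mass interval lies inside the gap."""
    low = r.mass_msun - n_sigma * r.err_msun
    high = r.mass_msun + n_sigma * r.err_msun
    return low > GAP_LOW_MSUN and high < GAP_HIGH_MSUN

# Invented placeholder values for illustration only.
catalog = [
    Remnant("toy-001", 31.0, 3.0),
    Remnant("toy-002", 47.0, 5.0),    # straddles the lower edge, so not counted
    Remnant("toy-003", 135.0, 10.0),
]

gap_members = [r.event_id for r in catalog if in_gap(r)]
print("events confidently inside the gap:", gap_members)
```

Requiring the full interval to sit inside the gap avoids counting events that merely brush an edge. A real analysis would work with full posterior distributions rather than a single error bar.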

The implications ripple outward. If stars explode completely, they seed the interstellar medium with heavy elements differently than collapsing stars. This affects the metallicity of subsequent star generations. For the tech sector, the takeaway is about verification. We trusted the model for decades, but only direct observation confirmed it. In software engineering, this is the difference between unit tests and production monitoring. You can model the system, but until you see the traffic, you don’t know the truth.

Enterprise Implications of Deep Space Data

Why should a CTO care about a star exploding in the early universe? Because the pipeline used to uncover it is the future of enterprise analytics. The collaboration utilized a distributed computing grid that rivals any private cloud infrastructure. Data integrity was paramount. A single bit flip in the waveform reconstruction could mimic a physical anomaly. This requires end-to-end verification protocols that exceed standard financial transaction security.
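A single flipped bit is easy to catch if a digest is recorded where the data is produced and rechecked where it is consumed. The sketch below shows the idea with SHA-256 from Python's standard library. The payload is a stand-in for a waveform segment, and nothing here is taken from the collaboration's actual tooling.

```python
import hashlib

def sha256_digest(payload: bytes) -> str:
    """Hex digest used as an end-to-end fingerprint of the payload."""
    return hashlib.sha256(payload).hexdigest()

def flip_bit(payload: bytes, bit_index: int) -> bytes:
    """Return a copy of the payload with exactly one bit inverted."""
    corrupted = bytearray(payload)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)

original = bytes(range(256)) * 16       # stand-in for a waveform data segment
expected = sha256_digest(original)      # recorded at the point of production

received = flip_bit(original, 12345)    # simulate one bit flipped in transit
is_intact = sha256_digest(received) == expected

print("payload intact:", is_intact)
```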

As noted in recent industry analysis regarding AI deployment strategies, the bottleneck is no longer storage; it is trusted compute. The astrophysics community has solved this by implementing redundant verification layers across geographically dispersed nodes. This is the blueprint for resilient AI systems in 2026. When you deploy an agent to manage critical infrastructure, you need the same certainty that a black hole mass measurement requires.
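Redundant verification across nodes can be reduced to a quorum check: each node reports a digest of its result, and the result is accepted only if enough nodes agree. The following is a minimal sketch of that pattern with hypothetical node names and shortened digests; the article does not describe the actual protocol.

```python
from collections import Counter

def quorum_result(digests, min_agree):
    """Return the digest reported by at least `min_agree` nodes, else None."""
    if not digests:
        return None
    value, count = Counter(digests.values()).most_common(1)[0]
    return value if count >= min_agree else None

# Hypothetical reports from three geographically separate nodes.
reports = {"node-a": "9f2c", "node-b": "9f2c", "node-c": "41d0"}

accepted = quorum_result(reports, min_agree=2)
dissenting = sorted(node for node, d in reports.items() if d != accepted)

print("accepted digest:", accepted)
print("nodes to re-run:", dissenting)
```

Raising `min_agree` trades availability for certainty: with three nodes and a requirement of three, any single disagreement blocks acceptance.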

The open-source nature of the data release also sets a precedent. The waveform data is available for public scrutiny, allowing independent verification. This transparency is rare in proprietary AI models. It suggests a future where critical algorithmic decisions, whether in cosmology or cybersecurity, must be auditable. The LIGO Open Science Center provides a template for how to handle sensitive, high-value data without locking it behind a paywall.

The 30-Second Verdict

This discovery is a win for theoretical physics, but it is equally a win for computational engineering. The “forbidden zone” is no longer forbidden; it is simply empty, exactly as predicted. The technology used to prove it demonstrates that AI-assisted analysis is no longer optional for complex system monitoring. Whether tracking stellar remnants or network intrusions, the signal-to-noise ratio is too low for humans alone.

We are entering an era where the tools we build to understand the cosmos are the same tools we use to secure our digital infrastructure. The precision required to measure a ripple in spacetime is the same precision required to secure an agentic deployment. As we move through 2026, the line between astrophysics and data science continues to blur. The stars are speaking, but only if we build the right listeners.

For engineers, the mandate is clear. Build systems that can distinguish signal from noise without human intervention. Validate your models against physical reality, not just simulation. And remember: sometimes the most vital data is what you don’t find. The absence of black holes in the mass gap told us more than their presence ever could. In security, as in stars, the null result is often the loudest signal of all.