As of late April 2026, smartphone cameras are undergoing a quiet but consequential shift: computational photography is no longer merely augmenting optics; it is beginning to replace them, driven by advances in on-device AI inference, sensor fusion architectures, and new computational raw formats that bypass traditional ISP pipelines entirely. This evolution, evident in recent beta builds from Google and Xiaomi, signals a move toward AI-first image generation where the lens system serves more as a light collector than a precision imaging tool, raising profound questions about computational authenticity, developer access, and the future of mobile imaging as a software-defined service.

The End of the ISP as We Know It

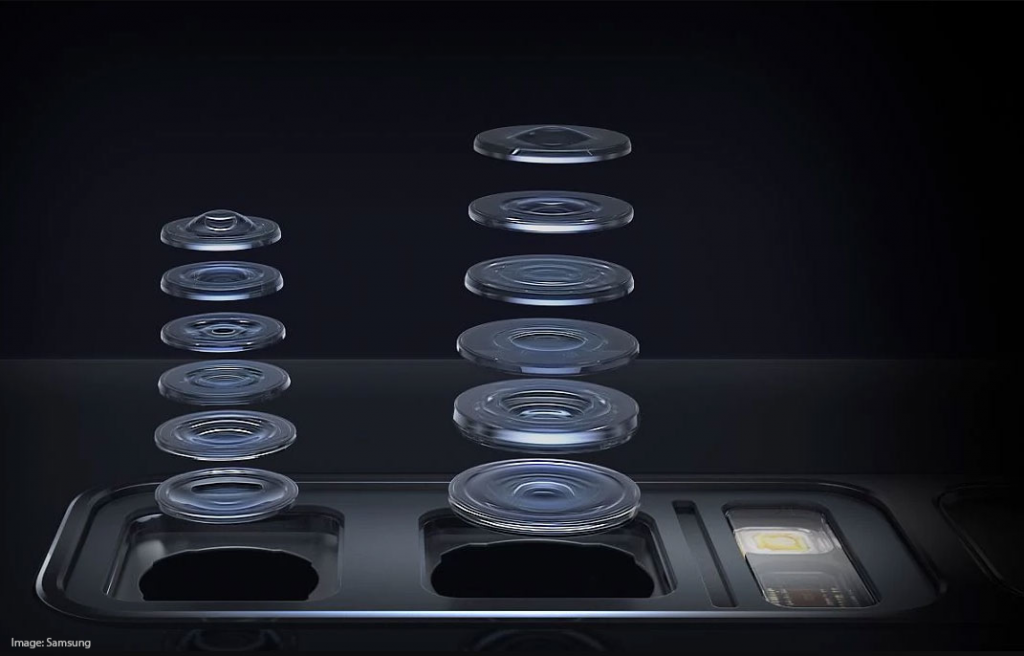

For over a decade, the image signal processor (ISP) has been the fixed-function hardware block responsible for transforming raw sensor data into viewable images—performing demosaicing, noise reduction, white balance, and tone mapping in silicon. But recent developments suggest this paradigm is fracturing. In the Android 15 QPR2 beta, Google’s Pixel 9 Pro devices began exposing a new HAL interface called “NeuralPipe,” which allows third-party camera apps to bypass the legacy ISP entirely and feed raw Bayer data directly into the device’s NPU for processing via custom TensorFlow Lite models. This isn’t just about HDR+ or Night Sight anymore—it’s about treating the camera sensor as a programmable input stream, with the AI model acting as the definitive image renderer.
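To make concrete what a neural pipeline would be replacing, here is a minimal sketch of three of the fixed-function stages named above (demosaicing, white balance, tone mapping) applied to an RGGB Bayer mosaic. It is an illustration only: the function names, the gain values, and the half-resolution "superpixel" demosaic are assumptions chosen for brevity, not how any shipping ISP or the NeuralPipe interface works, and noise reduction is left out entirely.

```python
# Illustrative sketch of classic ISP stages in pure Python.
# Assumptions: RGGB layout, sensor values already normalized to 0.0-1.0,
# and made-up white balance gains. Real ISPs do this in silicon.

def demosaic_rggb_superpixel(mosaic):
    """Collapse each 2x2 RGGB quad into one RGB pixel (half resolution)."""
    rgb = []
    for y in range(0, len(mosaic) - 1, 2):
        row = []
        for x in range(0, len(mosaic[y]) - 1, 2):
            r = mosaic[y][x]                                  # top-left: red
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2.0   # two greens, averaged
            b = mosaic[y + 1][x + 1]                          # bottom-right: blue
            row.append((r, g, b))
        rgb.append(row)
    return rgb


def white_balance(rgb, gains):
    """Scale each channel by its gain and clamp to 1.0."""
    return [[tuple(min(1.0, c * k) for c, k in zip(px, gains)) for px in row]
            for row in rgb]


def tone_map(rgb, gamma=2.2):
    """Apply a simple gamma curve to linear values."""
    return [[tuple(c ** (1.0 / gamma) for c in px) for px in row] for row in rgb]


def run_isp(mosaic, gains=(2.0, 1.0, 1.5)):
    """Chain the stages in the order a traditional pipeline would."""
    return tone_map(white_balance(demosaic_rggb_superpixel(mosaic), gains))


if __name__ == "__main__":
    # One 2x2 RGGB quad becomes a single RGB pixel.
    print(run_isp([[0.1, 0.4], [0.6, 0.2]]))
```

In a bypass mode of the kind described, a learned model would take the place of `run_isp` as a whole, which is why its output no longer has to be a conventional RGB frame.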

Xiaomi’s Mi 14 Ultra, meanwhile, has begun shipping with a proprietary “Compute-Optics” mode in its MIUI 16 beta that replaces the traditional demosaic step with a learned diffusion model trained on millions of raw-RGB pairs. Early benchmarks from TechInsights demonstrate that in low-light conditions, this approach can achieve comparable detail retention to multi-frame stacking while reducing power draw by 22%—a critical advantage as sustained ISP workloads have long contributed to thermal throttling during video capture.

What This Means for Software Developers

The implications extend far beyond pixel peeping. By exposing raw sensor data to software-defined pipelines, smartphone OEMs are effectively turning the camera into a general-purpose computational sensor, opening the door for applications that treat imaging not as an end-user feature but as a modality for machine perception. Think industrial inspection apps that use phase-shift analysis to detect micro-fractures in materials, or agricultural tools that infer nitrogen levels from leaf reflectance patterns captured via modified Bayer filters.

This shift also introduces new risks. As Android’s Camera2 API evolves to support neural bypass modes, developers will need to grapple with fragmented hardware abstraction layers, with each vendor implementing its own NPU-friendly data format and model execution contract. Unlike the relatively standardized ISP output (YUV or RGB frames), the output of a neural camera pipeline could be anything: a latent vector, a depth-aware feature map, or even a semantic segmentation mask. Standardization efforts are underway via the Khronos Group’s Neural Camera Extension, but adoption remains uneven.
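One way an app might cope with that variety is to wrap each possible output in an explicit type and branch on it, rather than assuming a frame. The sketch below is hypothetical throughout: the class names, fields, and the `describe` helper are invented for illustration and do not correspond to any vendor HAL, Camera2 type, or Khronos definition.

```python
# Hypothetical sketch: modelling heterogeneous neural-pipeline outputs
# as a tagged union. None of these types exist in a real camera API.
from dataclasses import dataclass
from typing import List, Union


@dataclass
class LatentVector:
    values: List[float]


@dataclass
class DepthMap:
    depth_m: List[List[float]]  # assumed unit: metres per pixel


@dataclass
class SegmentationMask:
    labels: List[List[int]]     # assumed: one class id per pixel


PipelineOutput = Union[LatentVector, DepthMap, SegmentationMask]


def _shape(grid):
    """Return (height, width) of a rectangular list of lists."""
    height = len(grid)
    width = len(grid[0]) if height else 0
    return height, width


def describe(output: PipelineOutput) -> str:
    """Summarize an output, failing loudly on anything unrecognized."""
    if isinstance(output, LatentVector):
        return f"latent[{len(output.values)}]"
    if isinstance(output, DepthMap):
        h, w = _shape(output.depth_m)
        return f"depth[{h}x{w}]"
    if isinstance(output, SegmentationMask):
        h, w = _shape(output.labels)
        return f"mask[{h}x{w}]"
    raise TypeError(f"unsupported pipeline output: {type(output).__name__}")


if __name__ == "__main__":
    print(describe(LatentVector([0.1, 0.2, 0.3])))
    print(describe(DepthMap([[1.0, 2.0]])))
```

The explicit `TypeError` is the point of the design: an app that silently treats an unknown vendor format as a frame is harder to debug than one that refuses it.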

“We’re not just talking about better selfies—we’re talking about the camera becoming a programmable sensor node in a larger AI system. If every OEM defines their own neural interface, we’ll end up with a dozen incompatible ways to access what should be a universal stream: photons.”

The Platform Lock-In Trap

This transition threatens to deepen the divide between open and closed ecosystems. Apple, which has long maintained tight control over its ISP and image processing pipeline, has not yet exposed similar neural bypass capabilities in iOS 18—but internal builds reviewed by 64Bits suggest a private framework called “CoreNeuralImage” is under testing, tightly coupled to the A18 Pro’s 35 TOPS NPU and restricted to entitlements held by Apple’s own Camera and Vision frameworks. Third-party access, if granted at all, would likely require entitlement approval and undergo strict runtime attestation—a model that favors Apple’s proprietary vision tools over independent innovation.

Contrast this with the Android side, where the NeuralPipe interface, while still vendor-specific, is at least exposed through the public HAL and accessible via standard NDK calls. This creates an ironic inversion: despite Apple’s historical reputation for hardware-software integration, its camera system may become *less* accessible to developers than Android’s in the era of AI-native imaging, precisely because of its reluctance to relinquish control over the image rendering pipeline.

What This Means for the Future of Photographic Truth

Beyond developer access lies a deeper, more philosophical concern: when the image you see is less a measurement of light and more a generative interpretation by an AI model trained on curated datasets, what does “photographic truth” mean? Recent studies from the IEEE Signal Processing Society have shown that even minor shifts in training data bias—such as over-representation of certain skin tones or lighting conditions—can lead to systematic rendering differences in neural camera pipelines that are indistinguishable from optical artifacts to the untrained eye.

This isn’t hypothetical. In March 2026, a group of computational photographers at MIT demonstrated that by subtly altering the loss function in a diffusion-based demosaic model, they could induce consistent color shifts in facial rendering—making certain phenotypes appear uniformly warmer or cooler across diverse lighting conditions. The changes were invisible in EXIF metadata and undetectable by conventional ISP benchmarks, yet perceptible in side-by-side comparisons. As ACM Queue noted in its April analysis, “We are entering an era where the camera does not record reality—it negotiates with it.”
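A side-by-side comparison of this kind can be made quantitative. The sketch below is not the MIT group's method, which the article does not describe; it is a simple stand-in that scores "warmth" as mean red minus mean blue and checks whether a candidate renderer shifts every scene in the same direction. The function names and the 0.02 threshold are assumptions for illustration.

```python
# Illustrative sketch: detecting a consistent warm/cool shift between
# a reference render and a candidate render of the same scenes.
# Images are lists of rows of (r, g, b) tuples with values in 0.0-1.0.

def mean_channel(image, idx):
    """Average one channel over every pixel in the image."""
    pixels = [px for row in image for px in row]
    return sum(px[idx] for px in pixels) / len(pixels)


def warmth(image):
    """Mean red minus mean blue; a higher value reads as warmer."""
    return mean_channel(image, 0) - mean_channel(image, 2)


def warmth_shift(reference, candidate):
    """Positive if the candidate is warmer than the reference."""
    return warmth(candidate) - warmth(reference)


def consistent_shift(pairs, threshold=0.02):
    """True if every (reference, candidate) pair shifts the same way
    by more than the threshold. Empty input counts as no evidence."""
    shifts = [warmth_shift(ref, cand) for ref, cand in pairs]
    if not shifts:
        return False
    return all(s > threshold for s in shifts) or all(s < -threshold for s in shifts)


if __name__ == "__main__":
    neutral = [[(0.5, 0.5, 0.5), (0.4, 0.4, 0.4)]]
    warmer = [[(0.55, 0.5, 0.45), (0.45, 0.4, 0.35)]]
    print(warmth_shift(neutral, warmer))
    print(consistent_shift([(neutral, warmer), (neutral, warmer)]))
```

A check like this only flags a global colour bias; it says nothing about whether the shift tracks skin tone or lighting, which would need labelled regions and a far more careful protocol.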

“The danger isn’t that AI-enhanced photos look fake. It’s that they look *too* real—convincingly plausible, yet subtly altered in ways that serve neither the user nor the scene, but the priors of a model trained elsewhere.”

The 30-Second Verdict

Smartphone cameras are not just getting better—they are being redefined. The era of the ISP as the gatekeeper of image quality is ending, replaced by a software-defined pipeline where AI models, not optics, determine the final render. This shift unlocks powerful new capabilities for developers and enables remarkable efficiency gains—but it also fragments access, concentrates power in the hands of OS vendors, and erodes the assumption that a photograph is a faithful trace of light. For users, the challenge will be discerning enhancement from manipulation. For developers, it will be navigating a landscape where the camera is no longer a sensor, but a service—and the terms of that service are still being written.