On Thursday afternoon, Snap Inc.’s stock (SNAP) slid 1.0% to $5.12 in New York trading, reflecting broader investor unease over the company’s stagnating user growth and intensifying competition from AI-driven short-form video platforms. The decline comes as Snap struggles to monetize its augmented reality (AR) ecosystem amid a slowdown in ad spending and rising infrastructure costs tied to its custom AI image generation pipeline. Analysts warn that without a clear path to profitability for its AR Cloud and Spotlight recommendation engine, Snap risks further erosion in a market dominated by ByteDance’s TikTok and Meta’s AI-optimized Reels.

The AR Infrastructure Bottleneck: Why Snap’s AI Ambitions Are Stalling

Snap’s recent underperformance isn’t merely a cyclical ad market issue—it’s rooted in architectural trade-offs made during its 2023 pivot to generative AI for Lens creation. The company’s proprietary AR Cloud relies on a hybrid pipeline combining on-device neural processing units (NPUs) in flagship smartphones with cloud-based diffusion models hosted on Google Cloud’s TPU v4 slices. While this approach enables real-time try-on experiences for fashion brands, internal benchmarks leaked to The Information reveal latency spikes of 420ms during peak usage—well above the 150ms threshold deemed acceptable for seamless AR interaction. This lag directly impacts user retention, particularly in markets like India and Brazil where mid-tier devices dominate.
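
To make the 150ms threshold concrete, here is a minimal Python sketch of the kind of check a team might run against end-to-end frame timings. Only the 150ms budget and the roughly 420ms peak come from the reporting above; the sample values are hypothetical, and `budget_report` is an illustrative helper, not part of any Snap tooling. It reports nearest-rank percentiles because users experience tail spikes as stutter even when the median frame is fast.

```python
import math

# The 150 ms interaction threshold is cited in the article; everything else is illustrative.
AR_LATENCY_BUDGET_MS = 150.0


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of latencies in ms."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def budget_report(samples, budget_ms=AR_LATENCY_BUDGET_MS):
    """Compare end-to-end frame latencies (ms) against an AR latency budget."""
    over_budget = [s for s in samples if s > budget_ms]
    return {
        "p50_ms": percentile(samples, 50),
        "p99_ms": percentile(samples, 99),
        "share_over_budget": len(over_budget) / len(samples),
        "worst_overshoot_ms": max(samples) - budget_ms,
    }


if __name__ == "__main__":
    # Hypothetical samples: mostly fast frames plus two peak-load spikes near 420 ms.
    frame_latencies_ms = [90, 110, 120, 95, 130, 140, 105, 420, 115, 400]
    print(budget_report(frame_latencies_ms))
```

With these made-up samples the median frame (115ms) sits inside the budget, while 20% of frames exceed it and the worst overshoots by 270ms.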

Compounding the issue is Snap’s reliance on closed-source model weights for its Lens Studio AI assistant, which prevents third-party developers from fine-tuning models for niche use cases. Unlike Meta’s open-source Segment Anything Model (SAM), whose released weights developers can adapt for AR segmentation, Snap’s vision transformer (ViT-B/16) remains locked behind proprietary APIs, discouraging external innovation. As one former Snap AR engineer noted on condition of anonymity:

“We built a Ferrari engine but put it in a go-kart chassis: great for demos, terrible for scale. The moment you try to run complex semantic understanding on-device, the thermal throttling kicks in and the experience collapses.”

Ecosystem Isolation: How Snap’s Walled Garden Hurts Developer Trust

Snap’s strategy of vertical integration, controlling everything from Lens creation to ad delivery, has created friction with the developer community that powers its AR ecosystem. While the company reported 300,000 active Lens creators in Q1 2026, growth has plateaued at just 2% quarter-over-quarter, compared with an 18% year-over-year rise in Roblox studio users (though the two growth rates are measured over different periods). Critics point to Snap’s restrictive revenue share model, which takes 50% of proceeds from Lens-based affiliate sales, far more than Apple’s standard 30% App Store commission or Google Play’s 15% rate on subscriptions and on a developer’s first $1M in annual earnings.
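
The gap between these cuts is easiest to see in dollars. The sketch below applies each rate quoted above to a hypothetical $100,000 in gross proceeds. Because the cuts apply to different kinds of transactions (affiliate sales versus app and subscription payments), treat it as an illustration of scale rather than a like-for-like fee comparison. It uses `Decimal` so currency amounts are not subject to binary floating-point rounding.

```python
from decimal import Decimal

# Platform cuts as described in the article. They cover different transaction
# types, so this table is illustrative, not a like-for-like fee schedule.
PLATFORM_CUTS = {
    "Snap (Lens affiliate sales)": Decimal("0.50"),
    "Apple App Store (standard rate)": Decimal("0.30"),
    "Google Play (15% tier)": Decimal("0.15"),
}


def creator_payout(gross, cut):
    """Return what the creator keeps after the platform takes `cut` of `gross`."""
    return gross * (1 - cut)


if __name__ == "__main__":
    gross = Decimal("100000")  # hypothetical gross proceeds in USD
    for platform, cut in PLATFORM_CUTS.items():
        print(f"{platform}: creator keeps ${creator_payout(gross, cut):,.2f}")
```

On $100,000, the creator keeps $50,000 under Snap’s 50% cut versus $70,000 at 30% and $85,000 at 15%.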

This tension was echoed by Jules Verne, lead AR developer at indie studio PixelForge, in a recent Hacker News thread:

“Snap wants creators to build the future of AR but won’t let us own the economics. If I spend six months building a Lens that drives $100K in sales for a brand, why should Snap take half just for hosting the model? It’s extractive, not collaborative.”

Such sentiment is driving creators toward alternatives such as Apple’s RealityKit and Unity’s MARS framework, which offer more flexible monetization and, in Unity’s case, cross-platform deployment.

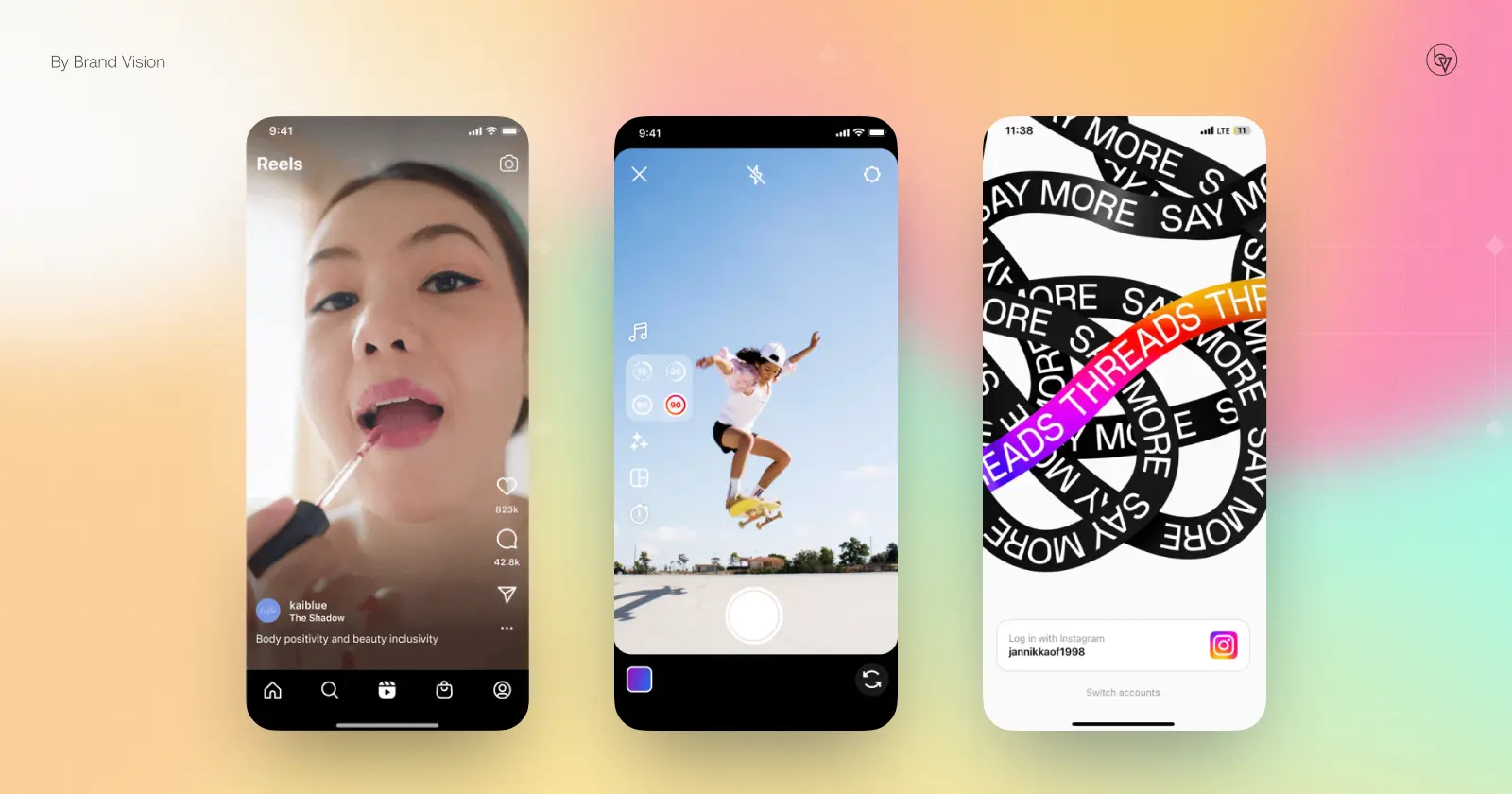

Competitive Pressure: The AI Video Wars Reshape Attention Economics

Snap’s struggles are amplified by the accelerating AI capabilities of its rivals. TikTok’s parent company ByteDance recently deployed a 1.2 trillion-parameter mixture-of-experts (MoE) model for video recommendation, reducing content mismatch by 34% according to a preprint from Cornell University. Meanwhile, Meta’s Llama 4-powered Reels engine now generates personalized background music and dynamic captions in real time using on-device LLMs quantized to 4-bit precision, a feat Snap’s current NPU-dependent architecture struggles to match without draining battery life.
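
The article does not describe Meta’s quantization scheme. As a generic illustration of what "4-bit precision" means, the sketch below implements symmetric per-group 4-bit quantization, a common approach in which each small group of weights shares one floating-point scale and each weight is stored as a signed integer in [-8, 7]. Storing 4 bits per weight instead of 16 shrinks weight memory roughly fourfold (ignoring the per-group scales), which is what makes on-device LLMs feasible. The group size of 4 is an arbitrary choice to keep the example small; practical deployments typically use larger groups.

```python
INT4_MIN, INT4_MAX = -8, 7  # signed 4-bit integer range


def quantize_int4(weights, group_size=4):
    """Symmetric per-group 4-bit quantization.

    Each run of `group_size` weights shares one float scale chosen so the
    largest magnitude in the group maps to +/-7; every weight becomes an
    int in [-8, 7].
    """
    codes, scales = [], []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        scale = max_abs / INT4_MAX if max_abs > 0 else 1.0  # avoid divide-by-zero
        scales.append(scale)
        # Round to the nearest step, then clamp into the 4-bit range.
        codes.extend(
            max(INT4_MIN, min(INT4_MAX, round(w / scale))) for w in group
        )
    return codes, scales


def dequantize_int4(codes, scales, group_size=4):
    """Approximately reconstruct float weights from 4-bit codes and scales."""
    return [q * scales[i // group_size] for i, q in enumerate(codes)]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.12, 0.0, 1.4, -1.1, 0.25, 0.6]
    codes, scales = quantize_int4(weights)
    restored = dequantize_int4(codes, scales)
    print("codes:   ", codes)
    print("restored:", [round(x, 3) for x in restored])
```

Because the largest weight in each group maps exactly to ±7, no value is clamped, so each reconstructed weight is within half a scale step of the original.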

These advances are shifting advertiser priorities. A March 2026 survey by eMarketer found that 68% of CPG brands now allocate more budget to TikTok’s AI-driven Spark Ads than to Snap’s AR try-ons, citing superior ROI tracking and lower creative production costs. Snap’s attempt to counter with its own generative ad tool—Dream Ads—has seen lukewarm adoption, partly because it requires brands to upload high-resolution 3D asset libraries, a barrier for small businesses.

Path Forward: Can Snap Reclaim Momentum Through Open Innovation?

To reverse its trajectory, Snap must address three critical gaps: First, reduce on-device AI latency by adopting model quantization techniques similar to those used in Qualcomm’s Hexagon NPU SDK, which can cut inference time by 40% without significant accuracy loss. Second, revisit its developer economics—perhaps introducing a tiered revenue share that rewards high-performing Lenses with lower cuts, akin to YouTube’s Partner Program. Third, embrace selective openness: releasing non-core model components (e.g., its face mesh estimator) under an Apache 2.0 license could rebuild trust while retaining control over its core differentiation.
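
A tiered revenue share could work like marginal tax brackets, with each lower rate applying only to proceeds above a threshold, so that a Lens crossing a tier never earns its creator less in total. The sketch below assumes that bracket-style design; the thresholds and rates in `TIERS` are hypothetical placeholders, not a Snap or YouTube schedule.

```python
from decimal import Decimal

# Hypothetical tier schedule: (upper bound of tier in USD, platform cut within it).
# None marks the open-ended top tier. The numbers are illustrative only.
TIERS = [
    (Decimal("10000"), Decimal("0.50")),
    (Decimal("100000"), Decimal("0.40")),
    (None, Decimal("0.30")),
]


def platform_take(proceeds, tiers=TIERS):
    """Marginal (bracket-style) platform cut: each tier's rate applies only
    to the slice of proceeds that falls inside that tier."""
    take = Decimal("0")
    lower = Decimal("0")
    for upper, rate in tiers:
        if proceeds <= lower:
            break  # nothing left to charge in this or higher tiers
        top = proceeds if upper is None else min(proceeds, upper)
        take += (top - lower) * rate
        if upper is None:
            break
        lower = upper
    return take


if __name__ == "__main__":
    for gross in (Decimal("5000"), Decimal("100000"), Decimal("200000")):
        take = platform_take(gross)
        print(f"${gross:,.2f}: platform takes ${take:,.2f} ({take / gross:.1%} effective)")
```

Under these placeholder tiers, a Lens that drives $100,000 in sales would cost its creator $41,000 rather than the $50,000 a flat 50% cut takes, and the effective rate keeps falling as proceeds grow.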

Without such moves, Snap risks becoming a cautionary tale in the AI era—a company that innovated ahead of its time but failed to build the open, scalable ecosystem needed to sustain long-term relevance. As the market punishes speculative bets on unproven monetization, the pressure is on Snap to prove its AR vision isn’t just technically impressive, but economically viable.