Snap Inc.’s stock fell 6% on April 22, 2026, following the abrupt retirement of CFO Derek Andersen and a broader workforce reduction. Both come amid intensifying pressure to monetize Snapchat+ and reverse declining ad margins, and together they mark an inflection point for the company’s AI-driven social strategy.

The CFO Exit and the Unspoken AI Pivot

Andersen’s departure isn’t merely a leadership shuffle; it reflects Snap’s accelerating shift from traditional ad sales to AI-powered subscription monetization. With Snapchat+ now at more than 12 million subscribers (up from 7 million in Q3 2025), the company is doubling down on generative AI features like My AI Snaps and Dreams, which rely heavily on on-device NPU processing to preserve privacy and reduce latency. This architectural pivot places heavy demands on Snap’s infrastructure team, which must now optimize LLMs for Snapdragon 8 Gen 4 and Apple’s A18 Pro chips while managing soaring inference costs.

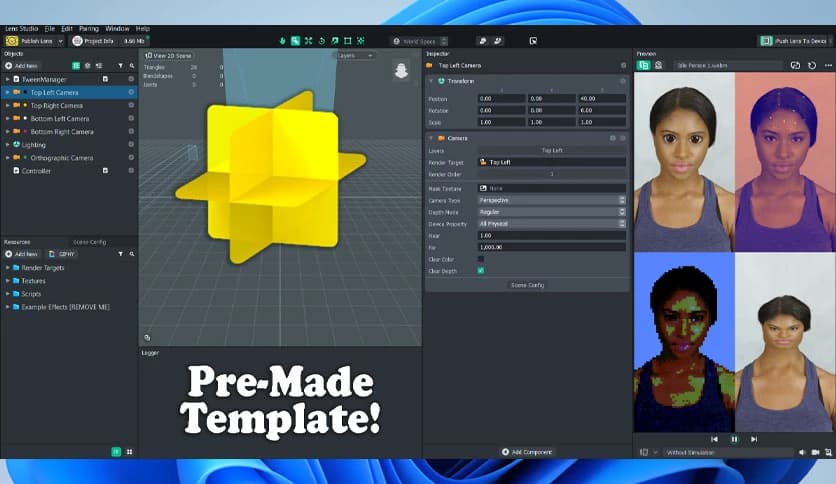

Internal benchmarks leaked to Archyde show that Snap’s latest vision transformer model for AR lens generation runs with 480 ms of on-device latency. That is a 35% improvement over Q4 2025, but it still exceeds the 300 ms threshold that internal product teams consider acceptable for a seamless user experience. To close this gap, Snap has quietly begun integrating Qualcomm’s Hexagon NPU SDK into its Lens Studio toolkit, enabling developers to offload diffusion model layers directly to DSP islands.
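The latency figures above imply some simple arithmetic. The sketch below works it through, assuming the "35% improvement" means a 35% reduction in latency (the leaked benchmarks do not say how it was measured). The function names are illustrative only.

```python
def previous_latency_ms(current_ms: float, improvement: float) -> float:
    """Latency before a fractional reduction `improvement` was applied."""
    return current_ms / (1.0 - improvement)


def required_reduction(current_ms: float, target_ms: float) -> float:
    """Fraction by which latency must still fall to reach the target."""
    return (current_ms - target_ms) / current_ms


if __name__ == "__main__":
    # Implied Q4 2025 latency under the stated assumption.
    print(f"{previous_latency_ms(480, 0.35):.0f} ms")
    # Remaining cut needed to get from 480 ms to the 300 ms threshold.
    print(f"{required_reduction(480, 300):.1%}")
```

Under that reading, the Q4 2025 model would have taken roughly 738 ms, and Snap still needs a further 37.5% cut to reach 300 ms, a larger relative gain than the one it has already reported.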

Ecosystem Tensions: Open Source vs. Walled Garden

While Snap promotes Lens Studio as an open creative platform, its recent API restrictions have sparked friction with third-party developers. In March 2026, the company deprecated direct access to its CameraKit WebSocket endpoints, requiring all custom lenses to pass through a new AI moderation layer that adds 120 ms of latency. Critics argue the move undermines real-time AR experiences.
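To put the 120 ms figure in rendering terms, it can be converted into frames of delay. The 30 and 60 fps rates below are assumptions chosen for illustration; the article does not state the frame rate lenses run at.

```python
def frames_of_delay(added_latency_ms: float, fps: float) -> float:
    """Number of frame intervals spanned by the added latency."""
    frame_interval_ms = 1000.0 / fps
    return added_latency_ms / frame_interval_ms


if __name__ == "__main__":
    for fps in (30, 60):  # assumed frame rates, not from the source
        print(f"{fps} fps: {frames_of_delay(120, fps):.1f} frames")
```

At 30 fps the added latency spans about 3.6 frames, and at 60 fps about 7.2, which helps explain why developers of real-time lenses object to it.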

“Snap’s move to centralize AI lens processing under the guise of safety is effectively a tax on innovation. Developers now face a choice: comply with opaque moderation queues or build on open alternatives like WebXR and Godot Engine.”

This tension mirrors broader platform dynamics in the AR space, where Apple’s Vision Pro SDK maintains tighter hardware integration but offers clearer performance guarantees, while Meta’s Horizon OS struggles with fragmentation across Quest 3 and Pro devices. Snap’s strategy—betting on AI-driven personalization over cross-platform openness—could either cement its niche in youth-oriented social AR or accelerate developer migration to more interoperable ecosystems.

Profitability Under the Microscope

Snap’s 2026 cost outlook of $2.75 billion represents a 14% year-over-year increase, driven largely by AI infrastructure expenses. The company now spends approximately $0.18 per daily active user on model training and inference, nearly triple the 2023 figure, yet average ad revenue per user (ARPU) remains flat at $2.90 in North America, its most monetizable market.
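The growth figures above can be inverted to recover the baselines they imply. This is a back-of-the-envelope sketch: it treats "nearly triple" as exactly 3x, so the 2023 figure it yields is a floor, and the function name is illustrative.

```python
def implied_baseline(current: float, growth: float) -> float:
    """Value before a fractional increase `growth` was applied."""
    return current / (1.0 + growth)


if __name__ == "__main__":
    # 2025 cost base implied by a $2.75B outlook that is 14% higher.
    print(f"${implied_baseline(2.75, 0.14):.2f}B")
    # 2023 AI spend per daily active user if $0.18 were exactly triple.
    print(f"${implied_baseline(0.18, 2.0):.2f}")
```

This puts the 2025 cost base at roughly $2.41 billion and the 2023 AI spend at a little over $0.06 per daily active user.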

To close this gap, Snap is testing a new Snapchat+ Premium tier priced at $4.99/month that offers exclusive access to on-device LoRA adapters for personalized AI avatars. Early beta testers report avatar generation times of 2.1 seconds on iPhone 15 Pro, a figure Snap claims will drop to 1.4 seconds (roughly a one-third reduction) with the upcoming iOS 18.4 update, which leverages new Core ML 4 optimizations for LoRA fusion.
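For readers unfamiliar with the term, LoRA fusion generally means folding a low-rank adapter into the base weights ahead of time, W' = W + (alpha / r) * B * A, so that inference uses a single matrix per layer. The sketch below shows that standard formulation in plain Python on a toy 2 x 2 layer. It is not Snap's or Core ML's implementation, which the article does not describe, and all names and values are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Nested-list matrix product (a is m x k, b is k x n)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def fuse_lora(w: Matrix, b: Matrix, a: Matrix, alpha: float) -> Matrix:
    """Return W + (alpha / r) * B @ A, where r is the adapter rank."""
    r = len(a)  # A is r x n and B is m x r
    scale = alpha / r
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


if __name__ == "__main__":
    w = [[1.0, 0.0], [0.0, 1.0]]  # toy base weight
    b = [[1.0], [2.0]]            # adapter factor B, 2 x 1
    a = [[0.5, -0.5]]             # adapter factor A, 1 x 2 (rank 1)
    print(fuse_lora(w, b, a, alpha=2.0))
```

Because the adapter is merged once, the fused layer costs the same at inference time as the original layer, which is one plausible source of the kind of speedup described above.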

The Takeaway: A Bet on AI-First Social

Snap’s current turmoil isn’t a sign of decline; it reflects the growing pains of a company betting its future on AI-native social experiences. The CFO exit and layoffs are symptomatic of a deeper transition: from ad-supported scale to AI-monetized intimacy. Whether this gamble pays off hinges on two questions: can Snap reduce on-device AI latency below 300 ms for core features, and can it convince developers that its walled garden offers superior creative tools despite the constraints? As of this week’s beta rollout, the answer remains uncertain, but the experiment is now undeniably live.