Meta Platforms Inc. has confirmed a workforce reduction affecting approximately 10% of its global employees, translating to roughly 7,200 roles cut across engineering, product, and infrastructure teams, as part of a broader efficiency drive announced internally this week and reported by manager magazin on April 24, 2026. The layoffs, which follow a similar 11% reduction in 2023, target mid-level management and duplicative functions within Reality Labs and AI infrastructure divisions, signaling a strategic pivot toward AI-driven automation in content moderation, ad targeting, and data center operations. This move comes amid intensifying competition from open-weight models and rising pressure to improve operating margins after years of heavy investment in the metaverse and large language model (LLM) research that has yet to yield proportional returns.

The AI Efficiency Play: How Meta Is Trading Headcount for Compute

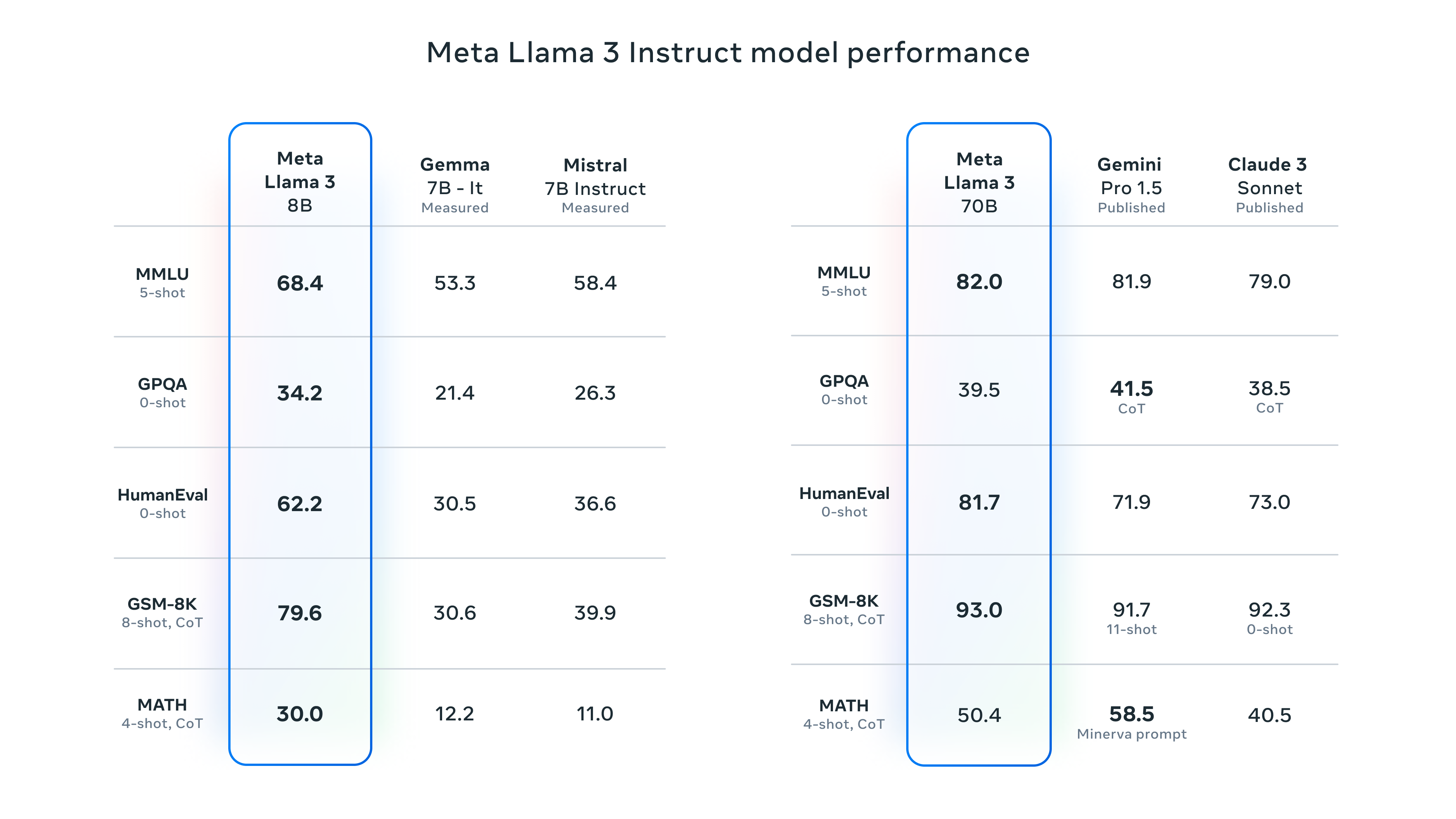

Internal memos reviewed by industry sources indicate that Meta is accelerating the deployment of its next-generation MTIA v3 accelerators—custom ASICs designed for inference-heavy workloads—to replace human-operated review pipelines in Facebook and Instagram’s content moderation systems. These chips, fabricated on TSMC’s N4P process and integrated into Zion-based servers, deliver up to 4x the throughput per watt compared to prior-generation GPUs when running Llama 3 70B models for harmful content detection. The shift reduces reliance on outsourced moderation teams and aligns with Meta’s public goal to automate 80% of low-risk policy violations by Q4 2026 using AI classifiers trained on synthetic data generated via its own Llama-based adversarial augmentation pipeline.

This automation push extends to ad delivery, where Meta’s Advantage+ suite now leverages Llama 4-based prediction models to optimize bid shading and creative selection in real time, cutting the need for manual campaign adjustments by performance marketing teams. Engineers working on the project note that the new pipeline reduces latency in ad auction decisions from 120ms to under 45ms on average, a critical improvement for competing with Google’s Performance Max and TikTok’s Smart+ in programmatic auctions. However, former Meta AI researchers warn that over-reliance on opaque, black-box optimization models risks creating feedback loops that amplify engagement bias, particularly in politically sensitive ad categories.

Ecosystem Ripple Effects: Third-Party Developers and the Open-Source Counterweight

The layoffs have sparked concern among third-party developers who rely on Meta’s Graph API and Conversions API for ad attribution and social login integration. With fewer platform engineers maintaining legacy endpoints, deprecation warnings for older API versions (v12.0 and below) have increased in frequency, pushing developers toward Meta’s newer, more restrictive Graph API v19.0, which requires stricter data usage agreements and limits access to granular demographic insights. This tightening of API governance mirrors trends seen at Snap, where AI-driven automation led to the layoff of 1,000 employees—approximately 20% of its workforce—as reported by manager magazin in early April 2026, suggesting a broader industry shift toward AI-mediated platform control.
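For developers affected by the version churn described above, one defensive habit is to pin the Graph API version in a single place and fail loudly when it falls into the deprecated range. The following is a minimal sketch, not Meta's SDK: the helper names (`parse_version`, `is_deprecated`, `graph_url`) are hypothetical, and the cutoff simply encodes the "v12.0 and below" range mentioned in the text.

```python
from urllib.parse import urlencode

# Assumption: mirrors the "v12.0 and below" range named in the article.
DEPRECATED_AT_OR_BELOW = (12, 0)


def parse_version(version: str) -> tuple[int, int]:
    """Turn a string such as 'v19.0' into a comparable tuple (19, 0)."""
    major, minor = version.lstrip("v").split(".")
    return int(major), int(minor)


def is_deprecated(version: str) -> bool:
    """True when the version sits in the range drawing deprecation warnings."""
    return parse_version(version) <= DEPRECATED_AT_OR_BELOW


def graph_url(version: str, path: str, **params: str) -> str:
    """Build a version-pinned Graph API URL, refusing deprecated versions."""
    if is_deprecated(version):
        raise ValueError(f"Graph API {version} is in the deprecated range")
    query = f"?{urlencode(params)}" if params else ""
    return f"https://graph.facebook.com/{version}/{path.lstrip('/')}{query}"


# Hypothetical call: only the URL is built, no request is sent.
url = graph_url("v19.0", "me", fields="id,name")
```

Keeping the version string in one helper means a forced migration touches one constant rather than every call site.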

In response, open-source alternatives like the Mastodon-fediverse bridge project and the decentralized identity protocol Lens Protocol have seen a 35% uptick in developer sign-ups over the past quarter, according to data from GitHub’s Octoverse report. Notably, the Lens team recently published a benchmark showing that their ZK-proof-based social graph can achieve sub-second query latency for profile resolution using off-chain indexing on Filecoin, outperforming Meta’s internal social graph traversal times in synthetic tests by up to 60% when scaled to 10 million nodes. This highlights a growing divergence between centralized, AI-optimized platforms and privacy-first, verifiable alternatives gaining traction among developers wary of platform lock-in.

“We’re seeing a quiet exodus of senior infrastructure engineers from Meta to startups building on open LLM runtimes like vLLM and TensorRT-LLM. The appeal isn’t just compensation—it’s the ability to ship features without navigating six layers of AI ethics review just to update a caching layer.”

Technical Trade-Offs: Latency, Cost, and the Hidden Tax of Automation

While Meta claims its AI-driven efficiency initiatives will save over $8 billion annually by 2027, independent analysts at SemiAnalysis estimate that the full-stack cost (MTIA v3 depreciation, data labeling for synthetic training sets, and continuous model retraining) could offset 40% of those savings in the first two years. The company’s reliance on proprietary model weights also creates a barrier to external audit, raising concerns among regulators in the EU and India about algorithmic accountability under the EU’s AI Act and India’s Digital Competition Bill, respectively.
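The arithmetic behind those two figures can be made explicit. The sketch below treats the $8 billion as a floor (Meta's claim is "over" that amount) and assumes the 40% offset applies evenly to the annual figure, which the analysts' estimate as quoted does not spell out. The function name is illustrative.

```python
CLAIMED_ANNUAL_SAVINGS_BN = 8.0  # from the text: "over $8 billion annually"
OFFSET_FRACTION = 0.40           # from the text: costs could offset 40%


def net_savings_bn(gross_bn: float, offset_fraction: float) -> float:
    """Savings left after a fraction of them is consumed by new costs."""
    return gross_bn * (1.0 - offset_fraction)


net = net_savings_bn(CLAIMED_ANNUAL_SAVINGS_BN, OFFSET_FRACTION)
print(f"Net annual savings: ${net:.1f} billion")  # prints 4.8
```

Under these assumptions, roughly $3.2 billion of the claimed annual savings would be absorbed by the automation stack itself, leaving about $4.8 billion.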

From a cybersecurity standpoint, the increased automation of content policy enforcement introduces new attack surfaces. Adversarial researchers have demonstrated that Llama-based classifiers can be bypassed using Unicode normalization exploits and adversarial suffixes that survive distillation into smaller models, a vulnerability class Meta has yet to address in its public model cards. As one red team lead at a Fortune 500 tech firm noted off the record:

“When you replace human judgment with a statistical approximation trained on synthetic data, you don’t eliminate bias—you just make it harder to trace. And when the model fails silently, the damage scales faster than any human team could contain.”
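To make the Unicode normalization bypass mentioned above concrete, here is a minimal sketch using a toy keyword filter as a stand-in for a classifier. It does not reproduce Meta's pipeline or any Llama-based model; the blocklist term, the zero-width character set, and the function names are all illustrative assumptions. The point is only that text should be canonicalized before it reaches whatever model or rule set does the scoring.

```python
import unicodedata

# Illustrative set of invisible characters that can split a keyword.
ZERO_WIDTH = {"\u200b", "\u200c", "\u200d", "\u2060", "\ufeff"}

BLOCKLIST = {"forbidden"}  # hypothetical term standing in for a policy rule


def canonicalize(text: str) -> str:
    """Fold compatibility forms (NFKC), drop zero-width chars, casefold."""
    folded = unicodedata.normalize("NFKC", text)
    visible = "".join(ch for ch in folded if ch not in ZERO_WIDTH)
    return visible.casefold()


def naive_flag(text: str) -> bool:
    """Matches on the raw text, so look-alike code points slip through."""
    return any(term in text.lower() for term in BLOCKLIST)


def hardened_flag(text: str) -> bool:
    """Matches only after the input has been canonicalized."""
    return any(term in canonicalize(text) for term in BLOCKLIST)


# Fullwidth Latin letters sit 0xFEE0 above their ASCII counterparts.
fullwidth = "".join(chr(ord(c) + 0xFEE0) for c in "forbidden")
split_word = "for\u200bbidden"  # zero-width space inside the keyword

for sample in (fullwidth, split_word):
    print(naive_flag(sample), hardened_flag(sample))
```

Canonicalization of this kind handles only the encoding-level tricks; it does nothing against adversarial suffixes, which target the model rather than the text encoding.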

The Takeaway: Automation as a Double-Edged Sword in the AI Arms Race

Meta’s workforce reduction is not merely a cost-cutting measure but a calculated bet that AI can replace human labor in high-volume, repetitive tasks without degrading user experience or advertiser outcomes—at least in the short term. Yet the strategy hinges on unproven assumptions about the robustness of large language models in open-ended, adversarial environments and risks accelerating platform centralization at a time when decentralized alternatives are gaining technical credibility. For enterprise IT teams and third-party developers, the message is clear: build on Meta’s platforms at your own peril, and invest in open, portable abstractions now before the next wave of AI-driven API deprecations leaves you stranded.