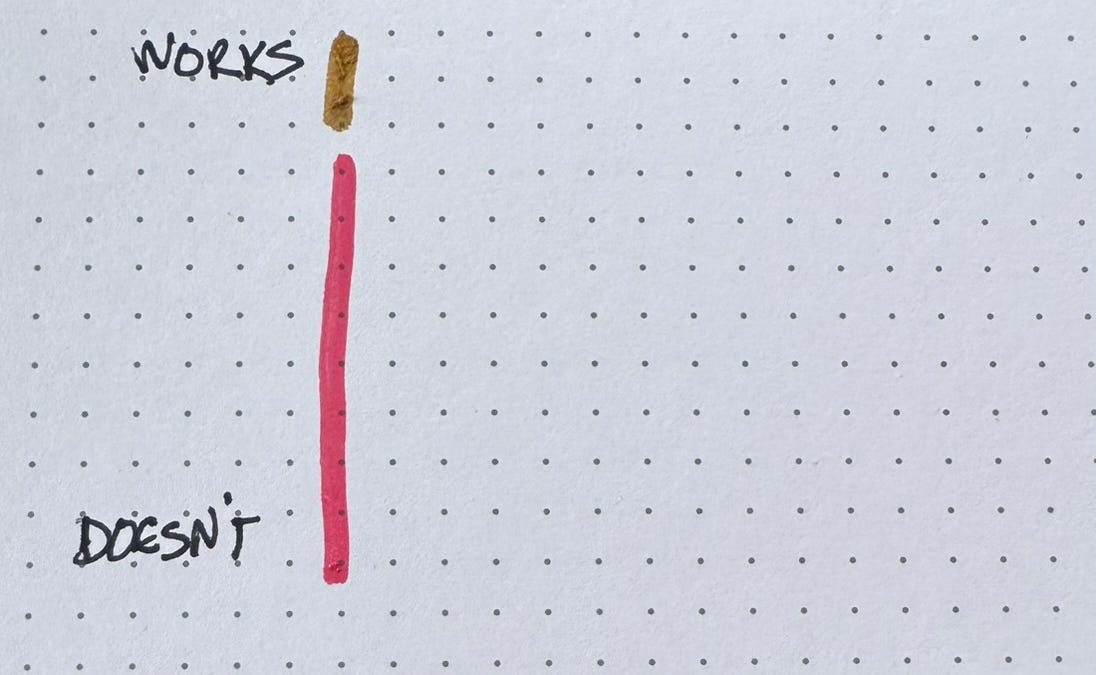

Kent Beck’s “Genie Tarpit” concept, resurfacing in developer discussions this week, isn’t a new idea – it’s a stark reminder of the fundamental tension in software design: prioritizing immediate functionality versus long-term maintainability. The core principle, visualized as a two-dimensional space of “works” versus “tidy,” forces a critical question: do you ship now with technical debt, or invest upfront in a cleaner, more robust foundation? This debate is particularly acute as we move deeper into an era of increasingly complex AI systems and rapidly evolving software architectures.

The Allure and Peril of Immediate Velocity

The “tarpit” metaphor is potent. It describes the situation where adding features feels productive in the short term, but each addition increases complexity and makes every future change harder than the last. We’ve all been there – a codebase that feels like wading through molasses. The pressure to deliver, especially in competitive markets, often pushes teams towards the “ship now, fix later” mentality. But “later” rarely arrives. The accumulation of this technical debt isn’t merely a matter of code quality; it directly slows developer velocity, increases bug rates, and ultimately stifles innovation. The current trend towards microservices, while offering benefits in terms of scalability and fault isolation, can *exacerbate* the tarpit problem if not carefully managed. Each microservice represents a potential point of untidiness, and coordinating changes across multiple services adds significant overhead.

What This Means for Enterprise IT

For large organizations, the Genie Tarpit represents a significant financial risk. The cost of refactoring a deeply entangled system can easily outweigh the initial savings from rapid, but sloppy, development.

The problem isn’t limited to legacy systems. Even projects built with modern frameworks like React or Angular can quickly descend into a tarpit if architectural principles are ignored. The ease of adding components and libraries can create a dependency hell, where understanding the interactions between different parts of the system becomes a Herculean task. This is where tools like static analysis and dependency management become crucial, but they are often treated as afterthoughts rather than integral parts of the development process.
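To make the dependency problem concrete, here is a minimal sketch of one check a static analysis step might run: finding a circular dependency between modules. The module names and the `DEPENDENCIES` graph are hypothetical, and real tools work from parsed imports rather than a hand-written dictionary.

```python
from typing import Dict, List, Optional, Set

# Hypothetical module graph: each key depends on the modules in its list.
DEPENDENCIES: Dict[str, List[str]] = {
    "checkout": ["cart", "payments"],
    "cart": ["catalog"],
    "payments": ["checkout"],  # closes a loop back to checkout
    "catalog": [],
}


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle as a list of module names, or None."""
    path: List[str] = []   # modules on the current depth-first path
    done: Set[str] = set()  # modules fully explored with no cycle found

    def visit(node: str) -> Optional[List[str]]:
        if node in done:
            return None
        if node in path:
            # The node is already on the path, so the path loops back to it.
            return path[path.index(node):] + [node]
        path.append(node)
        for dep in graph.get(node, []):
            cycle = visit(dep)
            if cycle:
                return cycle
        path.pop()
        done.add(node)
        return None

    for module in graph:
        cycle = visit(module)
        if cycle:
            return cycle
    return None


print(find_cycle(DEPENDENCIES))
```

Running a check like this in continuous integration, rather than occasionally by hand, is one way to treat dependency hygiene as part of the process instead of an afterthought.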

The Rise of Observability and the Quest for “Tidy”

Interestingly, the renewed focus on the Genie Tarpit coincides with a surge in interest in observability tools. Platforms like Honeycomb.io and New Relic are gaining traction because they provide developers with deeper insights into the behavior of their systems. This isn’t just about monitoring metrics; it’s about understanding the *relationships* between different components and identifying the root causes of performance bottlenecks and errors. Observability allows teams to proactively address potential sources of untidiness before they become major problems. The shift towards distributed tracing, for example, allows developers to follow a request as it flows through multiple microservices, revealing hidden dependencies and performance issues.
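The idea behind distributed tracing can be sketched in a few lines. The example below simulates three services in a single process and passes a trace identifier from one hop to the next. The header names `trace-id` and `parent-id`, the `handle` function, and the `COLLECTED` list are illustrative assumptions, not the API of any particular tracing product.

```python
import time
import uuid
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    service: str
    duration_ms: float = 0.0


COLLECTED: List[Span] = []  # stand-in for a tracing backend


def handle(service: str, headers: Dict[str, str], downstream: List[str]) -> None:
    """Simulate a service handling a request and calling the next service."""
    span = Span(
        trace_id=headers.get("trace-id") or uuid.uuid4().hex,
        span_id=uuid.uuid4().hex[:16],
        parent_id=headers.get("parent-id"),
        service=service,
    )
    start = time.perf_counter()
    if downstream:
        # Propagate the trace context so the next hop joins the same trace.
        child_headers = {"trace-id": span.trace_id, "parent-id": span.span_id}
        handle(downstream[0], child_headers, downstream[1:])
    span.duration_ms = (time.perf_counter() - start) * 1000
    COLLECTED.append(span)


# One request flowing gateway -> orders -> inventory.
handle("gateway", {}, ["orders", "inventory"])
for s in COLLECTED:
    print(s.service, s.trace_id, s.parent_id)
```

Because every span carries the same trace identifier and a pointer to its parent, the call chain can be reconstructed afterwards, which is how a hidden dependency between services becomes visible.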

However, observability is not a silver bullet. It requires a significant investment in instrumentation and a cultural shift towards data-driven decision-making. Simply collecting data isn’t enough; you need to be able to analyze it effectively and translate it into actionable insights. This is where AI and machine learning are starting to play a role, automating the process of anomaly detection and root cause analysis.
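As a rough illustration of what automated anomaly detection means at its simplest, the sketch below flags latency samples that sit far from the mean. This is a plain z-score baseline, not machine learning, and the `find_anomalies` function, the sample data, and the threshold are all assumptions chosen for the example.

```python
from statistics import mean, stdev
from typing import List


def find_anomalies(latencies_ms: List[float], threshold: float = 2.0) -> List[int]:
    """Return the indexes of samples more than `threshold` standard deviations from the mean."""
    mu = mean(latencies_ms)
    sigma = stdev(latencies_ms)
    if sigma == 0:
        return []  # all samples identical, nothing stands out
    return [i for i, x in enumerate(latencies_ms) if abs(x - mu) / sigma > threshold]


# Hypothetical request latencies in milliseconds, with one obvious spike.
samples = [100, 102, 98, 101, 99, 100, 103, 97, 500, 101]
print(find_anomalies(samples))
```

A single large outlier inflates the standard deviation it is measured against, so the threshold here is deliberately modest. Production systems tend to use more robust statistics or learned baselines.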

LLMs and the Automation of Code Refactoring

The emergence of large language models (LLMs) like OpenAI’s GPT-4 and Google’s Gemini presents a potentially transformative opportunity to address the Genie Tarpit. These models are increasingly capable of understanding and manipulating code, and can be used to automate tasks like code refactoring, bug fixing, and documentation generation. Several startups are now offering AI-powered tools that integrate with popular IDEs and version control systems, providing developers with real-time assistance in maintaining code quality.

However, it’s crucial to approach these tools with caution. LLMs are not perfect, and can sometimes introduce new bugs or security vulnerabilities. The quality of the output depends heavily on the quality of the input and the specific training data used to build the model. Relying too heavily on AI-powered tools can lead to a decline in developers’ own skills and understanding of the codebase.

“The biggest challenge with using LLMs for code refactoring isn’t the technical limitations of the models themselves, but the lack of trust and the difficulty of verifying the correctness of the generated code. Developers need tools that provide clear explanations of the changes made and allow them to easily revert to the original code if necessary.”

– Dr. Anya Sharma, CTO of CodeLens AI, speaking at the AI in Software Engineering Summit, April 2026.

The key is to use LLMs as *assistants*, not replacements, for human developers. They can handle the tedious and repetitive tasks, freeing up developers to focus on more complex and creative problems.
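One way to picture the "assistant, not replacement" workflow is a gate that shows the proposed change as a diff and falls back to the original when behavior differs. The sketch below assumes the refactoring arrives as a source string. `PROPOSED`, `explain`, `load`, and `accept_or_revert` are hypothetical names, and a handful of sample inputs is a sanity check, not a proof of correctness.

```python
import difflib
from typing import Callable, List

ORIGINAL = '''def total(prices):
    result = 0
    for p in prices:
        result = result + p
    return result
'''

# Hypothetical assistant output; in practice this would come from a tool.
PROPOSED = '''def total(prices):
    return sum(prices)
'''


def explain(original: str, proposed: str) -> str:
    """Render the change as a unified diff a reviewer can read."""
    return "".join(difflib.unified_diff(
        original.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile="original",
        tofile="proposed",
    ))


def load(source: str, name: str) -> Callable:
    """Compile a source string and return the named function from it."""
    namespace: dict = {}
    exec(source, namespace)  # sketch only: generated code belongs in a sandbox
    return namespace[name]


def accept_or_revert(original: str, proposed: str, name: str, cases: List[list]) -> str:
    """Keep the proposal only if it matches the original on every sample input."""
    old, new = load(original, name), load(proposed, name)
    same = all(old(case) == new(case) for case in cases)
    return proposed if same else original


print(explain(ORIGINAL, PROPOSED))
kept = accept_or_revert(ORIGINAL, PROPOSED, "total", [[1, 2, 3], [], [0.5]])
```

The diff addresses the need for an explanation of what changed, and the fallback to `ORIGINAL` is the easy revert. The final judgment still sits with the developer reading the diff.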

The Architectural Implications: From Monoliths to Modular Systems

The Genie Tarpit problem is intrinsically linked to software architecture. Monolithic applications, with their tightly coupled components, are particularly susceptible to becoming untidy. The larger the monolith, the harder it is to understand and modify. This is why there’s been a widespread move towards microservices and other modular architectures.

However, simply breaking an application into smaller pieces doesn’t automatically solve the problem. If the microservices are poorly designed or lack clear boundaries, they can quickly become a distributed tarpit. The key is to embrace principles of loose coupling and high cohesion. Each microservice should have a well-defined responsibility and should interact with other services through well-defined APIs.
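In code, a well-defined API between services often amounts to depending on a narrow interface rather than on another service's internals. Here is a minimal in-process sketch using Python's `typing.Protocol`. The `InventoryAPI`, `InMemoryInventory`, and `OrderService` names are invented for illustration, and a real deployment would put a network boundary where the method call is.

```python
from typing import Dict, Protocol


class InventoryAPI(Protocol):
    """The only thing the order service is allowed to know about inventory."""

    def reserve(self, sku: str, quantity: int) -> bool: ...


class InMemoryInventory:
    """One possible implementation; its storage details stay private."""

    def __init__(self, stock: Dict[str, int]) -> None:
        self._stock = dict(stock)

    def reserve(self, sku: str, quantity: int) -> bool:
        if self._stock.get(sku, 0) < quantity:
            return False
        self._stock[sku] -= quantity
        return True


class OrderService:
    """Single responsibility: decide whether an order can be confirmed."""

    def __init__(self, inventory: InventoryAPI) -> None:
        self._inventory = inventory

    def place_order(self, sku: str, quantity: int) -> str:
        return "confirmed" if self._inventory.reserve(sku, quantity) else "rejected"


orders = OrderService(InMemoryInventory({"widget": 2}))
print(orders.place_order("widget", 2))
print(orders.place_order("widget", 1))
```

Because `OrderService` sees only `reserve`, the inventory implementation can change its storage or move to another process without the order logic changing, which is the practical meaning of loose coupling.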

Event-driven architectures, where services communicate asynchronously through events, can also help reduce coupling and improve resilience. Messaging systems like Apache Kafka and RabbitMQ provide the infrastructure for building event-driven systems.
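The decoupling that events provide can be shown with a toy in-process bus. This is a sketch of the pattern only: the `EventBus` class and the topic names are hypothetical, and it does not use the Kafka or RabbitMQ client APIs, which add durability, partitioning, and delivery guarantees that a Python queue does not have.

```python
from collections import defaultdict, deque
from typing import Callable, DefaultDict, Deque, Dict, List, Tuple

Event = Dict[str, object]
Handler = Callable[[Event], None]


class EventBus:
    """Publishers and subscribers know topic names, never each other."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self._queue: Deque[Tuple[str, Event]] = deque()

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # Publishing only enqueues; the publisher does not wait for consumers.
        self._queue.append((topic, event))

    def drain(self) -> None:
        """Deliver queued events, including any published by handlers."""
        while self._queue:
            topic, event = self._queue.popleft()
            for handler in self._handlers[topic]:
                handler(event)


bus = EventBus()
sent_emails: List[str] = []


def create_invoice(event: Event) -> None:
    bus.publish("invoice.created", {"order_id": event["order_id"]})


def send_email(event: Event) -> None:
    sent_emails.append(f"invoice for order {event['order_id']}")


bus.subscribe("order.placed", create_invoice)
bus.subscribe("invoice.created", send_email)
bus.publish("order.placed", {"order_id": 42})
bus.drain()
print(sent_emails)
```

The code that publishes `order.placed` never references billing or email. Adding a new consumer means adding a subscription, not editing the publisher.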

The 30-Second Verdict

Prioritize code quality *from the start*. Technical debt is inevitable, but it should be managed proactively, not ignored. Observability and AI-powered tools can help, but they are not substitutes for sound architectural design and disciplined development practices.

The debate isn’t about choosing between speed and quality; it’s about finding the right balance. Shipping quickly is critical, but not at the expense of long-term maintainability. The Genie Tarpit serves as a cautionary tale – a reminder that neglecting the fundamentals of software design can have devastating consequences. The current wave of AI tools offers a potential path towards mitigating this risk, but only if used thoughtfully and strategically. Beck’s original writing remains the canonical source for the concept. Further discussion on the topic is available on Stack Exchange, highlighting the ongoing relevance of this concept. For a deeper dive into observability, explore the documentation for Honeycomb.