The GUARD Act is a proposed U.S. legislative framework aimed at protecting minors from “AI companions” by mandating strict age verification. However, its broad definitions threaten to block teenagers from basic AI tools and to force all adult users to surrender sensitive government IDs to access the open web.

Let’s be clear: the intent—stopping predatory AI bots from grooming vulnerable teens—is a necessary fight. But the execution of the GUARD Act is a textbook example of regulatory blunt-force trauma. As we head into this week’s pivotal vote, the bill is being framed as a safety shield. In reality, it’s a blueprint for a gated internet where anonymity is a relic and a government ID is the only valid API key for basic search functionality.

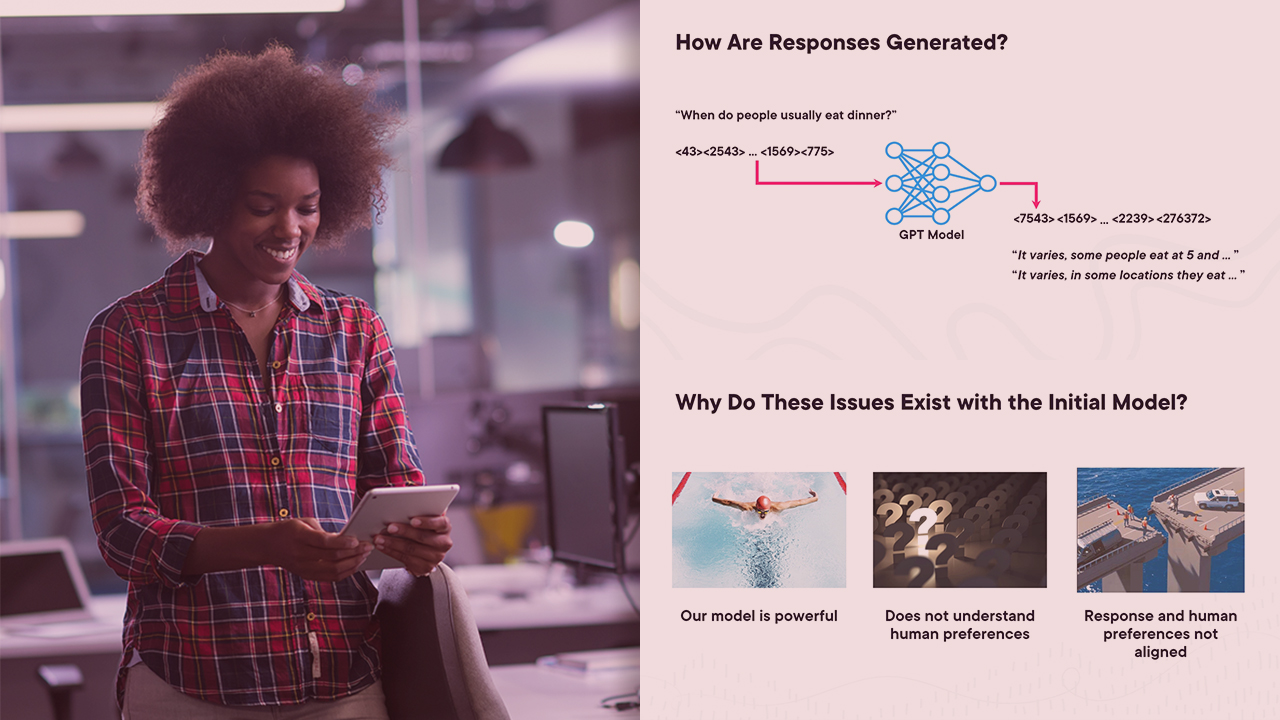

The core of the failure lies in the bill’s definitions. By labeling any system that generates non-pre-written responses as an “AI chatbot,” the legislation effectively captures almost every modern LLM (Large Language Model) implementation. From a technical standpoint, this is absurd. Whether a model is a lightweight 7B-parameter instance running on an NPU (Neural Processing Unit) in a laptop or a trillion-parameter behemoth in a GPU cluster, the underlying mechanism of token prediction is the same. The bill doesn’t distinguish between a specialized “companion” and a utility tool; it simply sees “generative text” and triggers a compliance alarm.
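To see how wide that net is, here is a minimal sketch that encodes the “non-pre-written responses” test as a predicate, as this article reads it. The `Deployment` type, its fields, and `is_ai_chatbot` are hypothetical illustrations, not statutory text or any real compliance library.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical description of an AI product (illustrative fields only)."""
    name: str
    parameters: int              # model size; never consulted by the test below
    runs_locally: bool           # laptop NPU vs. GPU cluster; also never consulted
    pre_written_responses: bool  # True only for fixed, scripted replies


def is_ai_chatbot(d: Deployment) -> bool:
    """Assumed reading of the definition: anything that generates responses
    not written in advance qualifies, regardless of size, hardware, or purpose."""
    return not d.pre_written_responses


deployments = [
    Deployment("7B model on a laptop NPU", 7_000_000_000, True, False),
    Deployment("Trillion-parameter cluster model", 1_000_000_000_000, False, False),
    Deployment("Scripted FAQ menu", 0, False, True),
]

for d in deployments:
    print(f"{d.name}: {is_ai_chatbot(d)}")
```

Under this reading, `parameters` and `runs_locally` never enter the decision: both generative models are flagged, and only the scripted FAQ menu escapes.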

The “Companion” Fallacy and the Death of Utility

The act introduces the term “AI companion,” defined as a bot that encourages interpersonal or emotional interaction. To a lawmaker, this sounds like a niche category of virtual girlfriends. To an engineer, it is a nightmare of ambiguity. Modern RLHF (Reinforcement Learning from Human Feedback) is specifically designed to make AI helpful, polite, and conversational. When a tutor bot says, “That’s a great question, let’s break down this calculus problem together,” it is arguably facilitating an interpersonal interaction.

Under the GUARD Act, that tutor bot is now a “companion.”
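The ambiguity becomes concrete the moment a compliance team tries to operationalize “encourages interpersonal or emotional interaction.” The sketch below assumes a naive keyword screen of the kind a cautious team might build; the cue list and `looks_like_companion` are invented for illustration and are not prescribed by the bill or any vendor. It runs the screen on the tutor reply quoted earlier and on a plain transactional reply.

```python
import re

# Hypothetical cues a cautious compliance team might treat as signs of
# "interpersonal or emotional interaction". Invented for illustration.
COMPANION_CUES = [
    r"\bgreat question\b",
    r"\blet['’]s\b",
    r"\btogether\b",
    r"\bi['’]m here for you\b",
]


def looks_like_companion(reply: str) -> bool:
    """Flag a reply if any cue matches (case-insensitive via lowercasing)."""
    text = reply.lower()
    return any(re.search(cue, text) for cue in COMPANION_CUES)


tutor = "That’s a great question, let’s break down this calculus problem together."
support = "Your return label for order 1042 has been emailed."

print(looks_like_companion(tutor))    # True
print(looks_like_companion(support))  # False
```

The polite, collaborative phrasing that RLHF deliberately encourages trips the screen as easily as a genuine companion persona would.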

Companies facing fines of $100,000 per violation will not gamble on the nuances of “emotional interaction.” They will simply implement the safest possible default: a hard block on anyone under 18. We are looking at a future where a high school junior cannot use a customer service bot to return a pair of defective headphones because they can’t prove their age to a third-party vendor.
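The incentive shows up in back-of-the-envelope arithmetic. The sketch below compares expected fine exposure from serving minors against the revenue those users bring in. Only the $100,000 per-violation figure comes from the discussion above; treating each minor who reaches a “companion” feature as one violation, the probability of being ruled a companion, and the revenue per user are all assumptions made up for illustration.

```python
FINE_PER_VIOLATION = 100_000  # per-violation figure cited above


def expected_fines(minor_users: int, p_flagged: float) -> float:
    """Expected liability, ASSUMING each minor who reaches a feature a
    regulator deems a 'companion' counts as one violation."""
    return minor_users * p_flagged * FINE_PER_VIOLATION


def should_block_minors(minor_users: int, p_flagged: float,
                        revenue_per_minor: float) -> bool:
    """Block minors if expected fines exceed the revenue they generate."""
    return expected_fines(minor_users, p_flagged) > minor_users * revenue_per_minor


# Hypothetical inputs: 10,000 teen users, a 1% chance the product is
# ruled a "companion", and $20 of annual revenue per teen.
print(expected_fines(10_000, 0.01))             # 10000000.0
print(should_block_minors(10_000, 0.01, 20.0))  # True
```

Because the user count cancels out, the rule reduces to blocking whenever revenue per minor falls below `p_flagged × $100,000`; even a 0.1% chance of the “companion” label implies $100 of exposure per teen.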

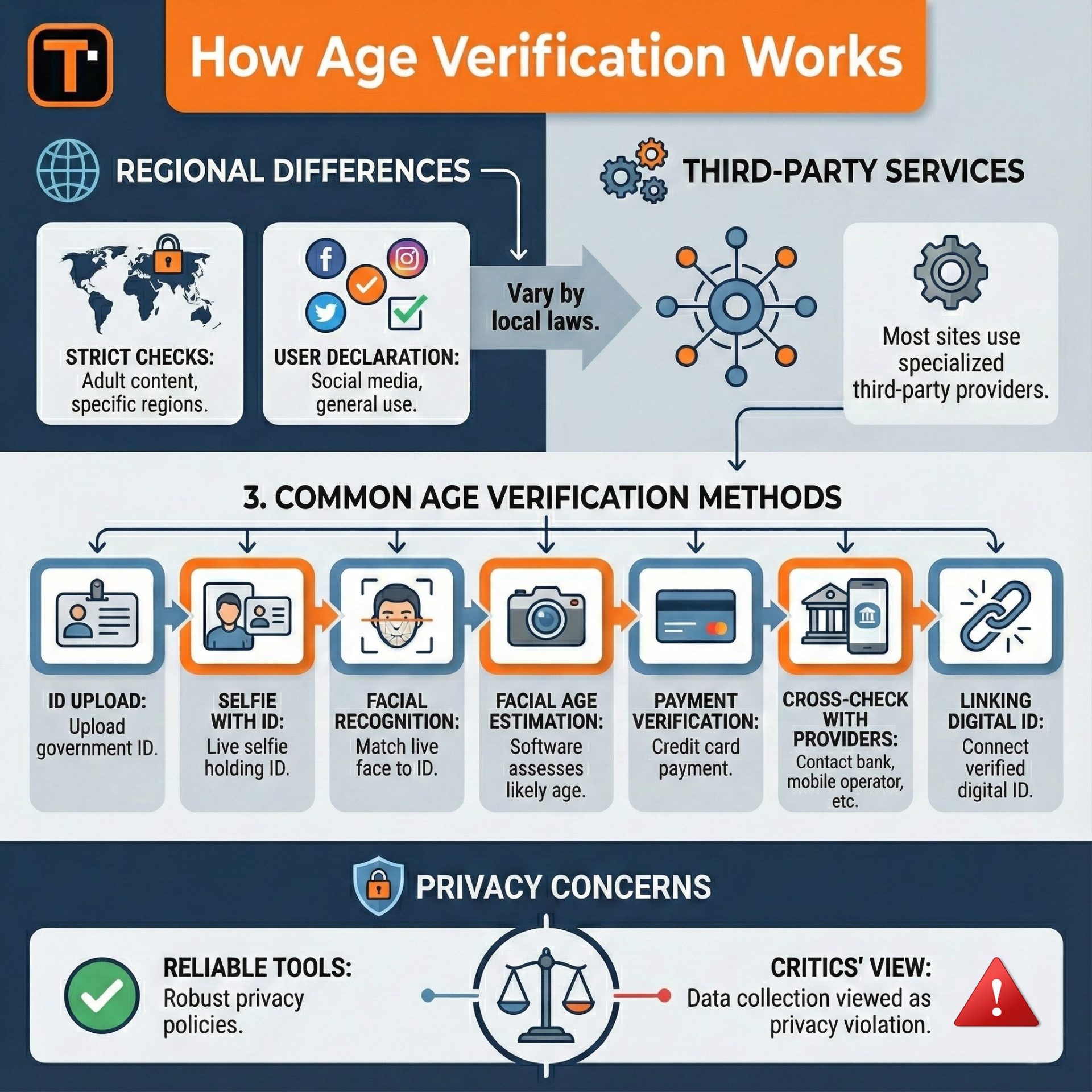

“The danger here isn’t just the restriction of access, but the creation of massive, centralized identity honeypots. Requiring ‘reasonable age verification’ essentially mandates that every small AI startup outsource their user’s most sensitive PII to a handful of identity providers, creating a systemic cybersecurity vulnerability.” — Marcus Thorne, Lead Security Architect at NexaShield

The Identity Stack: Trading Privacy for a False Sense of Security

The most insidious part of the GUARD Act is the requirement for “reasonable age verification.” This is a euphemism for the end of the pseudonymous web. We are moving away from simple checkboxes toward a regime of government ID uploads, biometric facial scanning, or financial record verification. This isn’t just a UX friction point; it’s a fundamental shift in the internet’s architecture.

From a security perspective, this is a catastrophe. Every single database that stores a copy of a user’s driver’s license becomes a Tier-1 target for state-sponsored actors and cybercriminals. While proponents suggest using NIST Digital Identity Guidelines to mitigate risk, the reality is that most companies will opt for the cheapest, most invasive third-party API available. This creates a “verification layer” that sits between the user and the tool, adding latency to every request and creating a single point of failure for user privacy.
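The “verification layer” described here can be pictured as middleware wrapped around the model endpoint. The sketch below is illustrative only: `gated_handler`, `verify_age`, `infer`, `VerifierUnavailable`, and the stand-in functions are hypothetical names, not any real vendor’s API. It shows the check running before the prompt reaches the model, and what happens when the third party goes down.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Request:
    user_id: str
    prompt: str


class VerifierUnavailable(Exception):
    """Hypothetical error raised when the third-party age-verification API is down."""


def gated_handler(verify_age: Callable[[str], bool],
                  infer: Callable[[str], str]) -> Callable[[Request], str]:
    """Wrap an inference function in a mandatory age check (illustrative only).

    The check runs before the prompt reaches the model, so every request
    depends on the external verifier being reachable.
    """
    def handle(req: Request) -> str:
        try:
            is_adult = verify_age(req.user_id)  # stands in for a network call to a vendor
        except VerifierUnavailable:
            return "503: verification service unavailable"
        if not is_adult:
            return "403: age verification required"
        return infer(req.prompt)
    return handle


# Hypothetical stand-ins for a vendor API and a model endpoint.
def fake_infer(prompt: str) -> str:
    return f"answer to: {prompt}"


def verifier_ok(user_id: str) -> bool:
    return user_id.startswith("adult")


def verifier_down(user_id: str) -> bool:
    raise VerifierUnavailable()


handle = gated_handler(verifier_ok, fake_infer)
print(handle(Request("adult-42", "Return my headphones")))  # answer to: Return my headphones
print(handle(Request("teen-7", "Explain derivatives")))     # 403: age verification required

outage = gated_handler(verifier_down, fake_infer)
print(outage(Request("adult-42", "Return my headphones")))  # 503: verification service unavailable
```

The last call illustrates the single point of failure: the model itself is healthy, yet an outage at the verifier makes the tool unusable for everyone, adults included.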

It is a compliance tax on the user’s identity.

The Compliance Moat: How Big Tech Wins

While the GUARD Act is framed as a way to curb the power of “dangerous AI,” it actually builds a massive moat around the incumbents. Consider the operational overhead for a small open-source developer hosting a model on GitHub or a boutique AI lab. They cannot afford the legal counsel to parse “emotional interaction” or the enterprise-grade infrastructure to securely handle government IDs.

- Enterprise Lock-in: Only companies like Google, Microsoft, and Meta have the existing identity infrastructure (OAuth 2.0, integrated account systems) to absorb these requirements without breaking their product.

- Open Source Erosion: Small-scale deployments of open-weight models (like Llama or Mistral) become legal liabilities for the host if they cannot verify every single user’s age.

- Innovation Stagnation: The “safe” move is to strip features. We will see “lobotomized” versions of AI tools for the general public, where any hint of personality is removed to avoid the “companion” label.

The Latency of Law: Where Policy Meets the Inference Layer

If we look at the actual request-response cycle of an AI tool, the GUARD Act inserts a mandatory authentication handshake before the prompt even reaches the inference engine. In a world where we are fighting for millisecond reductions in Time To First Token (TTFT), adding a mandatory, third-party identity check is a regression in performance. For developers building real-time AI agents, this is an unacceptable bottleneck.
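The cost to TTFT can be expressed as a simple latency budget. In the sketch below, `LatencyBudget` and every millisecond value are invented placeholders, not measurements of any real verifier or model, and it assumes the identity check runs on every request rather than being cached per session. The point is structural: a check placed serially in the request path adds its full latency to TTFT.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Hypothetical per-request latency components, in milliseconds."""
    network_ms: float       # client <-> inference server round trip
    prefill_ms: float       # time to process the prompt and emit the first token
    verify_ms: float = 0.0  # third-party identity check, if one is mandated

    def ttft_ms(self) -> float:
        # The identity check runs before the prompt reaches the inference
        # engine, so its latency adds directly to Time To First Token.
        return self.network_ms + self.verify_ms + self.prefill_ms


baseline = LatencyBudget(network_ms=40.0, prefill_ms=120.0)
gated = LatencyBudget(network_ms=40.0, prefill_ms=120.0, verify_ms=150.0)

print(baseline.ttft_ms())  # 160.0
print(gated.ttft_ms())     # 310.0
print(f"{gated.ttft_ms() / baseline.ttft_ms():.2f}x slower")  # 1.94x slower
```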

Moreover, the bill ignores the reality of “jailbreaking.” Any teenager with a basic understanding of prompt engineering, or with access to a local, uncensored model running on an NVIDIA RTX card, can bypass these gates. The GUARD Act doesn’t stop the “dangerous” AI; it only stops the “useful” AI from being accessible to the people who need it most for education and productivity.

We are trading the privacy of every American adult and the educational access of millions of teens for a regulatory checkbox that doesn’t actually solve the problem of harmful AI content. The solution to harmful AI is better alignment, robust safety filters at the model level, and targeted enforcement against bad actors, not a digital border crossing for every search query.

The internet was built on open protocols. The GUARD Act wants to turn it into a gated community. If this passes, the “open web” becomes a myth, and your government ID becomes the only way to ask a chatbot for help with your algebra homework.