Consumers are shifting toward decentralized “Home AI Nodes” as public opposition to hyperscale data centers grows. This trend leverages edge computing to move AI inference from the cloud to residential hardware, reducing latency and strain on the energy grid while creating a new multibillion-dollar consumer infrastructure market by mid-2026.

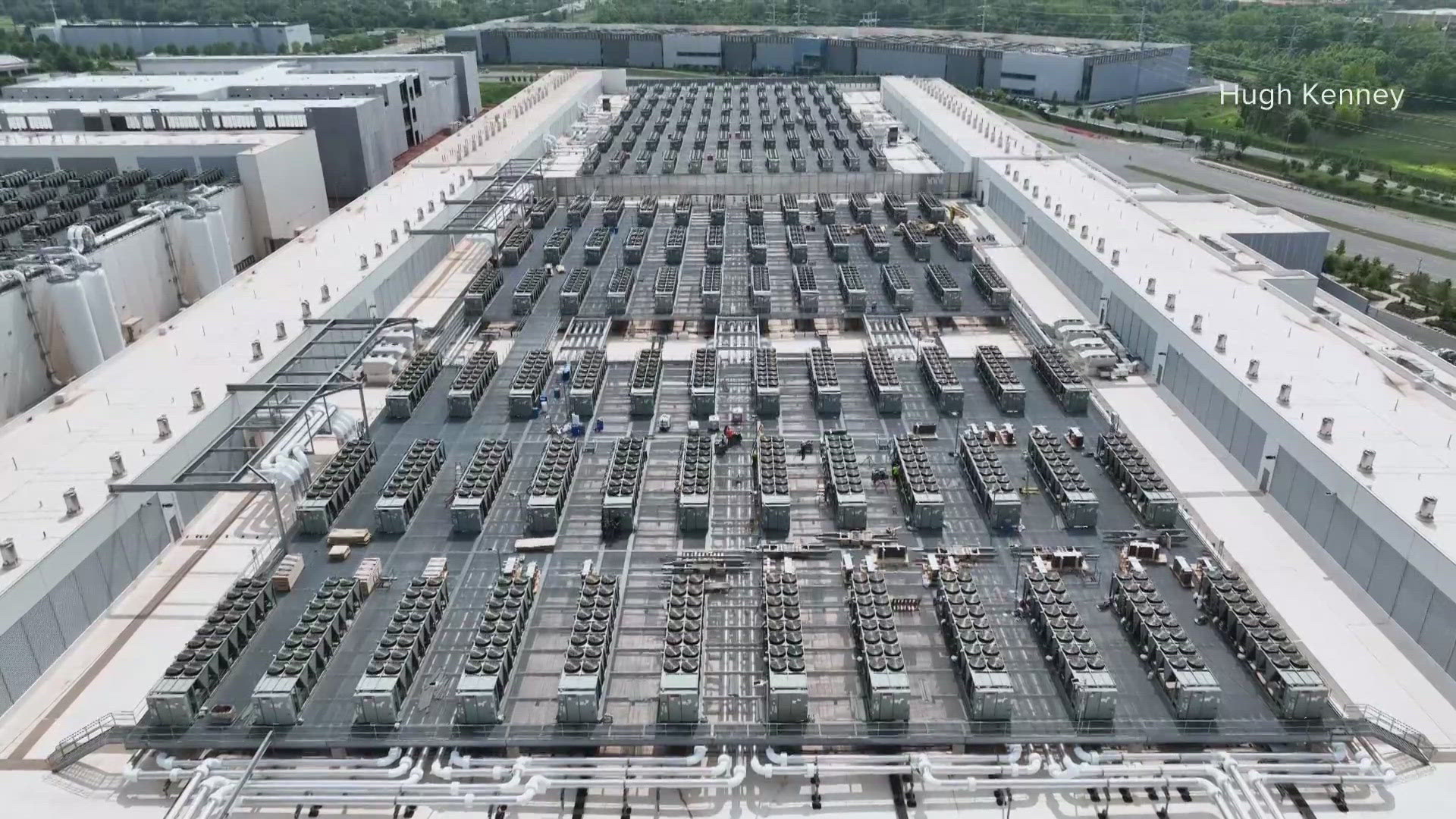

The narrative surrounding artificial intelligence has long been dominated by the “hyperscalers”—the behemoths like Microsoft (NASDAQ: MSFT) and Amazon (NASDAQ: AMZN)—who have spent billions on massive, water-hungry data centers. However, as we enter the second quarter of 2026, a critical inflection point has arrived. Municipal zoning boards and environmental regulators are increasingly blocking large-scale buildouts due to power grid instability and water scarcity. This regulatory friction is forcing a strategic pivot toward “Edge AI,” where the compute power is distributed into the home.

The Bottom Line

- CAPEX Shift: Capital expenditure is migrating from centralized corporate cloud builds to decentralized consumer hardware spending.

- Infrastructure Opportunity: Power management and cooling firms, specifically Vertiv (NYSE: VRT) and Schneider Electric (EPA: SU), are pivoting to residential-grade high-density power solutions.

- Asset Monetization: Homeowners are transitioning from passive energy consumers to “compute providers,” leasing idle GPU cycles back to the network.

The Municipal Gridlock and the Rise of the Home Node

For the past three years, the market assumed that the only way to scale AI was to build larger. But the math no longer adds up. In several U.S. jurisdictions, the lead time for grid interconnection has extended to five years, and energy costs for industrial-scale cooling have increased 18% year over year. This has created a “compute vacuum” that the residential market is now filling.

Here is the breakdown: instead of sending every prompt to a remote server in Virginia or Iowa, the industry is moving toward “local inference.” By installing a dedicated AI server (essentially a high-performance compute node) directly into the home, users eliminate the latency of the round trip to the cloud and bypass the regulatory bottlenecks hindering Big Tech. This shift is not merely a convenience; it is a financial necessity for maintaining the growth trajectories of AI services.

“The future of AI is not a few giant brains in the desert, but millions of small brains distributed across the edge. We are seeing a fundamental redistribution of where the actual work of intelligence happens.”

This transition is being accelerated by the release of specialized “inference-only” chips. While NVIDIA (NASDAQ: NVDA) dominated the training phase with H100s, the current market is shifting toward lower-power, high-efficiency chips designed for the home environment. This allows users to run sophisticated Large Language Models (LLMs) without upgrading their entire electrical panel to industrial standards.

The Hardware Pivot: From Gaming PCs to Residential Infrastructure

Taking control of the AI boom requires moving beyond the consumer GPU. The “Home Data Center” is becoming a structured architectural component of the modern residence, similar to how the HVAC system or the electrical breaker box is viewed. We are seeing the emergence of “AI-Ready” home certifications, which impact real estate valuations by adding a premium to homes with dedicated cooling and high-amperage power circuits.

The margins explain why chipmakers are paying attention. For companies like AMD (NASDAQ: AMD), the opportunity lies in the “prosumer” market. By selling integrated AI clusters that combine compute, storage, and liquid cooling, they can capture a higher margin per unit than they do in the highly competitive cloud server market.

To understand the financial trade-offs between continuing to rely on the cloud versus investing in home infrastructure, consider the following metrics:

| Metric | Cloud-Based Inference (SaaS) | Home-Edge AI Node | Variance |
|---|---|---|---|
| Monthly Recurring Cost | $20 – $200 (Subscription) | $15 – $40 (Electricity/Maint) | -70% to -90% |
| Data Latency | 50ms – 200ms | < 5ms | -97% |
| Upfront CAPEX | $0 | $2,500 – $10,000 | +$10k Max |
| Data Sovereignty | Third-Party Controlled | User Controlled | Absolute |
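
To make the trade-off in the table concrete, the break-even point can be sketched as the upfront cost divided by the monthly savings. This is a rough illustration, not a valuation model: the function name `break_even_months` and the way the table's ranges are paired into scenarios are assumptions, and only the dollar figures come from the table.

```python
import math


def break_even_months(upfront_cost, cloud_monthly, home_monthly):
    """Months until cumulative cloud fees exceed the home node's total cost.

    Returns math.inf when the home node does not save money each month.
    """
    monthly_savings = cloud_monthly - home_monthly
    if monthly_savings <= 0:
        return math.inf
    return upfront_cost / monthly_savings


# Hypothetical scenarios built from the table's ranges.
heavy_user = break_even_months(2_500, cloud_monthly=200, home_monthly=40)
light_user = break_even_months(2_500, cloud_monthly=20, home_monthly=15)

print(f"Heavy cloud user: {heavy_user:.1f} months to break even")
print(f"Light cloud user: {light_user:.1f} months to break even")
```

Under these assumptions the result is highly sensitive to the subscription tier being replaced: a user paying for the top tier recovers the hardware cost in a little over a year, while a user on the cheapest tier may never recover it within the life of the hardware.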

The Macroeconomic Ripple Effect on Energy and Real Estate

This decentralization is not without its risks. As compute moves into the home, the burden on the residential electrical grid increases. This creates a secondary boom for the “electrification” sector. We are seeing an increase in demand for home battery systems and solar integration to offset the 24/7 power draw of AI nodes. This trend is directly reflected in the SEC filings of residential energy providers, who are now listing “AI-driven load growth” as a primary driver for infrastructure investment.

At the same time, decentralization creates a new revenue stream for the homeowner. Through decentralized compute networks, users can “rent” their idle GPU power to researchers or startups. This transforms a luxury tech purchase into a yield-generating asset. If a home node generates $150 per month in rental income, the effective payback period on a $5,000 investment drops to under three years (about 33 months, before electricity and maintenance costs), assuming stable demand for inference.
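
The payback arithmetic can be checked with a short sketch. It uses a simple, undiscounted payback period; the function name `payback_years` and the optional `monthly_operating_cost` parameter are illustrative assumptions, and the $40 figure is borrowed from the upper end of the electricity and maintenance range in the comparison table.

```python
def payback_years(investment, monthly_income, monthly_operating_cost=0.0):
    """Simple (undiscounted) payback period in years."""
    net_monthly = monthly_income - monthly_operating_cost
    if net_monthly <= 0:
        raise ValueError("net monthly income must be positive")
    return investment / net_monthly / 12


# Gross case: the article's $150/month against a $5,000 node.
gross = payback_years(5_000, 150)

# Net case (assumption): subtract $40/month in running costs.
net = payback_years(5_000, 150, monthly_operating_cost=40)

print(f"Gross payback: {gross:.2f} years")
print(f"Net of running costs: {net:.2f} years")
```

On gross income the payback stays under three years. Once running costs at the top of the table's range are subtracted, it stretches past three and a half years, so the headline figure is best read as an optimistic bound.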

Here is the math on the broader market: the “Edge AI” hardware market is projected to grow at a CAGR of 22.4% through 2030. This growth is pulling demand away from traditional data center REITs (Real Estate Investment Trusts) and pushing it toward consumer electronics and home improvement sectors. For investors, the play is no longer just about the chipmakers, but about the “plumbing”—the cables, the cooling, and the power regulators.
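
For a sense of what a 22.4% CAGR implies, the compounding can be sketched as follows. The article gives no base market size, so this computes only a growth multiple; the four-year horizon (2026 through 2030) and the function names are assumptions made for illustration.

```python
import math


def growth_multiple(cagr, years):
    """Factor by which a market grows when compounding at `cagr` for `years`."""
    return (1 + cagr) ** years


def years_to_double(cagr):
    """Years of compounding at `cagr` needed to double in size."""
    return math.log(2) / math.log(1 + cagr)


# Assumed horizon: four years of compounding, 2026 through 2030.
multiple = growth_multiple(0.224, years=4)
doubling = years_to_double(0.224)

print(f"Growth multiple over 4 years: {multiple:.2f}x")
print(f"Years to double: {doubling:.2f}")
```

At that rate the market would slightly more than double over the assumed four-year window.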

The Trajectory: From Luxury to Utility

As we look toward the close of 2026, the “Home AI Node” will likely transition from a niche enthusiast product to a standard home utility. Much like the transition from dial-up modems to fiber-optic broadband, the ability to process data locally will become a prerequisite for participating in the AI economy. Companies that can simplify the installation and management of these systems—essentially “AI-as-an-Appliance”—will capture the largest share of this new market.

The strategic move for the individual is clear: investing in the physical infrastructure of the home now prevents reliance on the increasingly expensive and regulated cloud. For the institutional investor, the focus should shift toward the supply chain that enables this transition. Keep a close eye on Bloomberg’s energy indices and Reuters’ tech supply chain reports to track the movement of cooling components and power semiconductors.

The AI boom is moving out of the warehouse and into the living room. Those who control the hardware at the edge will control the flow of intelligence in the next decade.

Disclaimer: The information provided in this article is for educational and informational purposes only and does not constitute financial advice.