Techdirt’s historical retrospective for May 3rd–9th reveals a recurring cycle of digital friction. Across 2006, 2011, and 2016, the central conflict remained unchanged: the collision between state-sponsored surveillance, corporate copyright hegemony, and the fundamental human right to digital privacy and ownership in an increasingly networked world.

Looking back from the vantage point of May 2026, these archives aren’t just a trip down memory lane; they read like an early draft of the current AI and cybersecurity fights. The “fishing expeditions” of 2011 copyright trolls have a clear successor in the massive, automated scraping operations used to gather training data for Large Language Models (LLMs), often without consent. The 2016 battles over WhatsApp’s end-to-end encryption (E2EE) foreshadowed today’s debates over client-side scanning and post-quantum cryptography.

The patterns are systemic.

The Encryption Deadlock: From WhatsApp to Post-Quantum Reality

In 2016, the world watched Brazil block WhatsApp because the platform would not, and by its own account could not, hand over the message data a judge had demanded. At the time, the technical debate centered on the feasibility of “backdoors”: essentially a master key that governments could use to bypass encryption. The long-standing objection from cryptographers is that a backdoor for the “good guys” is also a front door for any adversary who steals the key or finds the weakness the backdoor introduces.

Today, the stakes have shifted from simple message interception to the threat of “Store Now, Decrypt Later” (SNDL) attacks, also known as “harvest now, decrypt later.” The concern is that well-resourced adversaries are recording encrypted traffic today, betting that a future quantum computer large enough to run Shor’s algorithm will solve the factoring and discrete-logarithm problems that RSA and ECC (Elliptic Curve Cryptography) rely on. This is why the industry is migrating to the post-quantum algorithms NIST standardized in 2024, such as ML-KEM for key establishment and ML-DSA for signatures.
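To make the SNDL idea concrete, here is a minimal sketch using textbook RSA with deliberately tiny primes. Trial division stands in for Shor’s algorithm (an assumption for illustration only: a real attack would need a large quantum computer, and real RSA uses much larger keys plus padding). The function names are hypothetical.

```python
from math import isqrt

def make_toy_keypair(p: int, q: int, e: int = 17):
    """Build a textbook RSA key pair from two (tiny) primes. Not secure."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)  # modular inverse of e (Python 3.8+)
    return (n, e), (n, d)

def factor_by_trial_division(n: int):
    """Classical stand-in for Shor's algorithm; only feasible for tiny n."""
    for candidate in range(2, isqrt(n) + 1):
        if n % candidate == 0:
            return candidate, n // candidate
    raise ValueError("n has no nontrivial factors")

def recover_private_exponent(public_key):
    """Anyone who can factor n can rebuild the private exponent."""
    n, e = public_key
    p, q = factor_by_trial_division(n)
    return pow(e, -1, (p - 1) * (q - 1))

# "Store now": the eavesdropper records the ciphertext and the public key.
public_key, private_key = make_toy_keypair(61, 53)
message = 65
harvested_ciphertext = pow(message, public_key[1], public_key[0])

# "Decrypt later": once factoring is feasible, the old ciphertext opens.
recovered_d = recover_private_exponent(public_key)
recovered_message = pow(harvested_ciphertext, recovered_d, public_key[0])
```

The point of the sketch is that the attacker never needs the private key at capture time; the recorded ciphertext stays vulnerable for as long as the underlying math problem might later become tractable.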

"The fundamental tension is that security is binary; you either have it or you don't. Any attempt to introduce 'exceptional access' inherently degrades the security of the entire system for every user," notes Bruce Schneier, a renowned cryptographer and cybersecurity analyst.

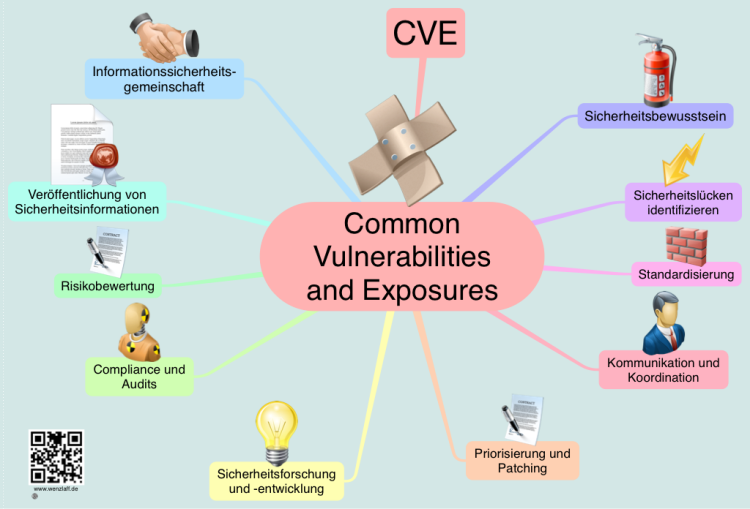

The 2016 struggle over FBI hacking warrants was a precursor to the modern “zero-day” market. The government’s reliance on “highly-questionable legal arguments” to justify hacking now sits alongside a lucrative exploit industry in which vulnerabilities are traded like commodities, through brokers as well as on the dark web, and are often withheld from the CVE (Common Vulnerabilities and Exposures) disclosure process to preserve offensive capabilities.

The Ownership Paradox: Copyright Trolls vs. Model Weights

The 2011 archives are dominated by “copyright trolls” like John Steele and the failure of ACTA. These entities used the legal system as a profit center, leveraging the fear of statutory damages to shake down individual users. In 2016, Techdirt asked, “Do You Own What You Own?” The answer has only become more cynical.

We have moved from the era of DRM (Digital Rights Management) to the era of the “Subscription Everything” model. In many cases you don’t own your software, your movies, or even the OS on your hardware; you license a temporary right to access them. This “rental economy” now extends to the very data used to train AI. The “fishing expeditions” of 2011 have been replaced by the “scraping expeditions” of 2024-2026, in which very large models are trained on the collective output of the internet, usually without the authors’ consent and on a fair-use theory that courts are still testing.

The legal battle has shifted from “did you download this song?” to “did this LLM ingest my codebase to generate a competing function?” The core issue remains the same: the attempt to apply 19th-century copyright concepts to 21st-century digital entropy.

From ‘Twenty Questions’ to Agentic AI: The Intelligence Leap

One of the most striking entries from 2006 is “Teaching Artificial Intelligence Through Twenty Questions.” The popular picture of AI at the time was still largely symbolic: rule-based systems built on “if-then” logic and decision trees, even though statistical machine learning was already well established. It was a rigid, deterministic approach to intelligence.
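A rule-based guesser of the kind described here can be sketched as a hand-written decision tree. This is an illustrative toy, not the system from the 2006 article; the tree contents and the names `Question`, `TREE`, and `play` are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Union

@dataclass
class Question:
    text: str
    yes: "Node"  # branch followed on a "yes" answer
    no: "Node"   # branch followed on a "no" answer

Node = Union[Question, str]  # a leaf is simply the guessed answer

# Every path through the tree is an explicit, human-readable rule.
TREE: Node = Question(
    "Is it alive?",
    yes=Question("Does it bark?", yes="dog", no="cat"),
    no=Question("Does it have wheels?", yes="car", no="rock"),
)

def play(node: Node, answer: Callable[[str], bool]) -> str:
    """Walk the tree, asking each question until a leaf is reached."""
    while isinstance(node, Question):
        node = node.yes if answer(node.text) else node.no
    return node

facts = {"Is it alive?": True, "Does it bark?": False}
guess = play(TREE, lambda question: facts[question])
```

The same answers always produce the same guess, and the reason for any guess can be read directly off the path taken, which is the transparency that rule-based systems offer.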

Fast forward to 2026, and we are dealing with what critics call “stochastic parrots”: models that don’t “know” facts but predict the next token in a sequence from probabilities learned over enormous text corpora. The jump from a “Twenty Questions” bot to an agentic AI capable of autonomous API calls and multi-step reasoning is a result of scaling up parameters and training data on top of the Transformer architecture, introduced in 2017. We’ve traded the transparency of rule-based systems for the “black box” of neural networks.
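Next-token prediction can be illustrated with a bigram counter, which is a drastic simplification: an actual LLM uses a Transformer network conditioned on long contexts, not a lookup table of word pairs. The corpus and function names below are invented for the sketch.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts

def next_token_distribution(counts, token):
    """Turn raw follower counts into a probability distribution."""
    followers = counts[token]
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()}

corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

# The model has no notion of what a cat is; it only knows that "cat"
# followed "the" more often than "mat" did in the training text.
dist = next_token_distribution(model, "the")
most_likely = max(dist, key=dist.get)
```

Even in this toy, the output is a set of probabilities rather than a rule, and scaling the same idea up to billions of learned parameters is what makes the reasoning behind any single prediction hard to inspect.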

The 2006 concern over “monitoring website visitors” has evolved into the pervasive tracking of the modern web. We no longer just track clicks; systems can now infer gaze, sentiment, and biometric markers, and the NPUs (Neural Processing Units) integrated directly into device silicon make real-time, on-device behavioral analysis practical.

- Privacy: The 2016 surveillance warnings were the “canary in the coal mine” for today’s AI-driven mass surveillance.

- Law: Copyright law is still lagging behind technology, shifting from targeting individuals to targeting data provenance.

- AI: We have moved from symbolic logic (2006) to probabilistic intelligence, sacrificing interpretability for power.

- Security: The fight for E2EE is now a race against quantum decryption.

The Architecture of Control

Whether it was the French “Three Strikes” Hadopi program in 2016 or the EU’s struggle with ACTA in 2011, the goal has always been the same: centralized control over decentralized networks. The internet was designed to be an end-to-end architecture, where the “intelligence” resides at the edges (the users) and the network simply moves packets. Every policy mentioned in these Techdirt archives represents an attempt to move that intelligence—and control—back to the center.
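The end-to-end idea can be sketched in a few lines: the endpoints do the meaningful work (here, encryption with a pre-shared one-time pad) while the network only forwards opaque bytes. This is a toy under stated assumptions: the pad is assumed to be shared out of band, and `relay` is a hypothetical stand-in for the network core rather than a real protocol.

```python
import secrets

def xor_bytes(data: bytes, pad: bytes) -> bytes:
    """One-time-pad style XOR; the pad must be at least as long as the data."""
    if len(pad) < len(data):
        raise ValueError("pad must be at least as long as the data")
    return bytes(a ^ b for a, b in zip(data, pad))

def relay(packet: bytes, log: list) -> bytes:
    """The 'dumb' network: forwards bytes unchanged, records what it saw."""
    log.append(packet)
    return packet

plaintext = b"meet at noon"
shared_pad = secrets.token_bytes(len(plaintext))  # assumed pre-shared by the two edges

network_log = []
ciphertext = xor_bytes(plaintext, shared_pad)  # sender edge encrypts
delivered = relay(ciphertext, network_log)     # core only moves the packet
received = xor_bytes(delivered, shared_pad)    # receiver edge decrypts
```

Everything the middle can observe is in `network_log`, and it holds only ciphertext. Policies that demand filtering or inspection in the core require moving logic, and usually keys, out of the edges and into `relay`.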

For those tracking the current trajectory of the “chip wars” and the push for open-source AI, these archives serve as a reminder that the hardware is just the substrate. The real war is over the protocols. Whether it’s the open-sourcing of weights or the implementation of IEEE standards for AI ethics, the struggle is to ensure that the tools of the future aren’t locked behind the same “three strikes” logic of the past.

The cycle continues. The tools change, the terminology evolves from “data retention” to “data lakes,” but the fundamental tension between the watcher and the watched remains the defining characteristic of the digital age.