As of April 17, 2026, viral TikTok videos falsely claiming that gasoline sold at German fuel stations is being diluted with water or cheaper additives have triggered widespread consumer panic, despite no supporting evidence from regulatory bodies or independent lab tests. The rumors, amplified by algorithmic recommendation engines and lacking credible sourcing, exploit public distrust in energy markets following recent geopolitical supply disruptions. Authorities including the Bundesanstalt für Geowissenschaften und Rohstoffe (BGR) and the ADAC have issued public rebuttals, confirming that fuel quality standards under DIN EN 228 remain strictly enforced through random sampling and spectroscopic analysis at refineries and retail outlets.

The Anatomy of a Digital Fuel Scare: How TikTok’s Algorithm Amplifies Baseless Claims

The current wave of misinformation follows a predictable pattern: short-form videos showing cloudy fuel in transparent containers, often paired with text overlays like “This is why your car is sputtering!” or “Stations are stealing your money.” These clips typically originate from unverified accounts with minimal follower history, yet gain traction through TikTok’s For You Page (FYP) algorithm, which prioritizes engagement over veracity. Unlike platforms such as X (formerly Twitter) or Reddit, which rely on follower graphs or community moderation, TikTok’s recommendation system surfaces content based on watch time and replay rates, metrics that emotionally charged, low-effort speculation can easily inflate. TikTok’s own transparency report confirms that content triggering strong emotional responses, particularly fear or outrage, receives disproportionate distribution, even when users flag it as misleading.

What makes this incident particularly concerning is the technical illiteracy embedded in the narrative. Gasoline’s appearance can vary due to temperature fluctuations, ethanol content (up to E10 in Germany), or minor sediment disturbance — none of which indicate adulteration. Yet, the videos present these normal visual changes as proof of fraud, bypassing basic fuel chemistry knowledge. This mirrors earlier disinformation cycles around electric vehicle battery fires and hydrogen refueling safety, where complex technical realities are reduced to sensationalist visuals.

Ecosystem Bridging: When Social Media Algorithms Undermine Critical Infrastructure Trust

The spillover effects extend beyond consumer anxiety. False fuel quality claims can hurt gas station revenues, strain emergency services with non-urgent vehicle inspections, and destabilize commodities markets through speculative hoarding. In 2025, a similar hoax in France led to a temporary 12% spike in diesel futures trading on the ICE exchange before being debunked by the UFIP. This reveals a growing vulnerability: as critical infrastructure becomes more digitized, from smart pumps with IoT telemetry to blockchain-based fuel provenance tracking, the human layer of trust remains susceptible to low-cost, high-impact disinformation campaigns.

The incident also highlights the asymmetric burden placed on platforms versus regulators. Although TikTok eventually labels or removes blatantly false content under its Community Guidelines, the delay between upload and action allows narratives to metastasize. By contrast, Germany’s Federal Network Agency (Bundesnetzagentur) lacks real-time tools to counter viral falsehoods at scale, relying instead on periodic public statements that struggle to match TikTok’s velocity. As one energy sector analyst noted:

“One can test fuel samples in under an hour using FTIR spectroscopy, but countering a viral TikTok myth takes days — and even then, only reaches a fraction of the original audience. The asymmetry is structural.”

— Dr. Lena Vogel, Senior Analyst, Frontier Economics Berlin

Technical Countermeasures: Beyond Fact-Checking to Algorithmic Accountability

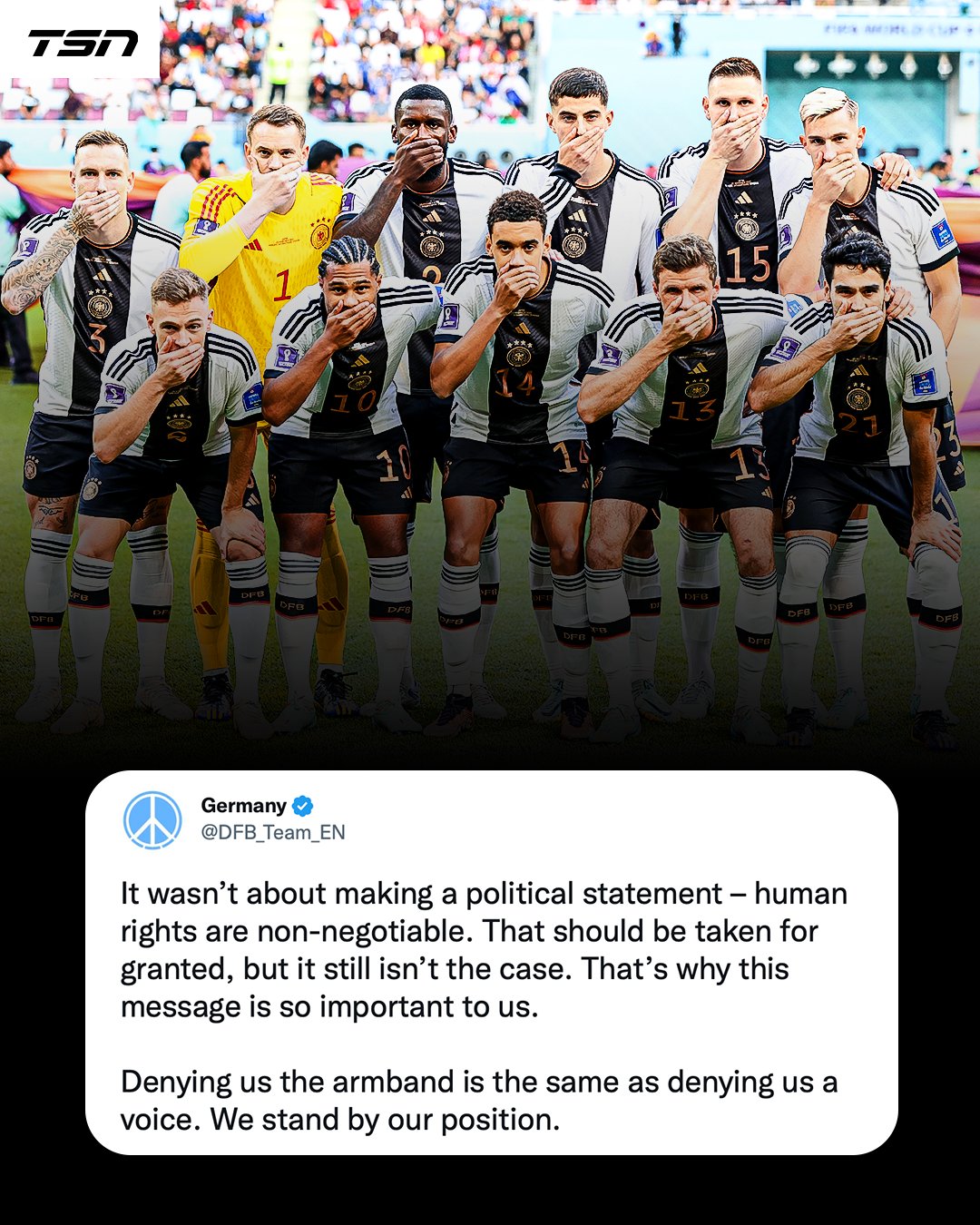

Addressing this requires more than debunking. Platforms must adjust recommendation weights for content involving public safety or essential services. The Community Notes model used by X, which appends contextual labels from diverse contributors, has shown promise in reducing belief in false claims by up to 35%, according to a 2024 NBER study. TikTok could adapt a similar system, leveraging its Creator Marketplace to incentivize subject-matter experts (e.g., chemists, automotive engineers) to provide on-video context.
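To make the idea of adjusting recommendation weights concrete, here is a minimal Python sketch. It is not TikTok's ranking formula, which is not public. The `Video` fields, the 0.7/0.3 weights, the topic labels, and the `sensitive_penalty` parameter are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical topic labels; a real platform would rely on its own classifier.
SENSITIVE_TOPICS = {"fuel_quality", "medicine", "power_grid"}


@dataclass
class Video:
    video_id: str
    watch_time_ratio: float  # average fraction of the clip watched, 0..1
    replay_rate: float       # replays per view
    topic: str
    has_expert_context: bool = False  # e.g. an expert note is attached


def engagement_score(v: Video) -> float:
    """Engagement-only score (illustrative weights, not a real formula)."""
    return 0.7 * v.watch_time_ratio + 0.3 * v.replay_rate


def adjusted_score(v: Video, sensitive_penalty: float = 0.5) -> float:
    """Down-weight sensitive-topic videos that carry no expert context."""
    score = engagement_score(v)
    if v.topic in SENSITIVE_TOPICS and not v.has_expert_context:
        score *= sensitive_penalty
    return score


def rank(videos):
    """Order videos by adjusted score, highest first."""
    return sorted(videos, key=adjusted_score, reverse=True)
```

Under these assumed weights, a highly engaging fuel scare clip with no expert context would fall below both an unrelated video and a fuel explainer that carries context, even though it leads on raw engagement.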

Meanwhile, regulatory bodies should explore pre-bunking strategies. The EU’s upcoming Guidelines on Trusted Flaggers under the DSA could empower accredited institutions like the PTB or ADAC to submit real-time corrections that receive algorithmic priority. Imagine a scenario where a video claiming “watered gas” triggers an automatic overlay from the BGR showing near-infrared spectrometry results from the station’s last audit — turning passive consumption into active verification.
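The overlay scenario can be sketched in a few lines of Python. This is a toy under stated assumptions, not a description of any existing DSA mechanism or platform API: the `Correction` record, the keyword matching, the registry contents, and the placeholder overlay text are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Correction:
    flagger: str               # accredited institution (hypothetical entry)
    claim_keywords: frozenset  # lowercase phrases that identify the claim
    overlay_text: str          # placeholder text shown on top of the video


# Hypothetical registry of corrections submitted by trusted flaggers.
CORRECTIONS = [
    Correction(
        flagger="ADAC",
        claim_keywords=frozenset({"watered gas", "diluted fuel"}),
        overlay_text="Placeholder: link to the flagger's published statement.",
    ),
]


def find_overlay(caption: str, corrections=CORRECTIONS) -> Optional[Correction]:
    """Return the first correction whose keywords appear in the caption."""
    text = caption.lower()
    for correction in corrections:
        if any(keyword in text for keyword in correction.claim_keywords):
            return correction
    return None
```

Plain keyword matching is used here only to keep the sketch short. A production system would need far more robust claim detection, and the audit data behind each overlay would have to come from the accredited institution itself.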

The 30-Second Verdict: Why This Matters for Tech and Society

This isn’t really about gasoline. It’s about how attention economies erode epistemic trust in systems we depend on but rarely see. When a 15-second video can undermine confidence in fuel quality standards enforced for decades, it signals a broader crisis: the decoupling of perception from technical reality in the age of algorithmic curation. For engineers, policymakers, and platform designers, the challenge is clear — build not just safer fuels, but more resilient information ecosystems. Until then, the next viral scare could target anything from medicine to microchips.