Major League Hacking’s acquisition of DEV in early 2026 signals a structural shift in how developer knowledge is curated, distributed, and monetized—merging MLH’s grassroots hackathon infrastructure with DEV’s publisher-first community model to create a vertically integrated ecosystem for software builders in the AI era. This move isn’t just about scale; it’s a direct response to the fragmentation of learning pathways as generative AI lowers the barrier to code creation while simultaneously increasing the cognitive load of discerning quality, security, and maintainability in AI-generated output. With over 1.2 million registered developers on DEV and MLH’s annual reach of 500,000+ students across 100+ countries, the combined platform now commands one of the largest concentrated pools of early-career and mid-tier engineering talent outside of traditional corporate training pipelines—making it a critical node in the open-source supply chain and a potential chokepoint for platform influence.

The Knowledge Stack: How MLH and DEV Complement Each Other’s Weaknesses

MLH has long excelled at activating latent interest through time-boxed, high-intensity events—think 48-hour hackathons where participants ship MVPs using unfamiliar stacks under pressure. But its model struggles with sustained engagement; post-event, momentum often dissipates as students return to coursework or internships without structured follow-up. DEV, by contrast, thrives on asynchronous, long-form discourse: deep dives into Rust’s ownership model, debugging Kubernetes operators, or ethical trade-offs in LLM prompt engineering. Its strength is depth and persistence, but it lacks the on-ramp mechanics to convert casual curiosity into committed participation. The acquisition creates a feedback loop: MLH events now funnel participants into DEV spaces tailored to the specific technologies showcased (e.g., a post-hackathon thread on optimizing WebGPU shaders after a graphics-focused MLH event), while DEV’s searchable archive becomes a reusable curriculum for MLH’s year-round training modules. Technically, this integration relies on a shared OAuth 2.0 identity layer and a unified GraphQL API that syncs event attendance, project submissions, and article engagement—allowing reputation points earned at an MLH hackathon to boost visibility of a user’s DEV posts, and vice versa.
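As a rough illustration of how reputation earned at an event could feed post visibility, here is a minimal Python sketch. It is not the platform's actual schema or ranking logic: the `UnifiedProfile` fields, the `record_event` helper, and the "10% per 100 points, capped at 2x" rule are all assumptions made up for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class UnifiedProfile:
    """Hypothetical merged profile keyed by a shared identity (e.g. an OAuth subject ID)."""
    user_id: str
    hackathon_points: int = 0       # assumed: points earned at MLH events
    article_reactions: int = 0      # assumed: engagement on DEV posts
    events_attended: list[str] = field(default_factory=list)


def record_event(profile: UnifiedProfile, event_name: str, points: int) -> None:
    """Attach an attended event and its points to the shared profile."""
    profile.events_attended.append(event_name)
    profile.hackathon_points += points


def post_visibility_boost(profile: UnifiedProfile, cap: float = 2.0) -> float:
    """Return a ranking multiplier for the user's posts.

    Assumed rule for illustration: each full 100 hackathon points adds 10%
    visibility, and the multiplier never exceeds `cap`.
    """
    boost = 1.0 + 0.1 * (profile.hackathon_points // 100)
    return min(boost, cap)


profile = UnifiedProfile(user_id="user-123")
record_event(profile, "Example Graphics Hackathon", points=250)
print(post_visibility_boost(profile))
```

The cap is a deliberate design choice in this sketch: without it, heavy event attendance alone could dominate discovery feeds regardless of how useful a user's writing is.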

AI Code Generation Has Made Communities More Critical, Not Less

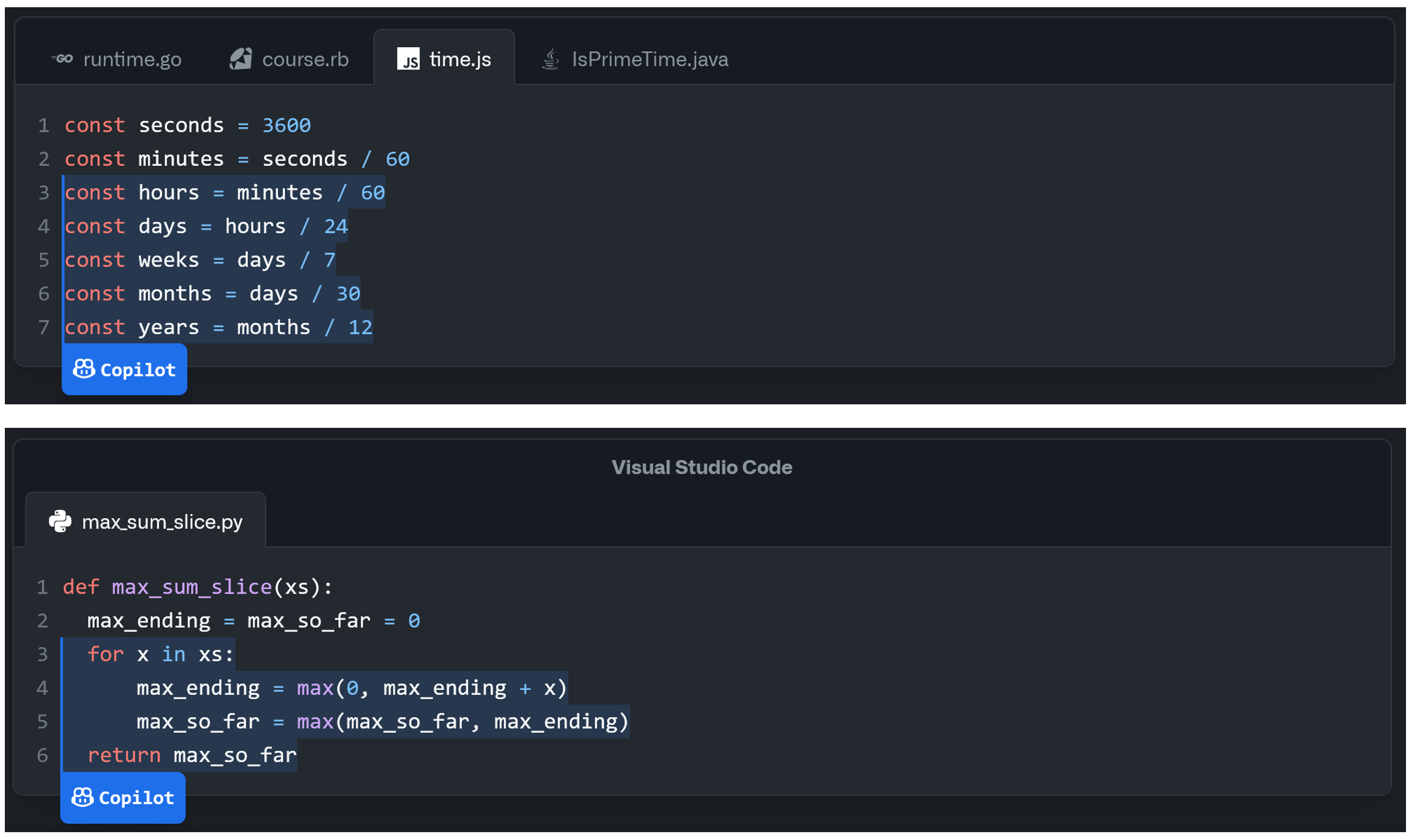

Contrary to the narrative that AI pair programmers like GitHub Copilot or Amazon CodeWhisperer will render developer forums obsolete, the opposite is happening: as AI generates more boilerplate, the value shifts to human judgment in context integration, security vetting, and architectural coherence, all areas where communities excel. A 2025 study by the ACM found that 68% of professional developers using AI assistants still turned to community platforms to validate AI-generated snippets for edge cases, dependency conflicts, or licensing issues, particularly when working with GPL-licensed code or copyleft-licensed ML models. “AI writes code fast, but it doesn’t understand why a solution is *appropriate*,” says Lutris CTO Mathieu Comandon, whose team maintains an open-source gaming platform. “We’ve seen a 40% increase in internal tickets traced to AI-suggested code that passed unit tests but violated runtime assumptions in our SDL2 abstraction layer. Our developers now routinely cross-check Copilot outputs against DEV threads tagged ‘rust-sdl2’ or ‘wasm-interop’ before merging.” This mirrors broader trends in enterprise AI adoption, where “hallucination debugging” has become a distinct skill set, one cultivated not in isolation but through peer review and shared incident reports.

Platform Lock-In Risks in a Federated Knowledge Layer

The MLH-DEV merger raises unavoidable questions about data sovereignty and vendor influence. Both platforms currently emphasize open protocols: DEV’s content is accessible via RSS and a public REST API, and MLH uses open-source tooling for event management. Even so, consolidating identity, reputation, and content under a single entity creates de facto lock-in risks. A developer’s MLH event history, DEV article engagement metrics, and project showcase data now reside in a unified profile that could, in theory, be used to algorithmically steer talent toward specific cloud providers, IDEs, or AI tooling partners. “We’re watching closely,” notes Open Source Security Foundation release manager Kim Zetter. “If reputation scores start privileging users who leverage AWS Amplify over those self-hosting on Vercel or Netlify, or if certain frameworks get boosted in discovery feeds due to sponsorships, we risk recreating the very gatekeeping these communities were meant to dismantle.” To mitigate this, the combined platform has committed to publishing quarterly transparency reports (modeled after the GitHub Transparency Report) that detail API usage, recommendation algorithm adjustments, and sponsorship disclosures. It is also exploring decentralized identity anchors via W3C DID standards to let users port their reputation across platforms.

The Artisan-Builder Dichotomy in the Age of AI-Assisted Creation

Ryan’s conversation with Mike Swift touched on a growing cultural split: the “artisan” developer who values craft, deep system understanding, and aesthetic code quality versus the “builder” who prioritizes speed, iteration, and leveraging AI to compress time-to-market. Neither is superior; the most effective teams now blend both. MLH-DEV’s new hybrid model supports this duality: hackathons favor the builder’s rapid prototyping ethos, while DEV’s long-form tutorials and peer-reviewed articles cater to the artisan’s need for mastery. Critically, the platform is experimenting with AI-assisted moderation tools that flag low-effort AI-generated posts (e.g., those with sharp drops in perplexity or repetitive phrasing) while surfacing human-curated deep dives. These tools use a fine-tuned Helsinki-NLP style classifier, trained on DEV’s five-year archive, to distinguish substantive contributions from AI spam. Early tests show a 30% reduction in low-value content in developer feeds without suppressing legitimate AI-assisted writing, a balance that could define the next generation of technical communities.
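To make the "repetitive phrasing" signal concrete, the sketch below computes the share of three-word sequences in a post that occur more than once. This is a simple stand-in heuristic, not the fine-tuned classifier described above; the function names and the 0.3 threshold are assumptions chosen only for illustration.

```python
from collections import Counter


def repeated_trigram_ratio(text: str) -> float:
    """Fraction of word trigrams in `text` that appear more than once."""
    words = text.lower().split()
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0  # too short to judge
    counts = Counter(trigrams)
    # Count every occurrence of a trigram that shows up at least twice.
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


def flag_for_review(text: str, threshold: float = 0.3) -> bool:
    """Flag a post for human review when repetition exceeds an assumed threshold."""
    return repeated_trigram_ratio(text) >= threshold


sample = "this tool is great this tool is great this tool is great"
print(repeated_trigram_ratio(sample), flag_for_review(sample))
```

A heuristic like this would only route posts to a moderator; on its own it cannot tell AI spam from a human author who happens to repeat a phrase.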

What This Means for the Future of Software Education

The implications extend beyond community health into formal education and workforce development. With university CS curricula often lagging behind industry tooling by 18–24 months, platforms like MLH-DEV are increasingly filling the gap—not as replacements, but as complementary lifelong learning engines. A pilot program with Carnegie Mellon University’s Heinz College now offers academic credit for verified contributions to DEV articles or MLH-organized open-source projects, assessed via a rubric that weighs technical accuracy, clarity, and community engagement. “We’re not grading lines of code,” says CMU adjunct professor Dr. Ananya Rao. “We’re assessing whether a student can explain a race condition in Go channels to a peer who’s never used goroutines—and whether they can iterate on that explanation based on feedback. That’s the real skill AI can’t replicate.” As AI continues to reshape the lower layers of the stack, the ability to learn, teach, and judge code within a trusted human network may become the last durable competitive advantage for developers—and the MLH-DEV alliance is betting it’s worth building at scale.