Claude is evolving from a conversational LLM into a centralized productivity OS, leveraging advanced tool-use capabilities to consolidate calendars, task managers, and note-taking apps. By integrating fragmented workflows into a single agentic interface, users are eliminating the “context-switching tax” and redefining personal knowledge management through a unified, AI-driven orchestration layer.

For years, the “productivity stack” has been a fragmented nightmare. We’ve been conditioned to accept a workflow that looks like a digital scavenger hunt: a Todoist list for tasks, a Notion database for notes, and a Google Calendar for time-blocking. We call this “organization,” but in reality, it’s just a series of tabs that eat our RAM and our focus. The cognitive load of moving between these disparate schemas—switching from a list view to a calendar grid to a markdown document—creates a friction point that kills deep work.

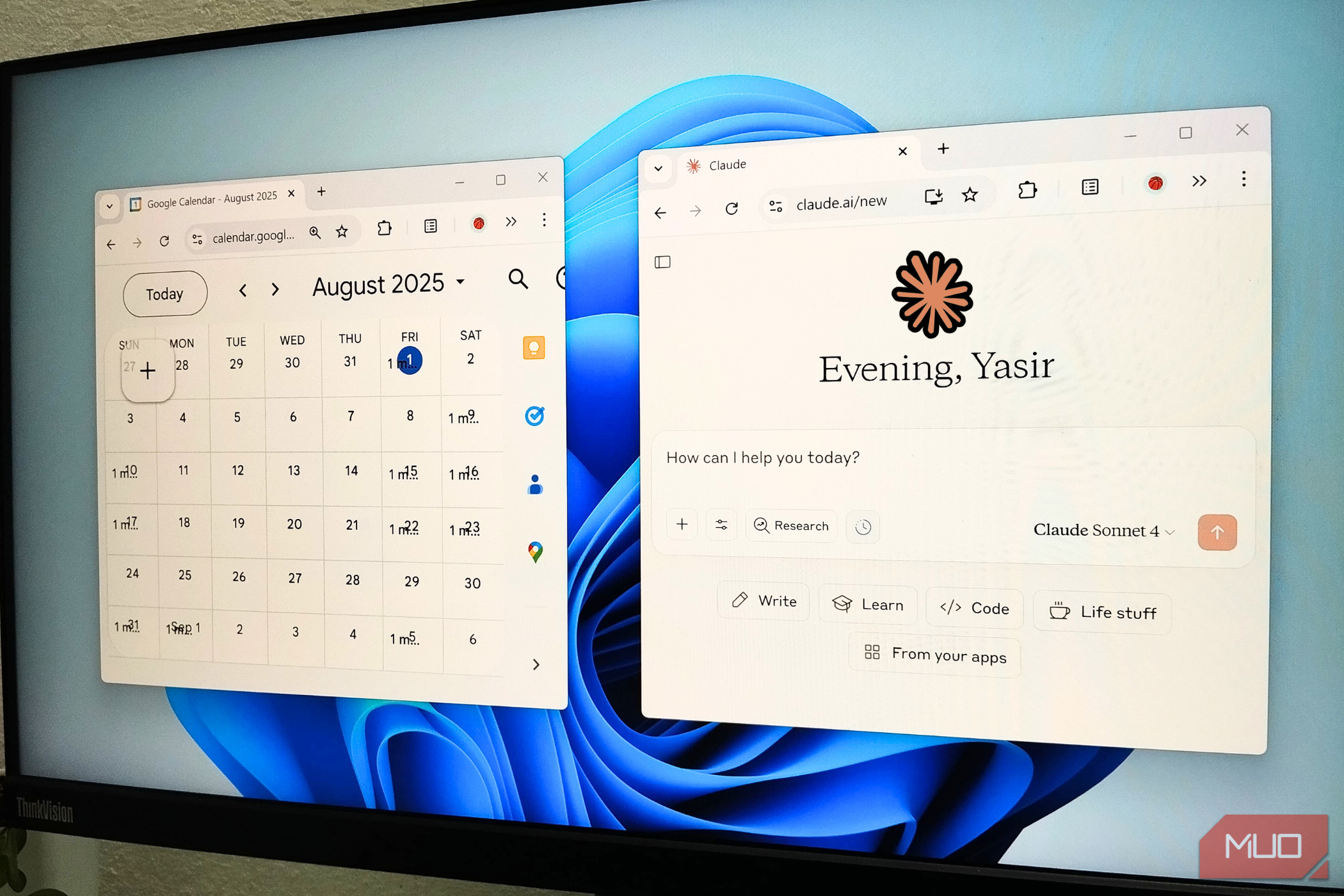

Last week, that friction vanished. I stopped “using apps” and started interacting with a system.

The shift isn’t about Claude suddenly having a better UI for a calendar than Google does. It’s about the death of the interface as the primary point of interaction. By utilizing the latest beta updates rolling out this week, Claude has transitioned from a chatbot you visit to an agent that orchestrates your digital existence. Instead of opening a calendar app to see whether I’m free on Tuesday, I simply ask Claude to “optimize my Tuesday for deep work,” and it handles the API calls to my scheduling software in the background.

The Orchestration Layer: Moving Beyond Simple RAG

To understand why this works, we have to look at the architectural shift from basic Retrieval-Augmented Generation (RAG) to full-scale agentic tool use. Early AI productivity was just “chatting with your docs.” You uploaded a PDF, and the LLM queried it. That’s passive. What we’re seeing now is the implementation of sophisticated function calling and loop-based reasoning.

When I tell Claude to “move my 2 PM to tomorrow and notify the team,” the model isn’t just predicting the next token in a sentence. It is identifying the necessary tool (the Calendar API), formulating a JSON payload with the correct parameters, executing the call, and then verifying the result. This is a closed-loop system. The LLM is no longer the destination; it is the orchestration layer.
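To make that loop concrete, here is a minimal sketch of the identify, formulate, execute, verify cycle. Everything in it is an assumption for illustration: `FakeCalendar`, `move_event`, `get_event`, and the payload shape are hypothetical stand-ins, not Claude’s actual tool schema or any real calendar API.

```python
import json
from dataclasses import dataclass, field


@dataclass
class FakeCalendar:
    """Hypothetical in-memory stand-in for a calendar API."""
    events: dict = field(default_factory=dict)  # event_id -> ISO start time

    def move_event(self, event_id: str, new_start: str) -> dict:
        if event_id not in self.events:
            return {"ok": False, "error": "unknown event"}
        self.events[event_id] = new_start
        return {"ok": True, "event_id": event_id, "start": new_start}

    def get_event(self, event_id: str) -> dict:
        return {"event_id": event_id, "start": self.events.get(event_id)}


def run_tool_call(calendar: FakeCalendar, payload_json: str) -> dict:
    """Execute one model-issued tool call, then verify the result."""
    call = json.loads(payload_json)

    # 1. Identify the tool the payload names.
    tools = {"move_event": calendar.move_event}
    tool = tools.get(call["name"])
    if tool is None:
        return {"ok": False, "error": f"unknown tool {call['name']}"}

    # 2. Execute it with the JSON arguments.
    result = tool(**call["arguments"])
    if not result["ok"]:
        return result

    # 3. Close the loop: read the event back and compare.
    observed = calendar.get_event(call["arguments"]["event_id"])
    result["verified"] = observed["start"] == call["arguments"]["new_start"]
    return result


calendar = FakeCalendar(events={"standup": "2025-01-07T14:00"})
payload = json.dumps({
    "name": "move_event",
    "arguments": {"event_id": "standup", "new_start": "2025-01-08T14:00"},
})
outcome = run_tool_call(calendar, payload)
print(outcome)
```

In a real agent the payload would come from the model rather than a hard-coded `json.dumps`, and the result would be fed back to the model so it can decide on the next step, such as notifying the team.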

This is powered by massive increases in context window efficiency and the refinement of “computer use” capabilities. By maintaining a persistent state of my current projects and preferences within its long-term memory (likely utilizing a combination of vector databases and cached prompt prefixes), Claude doesn’t require me to re-explain the context of a project every time I open a new chat. It knows the project, the stakeholders, and the deadlines.

It’s a seamless transition from a tool to a teammate.

The Productivity Stack: Traditional vs. Agentic

| Metric | Traditional App Stack | AI-Agentic Stack (Claude) |
|---|---|---|
| Cognitive Load | High (Manual context switching) | Low (Single natural language interface) |
| Data Silos | Fragmented (Notes ≠ Calendar) | Unified (Cross-functional synthesis) |
| Execution | Manual entry and updating | Automated via API orchestration |
| Latency | Human-speed (Click → Load → Find) | LLM-speed (Query → Execute) |

The Ecosystem War: Why Platform Lock-In is Dying

This shift represents a fundamental threat to the “walled garden” strategy employed by Big Tech. For a decade, Microsoft and Google have relied on ecosystem lock-in: the idea that you stay with Outlook because it’s tied to your Word docs and Teams chats. But when an LLM can interface with any service via a standardized API, the specific UI of the underlying app becomes irrelevant. The app becomes a “headless” database.

If Claude can read my emails, update my Trello boards, and schedule my Zoom calls, I no longer need to spend time in the Trello or Zoom interfaces. The value shifts from the software provider to the intelligence layer that manages the software. We are moving toward a “headless” productivity era where the primary interface is a single, intelligent dialogue box.

“The transition from app-centric to agent-centric computing is the most significant paradigm shift since the move from command-line interfaces to GUIs. We are essentially abstracting away the software itself.”

This abstraction is where the real power lies. By removing the need to navigate menus, we reduce the “time to action.” The latency is no longer about network speed, but about the inference speed of the model. With the scaling of transformer architectures and the integration of specialized NPUs (Neural Processing Units) in modern silicon, that inference latency is hitting the threshold of real-time human conversation.

The Security Paradox of Total Integration

Of course, handing the keys to your entire digital life to a single AI agent isn’t without risk. We are effectively creating a single point of failure. If an attacker gains access to your Claude session, they don’t just have your chat history; they have a functional remote control for your calendar, your notes, and your communications.

To mitigate this, the industry is pushing toward more robust end-to-end encryption and granular permission scopes. We need “Least Privilege” access for AI agents. Claude shouldn’t have a blanket “write” permission for my entire Google Drive; it should have scoped access to specific folders, mediated by a secure OAuth handshake that the user can revoke instantly.
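As a rough illustration of what “Least Privilege” could look like in practice, the sketch below checks every agent action against an explicit list of (action, folder) scopes and supports instant revocation. The `AgentGrant` class and the paths are hypothetical; a real deployment would lean on the provider’s own OAuth scopes and token revocation rather than a homegrown check like this.

```python
from dataclasses import dataclass, field


@dataclass
class AgentGrant:
    """Hypothetical permission grant: a set of (action, folder) scopes."""
    scopes: set = field(default_factory=set)
    revoked: bool = False

    def allows(self, action: str, path: str) -> bool:
        """Return True only if an unrevoked scope covers this action and path."""
        if self.revoked:
            return False
        for allowed_action, root in self.scopes:
            prefix = root.rstrip("/") + "/"
            # Match the folder itself or anything strictly inside it,
            # so "/q3" does not accidentally cover "/q3-secret".
            if action == allowed_action and (path == root or path.startswith(prefix)):
                return True
        return False

    def revoke(self) -> None:
        """Cut off all access immediately."""
        self.revoked = True


grant = AgentGrant(scopes={("write", "/drive/projects/q3")})

print(grant.allows("write", "/drive/projects/q3/plan.md"))
print(grant.allows("write", "/drive/taxes/2024.pdf"))

grant.revoke()
print(grant.allows("write", "/drive/projects/q3/plan.md"))
```

The design choice worth noting is deny-by-default: anything not explicitly listed is refused, which is the opposite of a blanket “write” permission.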

Beyond access control, the privacy implications of training data remain a sticking point. For a productivity agent to be truly effective, it needs to “know” you. This requires a level of data ingestion that would make a privacy advocate shudder. The solution likely lies in local-first AI or “Edge LLMs” where the most sensitive personal data is processed on-device using open-source frameworks, with only anonymized high-level queries sent to the cloud.

The 30-Second Verdict

- The Win: Massive reduction in cognitive load and elimination of app-switching.

- The Tech: Driven by agentic tool-use, function calling, and expanded context windows.

- The Risk: Centralized vulnerability and privacy concerns regarding deep data ingestion.

- The Future: The “Headless App” era where the LLM is the only UI you need.

I spent the last three years meticulously organizing my Notion workspace, color-coding my calendar, and tagging my tasks. It was a full-time job just to manage the tools I was using to do my actual job. In one week, Claude made that entire ritual obsolete. I didn’t “organize” my life; I just started talking to it, and the system organized itself.

The era of the productivity app is over. The era of the productivity agent has begun.