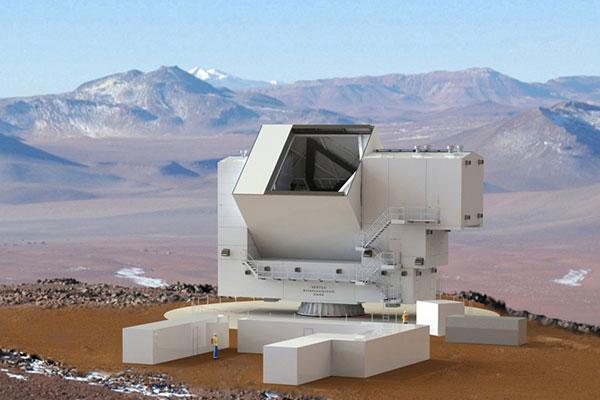

The Fred Young Submillimeter Telescope (FYST), the world’s highest submillimeter telescope, has officially commenced operations in the Atacama Desert, Chile. Developed through a global consortium including the Universidad Austral de Chile (UACh) and the Universidad Católica de la Santísima Concepción (UCSC), it leverages high-altitude atmospheric windows to observe the early universe’s cold gas and dust.

Let’s be clear: this isn’t just another piece of glass pointed at the sky. We are talking about a precision instrument situated at 5,640 meters above sea level. At this altitude, the atmosphere is thin and dry enough to let submillimeter waves (the awkward middle child between infrared and radio waves) pass through with far less absorption by water vapor. For the engineers, this is a brutal environment. For the data, it’s a goldmine.

The technical achievement here isn’t just the location; it’s the instrumentation. The FYST utilizes a 6-meter primary mirror and a sophisticated receiver system designed to detect the faintest signals from the “Cosmic Dawn.” This is the era when the first stars ignited, and one of the few ways to study it is by detecting the redshifted light that has stretched into the submillimeter spectrum over billions of years.
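To put a number on that stretching, here is a minimal sketch of the redshift arithmetic. It assumes the ionized-carbon [CII] line, with a rest wavelength of about 157.74 micrometers, as an illustrative tracer and a redshift of 6 as a stand-in for the Cosmic Dawn era. Neither value is quoted by the FYST team in this article, and the function name is hypothetical.

```python
# Rest wavelength of the [CII] fine-structure line, in micrometers.
# Picked purely as an illustration; not a statement about FYST's target list.
CII_REST_UM = 157.74


def observed_wavelength_um(rest_um: float, z: float) -> float:
    """Wavelength observed today for light emitted at rest_um from redshift z."""
    if z < 0:
        raise ValueError("this sketch only handles non-negative redshift")
    # Cosmological redshift stretches every wavelength by the factor (1 + z).
    return rest_um * (1.0 + z)


if __name__ == "__main__":
    lam_um = observed_wavelength_um(CII_REST_UM, 6.0)
    print(f"{lam_um / 1000:.2f} mm")
```

Under those assumptions, far-infrared light emitted at roughly 0.16 mm arrives at a little over 1 mm, which is why an instrument tuned to the submillimeter and millimeter bands is the right tool for this era.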

The Silicon Behind the Stars: Monitoring and Data Pipelines

While the headlines focus on the “highest telescope,” the real story for those of us in the valley is the telemetry and monitoring stack. The Universidad Católica de la Santísima Concepción (UCSC) didn’t just provide labor; they engineered the monitoring technology essential for the telescope’s stability. In an environment where temperature swings are violent and oxygen is scarce, the hardware must be self-healing and autonomously monitored.

The data pipeline for a submillimeter telescope is a nightmare of throughput and latency. We aren’t dealing with simple JPEGs of galaxies. We are talking about massive streams of raw spectral data that require intense preprocessing. To manage this, the system relies on high-performance computing (HPC) clusters and specialized FPGA (Field Programmable Gate Array) architectures to handle the real-time Fast Fourier Transforms (FFTs) required to convert raw signals into usable spectra.
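For readers who have not touched an FFT since school, the conversion from raw samples to a spectrum can be sketched in a few lines of pure Python. This is a textbook radix-2 Cooley-Tukey transform, not the FYST firmware: real spectrometers run far more elaborate versions in FPGA logic, and the function names and test tone here are assumptions made for illustration.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; the length must be a power of two."""
    n = len(samples)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a non-zero power of two")
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])  # transform of even-indexed samples
    odd = fft(samples[1::2])   # transform of odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def power_spectrum(samples):
    """Power per bin for the non-negative frequencies of a real-valued signal."""
    bins = fft(samples)
    return [abs(b) ** 2 for b in bins[: len(samples) // 2 + 1]]


def make_tone(n, bin_index, amplitude=1.0):
    """Synthetic cosine that completes bin_index cycles over n samples."""
    return [amplitude * math.cos(2 * math.pi * bin_index * i / n) for i in range(n)]


if __name__ == "__main__":
    spectrum = power_spectrum(make_tone(64, 5))
    peak = max(range(len(spectrum)), key=spectrum.__getitem__)
    print("strongest bin:", peak)
```

The synthetic tone shows up as a single dominant bin in the output. A spectral line buried in receiver noise behaves the same way in principle, only with far more channels and far less signal.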

If you want to understand the scale of this, look at the IEEE Xplore archives on radio astronomy; the signal-to-noise ratio (SNR) challenges at these frequencies are astronomical. The monitoring systems must track everything from the cryogenic cooling of the receivers (which operate at near absolute zero) to the precise torque of the servo motors moving the mirror.
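What “tracking everything” means in practice is, at its simplest, a limit check on every telemetry sample. The sketch below is hypothetical: the channel names, units, and thresholds are invented for illustration and are not taken from the UCSC monitoring system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical channels and limits, for illustration only.
LIMITS = {
    "receiver_stage_kelvin": Limit(0.0, 4.5),
    "azimuth_servo_torque_nm": Limit(-500.0, 500.0),
}


def check_sample(sample):
    """Return one alarm string per missing or out-of-range channel."""
    alarms = []
    for channel, limit in LIMITS.items():
        value = sample.get(channel)
        if value is None:
            # A silent sensor is treated as a fault, not as "all clear".
            alarms.append(f"{channel}: no reading")
        elif not (limit.low <= value <= limit.high):
            alarms.append(f"{channel}: {value} outside [{limit.low}, {limit.high}]")
    return alarms


if __name__ == "__main__":
    for alarm in check_sample({"receiver_stage_kelvin": 6.2}):
        print(alarm)
```

Treating a missing reading as an alarm is a deliberate choice in this sketch: at a site where nobody can walk over and look at the gauge, silence is as suspicious as a bad number.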

The 30-Second Verdict: Why This Changes the Game

- Atmospheric Transparency: By sitting above 90% of the Earth’s water vapor, FYST accesses “windows” of the spectrum previously only available to satellites.

- C-S-S (Cold-Sustained-Science): The ability to map cold dust in the early universe provides a direct blueprint of how galaxies formed.

- Regional Tech Leap: The involvement of UACh and UCSC signals a shift from Chile being a “host” for foreign telescopes to being a co-developer of the core tech.

Bridging the Gap: From Atacama to the Global Tech Ecosystem

The deployment of FYST isn’t happening in a vacuum. It exists within a broader geopolitical and technical race for “Big Data” in astronomy. We are seeing a convergence between traditional astrophysics and the tools of modern data science. The algorithms used to filter noise from the FYST’s submillimeter receivers are cousins to the noise-reduction techniques used in open-source signal processing libraries on GitHub.

The “Strategic Patience” mentioned in recent elite hacking circles regarding AI is mirrored here in the scientific community. There is a realization that while AI can simulate the universe, the ground truth (the actual raw data from a telescope like FYST) is the only way to validate those models. Without the hard data from the Atacama, our LLM-driven cosmological models are just fancy hallucinations.

“The integration of real-time telemetry and autonomous monitoring in extreme environments is the true frontier. We are moving away from ‘observe and record’ toward ‘analyze and adapt’ in situ.”

This shift toward “edge computing” above 5,600 meters is a microcosm of the broader industry trend. Whether it’s a telescope in Chile or an NPU (Neural Processing Unit) in a smartphone, the goal is the same: move the computation as close to the data source as possible to avoid the latency of the backhaul.
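One simple form of computing at the edge is integrating spectra on site before anything goes down the mountain. The sketch below averages consecutive spectra channel by channel, which cuts the data volume by the block size. It is an illustration of the idea only; the function name and block size are assumptions, and nothing here describes the actual FYST reduction chain.

```python
def integrate_spectra(spectra, block_size):
    """Average consecutive groups of block_size spectra, channel by channel.

    A trailing group shorter than block_size is dropped in this sketch.
    """
    if block_size <= 0:
        raise ValueError("block_size must be positive")
    reduced = []
    for start in range(0, len(spectra) - block_size + 1, block_size):
        block = spectra[start:start + block_size]
        # zip(*block) walks the block one frequency channel at a time.
        reduced.append([sum(channel) / block_size for channel in zip(*block)])
    return reduced


if __name__ == "__main__":
    raw = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
    print(integrate_spectra(raw, 2))
```

Five raw spectra become two averaged ones here, so less than half the bytes need to travel over the backhaul. Averaging also beats down uncorrelated noise, which is the other reason integration usually happens as early in the chain as possible.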

The Hardware Stack: A Comparison of Submillimeter Capabilities

To understand where FYST sits in the hierarchy, we have to look at the specs. It isn’t trying to out-muscle ALMA (the Atacama Large Millimeter/submillimeter Array), but it is filling a critical niche in frequency coverage and accessibility.

| Feature | FYST (Current) | Traditional Sub-mm Arrays | Space-Based Observatories |
|---|---|---|---|
| Altitude/Location | 5,640 m (Atacama) | Variable (High) | L2 Lagrange Point / Orbit |
| Atmospheric Interference | Minimal (Ultra-Low Water Vapor) | Moderate to High | Zero |
| Maintenance Cycle | Ground-accessible (Difficult) | Ground-accessible | Impossible/Robotic |
| Data Throughput | High-speed Fiber Backhaul | Standard Fiber | Limited by Deep Space Network |

The trade-off here is clear. You get nearly the atmospheric clarity of space with the ability to actually upgrade the hardware. If a receiver fails at the L2 point, you’re out of luck. If a component fails at FYST, you send a team of engineers up the mountain. It’s the “repairability” argument applied to the scale of the cosmos.

The Macro-Market Dynamics of Scientific Sovereignty

There is a subtle but powerful shift occurring in the “Chip Wars” and the “Data Wars” that extends even to astronomy. For decades, the Global North provided the hardware and the Global South provided the land. The presence of UACh and UCSC in the core development of FYST represents a move toward technological sovereignty.

By mastering the monitoring systems and the data pipelines, Chilean institutions are no longer just providing the “real estate” for science; they are owning the IP of the infrastructure. This is a pattern we see in the semiconductor industry—countries moving from assembly to design. The same logic applies here. When you own the monitoring stack, you own the operational intelligence of the instrument.

For those following the Ars Technica style of deep-dive analysis, the takeaway is that the “edge” is moving. The edge is no longer just the perimeter of a corporate network; it is the summit of a volcano in the Atacama. The ability to deploy stable, high-throughput computing in these “dead zones” is a prerequisite for the next century of discovery.

The FYST is now operational. The data is flowing. And for the first time, the window to the early universe is open wider than ever, powered by a blend of extreme altitude and precision engineering.