Disney’s deployment of an AI-driven Han Solo at Galaxy’s Edge attempts to merge LLM-driven dialogue with high-fidelity digital twins, but fails to bridge the “uncanny valley.” The project highlights the current ceiling of affective computing in replicating human charisma and the technical limitations of real-time emotional latency in public installations.

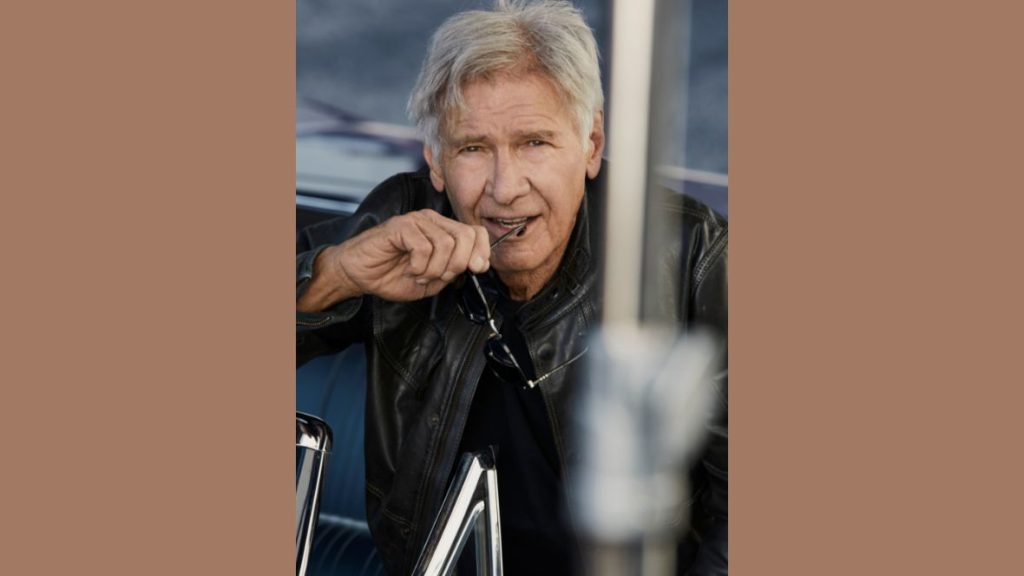

Let’s be clear: the “Han Solo” experience isn’t a failure of acting; it’s a failure of architecture. When we talk about replacing a legend like Harrison Ford, we aren’t talking about skin textures or the ability to recite lines from A New Hope. We are talking about the micro-expressions, the rhythmic pauses, and the spontaneous subtext that define human charisma. In the tech world, we call this “affective computing,” and as of this week’s beta rollout in the parks, Disney has hit a wall.

The problem is that charisma is an emergent property of biological intelligence, not a set of weights in a neural network. You can scale an LLM to a trillion parameters, but if the inference latency is 800 milliseconds, the “soul” of the conversation vanishes. The result is a digital puppet that is technically impressive but emotionally sterile.

The Latency of Charisma: Why LLMs Can’t Mimic the “Ford Smirk”

To build a digital Han Solo, Disney likely leveraged a combination of NVIDIA ACE (Avatar Cloud Engine) for the animation pipeline and a proprietary, fine-tuned LLM trained on the Star Wars corpus. On paper, this is a powerhouse stack. In practice, it suffers from the “conversational gap.”

Human conversation operates on a tight loop of feedback. We process non-verbal cues in milliseconds. Current AI agents, even those running on high-end NPUs (Neural Processing Units) at the edge, struggle with the transition from listening to processing to generating. When the AI Han Solo pauses, it isn’t a “calculated, brooding silence” characteristic of Ford; it’s a token-generation lag.

This is a fundamental issue of LLM parameter scaling. To get the nuance of Ford’s sarcasm, you need a model with deep contextual understanding. However, larger models require more compute and introduce higher latency. If you shrink the model to achieve real-time responsiveness, you lose the cognitive complexity required to be “charming.” You end up with a chatbot in a remarkably expensive skin.
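The size-versus-speed trade-off can be made concrete with a back-of-envelope estimate. The sketch below assumes single-stream decoding is memory-bandwidth bound, meaning every weight is read once per generated token. The model sizes, byte widths, and the 2,000 GB/s bandwidth figure are illustrative assumptions, not measurements of Disney’s system, and the estimate ignores batching, multi-GPU sharding, and compute time.

```python
def ms_per_token(params_billion: float, bytes_per_param: float,
                 bandwidth_gb_s: float) -> float:
    """Rough decode time per token if all weights are read once per token."""
    weight_gb = params_billion * bytes_per_param  # billions of params * bytes = GB
    return weight_gb / bandwidth_gb_s * 1000.0


def time_to_n_tokens_ms(n_tokens: int, params_billion: float,
                        bytes_per_param: float, bandwidth_gb_s: float) -> float:
    """Time to produce the first n tokens of a reply (prompt processing ignored)."""
    return n_tokens * ms_per_token(params_billion, bytes_per_param, bandwidth_gb_s)


# Hypothetical configurations: a large fp16 model vs. a small int8 model.
ASSUMED_BANDWIDTH_GB_S = 2000.0
large = time_to_n_tokens_ms(10, params_billion=70, bytes_per_param=2,
                            bandwidth_gb_s=ASSUMED_BANDWIDTH_GB_S)
small = time_to_n_tokens_ms(10, params_billion=8, bytes_per_param=1,
                            bandwidth_gb_s=ASSUMED_BANDWIDTH_GB_S)

print(f"large model, first 10 tokens: {large:.0f} ms")
print(f"small model, first 10 tokens: {small:.0f} ms")
```

Under these assumptions the large model needs roughly 70 ms per token against about 4 ms for the small one, which is the whole dilemma in two numbers: the model with the nuance is the one that misses the conversational beat.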

“The industry is currently obsessed with visual fidelity—the ‘pixels’—while ignoring the temporal fidelity of human interaction. We can render a pore on a character’s nose in 8K, but we can’t yet simulate the precise timing of a sarcastic eye-roll in real-time. That’s where the illusion breaks.”

The 30-Second Technical Verdict

- The Tech: Neural Radiance Fields (NeRFs) for visuals + LLM for dialogue.

- The Fail: Inference latency kills the organic flow of conversation.

- The Gap: Lack of “affective synchronization” between speech and micro-expression.

- The Result: A high-fidelity “uncanny valley” experience.

The Neural Radiance Field Problem: High Fidelity, Zero Soul

Visually, the Galaxy’s Edge installation is a triumph of Neural Radiance Fields (NeRFs) and Gaussian Splatting. For those not in the loop, NeRFs allow developers to create 3D scenes from 2D images, resulting in lighting and reflections that look photorealistic. The “Solo” you see isn’t a traditional CGI mesh; it’s a volumetric representation of a human being.

But here is the rub: high visual fidelity actually increases the psychological discomfort of the uncanny valley. When a character looks 99% human, our brains stop looking at the 99% that is correct and obsess over the 1% that is wrong. In this case, the disconnect between the hyper-realistic skin and the slightly robotic ocular movement creates a cognitive dissonance that triggers a “creepiness” response in guests.

This is a classic case of hardware outstripping software. We have the GPU power to render the image, but we don’t have the behavioral algorithms to drive it. The movement is driven by a blend-shape system that lacks the organic unpredictability of a living actor. Harrison Ford doesn’t just move his mouth to speak; his entire skeletal structure reacts to the emotion of the scene.

| Metric | Human (Harrison Ford) | AI Digital Twin (2026) | Impact on User Experience |
|---|---|---|---|
| Response Latency | ~200ms (Organic) | 600ms – 1.2s (Inference) | Perceived as “robotic” or “laggy” |
| Micro-expression | Spontaneous/Subconscious | Pre-baked/Triggered | Lack of emotional authenticity |
| Contextual Pivot | Instantaneous | Dependent on prompt window | Conversations feel linear/scripted |
| Visual Fidelity | Biological Reality | NeRF/Gaussian Splatting | High fidelity, but “uncanny” |

Edge Computing vs. The Uncanny Valley

To mitigate the lag, Disney is reportedly pushing more compute to the edge. By utilizing localized server clusters within the park—essentially creating a private, high-speed cloud—they can bypass the round-trip latency of a centralized data center. They are likely employing edge computing architectures to handle the TTS (Text-to-Speech) and animation synthesis locally.

However, edge computing is a band-aid, not a cure. The bottleneck isn’t just the distance the data travels; it’s the time it takes for the LLM to “think.” Even with quantized models running on H100-equivalent hardware at the park, the “thinking” time is an inherent part of the transformer architecture. Until we move toward something more akin to neuromorphic computing—where processing and memory are co-located like in a human brain—we will always have this gap.
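A simple latency budget shows why moving compute closer only helps so much. The sketch below is a toy model: the stage names and every millisecond value are assumptions chosen for illustration, not figures from the installation. The only thing that changes between the two scenarios is the network round trip.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TurnBudget:
    """Per-turn latency budget in milliseconds (all values are assumed)."""
    network_rtt_ms: float        # round trip to wherever inference runs
    speech_recognition_ms: float
    llm_first_token_ms: float    # the "thinking" time before any output
    tts_ms: float                # text-to-speech synthesis
    animation_ms: float          # facial animation synthesis

    def total_ms(self) -> float:
        return (self.network_rtt_ms + self.speech_recognition_ms
                + self.llm_first_token_ms + self.tts_ms + self.animation_ms)


HUMAN_TURN_MS = 200.0  # the ~200 ms organic response time cited in the table

# Hypothetical centralized deployment.
cloud = TurnBudget(network_rtt_ms=80.0, speech_recognition_ms=150.0,
                   llm_first_token_ms=400.0, tts_ms=120.0, animation_ms=50.0)

# Same pipeline in an in-park cluster: only the round trip shrinks.
edge = replace(cloud, network_rtt_ms=5.0)

for name, budget in (("cloud", cloud), ("edge", edge)):
    over = budget.total_ms() - HUMAN_TURN_MS
    print(f"{name}: {budget.total_ms():.0f} ms total, {over:.0f} ms over the human beat")
```

With these numbers the edge deployment saves 75 ms and still lands several hundred milliseconds past a natural response, because the inference stages are untouched by where the servers sit.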

Then there is the issue of “state management.” For a digital twin to feel real, it needs to remember who you are across multiple interactions. While RAG (Retrieval-Augmented Generation) can pull user data from a Disney MagicBand profile, the integration is clunky. The AI might remember your name, but it doesn’t remember the *vibe* of your previous interaction.
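One way to picture the gap is a memory store that keeps tone notes next to factual profile data and feeds both into the prompt. This is a minimal sketch with entirely hypothetical names (`GuestMemory`, `MemoryStore`, `build_context`); it is not Disney’s integration, and a plain keyed lookup stands in for the vector search a real RAG pipeline would use.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class GuestMemory:
    name: str
    facts: List[str] = field(default_factory=list)       # what the profile knows
    tone_notes: List[str] = field(default_factory=list)  # the "vibe" of past chats


class MemoryStore:
    """Hypothetical per-guest memory, keyed by an opaque guest id."""

    def __init__(self) -> None:
        self._by_guest: Dict[str, GuestMemory] = {}

    def remember(self, guest_id: str, name: str,
                 fact: Optional[str] = None, tone: Optional[str] = None) -> None:
        memory = self._by_guest.setdefault(guest_id, GuestMemory(name=name))
        if fact:
            memory.facts.append(fact)
        if tone:
            memory.tone_notes.append(tone)

    def build_context(self, guest_id: str, max_notes: int = 2) -> str:
        """Return text to prepend to the LLM prompt, or '' for unknown guests."""
        memory = self._by_guest.get(guest_id)
        if memory is None:
            return ""
        lines = [f"Guest name: {memory.name}"]
        if memory.facts:
            lines.append("Known facts: " + "; ".join(memory.facts[-max_notes:]))
        if memory.tone_notes:
            lines.append("Tone of recent interactions: "
                         + "; ".join(memory.tone_notes[-max_notes:]))
        return "\n".join(lines)


store = MemoryStore()
store.remember("guest-001", "Ava", fact="asked about the Falcon",
               tone="playful, traded insults")
print(store.build_context("guest-001"))
```

Retrieving the name and facts is the easy part. The tone notes are where the difficulty lives, since something has to summarize the feel of an exchange before it can be stored at all.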

The Digital Twin Precedent: Beyond the Theme Park

While the Han Solo experiment might feel like a gimmick, it is a canary in the coal mine for the broader “Digital Twin” economy. We are seeing the same struggle in enterprise AI, where companies are trying to create “AI CEOs” or “Virtual Experts.”

The lesson here is that presence is not the same as personality. You can simulate the presence of a person using a high-end SoC and a massive dataset, but personality is found in the deviations from the norm. An LLM is, at its core, a predictor of the most probable next tokens. Charisma, however, is often the least likely but most effective response.
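The difference between “most likely” and merely random is easy to see in a toy decoder. The sketch below treats two whole replies as single tokens for readability; the replies and their scores are made up for illustration. Greedy decoding always takes the top-scoring option, while temperature sampling occasionally picks the other one, without any notion of whether it is the better choice.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(logits):
    """Always pick the highest-scoring option."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample(logits, temperature, rng):
    """Pick an option at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical candidate replies and made-up model scores.
replies = ["I love you too.", "I know."]
logits = [2.0, 0.5]

rng = random.Random(0)  # seeded so the run is repeatable
draws = [sample(logits, temperature=1.5, rng=rng) for _ in range(1000)]

print("greedy pick:", replies[greedy(logits)])
print("share of samples choosing the unlikely reply:", draws.count(1) / len(draws))
```

Raising the temperature makes the unlikely reply show up more often, but only as noise: the sampler has no idea which unlikely reply would land. That is the distinction between stochastic and genuinely surprising.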

If you want to see where this is actually working, look at open-source communities experimenting with small language models (SLMs) optimized for specific emotional niches. By narrowing the scope of the AI, developers can reduce latency and increase the “hit rate” of authentic-feeling interactions.

Ultimately, the Galaxy’s Edge Han Solo proves that while we can digitize the image and the voice, we cannot yet digitize the “it” factor. Harrison Ford isn’t just a set of data points; he’s a series of unpredictable, human choices. Until AI can be truly unpredictable, and not just stochastic, it will remain a tribute, not a replacement.