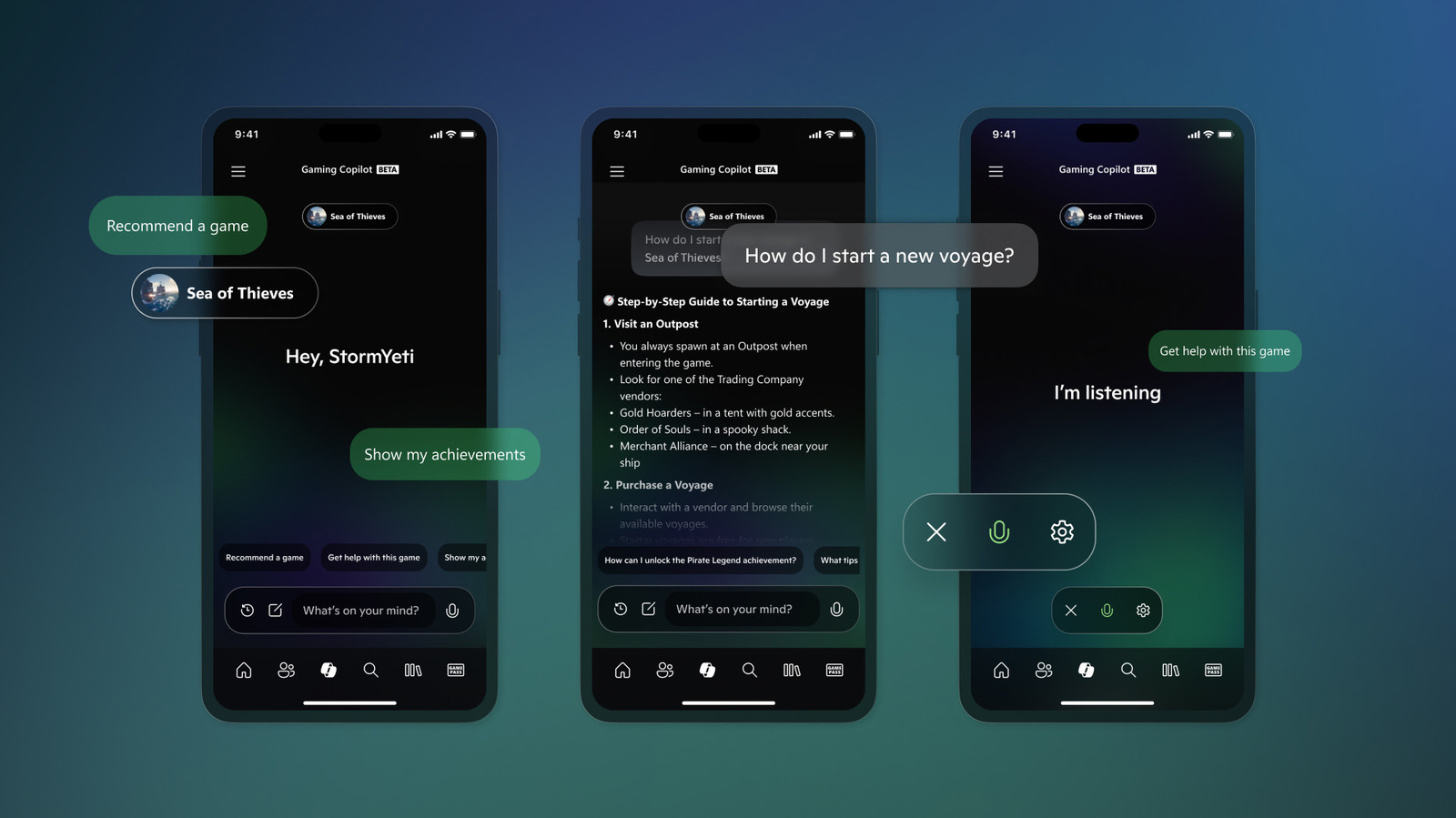

Microsoft is scrubbing Copilot AI from Xbox consoles and its mobile application this week. The move signals a strategic retreat from general-purpose LLM integration in gaming, prioritizing system performance and specialized game-engine AI over a cloud-dependent chatbot that failed to provide tangible utility for the core gaming demographic.

Let’s be clear: this isn’t a failure of the AI itself, but a failure of the implementation. Copilot was an appendage—a bolted-on productivity tool trying to survive in an environment where milliseconds of latency are the difference between a headshot and a respawn screen. By removing the assistant from the Xbox mobile app and the console UI, Microsoft is admitting that a general-purpose Large Language Model (LLM) is the wrong tool for the living room.

For the average user, this looks like a feature removal. For those of us tracking the silicon, it’s a calculated move to reclaim overhead.

The Latency Trap: Why Cloud LLMs Fail the Console Test

The fundamental friction here is architectural. Copilot operates primarily via cloud-based inference. When a user triggers a prompt, the request leaves the Xbox SoC (System on a Chip), travels over the network to a Microsoft Azure data center, is processed by a massive, parameter-heavy model, and comes back as a stream of generated tokens. In a productivity suite like Word or Excel, a 500ms round trip is negligible. In a gaming ecosystem, it’s an eternity.
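
To make the latency argument concrete, here is a small sketch that converts a round trip into rendered frames. The 500ms value is the illustrative figure used above, not a measured Copilot latency, and the frame rates are common targets rather than platform guarantees.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame at a given frame rate, in milliseconds."""
    return 1000.0 / fps


def frames_elapsed(round_trip_ms: float, fps: float) -> float:
    """Number of frames rendered while waiting for one network round trip."""
    return round_trip_ms * fps / 1000.0


if __name__ == "__main__":
    for fps in (30, 60, 120):
        print(f"{fps} fps: {frame_budget_ms(fps):.2f} ms per frame, "
              f"{frames_elapsed(500, fps):.0f} frames per 500 ms round trip")
```

At 60 fps, a 500ms wait spans 30 frames, which is why a delay that goes unnoticed in a document editor feels sluggish in a console UI.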

Integrating a cloud-dependent AI into the console shell creates a “stutter” in the user experience. Even if the AI isn’t stealing GPU cycles from the game itself, the API calls and background synchronization required to keep Copilot “aware” of the user’s state create unnecessary noise on the network stack. We are seeing a shift away from the “everything-is-an-AI-chatbot” era toward a more surgical application of machine learning.

The reality is that gamers don’t want a chatbot to tell them how to find a hidden collectible in Starfield; they want a seamless, integrated wiki or an in-game hint system that doesn’t require leaving the primary render loop. Copilot was too broad, too slow, and too disconnected from the actual game code.

The 30-Second Verdict: Why This Happened

- Utility Gap: General AI assistants provide low value for high-intensity gaming sessions.

- Resource Overhead: Reducing background API polling improves system stability.

- Strategic Pivot: Shifting focus from “UI Assistants” to “In-Engine Generative AI.”

- User Friction: The mobile app integration was an awkward bridge to nowhere.

Architectural Dead-Ends and the NPU Void

If we look under the hood of the Xbox Series X, we see a beast of a machine, but it’s a beast designed for rasterization and ray tracing, not local LLM inference. Current consoles lack a dedicated NPU (Neural Processing Unit) capable of running quantized models locally. While the AMD Zen 2 CPU cores are a powerhouse for general compute, they aren’t optimized for the dense matrix multiplications that real-time AI generation requires.

To make Copilot truly useful, Microsoft would have needed to ship a local small language model (SLM) that could run on the console’s hardware without eating into the memory budget allocated to the game. Since that isn’t practical on current-gen consoles, they were forced to rely on the cloud. This creates a dependency on high-speed internet that contradicts the “offline” nature of many core gaming experiences.
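
A back-of-the-envelope calculation shows why local inference is a tight fit. The sketch counts model weights only (it ignores the KV cache, activations, and runtime overhead, which all add more), and the 13.5 GB game budget is an assumption based on publicly reported Xbox Series X figures; treat it as a parameter rather than a spec.

```python
# Assumed memory available to a game title, in GB (based on publicly reported
# Xbox Series X figures; adjust for other hardware).
GAME_MEMORY_BUDGET_GB = 13.5


def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Memory for model weights alone, in decimal gigabytes.

    Ignores KV cache, activations, and runtime overhead, so real usage is higher.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def share_of_budget(footprint_gb: float, budget_gb: float = GAME_MEMORY_BUDGET_GB) -> float:
    """Fraction of the game's memory budget the weights would consume."""
    return footprint_gb / budget_gb


if __name__ == "__main__":
    for params, bits in [(3, 16), (3, 4), (7, 4)]:
        gb = weight_footprint_gb(params, bits)
        print(f"{params}B params @ {bits}-bit: {gb:.1f} GB "
              f"({share_of_budget(gb):.0%} of assumed budget)")
```

Under these assumptions, a 3-billion-parameter model quantized to 4 bits needs about 1.5 GB for weights alone, roughly 11% of the assumed budget, before any inference working memory is counted.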

The industry is moving toward “AI PCs” with dedicated NPUs. Microsoft is likely realizing that the console is the wrong place to experiment with general AI until the next hardware cycle introduces silicon specifically designed for tensor operations.

“The industry is realizing that the ‘Chatbot UI’ is a legacy interface. The future of AI in gaming isn’t a side-panel assistant; it’s the integration of LLMs directly into the NPC behavior trees and procedural world-building, which requires deep engine integration, not a cloud API wrapper.” — Marcus Thorne, Lead AI Architect at NeuralGaming Labs.

From Chatbots to Generative Agents: The Strategic Pivot

Microsoft isn’t abandoning AI in gaming; they are just moving it from the shell to the core. There is a massive difference between a chatbot that tells you your subscription is expiring and a generative agent that allows an NPC to react dynamically to your voice input in real-time.

We are seeing a transition toward what I call “Semantic Game Integration.” Instead of Copilot, we will see the integration of AutoGen-style frameworks where AI agents manage game states or generate quests on the fly. This requires the AI to have access to the game’s memory and state tree, something a general-purpose assistant like Copilot cannot do without compromising security and stability.
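
The difference between a side-panel assistant and an in-engine agent is largely a question of interfaces. The sketch below is hypothetical: it does not use AutoGen’s API, and every class and function name is invented for illustration. The agent receives an immutable snapshot of the state it is allowed to see and returns a proposal; the engine, not the model, decides whether to apply it. The quest generator is a deterministic stub standing in for a real model call.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class GameStateSnapshot:
    """Hypothetical read-only view of the state an agent is allowed to see."""
    player_level: int
    region: str
    completed_quests: FrozenSet[str]


@dataclass(frozen=True)
class QuestProposal:
    """Hypothetical quest suggested by the agent; not yet part of the game."""
    title: str
    region: str
    min_level: int


def propose_quest(state: GameStateSnapshot) -> QuestProposal:
    """Deterministic stub standing in for a generative model call."""
    title = f"Scout the outskirts of {state.region}"
    if title in state.completed_quests:
        title = f"Return to {state.region}"
    return QuestProposal(title=title, region=state.region, min_level=state.player_level)


def engine_accepts(state: GameStateSnapshot, proposal: QuestProposal) -> bool:
    """Engine-side validation: the agent never writes game memory directly."""
    return proposal.region == state.region and proposal.min_level <= state.player_level


if __name__ == "__main__":
    snapshot = GameStateSnapshot(player_level=12, region="Ashfall", completed_quests=frozenset())
    proposal = propose_quest(snapshot)
    print(proposal.title, engine_accepts(snapshot, proposal))
```

Keeping the agent on the proposal side of that boundary is one way to give it access to game state without handing it write access to game memory.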

Consider the difference in resource allocation:

| Feature | General AI (Copilot) | Specialized Game AI (Generative Agents) |
|---|---|---|
| Inference Location | Cloud (Azure) | Hybrid (Local NPU/Edge) |
| Latency Requirement | Moderate (1-2 seconds) | Ultra-Low (<100ms) |
| Data Input | User Prompts | Game State/Telemetry/Player Action |
| Primary Goal | Information Retrieval | Immersion & Dynamic Gameplay |
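
The “Hybrid” row implies a routing decision: use the most capable inference path that still fits the latency budget, and fall back to scripted behavior when none does. This is a minimal sketch under that assumption; the path names and latency numbers are illustrative, not measurements of any Microsoft service.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class InferencePath:
    name: str
    expected_latency_ms: float  # illustrative estimate, not a measured figure


def choose_path(budget_ms: float, paths: Sequence[InferencePath]) -> Optional[InferencePath]:
    """Return the first path, in preference order, that fits the latency budget.

    Returns None when nothing fits, signalling the caller to use
    non-generative (scripted) behavior instead.
    """
    for path in paths:
        if path.expected_latency_ms <= budget_ms:
            return path
    return None


# Ordered from most capable to fastest; all numbers are hypothetical.
PATHS = [
    InferencePath("cloud", 1500.0),
    InferencePath("edge", 80.0),
    InferencePath("local_npu", 20.0),
]

if __name__ == "__main__":
    for budget in (2000.0, 100.0, 10.0):
        chosen = choose_path(budget, PATHS)
        print(budget, chosen.name if chosen else "scripted fallback")
```

Ordering the list by preference keeps the policy explicit: a menu query with a two-second budget can use the cloud model, while an NPC reaction under 100ms never waits on a data center.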

The Ecosystem Ripple Effect

This move also has significant implications for platform lock-in. By stripping Copilot, Microsoft is reducing the “friction” for third-party developers who might have been wary of a dominant, first-party AI layer intercepting user data or interfering with game-specific UI. It’s a nod to the open-ecosystem philosophy that has become more prevalent as antitrust scrutiny increases.

It also allows Microsoft to double down on AI in Windows and Office, where the return on investment is far higher. In the enterprise space, Copilot is a revenue generator. In the gaming space, it was a novelty. Silicon Valley is finally trimming the fat.

For those concerned about cybersecurity, the removal of a cloud-connected, high-privilege assistant from the console shell reduces the attack surface. Every API endpoint is a potential vector for prompt injection or data leakage. By narrowing the scope of AI on the console, Microsoft is effectively hardening the environment against the emerging class of LLM-based exploits.
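
One concrete way to narrow an assistant’s scope is to let model output select only from a fixed allowlist of actions, so injected instructions cannot reach anything more privileged. The action names below are hypothetical, and an allowlist reduces rather than eliminates prompt-injection risk (an allowed action can still be triggered at the wrong time).

```python
import json

# Hypothetical low-privilege actions a narrowly scoped assistant may trigger.
ALLOWED_ACTIONS = frozenset({"show_achievements", "open_store_page"})


def dispatch_model_output(raw: str) -> str:
    """Parse a model's proposed action and accept it only if allowlisted.

    Whatever text reaches the model (including injected instructions),
    its output can only select from ALLOWED_ACTIONS.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return "rejected: malformed output"
    if not isinstance(call, dict):
        return "rejected: malformed output"
    action = call.get("action")
    # Check the type first so unhashable values (lists, dicts) are rejected cleanly.
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return f"rejected: {action!r} is not allowlisted"
    return f"ok: {action}"


if __name__ == "__main__":
    print(dispatch_model_output('{"action": "show_achievements"}'))
    print(dispatch_model_output('{"action": "delete_save_data"}'))
```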

The “AI everywhere” gold rush of 2023-2025 is ending. We are entering the era of “AI where it actually works.” For Xbox, that means getting the chatbot out of the way and letting the games actually play.

The Bottom Line for Developers

If you’re building for Xbox, stop trying to integrate general-purpose LLM wrappers. Focus on edge-computing AI and specialized models that can live within the game’s memory budget. The era of the “Side-Panel Assistant” is dead; the era of the “Living World” is just beginning.