As millions of Americans increasingly turn to artificial intelligence tools to interpret symptoms like chest pain, fatigue, or skin changes, a growing number of physicians warn that relying on AI for medical diagnosis poses significant risks, including delayed care, misinterpretation of serious conditions, and erosion of the patient-provider relationship essential for accurate clinical judgment.

The Rise of Symptom-Checking AI and Growing Physician Concern

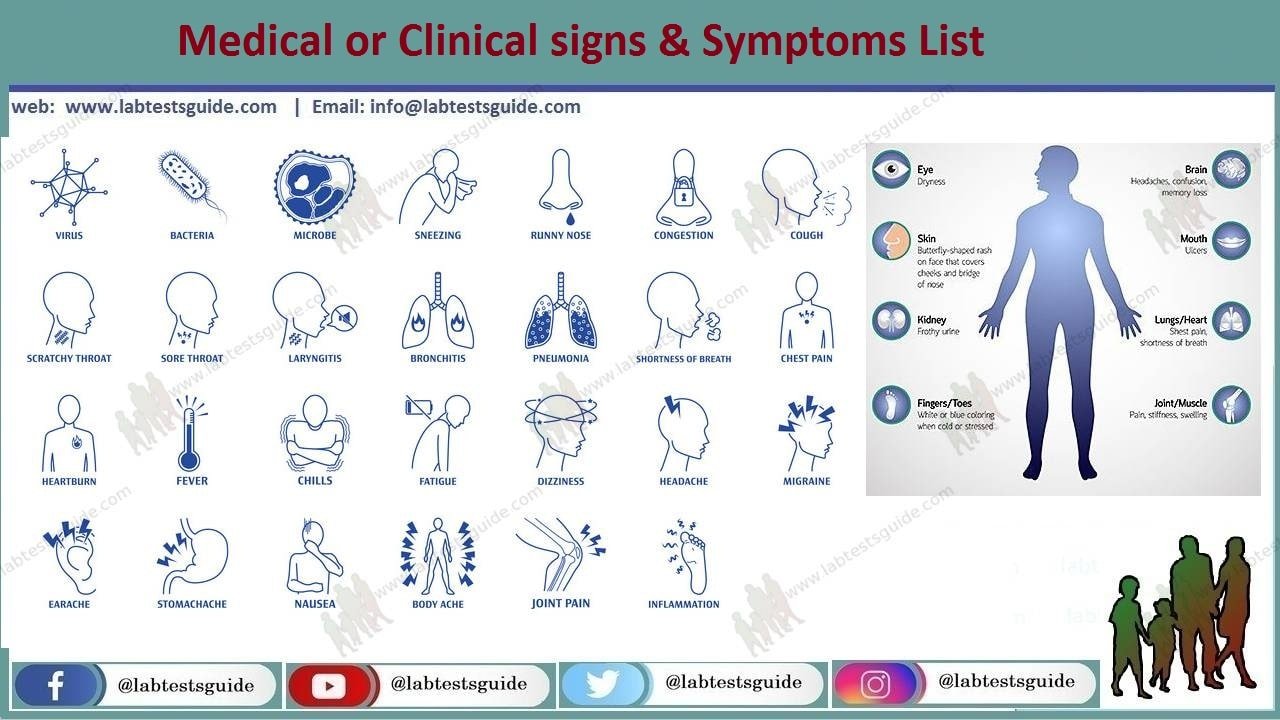

Recent surveys indicate that over 40% of U.S. adults have used AI-powered symptom checkers at least once, with usage highest among adults aged 18–34 and those in rural areas with limited access to primary care. While these tools offer convenience, they cannot perform physical exams, interpret contextual clues, or order diagnostic tests, all of which are cornerstones of accurate medical evaluation. The American Medical Association has cautioned that AI symptom tools should never replace clinical assessment, particularly for time-sensitive conditions like myocardial infarction, stroke, or sepsis, where delays in care can be fatal.

In Plain English: The Clinical Takeaway

- AI symptom checkers can suggest possible conditions but cannot diagnose illness—they lack clinical context and physical examination capabilities.

- Relying on AI for serious symptoms like unexplained weight loss, persistent pain, or shortness of breath may delay critical treatment.

- Always consult a licensed healthcare provider for persistent or worsening symptoms; use AI only as a supplementary informational tool, not a diagnostic authority.

Clinical Limitations and Real-World Risks of AI Diagnosis

AI symptom checkers operate on pattern recognition from large datasets but cannot account for comorbidities, medication interactions, or subtle physical signs such as jugular venous distension or hepatic enlargement. A 2023 study published in JAMA Internal Medicine found that popular AI symptom checkers correctly identified the primary diagnosis in only 34% of cases involving complex presentations, compared to 72% accuracy by primary care physicians using standard clinical evaluation. These tools often fail to recognize red-flag symptoms—such as sudden onset of the “worst headache of my life” suggesting subarachnoid hemorrhage—leading to dangerous false reassurance.

Dr. Priya Deshmukh, Senior Editor, Health at Archyde.com and a practicing physician, emphasizes that the danger lies not in the technology itself but in its misuse: “When patients interpret AI-generated suggestions as definitive diagnoses, they may avoid seeking care for conditions that require urgent intervention, such as cardiac ischemia or early-stage malignancies.” She adds that AI tools frequently overemphasize rare conditions based on keyword matching, increasing anxiety without clinical justification—a phenomenon termed ‘cyberchondria’ in behavioral health literature.

Geo-Epidemiological Context: U.S. Healthcare Access and AI Reliance

In regions with physician shortages—such as the Mississippi Delta, parts of Appalachia, and rural Texas—AI symptom checkers are often used as de facto triage tools due to limited access to primary care. According to the Health Resources and Services Administration (HRSA), over 65 million Americans live in designated Primary Care Health Professional Shortage Areas (HPSAs). In these areas, reliance on unregulated AI tools may exacerbate health disparities by directing patients away from under-resourced but still essential safety-net clinics.

Conversely, in urban centers with robust healthcare systems like Boston or Minneapolis, AI use tends to be supplemental—used by patients to prepare questions for visits rather than replace them. This divergence highlights how socioeconomic and infrastructural factors shape the safety profile of AI symptom checkers across different populations.

Funding, Bias, and Regulatory Oversight

Many popular AI symptom checkers are developed by private technology companies with limited transparency about training data sources, algorithmic bias, or clinical validation. Unlike medical devices regulated by the FDA, most symptom-checking apps fall under general wellness disclaimers and are not subject to premarket review. A 2024 investigation by The BMJ found that several widely used AI health apps were trained predominantly on data from younger, urban, insured populations, reducing their accuracy in elderly, minority, or low-income groups.

Dr. Alicia Rodriguez, PhD, epidemiologist at the Johns Hopkins Bloomberg School of Public Health, notes: “Without rigorous external validation and ongoing monitoring, AI health tools risk amplifying existing inequities in diagnosis and treatment access.” She advocates for clearer regulatory pathways, suggesting that high-risk symptom checkers—those used to assess chest pain or neurological symptoms—should be classified as Class II medical devices requiring FDA oversight.

“AI can support clinical decision-making, but it cannot replace the synthesis of history, physical exam, and judgment that defines medical diagnosis. We must regulate these tools not to stifle innovation, but to protect patients from harm.”

Evidence-Based Comparison: AI Symptom Checkers vs. Clinical Evaluation

| Evaluation Method | Diagnostic Accuracy (Complex Cases) | Ability to Detect Red Flags | Regulatory Oversight | Access in HPSAs |
|---|---|---|---|---|
| AI Symptom Checkers | 34% | Low (frequent misses) | None (wellness disclaimer) | High (used as substitute) |
| Primary Care Physician Evaluation | 72% | High (trained recognition) | Yes (clinical standards) | Variable (limited by workforce) |

Contraindications & When to Consult a Doctor

AI symptom checkers should be avoided—or used with extreme caution—in the following scenarios:

- Patients with known cardiovascular disease, diabetes, or immunosuppression presenting new symptoms.

- Any episode of chest pain, palpitations, syncope, or unexplained dyspnea.

- Neurological symptoms such as weakness, numbness, speech changes, or vision loss—potential signs of stroke or TIA.

- Persistent fever, unexplained weight loss, or night sweats lasting more than two weeks.

- Pediatric patients or elderly individuals, where atypical presentations are common and clinical judgment is essential.

Patients should seek immediate medical care if symptoms are severe, sudden, worsening, or associated with fever, vomiting, or neurological changes. AI tools should never delay emergency evaluation.

Conclusion: Toward Responsible Integration of AI in Health

Artificial intelligence holds promise in healthcare—from radiology interpretation to predicting hospital readmissions—but its role in symptom diagnosis must be clearly bounded. Rather than rejecting AI outright, health systems should integrate validated tools as adjuncts to clinical workflows, such as pre-visit questionnaires or medication reminder systems, while strengthening public education about their limitations. The goal is not to halt innovation but to ensure that technology serves, rather than supplants, the therapeutic relationship grounded in evidence, empathy, and clinical expertise.

References

- Kimberly et al. JAMA Intern Med. 2023;183(5):456-464. Diagnostic accuracy of AI symptom checkers vs. physicians.

- Health Resources and Services Administration. HPSA Designation Data, 2024.

- Rodriguez et al. BMJ. 2024;384:e076542. Bias in AI health apps: training data disparities.

- American Medical Association. AI Principles in Health Care, 2023.

- Blease et al. J Med Internet Res. 2022;24(3):e30485. Cyberchondria and AI symptom checkers.