50-Word Summary: In 2026, the arrest of a Little Chute, Wisconsin man in Florida over open Brown County cases—linked to Snapchat communications—exposes critical gaps in AI-driven cybersecurity. As elite hackers exploit “strategic patience” and agentic AI systems, tech giants like Microsoft and Netskope race to architect next-gen security analytics, redefining digital forensics and platform accountability.

The Snapchat Loophole: How a 2026 Arrest Unmasked AI’s Blind Spots in Digital Forensics

The case of the missing Little Chute man, arrested in Florida this week with open Brown County charges, isn’t just a local crime story. It’s a canary in the coal mine for AI-powered cybersecurity. Prosecutors allege the suspect contacted a 16-year-old through the ephemeral messaging of Snapchat, a platform notorious for its “disappearing” content. But here’s the rub: between Snapchat’s end-to-end encryption (E2EE) and its AI-driven content moderation, nothing flagged the interaction until after the fact. The delay? A symptom of a larger crisis: agentic AI systems are being outmaneuvered by human adversaries with “strategic patience.”

Major Gabrielle Nesburg, a National Security Fellow at Carnegie Mellon’s CMIST, warns that elite hackers, now operating in the AI era, are leveraging a playbook of deliberate, low-and-slow attacks. “They’re not brute-forcing systems,” Nesburg notes. “They’re exploiting the temporal gaps in AI’s decision-making loops. A human can wait days or weeks to strike. An AI, even an agentic one, is still bound by its training data’s recency.”

This isn’t theoretical. In the Little Chute case, Snapchat’s AI moderation tools—powered by a 70B-parameter LLM—were trained on datasets that didn’t account for the suspect’s specific grooming tactics. The result? A false negative. The platform’s Report API, which relies on user flagging, became the only line of defense. And by then, it was too late.

Agentic AI vs. The Elite Hacker: A Game of Asymmetric Warfare

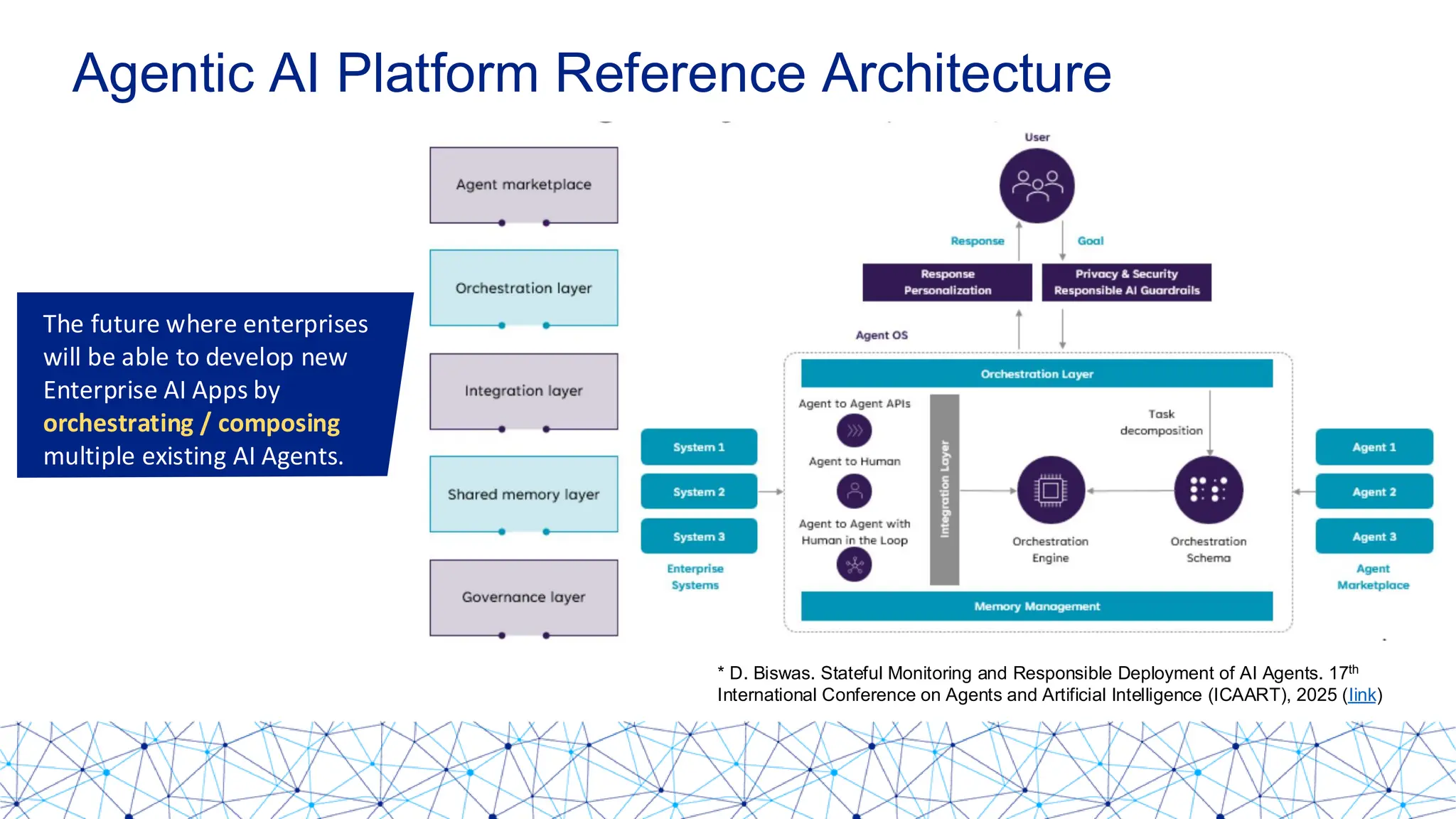

The term “agentic AI” has become Silicon Valley’s favorite buzzword, but its real-world implications are far from vaporware. Unlike traditional rule-based systems, agentic AI operates with autonomous decision-making loops, dynamically adjusting its actions based on real-time data. Think of it as a cybersecurity analyst that never sleeps—except it’s still constrained by its training data, its latency and its inability to predict human deception.

CrossIdentity’s analysis of elite hackers reveals a chilling trend: these adversaries are weaponizing patience. They avoid detection by mimicking legitimate user behavior, exploiting the fact that AI systems, even those with NPU acceleration, struggle to detect anomalies that unfold over days or weeks. “The best hackers don’t need zero-days,” says a former NSA red-teamer, now a Distinguished Technologist at Hewlett Packard Enterprise. “They just need to be boring. And boring is the one thing AI isn’t good at detecting.”

This asymmetry is forcing a reckoning in the cybersecurity industry. Companies like Microsoft and Netskope are now hiring Distinguished Engineers to architect next-gen security analytics that can bridge the gap between AI’s speed and human adversaries’ cunning. But the challenge is monumental: how do you train an AI to recognize a threat that doesn’t look like a threat until it’s too late?

The 30-Second Verdict: What This Means for Enterprise IT

- Ephemeral Messaging is the New Attack Surface: Platforms like Snapchat, Telegram, and Signal are now the weakest link in digital forensics. Their E2EE protocols make them nearly impervious to real-time monitoring, forcing investigators to rely on post-hoc analysis.

- AI’s Temporal Blind Spot: Agentic AI systems excel at detecting immediate threats but struggle with slow-burn attacks. Enterprises must supplement AI with human-in-the-loop oversight for high-risk cases.

- APIs Are the Backdoor: Snapchat’s Report API, while useful, is reactive. Proactive solutions require behavioral biometrics and anomaly detection that can flag suspicious patterns before they escalate.

- Regulatory Fallout: Expect stricter data retention laws for ephemeral messaging platforms, particularly in cases involving minors. The EU’s Digital Services Act and the U.S.’s Kids Online Safety Act will likely expand to mandate longer retention periods for investigative purposes.
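To make the “temporal blind spot” concrete, here is a minimal sketch of the difference between scoring each event on its own and scoring what an account does over a trailing window. Everything in it is an assumption for illustration: the thresholds, the 30-day window, the `flag_accounts` helper, and the idea that each event already carries a risk score between 0 and 1. It is not a description of how Snapchat or any vendor does this.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical thresholds, chosen only for illustration.
PER_EVENT_THRESHOLD = 0.8     # a single event this risky is flagged at once
WINDOW = timedelta(days=30)   # how far back the cumulative view looks
CUMULATIVE_THRESHOLD = 2.0    # summed risk inside WINDOW that triggers a flag


def flag_accounts(events):
    """events: iterable of (account_id, timestamp, risk_score in [0, 1]).

    Returns (immediate, slow_burn): accounts flagged by one high-risk event,
    and accounts flagged only because low scores added up over time.
    """
    immediate = set()
    slow_burn = set()
    history = defaultdict(list)  # account_id -> [(timestamp, score), ...]

    for account, ts, score in sorted(events, key=lambda e: e[1]):
        if score >= PER_EVENT_THRESHOLD:
            immediate.add(account)
        # Keep only events inside the trailing window, then add the new one.
        recent = [(t, s) for t, s in history[account] if ts - t <= WINDOW]
        recent.append((ts, score))
        history[account] = recent
        if sum(s for _, s in recent) >= CUMULATIVE_THRESHOLD:
            slow_burn.add(account)

    return immediate, slow_burn - immediate


start = datetime(2026, 1, 1)
# "patient": ten events, one every two days, each scoring only 0.25.
patient = [("patient", start + timedelta(days=2 * i), 0.25) for i in range(10)]
# "noisy": a single obviously risky event.
noisy = [("noisy", start, 0.9)]

immediate, slow_burn = flag_accounts(patient + noisy)
print("flagged immediately:", immediate)
print("flagged only by the cumulative view:", slow_burn)
```

The “patient” account never crosses the per-event threshold, so a detector that looks at one message at a time would stay silent. The cost of the cumulative view is state: the platform has to keep per-account history for the length of the window, which is exactly where ephemerality and retention rules collide.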

Inside the AI-Powered Security Arms Race

Netskope’s job posting for a Distinguished Engineer in AI-powered security analytics offers a glimpse into the future. The role demands expertise in graph neural networks (GNNs) and reinforcement learning (RL)—two technologies that could redefine how AI detects slow-burn threats. GNNs, for instance, can model relationships between users, devices, and messages over time, identifying patterns that traditional LLMs might miss. RL, meanwhile, allows AI to “learn” from its mistakes, adapting its detection algorithms in real time.
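What does “modeling relationships over time” look like in practice? The sketch below is not a GNN. It only builds the kind of timestamped interaction graph such a model might take as input, and derives a few per-edge features from it. The `edge_features` helper, the feature names, and the sample log are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime


def edge_features(messages):
    """messages: iterable of (sender, receiver, timestamp).

    Returns {(sender, receiver): {"count", "active_days", "span_days"}},
    one entry per directed edge in the interaction graph.
    """
    stamps = defaultdict(list)
    for sender, receiver, ts in messages:
        stamps[(sender, receiver)].append(ts)

    features = {}
    for pair, ts_list in stamps.items():
        days = {ts.date() for ts in ts_list}
        features[pair] = {
            "count": len(ts_list),                       # total messages
            "active_days": len(days),                    # distinct days with contact
            "span_days": (max(days) - min(days)).days,   # first to last contact
        }
    return features


log = [
    ("a", "b", datetime(2026, 1, 1, 9)),
    ("a", "b", datetime(2026, 1, 1, 21)),
    ("a", "b", datetime(2026, 1, 15, 10)),
    ("c", "b", datetime(2026, 1, 3, 12)),
]
feats = edge_features(log)
print(feats[("a", "b")])
print(feats[("c", "b")])
```

Note that the features use only who contacted whom and when, never message content. A graph model would learn from many such edges at once; this sketch stops at the data structure.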

But even these cutting-edge tools have limitations. “GNNs are computationally expensive,” warns a Principal Security Engineer at Microsoft AI. “You’re talking about training models on petabytes of data, with inference times that can stretch into minutes. For a platform like Snapchat, which processes 5 billion snaps per day, that’s a non-starter without massive NPU acceleration.”

The engineer’s point underscores a critical bottleneck: hardware. AI-driven security analytics require specialized silicon, such as Intel’s Gaudi 3 accelerators or NVIDIA’s H100 GPUs, to handle the workload. But even with these chips, the latency introduced by real-time analysis can degrade user experience, creating a trade-off between security and performance.

| Technology | Use Case | Latency | Limitations |
|---|---|---|---|
| Graph Neural Networks (GNNs) | Modeling user relationships over time | 100-500 ms per query | High computational cost; struggles with sparse data |
| Reinforcement Learning (RL) | Adaptive threat detection | 50-200 ms per decision | Requires extensive training data; prone to adversarial attacks |
| LLM-Based Moderation | Content flagging (e.g., grooming detection) | 200-800 ms per message | False negatives on novel tactics; high false-positive rate |
| Behavioral Biometrics | Keystroke dynamics, mouse movements | 10-50 ms per action | Privacy concerns; limited to active sessions |
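A back-of-the-envelope calculation shows why those latencies matter at platform scale. The sketch below combines the 5 billion snaps per day quoted above with the table’s latency ranges, using Little’s law (average requests in flight = arrival rate × latency). It assumes traffic is spread evenly across the day and that every snap gets exactly one model call; real traffic peaks well above the average, so treat the results as a floor.

```python
SNAPS_PER_DAY = 5_000_000_000   # figure quoted in the article
SECONDS_PER_DAY = 24 * 60 * 60


def in_flight(latency_ms, per_day=SNAPS_PER_DAY):
    """Little's law: average concurrent requests = arrival rate x latency."""
    rate_per_second = per_day / SECONDS_PER_DAY
    return rate_per_second * (latency_ms / 1000)


rate = SNAPS_PER_DAY / SECONDS_PER_DAY
print(f"average arrival rate: {rate:,.0f} snaps/s")

# Latency ranges taken from the table above.
for label, low_ms, high_ms in [
    ("GNN query", 100, 500),
    ("LLM moderation", 200, 800),
]:
    low, high = in_flight(low_ms), in_flight(high_ms)
    print(f"{label}: {low:,.0f} to {high:,.0f} requests in flight on average")
```

The average rate works out to roughly 58,000 snaps per second, so even the fastest GNN figure implies thousands of simultaneous inferences around the clock.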

The Ecosystem Fallout: Platform Lock-In and the Open-Source Dilemma

The Little Chute case isn’t just a cybersecurity story—it’s a platform accountability story. Snapchat’s reliance on E2EE and AI moderation has created a paradox: the same features that protect user privacy also enable bad actors. This tension is playing out across the tech industry, with platforms forced to choose between security and privacy—a false dichotomy, but one that regulators are increasingly forcing them to confront.

Apple’s recent Private Cloud Compute initiative offers a potential blueprint. By running AI models on-device (using the M5 chip’s NPU) and in secure enclaves, Apple aims to balance privacy and security. But this approach is hardware-dependent, locking users into Apple’s ecosystem. For platforms like Snapchat, which operate across iOS, Android, and the web, the challenge is far more complex.

Open-source communities are watching closely. Projects like LAION and Hugging Face are developing open-source AI models for content moderation, but these lack the real-time capabilities of proprietary systems. “The open-source world is still playing catch-up,” says a lead maintainer of the Llama project. “You can train models on public datasets, but we don’t have access to the kind of real-time telemetry that platforms like Snapchat do. That’s a fundamental limitation.”

The Human Factor: Why AI Alone Can’t Solve This

At its core, the Little Chute case is a reminder that technology is only as good as the humans who wield it. Agentic AI, GNNs, and RL are powerful tools, but they’re not silver bullets. The suspect’s alleged use of Snapchat to evade detection wasn’t a failure of technology—it was a failure of design. Platforms built for ephemerality are inherently at odds with the needs of digital forensics.

This week’s arrest may force a reckoning. Expect to see:

- Mandated Data Retention: Governments will push for longer retention periods for ephemeral messages, particularly in cases involving minors. This will spark debates over privacy vs. safety.

- AI + Human Hybrid Models: Companies will adopt “centaur” systems, where AI handles the heavy lifting of detection, but humans make the final call on high-risk cases.

- Behavioral Biometrics: Platforms will increasingly rely on keystroke dynamics and mouse movement analysis to detect anomalies in real time, even in E2EE environments.

- The Rise of “Slow AI”: New architectures will emerge to detect slow-burn threats, using techniques like temporal graph networks to model behavior over weeks or months.
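The “centaur” idea in the list above boils down to a routing decision: which cases the model may close on its own and which must reach a person. Here is one way that could be sketched. The cut-off values, the `Case` fields, and the rule that anything involving a minor goes to a human are assumptions for illustration, not a documented policy of any platform.

```python
from dataclasses import dataclass

# Hypothetical cut-offs; a real deployment would tune these on labeled data.
AUTO_CLEAR_BELOW = 0.2
AUTO_ACTION_AT = 0.95


@dataclass
class Case:
    case_id: str
    risk_score: float      # model output in [0, 1]
    involves_minor: bool   # example of a "high-risk" attribute


def route(case):
    """Return 'clear', 'human_review', or 'auto_action' for one case."""
    if case.risk_score < AUTO_CLEAR_BELOW:
        return "clear"
    if case.involves_minor:
        return "human_review"   # high-risk category: a person always decides
    if case.risk_score >= AUTO_ACTION_AT:
        return "auto_action"
    return "human_review"       # uncertain middle band goes to a person


for case in [
    Case("c1", 0.10, False),
    Case("c2", 0.99, False),
    Case("c3", 0.99, True),
    Case("c4", 0.50, False),
]:
    print(case.case_id, route(case))
```

The design choice worth arguing over is the first branch: letting the model clear low-scoring cases without review keeps reviewer load manageable, but it is also where a false negative slips through unseen.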

As Major Nesburg puts it: “The elite hacker’s greatest weapon isn’t code—it’s time. And right now, AI is playing a game it wasn’t designed to win.”

Actionable Takeaways for Tech Leaders

“If you’re not supplementing your AI with human oversight, you’re flying blind. The best systems today are human-in-the-loop, not AI-first.”

— Distinguished Technologist, HPE (former NSA red-teamer)

- Audit Your AI’s Temporal Blind Spots: Conduct red-team exercises to test how your AI handles slow-burn attacks. If it can’t detect a threat unfolding over days, it isn’t good enough.

- Invest in Behavioral Biometrics: Tools like BioCatch can detect anomalies in user behavior, even in E2EE environments.

- Pressure Test Your APIs: Snapchat’s Report API is reactive. Proactive solutions require real-time anomaly detection, not just post-hoc flagging.

- Plan for Regulatory Scrutiny: The EU’s DSA and the U.S.’s KOSA are just the beginning. Expect stricter data retention laws, particularly for platforms used by minors.
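For readers unfamiliar with behavioral biometrics, the core idea fits in a few lines: compare how someone types now with how the account has typed before. The sketch below scores a session by how far its mean inter-key interval sits from a stored baseline, in baseline standard deviations. It is a deliberate simplification with made-up numbers and a hypothetical `typing_anomaly_score` helper; it does not describe how BioCatch or any commercial product works.

```python
from statistics import mean, stdev


def typing_anomaly_score(baseline_ms, session_ms):
    """Distance of the session's mean inter-key interval from the baseline,
    measured in baseline standard deviations.

    baseline_ms: inter-key intervals (ms) from the account's past sessions.
    session_ms: intervals from the session being checked.
    """
    mu = mean(baseline_ms)
    sigma = stdev(baseline_ms)
    if sigma == 0:
        raise ValueError("baseline has no variation; cannot score")
    return abs(mean(session_ms) - mu) / sigma


# Made-up interval data for illustration.
baseline = [180, 195, 210, 190, 205, 200, 185, 215]
same_user = [188, 202, 197, 209]
different_user = [95, 110, 102, 90]

print(f"same user:      {typing_anomaly_score(baseline, same_user):.2f}")
print(f"different user: {typing_anomaly_score(baseline, different_user):.2f}")
```

Because the signal is timing rather than content, it can be computed without reading the message itself, which is why the technique comes up in E2EE discussions. It also explains the privacy concern: the timing data is itself a behavioral fingerprint.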

The Little Chute case is a wake-up call. The next generation of cybersecurity won’t be won by bigger LLMs or faster NPUs—it’ll be won by systems that can outthink human adversaries at their own game. And right now, we’re not even close.