ChatGPT’s Personalized Game Recommendations: A Deep Dive into Affective Computing and LLM-Driven Preference Modeling

A recent report from Diario AS details a user’s experience with ChatGPT providing remarkably accurate game recommendations based on personal preferences. This isn’t simply a sophisticated filtering algorithm; it points toward affective computing, the ability of AI to recognize, interpret, and respond to human emotion and nuanced tastes. The core innovation lies in OpenAI’s continued refinement of its large language models (LLMs) to not just *understand* stated preferences, but to infer underlying motivations and psychological profiles from conversational data. This moves beyond collaborative filtering (think Amazon’s “Customers who bought this also bought…”) and toward recommendations shaped by what the user actually says about themselves.

The implications extend far beyond gaming. This technology foreshadows a future where AI acts as a hyper-personalized concierge for all aspects of digital life, from content creation to product discovery. However, it also raises critical questions about data privacy, algorithmic bias, and the potential for manipulative recommendation systems.

The LLM Parameter Scaling Advantage: Why ChatGPT Excels

ChatGPT’s success isn’t accidental. OpenAI has consistently pushed the boundaries of LLM parameter scaling. The company has not disclosed parameter counts for GPT-4 or GPT-4 Turbo; the widely repeated figure of roughly 1.76 trillion parameters for GPT-4 is an unconfirmed third-party estimate. Scale of this order allows a model to capture far more subtle patterns in language and behavior. Crucially, the model isn’t just memorizing data; it’s learning to *generalize* from it. This generalization is key to accurately predicting preferences even when presented with novel inputs. The shift from GPT-3.5 to GPT-4 Turbo also brought a much longer context window, allowing more of a user’s conversation history to be considered during the recommendation process. OpenAI has not said how it achieves this; techniques such as sparse attention, which reduce the computational cost of processing long sequences, are one commonly cited approach.
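To make the cost argument concrete, the sketch below builds one common sparse pattern, a causal sliding window, and counts how many query-key pairs it scores compared with dense causal attention. It is a generic illustration only: the function names and the window size are assumptions, and nothing here reflects how any OpenAI model is actually implemented.

```python
def sliding_window_mask(seq_len, window):
    """Causal mask: position i may attend to positions i-window+1 .. i."""
    return [[0 <= i - j < window for j in range(seq_len)]
            for i in range(seq_len)]


def attended_pairs(mask):
    """Number of (query, key) pairs the mask allows."""
    return sum(sum(row) for row in mask)


def dense_causal_pairs(seq_len):
    """Pairs scored by ordinary causal attention: 1 + 2 + ... + seq_len."""
    return seq_len * (seq_len + 1) // 2


if __name__ == "__main__":
    seq_len, window = 8, 3  # illustrative sizes, not real model settings
    sparse = attended_pairs(sliding_window_mask(seq_len, window))
    print(sparse, dense_causal_pairs(seq_len))  # prints "21 36"
```

Dense attention grows quadratically with sequence length, while the windowed variant grows roughly as sequence length times window size, which is why patterns like this are attractive for long contexts.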

The article highlights the user’s “astonishment” at the accuracy of the recommendations. This isn’t simply about suggesting popular titles; it’s about identifying games that align with the user’s *emotional* needs and playstyle. For example, if a user frequently expresses frustration with complex game mechanics, the AI might recommend a more streamlined, narrative-focused experience. This requires a level of understanding that traditional recommendation systems simply lack.
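For contrast, here is a minimal sketch of the kind of "traditional" system the article has in mind: item co-occurrence, the simplest form of collaborative filtering. The purchase histories and function names are made up for illustration. Note that it only sees which titles appear together; it has no access to why a player liked or disliked anything.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase histories (user -> set of titles), invented for this sketch.
HISTORIES = {
    "ana": {"Celeste", "Hades", "Hollow Knight"},
    "ben": {"Celeste", "Hades"},
    "cho": {"Hades", "Hollow Knight", "Dead Cells"},
    "dev": {"Celeste", "Stardew Valley"},
}


def co_occurrence(histories):
    """Count how often each ordered pair of titles shares a history."""
    counts = Counter()
    for titles in histories.values():
        for a, b in combinations(sorted(titles), 2):
            counts[(a, b)] += 1
            counts[(b, a)] += 1
    return counts


def also_bought(title, histories, top_n=3):
    """Rank other titles by co-occurrence with `title` (ties broken by name)."""
    counts = co_occurrence(histories)
    scores = {b: n for (a, b), n in counts.items() if a == title}
    return sorted(scores, key=lambda t: (-scores[t], t))[:top_n]


if __name__ == "__main__":
    print(also_bought("Celeste", HISTORIES))
    # prints ['Hades', 'Hollow Knight', 'Stardew Valley']
```

A player who says "I bounced off Hollow Knight because the combat stressed me out" gives a conversational model something to work with; the co-occurrence table above would still recommend it.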

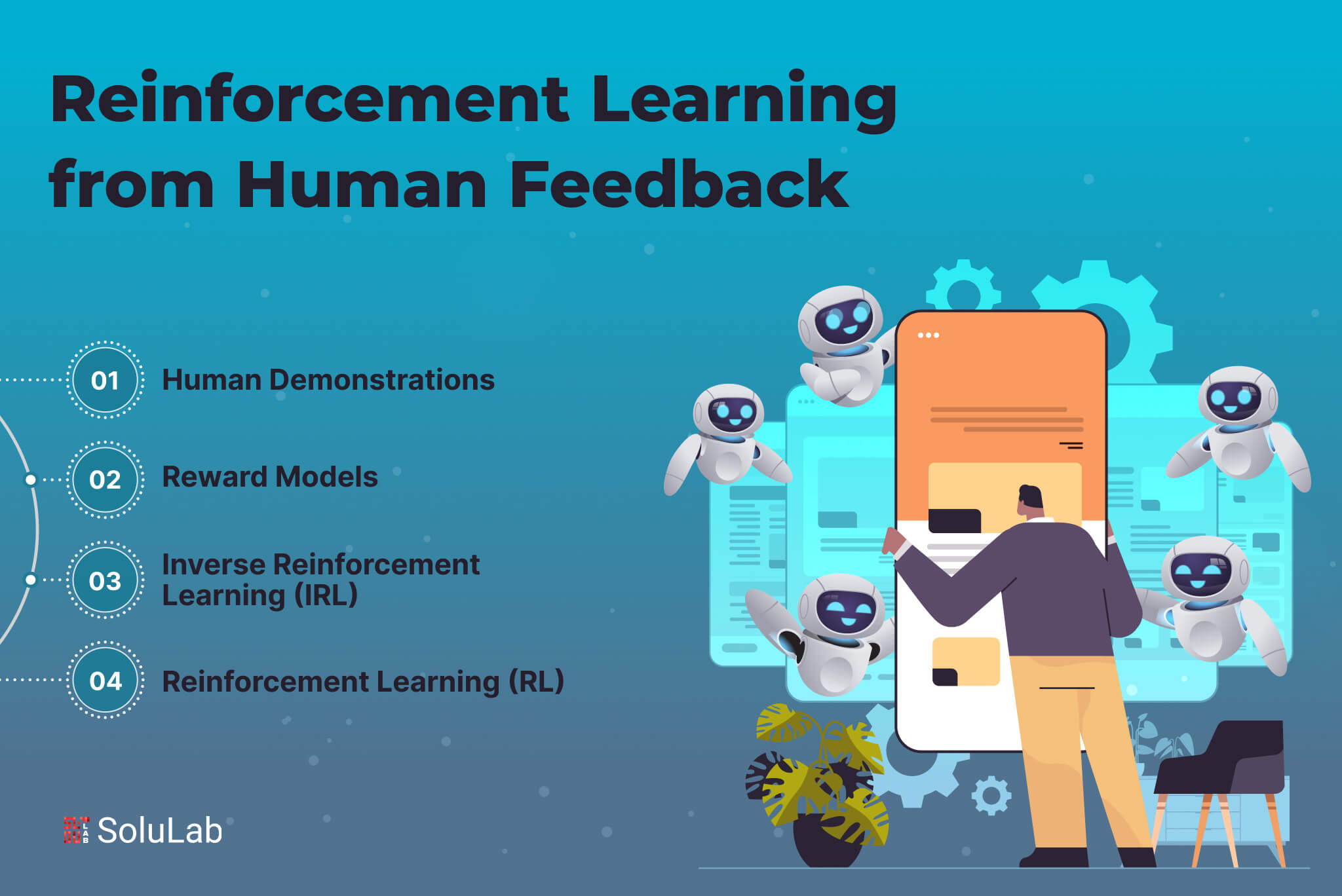

Beyond the Algorithm: The Role of Reinforcement Learning from Human Feedback (RLHF)

While parameter scaling is crucial, it’s not the whole story. OpenAI’s use of Reinforcement Learning from Human Feedback (RLHF) has been instrumental in shaping ChatGPT’s behavior. RLHF trains the model to align with human preferences through iterative feedback: human reviewers compare or rank the model’s responses, those judgments are used to train a reward model, and the language model is then fine-tuned to produce responses the reward model scores highly. This process is meant to make the model’s recommendations not only accurate but also helpful and appropriate. More recent techniques such as Direct Preference Optimization (DPO) streamline this pipeline by optimizing directly on preference pairs, without training a separate reward model.
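The two objectives named above can be written down in a few lines. The sketch below shows the standard pairwise (Bradley-Terry) loss commonly used to train a reward model from human comparisons, and the DPO loss for a single preference pair. The numeric inputs are hypothetical scalars standing in for model outputs; this illustrates the published formulas, not OpenAI’s training code.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def reward_model_loss(r_chosen, r_rejected):
    """Pairwise loss: small when the preferred response gets the higher reward."""
    return -log_sigmoid(r_chosen - r_rejected)


def dpo_loss(logp_chosen, logp_rejected,
             ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO loss for one pair, from policy and frozen-reference log-probabilities.

    `beta` controls how far the policy may drift from the reference model;
    0.1 is an illustrative default, not a recommended setting.
    """
    chosen_margin = logp_chosen - ref_logp_chosen
    rejected_margin = logp_rejected - ref_logp_rejected
    return -log_sigmoid(beta * (chosen_margin - rejected_margin))


if __name__ == "__main__":
    # Reward model agrees with the human ranking: lower loss.
    print(reward_model_loss(2.0, 0.0) < reward_model_loss(0.0, 2.0))  # prints True
    # Policy identical to the reference: loss equals log(2).
    print(math.isclose(dpo_loss(-1.0, -2.0, -1.0, -2.0), math.log(2)))  # prints True
```

In both cases the loss falls as the preferred response is scored, or made more likely, relative to the rejected one; DPO simply folds the reward into the policy’s own log-probabilities.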

However, RLHF isn’t without its challenges. Bias in the training data can lead to biased recommendations. For example, if the human reviewers predominantly favor certain genres or developers, the model may inadvertently perpetuate these biases. Addressing this requires careful curation of the training data and ongoing monitoring of the model’s performance.

The Data Privacy Tightrope: Profiling and the Ethics of Affective Computing

The ability to infer a user’s emotional state and psychological profile from their interactions with an AI raises serious data privacy concerns. What data is being collected? How is it being stored? And how is it being used? These are questions that regulators are increasingly scrutinizing. The European Union’s Digital Services Act (DSA) and the forthcoming AI Act are likely to impose strict requirements on companies that deploy AI-powered recommendation systems. The AI Act, in particular, aims to classify AI systems based on their risk level and impose corresponding obligations on developers and deployers.

“The personalization capabilities of LLMs are incredibly powerful, but they come with a significant responsibility. We need to ensure that these systems are transparent, accountable, and respectful of user privacy. The risk of creating ‘filter bubbles’ and reinforcing existing biases is very real.”

– Dr. Anya Sharma, CTO, SecureAI Solutions

OpenAI’s current privacy policy outlines its data collection practices, but it’s crucial for users to understand the extent to which their data is being used to personalize their experience. The use of differential privacy techniques – adding noise to the data to protect individual identities – can help to mitigate some of these risks, but it’s not a panacea.
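As a rough illustration of what "adding noise" means in practice, the sketch below applies the Laplace mechanism to a simple count, such as how many users mentioned a given genre. The function names, the count, and the epsilon values are assumptions made for this example; nothing here describes how OpenAI handles user data.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a count with epsilon-differential privacy (Laplace mechanism).

    `sensitivity` is how much one user can change the count (1 for a simple
    head count). Smaller epsilon means more noise and stronger privacy.
    """
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(42)  # seeded only so the demo is repeatable
    true_count = 100         # hypothetical: users who mentioned "roguelike"
    for epsilon in (1.0, 0.1):
        noisy = private_count(true_count, epsilon, rng)
        print(f"epsilon={epsilon}: released {noisy:.1f}")
```

The trade-off the article alludes to is visible in the parameters: a small epsilon hides any individual’s contribution well but makes the released statistic less useful, which is one reason differential privacy is not a panacea for personalization systems.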

The Ecosystem War: OpenAI vs. Google and the Battle for AI Dominance

This advancement by OpenAI isn’t happening in a vacuum. Google is aggressively pursuing similar capabilities with its Gemini models and its own RLHF techniques. The competition between OpenAI and Google is driving rapid innovation in the field of AI, but it’s also creating a fragmented ecosystem. Google AI’s blog provides insights into their ongoing research and development efforts. The key difference lies in OpenAI’s more open approach to API access, which has fostered a vibrant ecosystem of third-party developers building applications on top of its models. Google, historically, has been more protective of its core technologies.

This difference in strategy has implications for platform lock-in. OpenAI’s open API allows developers to integrate its models into a wide range of applications, reducing the risk of vendor lock-in. Google, by contrast, is pushing users towards its own ecosystem of services. The long-term winner of this ecosystem war will likely be the company that can best balance innovation with openness and interoperability.

What This Means for Enterprise IT

The implications for enterprise IT are profound. Imagine using AI to personalize training programs for employees, tailor marketing campaigns to individual customers, or even design products that meet the specific needs of different user segments. The possibilities are endless. However, enterprises will need to address the challenges of data security, compliance, and algorithmic bias before they can fully leverage these capabilities. Gartner’s research on AI adoption highlights these challenges and provides guidance for enterprises looking to implement AI solutions.

Furthermore, the increasing sophistication of LLMs will require enterprises to invest in robust cybersecurity measures to protect against AI-powered attacks. For example, LLMs can be used to generate highly convincing phishing emails or to automate the discovery of vulnerabilities in software systems.

The 30-Second Verdict: ChatGPT’s personalized game recommendations are a harbinger of a future where AI understands us better than we understand ourselves. While exciting, this future demands careful consideration of ethical implications and robust data privacy safeguards.

The canonical URL for the Diario AS article is: https://www.diarioas.com/tecnologia/chatgpt-recomienda-juego-perfecto-alucina-re-20240428163114.html