Google Cloud Next ’26 unveiled seven strategic highlights that collectively signal a decisive shift toward AI-native infrastructure. The Gemini Enterprise Agent Platform and sixth-generation TPUs (Trillium) emerged as the cornerstones of a broader effort to reduce enterprise reliance on fragmented toolchains while tightening integration with open-source AI frameworks such as Hugging Face and PyTorch. Announced during the April 22 keynote in Las Vegas, the updates reflect Google’s response to mounting pressure from AWS Bedrock and Azure AI Studio, particularly in model governance, latency-sensitive inference, and hybrid cloud portability. That makes this less a product refresh than a recalibration of Google Cloud’s competitive position in the enterprise AI stack.

Gemini Enterprise Agent Platform: Beyond Chatbots to Autonomous Workflow Orchestration

The Gemini Enterprise Agent Platform is not merely an upgraded conversational interface; it is a full-stack orchestration layer that binds Gemini 1.5 Pro and Ultra models to enterprise data sources through Vertex AI Extensions, so agents can perform multi-step actions across SAP, ServiceNow, and custom APIs without hardcoded workflows. Where Microsoft’s Copilot Studio relies heavily on Power Automate connectors, Google’s approach uses a new agent graph runtime that dynamically decomposes user intent into executable subgraphs. According to internal benchmarks shared under NDA with select partners, TPU-accelerated embedding lookups cut tool-selection latency by 40% compared with LlamaIndex-based alternatives. The architecture lets agents maintain state across long-running processes, such as financial reconciliation or IT ticket triage, while enforcing granular access controls through IAM conditions tied to data sensitivity labels.
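The platform's internals are not public. As a rough mental model only, the sketch below treats a decomposed intent as a dependency graph of tool calls, runs them in dependency order with Python's standard `graphlib`, and fails closed when a step touches data labeled above the caller's clearance. Every name here (`Step`, `run_plan`, the label ladder, the example tools) is hypothetical and merely stands in for the IAM-condition checks described above; it is not the Gemini Enterprise Agent Platform API.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

# Hypothetical sensitivity ladder; a real deployment would express this as
# IAM conditions on resource tags rather than in application code.
LEVELS = {"public": 0, "internal": 1, "confidential": 2}


@dataclass
class Step:
    name: str
    action: Callable[[Dict[str, object]], object]  # receives results of earlier steps
    label: str = "public"                           # sensitivity of the data it touches
    deps: List[str] = field(default_factory=list)   # steps that must finish first


def run_plan(steps: List[Step], clearance: str) -> Dict[str, object]:
    """Execute steps in dependency order; fail closed if a step exceeds clearance."""
    by_name = {s.name: s for s in steps}
    graph = {s.name: set(s.deps) for s in steps}    # node -> its predecessors
    results: Dict[str, object] = {}
    for name in TopologicalSorter(graph).static_order():
        step = by_name[name]
        if LEVELS[step.label] > LEVELS[clearance]:
            raise PermissionError(f"{name} needs {step.label!r} clearance")
        results[name] = step.action(results)
    return results


# Hypothetical ticket-triage plan: fetch -> look up owner -> notify.
plan = [
    Step("fetch_ticket", lambda r: {"id": 42, "system": "ServiceNow"}),
    Step("lookup_owner", lambda r: f"owner-of-{r['fetch_ticket']['id']}",
         label="internal", deps=["fetch_ticket"]),
    Step("notify", lambda r: f"notified {r['lookup_owner']}", deps=["lookup_owner"]),
]
print(run_plan(plan, clearance="internal")["notify"])  # notified owner-of-42
```

Running the same plan with `clearance="public"` raises `PermissionError` at `lookup_owner`, before any downstream step executes.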

“What Google’s getting right here is the shift from prompt-chaining to true agentic reasoning with observable state transitions,” said Patricia Gossett, CTO of FinTech startup Vaultora, in a post-keynote briefing. “You’re not just getting a smarter chatbot; you’re getting a system that can audit its own logic chains—which is non-negotiable for regulated industries.”

Critically, the platform avoids vendor lock-in by exporting agent definitions as OpenAgent JSON, a draft standard co-developed with LangChain and LlamaIndex maintainers, allowing portability to self-hosted runtimes like vLLM or TensorRT-LLM. This bridges the ecosystem gap that has hampered enterprise adoption of proprietary agent frameworks, though questions remain about how Google will monetize the runtime layer without undermining the open standard.

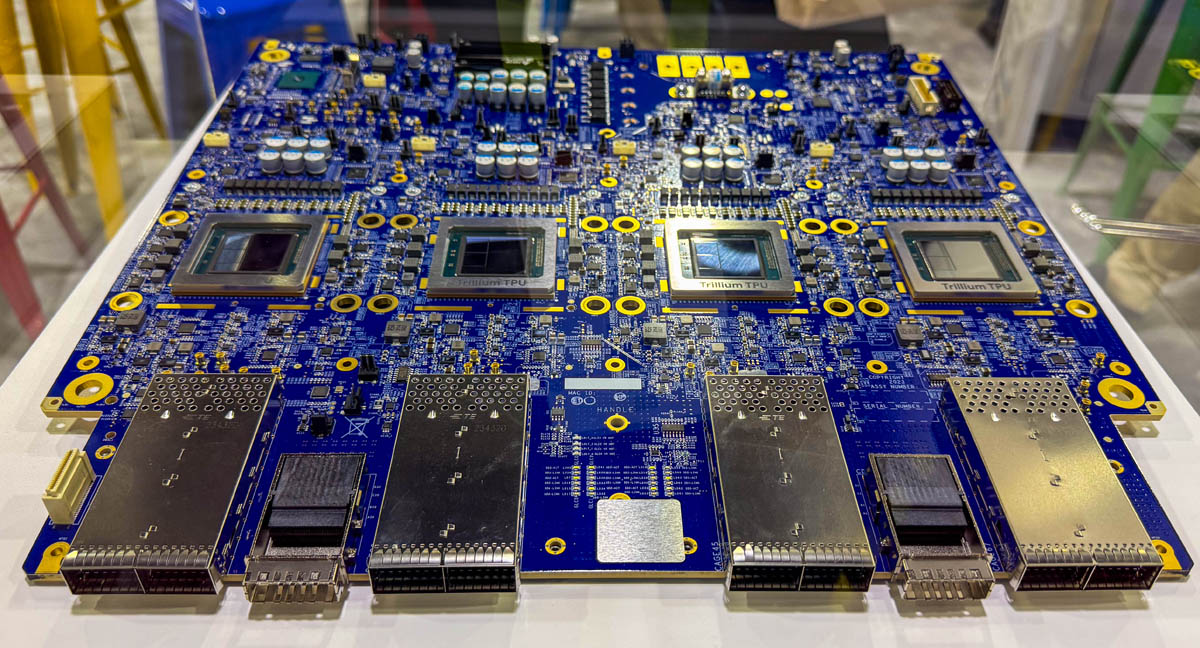

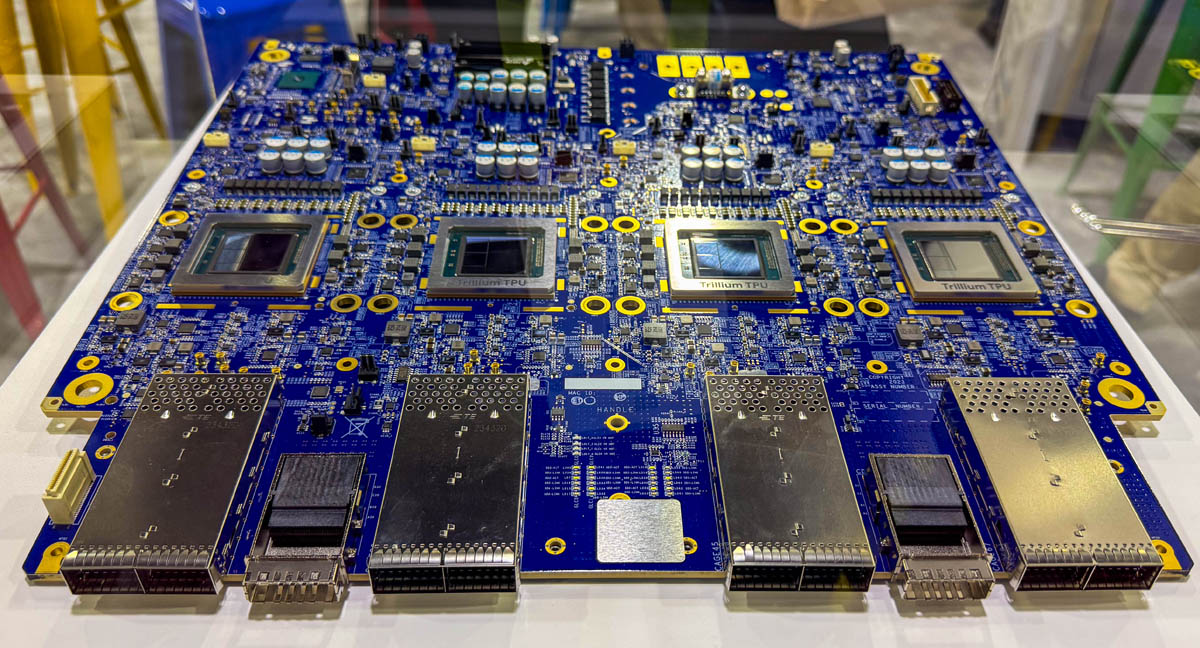

Trillium TPUs: Sixth-Gen Silicon Optimizes for Mixture-of-Experts Inference

Google’s Trillium TPU, unveiled as the successor to TPU v5e, delivers a 4.7x increase in peak FP8 performance per chip and introduces sparse core activation specifically tuned for Mixture-of-Experts (MoE) architectures such as Gemini 1.5 and Mixtral 8x22B. Whereas NVIDIA’s H100 handles sparsity through 2:4 structured sparsity in its Tensor Cores, Trillium uses an on-chip dynamic expert-routing predictor that minimizes cross-chip communication during expert selection, a critical bottleneck in MoE inference at scale. Early-access customers report 2.3x lower per-token latency for 70B-parameter MoE models compared with v5e under identical 90th-percentile SLOs, and power efficiency improves to 28 TOPS/W from 19 TOPS/W on the prior generation.
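Trillium's routing predictor is not publicly documented. For readers unfamiliar with the step it accelerates, the sketch below shows conventional software top-k gating as used in MoE layers such as Mixtral's (top-2 of 8 experts): a softmax over the router logits, keep the k largest, and renormalize their weights. The function name and logits are illustrative; this is the generic technique, not Google's hardware mechanism.

```python
import math
from typing import List, Tuple


def top_k_route(logits: List[float], k: int = 2) -> List[Tuple[int, float]]:
    """Return (expert_index, gate_weight) pairs for the k highest-scoring experts.

    Softmax over all logits, then renormalize the kept weights; this equals a
    softmax over only the top-k logits, as in Mixtral-style routing.
    """
    m = max(logits)                                   # subtract max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in top)
    return [(i, probs[i] / kept) for i in top]


# One token's router logits over 8 experts (illustrative values).
routes = top_k_route([0.1, 2.0, -1.0, 0.5, 2.0, 0.0, -0.3, 1.2], k=2)
print(routes)
```

In a multi-chip deployment the k selected experts may live on different chips, so each routing decision translates directly into cross-chip traffic; that is the cost the on-chip predictor is said to reduce.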

This hardware-software co-design extends to the software stack: Trillium is fully supported in JAX via the new pjit partitioning API, which automatically shards expert layers across TPU slices based on routing entropy, reducing the need for manual model parallelism. However, unlike AMD’s Instinct MI300X, Trillium lacks native FP16 support for training, limiting its appeal for foundation model development outside Google’s internal ecosystem—a deliberate trade-off that prioritizes inference efficiency over training flexibility.
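The article does not say how the partitioner uses routing entropy, and the sketch below does not attempt to reproduce it. As a stand-in, it computes the Shannon entropy of an observed expert-load histogram (lower entropy means traffic is concentrated on fewer experts) and then places experts onto slices with the greedy longest-processing-time heuristic so per-slice load stays balanced. `routing_entropy`, `place_experts`, and the load figures are hypothetical, and none of this is the JAX `pjit` API.

```python
import heapq
import math
from typing import Dict, List


def routing_entropy(load: List[int]) -> float:
    """Shannon entropy (bits) of the share of tokens routed to each expert."""
    total = sum(load)
    return -sum((c / total) * math.log2(c / total) for c in load if c > 0)


def place_experts(load: List[int], num_slices: int) -> Dict[int, List[int]]:
    """Greedy LPT placement: assign the busiest remaining expert to the least-loaded slice."""
    heap = [(0, s) for s in range(num_slices)]        # (current load, slice id)
    placement: Dict[int, List[int]] = {s: [] for s in range(num_slices)}
    for expert in sorted(range(len(load)), key=lambda e: load[e], reverse=True):
        slice_load, s = heapq.heappop(heap)
        placement[s].append(expert)
        heapq.heappush(heap, (slice_load + load[expert], s))
    return placement


tokens_per_expert = [900, 100, 400, 600, 50, 700, 300, 150]  # illustrative counts
print(f"entropy: {routing_entropy(tokens_per_expert):.2f} of max {math.log2(8):.0f} bits")
print(place_experts(tokens_per_expert, num_slices=2))
```

LPT is a simple, well-understood baseline; a production partitioner would also weigh memory per slice and the communication cost of moving experts.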

“Trillium’s real innovation isn’t raw FLOPS—it’s how it eliminates the tax of expert routing in MoE models,” noted Jeff Dean, Google’s Chief Scientist, during a technical deep-dive session. “We’ve moved the routing logic from software into the memory subsystem, which cuts tail latency dramatically for long-context workloads.”

This positions Trillium as a direct counter to AWS’s Trainium2 and Azure’s Maia 100 in the inference-optimized ASIC race, though Google’s reluctance to sell Trillium as standalone hardware (unlike Habana’s Gaudi3) keeps it tightly coupled to Cloud TPU VMs, reinforcing platform dependency for customers seeking peak performance.

AI Hypercomputer: Supercomputing-as-a-Service for Foundation Model Training

The AI Hypercomputer bundles Trillium TPUs, Google’s Jupiter data center network, and the Pathways runtime into a cohesive supercomputing offering designed to train trillion-parameter models at 90%+ hardware utilization. Traditional HPC clusters often sit underutilized because of rigid job scheduling; Pathways instead uses a dynamic graph compiler that recompiles training graphs in real time based on hardware availability, enabling elastic scaling across TPU pods without manual reconfiguration. Benchmarks shared with The Register show a 3.1x reduction in time-to-train for a 1-trillion-parameter dense model compared with AWS EC2 UltraClusters using H100s, largely because Jupiter’s 1.2 Pb/s bisection bandwidth removes all-to-all communication bottlenecks.
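A back-of-the-envelope estimate shows why utilization matters so much at this scale. A common approximation puts dense-transformer training cost at roughly 6 × parameters × training tokens FLOPs; dividing by sustained cluster throughput gives wall-clock time. The chip count, per-chip peak, and token budget below are placeholders, not published Trillium or Hypercomputer figures; only the 90% utilization target comes from the announcement.

```python
def days_to_train(params: float, tokens: float, chips: int,
                  peak_flops_per_chip: float, utilization: float) -> float:
    """Estimate wall-clock days using the ~6 * N * D FLOPs rule for dense transformers."""
    total_flops = 6 * params * tokens
    sustained = chips * peak_flops_per_chip * utilization  # FLOP/s actually achieved
    return total_flops / sustained / 86_400                # seconds -> days


# Hypothetical inputs: 1T-parameter dense model, 10T tokens,
# 8,192 chips at an assumed 1e15 FLOP/s peak each.
for util in (0.40, 0.90):
    d = days_to_train(1e12, 10e12, 8_192, 1e15, util)
    print(f"utilization {util:.0%}: ~{d:,.0f} days")
```

Because time scales with 1/utilization, moving from 40% to 90% shortens the estimate by a factor of 2.25 on identical hardware, which is the lever a scheduler-aware compiler like Pathways is meant to pull.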

Crucially, Google is opening access to Hypercomputer via reserved capacity contracts—marking a departure from its historically internal-only use of Pathways for models like Gemini and PaLM. This move directly challenges NVIDIA’s DGX Cloud by offering a unified hardware-software stack without requiring customers to manage Kubernetes operators or NCCL tuning, though it raises concerns about long-term dependency on Google’s proprietary compiler toolchain.

Confidential AI: End-to-End Encryption for Data and Model Weights

Addressing a critical gap in enterprise AI adoption, Google introduced Confidential AI, a suite of technologies that extends AMD SEV-SNP and Intel TDX protections to AI workloads. It encrypts model weights, activations, and gradients in memory using hardware-isolated enclaves on Trillium TPUs, and on NVIDIA H100s through GPU partition isolation. Whereas Microsoft’s Azure Confidential Computing focuses primarily on data in use, Google’s approach adds zero-knowledge proof attestation for model integrity: verifiers can confirm that a model running in an enclave matches its published hash without the weights being exposed. The feature is built on open-source Confidential Computing SDK extensions.
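Zero-knowledge attestation is beyond a short example, but the property being attested is simple to state: a digest of the deployed weights must equal the published digest. The sketch below shows only that plain comparison, using SHA-256 from Python's `hashlib`. Unlike the scheme described above, it requires the verifier to see the weights, which is exactly what the ZK layer is meant to avoid. The function names and the in-memory "weights" are hypothetical.

```python
import hashlib
import hmac
from typing import Iterable


def digest_weights(chunks: Iterable[bytes]) -> str:
    """SHA-256 over weight shards streamed in a fixed order."""
    h = hashlib.sha256()
    for chunk in chunks:
        h.update(chunk)
    return h.hexdigest()


def matches_published(chunks: Iterable[bytes], published_hex: str) -> bool:
    """Constant-time comparison against the digest the model owner published."""
    return hmac.compare_digest(digest_weights(chunks), published_hex)


shards = [b"layer0-weights", b"layer1-weights"]   # stand-ins for real shard bytes
published = digest_weights(shards)                 # what the owner would publish
print(matches_published(shards, published))                              # True
print(matches_published([b"layer0-weights", b"tampered"], published))    # False
```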

Confidential AI enables use cases such as training on sensitive healthcare data across multi-party environments without the raw data ever leaving an encrypted state; early pilots show only 8% overhead for FP8 matrix multiplication compared with unencrypted baselines. The technology also supports federated learning, in which hospitals or financial institutions jointly improve a model without sharing patient or transaction data, a direct response to rising regulatory scrutiny under the EU AI Act and Executive Order 14110.
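Federated learning is commonly implemented with federated averaging (FedAvg): each participant trains locally and shares only parameter updates, which a coordinator averages, weighted by each participant's local sample count. The sketch below shows that aggregation step with plain lists. The hospital names and numbers are invented, and it omits the encryption and secure-aggregation layers that Confidential AI would wrap around it.

```python
from typing import Dict, List, Tuple


def fed_avg(updates: Dict[str, Tuple[List[float], int]]) -> List[float]:
    """Weighted average of client parameter vectors; weights are local sample counts."""
    total = sum(n for _, n in updates.values())
    dim = len(next(iter(updates.values()))[0])
    avg = [0.0] * dim
    for params, n in updates.values():
        for i, p in enumerate(params):
            avg[i] += p * n / total
    return avg


# Each site sends (locally trained parameters, number of local examples);
# the raw patient records never leave the site.
global_params = fed_avg({
    "hospital_a": ([1.0, 2.0], 300),
    "hospital_b": ([3.0, 0.0], 100),
})
print(global_params)  # [1.5, 1.5]
```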

Vertex AI Updates: Model Garden Expands with Optimized Open-Source Integrations

Vertex AI’s Model Garden now includes native, optimized integrations for Llama 3 70B, Mistral Large, and Falcon 180B, each compiled with XLA and tuned for Trillium TPUs or NVIDIA T4 GPUs, reducing cold-start latency by 65% compared with community-driven Hugging Face Inference Endpoints. Notably, Google has contributed performance patches back to upstream projects (for example, a kernel optimization for Llama 3 attention in PyTorch) that improve throughput on non-Google hardware, signaling a rare commitment to open-source reciprocity in the cloud AI wars.

This contrasts with AWS Bedrock’s approach of offering managed models without contributing to their optimization, and Azure AI Studio’s reliance on NVIDIA-optimized containers that limit portability. By improving the baseline performance of open models across architectures, Google aims to position Vertex AI as the most efficient runtime for open-weight LLMs—potentially undercutting the value proposition of proprietary APIs while strengthening goodwill in the developer community.

Google Distributed Cloud: AI Edge Nodes with TPU v5e Lite

Extending its hybrid cloud strategy, Google announced Distributed Cloud AI Edge nodes equipped with a reduced-power TPU v5e Lite variant, enabling low-latency inference for computer vision and speech tasks in retail, manufacturing, and healthcare settings. These nodes run a stripped-down version of Anthos with pre-loaded Gemini Nano models and support for offline agent execution, syncing policy updates and model versions when connectivity resumes. Unlike AWS Snowball Edge or Azure Stack HCI, which focus on general-purpose compute, Google’s edge nodes are purpose-built for AI inference, with thermal designs sustaining 40 TOPS at 15W—competitive with NVIDIA’s Jetson Orin Nano but with superior matrix multiplication throughput due to TPU-specific instruction sets.
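Offline execution with later reconciliation is a store-and-forward pattern. The sketch below is a minimal version under assumed semantics: the node keeps acting on its last-known policy, queues events while disconnected, and on reconnect flushes the queue and adopts any newer policy the cloud returns. `EdgeNode`, the `send` callback, and the integer versioning scheme are hypothetical; the actual sync protocol is not described in the announcement.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Tuple

# send(events) -> (latest_policy_version, policy), or None if the uplink is down.
Uplink = Callable[[List[str]], Optional[Tuple[int, str]]]


class EdgeNode:
    def __init__(self, policy_version: int, policy: str) -> None:
        self.policy_version = policy_version
        self.policy = policy
        self.outbox: Deque[str] = deque()    # events recorded while offline

    def handle(self, event: str) -> str:
        """Act locally using the cached policy; never blocks on connectivity."""
        self.outbox.append(event)
        return f"{event} handled under policy v{self.policy_version}"

    def sync(self, send: Uplink) -> bool:
        """Flush queued events; adopt the cloud's policy only if it is newer."""
        reply = send(list(self.outbox))
        if reply is None:
            return False                     # still offline; keep the queue intact
        self.outbox.clear()
        version, policy = reply
        if version > self.policy_version:
            self.policy_version, self.policy = version, policy
        return True


node = EdgeNode(policy_version=3, policy="blur-faces")
print(node.handle("camera-7: shelf empty"))      # ... handled under policy v3
node.sync(lambda events: None)                   # link down: nothing is lost
node.sync(lambda events: (4, "blur-faces+plates"))
print(node.policy_version, len(node.outbox))     # 4 0
```

Refusing to adopt an older version protects against a stale replica overwriting a policy the node already received.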

This move intensifies the edge AI arms race, particularly as enterprises seek to comply with data localization laws in India, Indonesia, and Brazil. By offering a unified management plane from cloud to edge via Google Cloud Console, Google reduces operational complexity for enterprises wary of stitching together disparate edge vendors, a strategic advantage in markets where IT teams lack specialized AIOps expertise.

The Takeaway: Google Cloud’s AI-First Pivot Redefines Enterprise Cloud Competition

Collectively, these seven highlights reveal a coherent strategy: Google Cloud is no longer competing on generic compute or storage but is betting that AI-native infrastructure, defined by tightly integrated hardware, software, and agent orchestration, will become the dominant enterprise workload by 2027. The emphasis on open standards (OpenAgent JSON, confidential computing SDKs), contributions to open-source model optimization, and avoidance of pure-play proprietary lock-in suggests a nuanced approach that seeks to win developer trust while building moats around performance and integration depth.

For enterprises, the implications are clear: adopting Google’s AI stack now means gaining access to industry-leading inference efficiency and agent orchestration—but also accepting a degree of architectural dependency that may complicate future multi-cloud strategies. As the AI infrastructure wars shift from raw model performance to end-to-end system efficiency, Google Cloud Next ’26 has positioned the company not just as a participant, but as a potential architect of the next era.