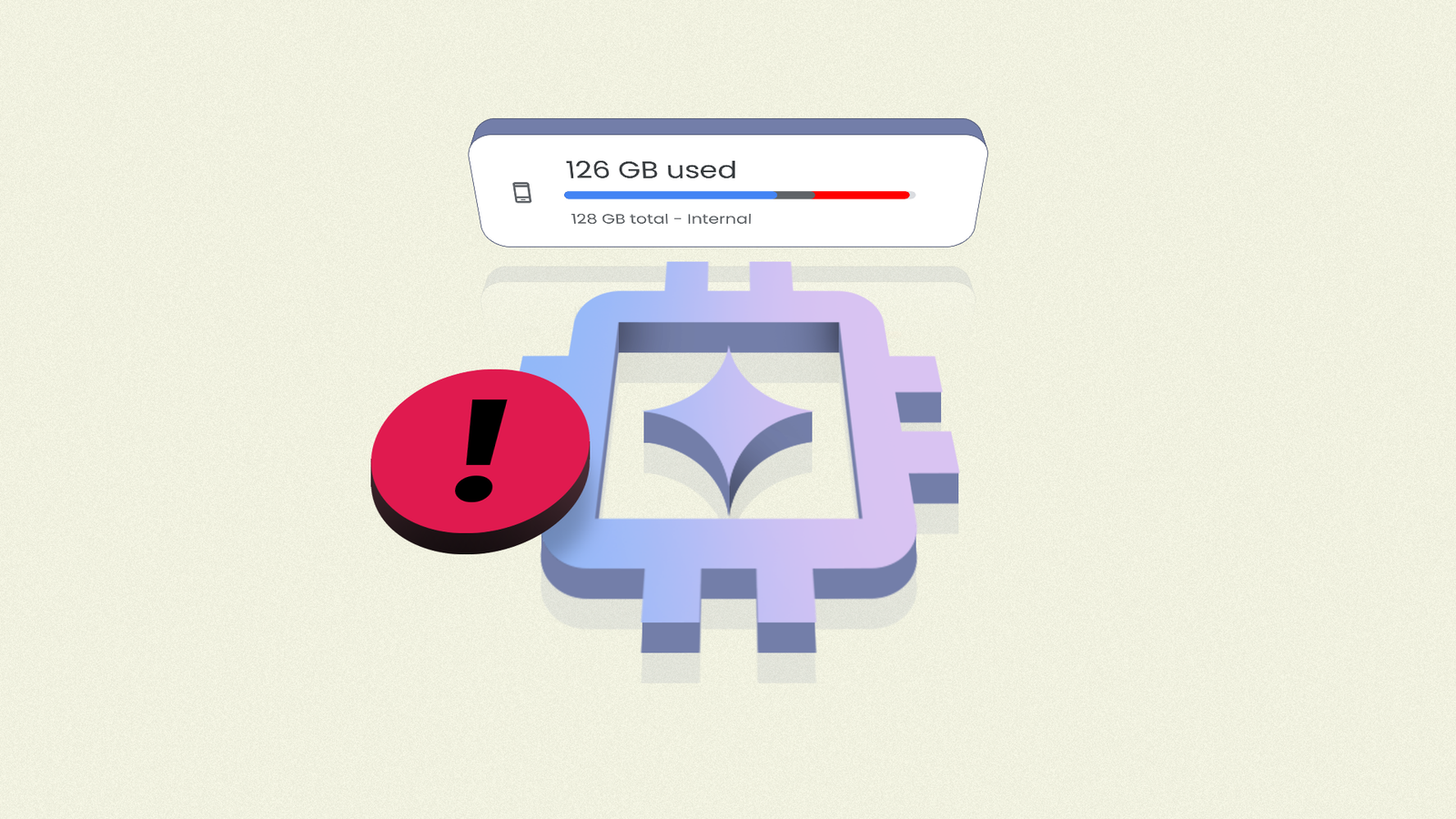

Google’s AICore, the system-level service powering on-device generative AI features, is rapidly consuming massive swaths of local storage on 128GB Android devices. As of mid-May 2026, the cumulative footprint of cached model weights and vector databases is effectively rendering base-model handsets obsolete for power users, forcing a hard reckoning with the limitations of current mobile storage architectures.

The honeymoon phase of “on-device intelligence” is over. We are currently witnessing a collision between the aggressive parameter scaling of Large Language Models (LLMs) and the stagnant hardware configurations of entry-level and mid-tier smartphones. For the user, this manifests as a “storage death spiral”: the OS requires free space to operate, yet the background AICore processes are ballooning to accommodate higher-precision quantized models.

The Quantization Trap and the 128GB Ceiling

To understand why your storage is vanishing, one must look at how AICore handles model deployment. Google is pushing for 4-bit and 8-bit integer quantization to run these models on the NPU (Neural Processing Unit), but even at these compressed levels, the sheer volume of weights required for multimodal tasks—vision, voice, and text processing—is non-trivial. When you factor in the “swap space” required for the AICore persistent cache, which stores frequently accessed vector embeddings to reduce latency, the 128GB floor is simply insufficient.
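To put rough numbers on that, here is a back-of-the-envelope sketch of how weight size scales with bit width. The parameter counts are hypothetical and are not figures for any model AICore actually ships; the calculation also ignores quantization scales, tokenizer files, and other metadata.

```python
def weight_footprint_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate size of raw model weights in GiB.

    Ignores quantization scales/zero-points and file metadata,
    so real files will be somewhat larger.
    """
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / (1024 ** 3)


# Hypothetical parameter counts, chosen only for illustration.
for label, params in [("2B", 2e9), ("4B", 4e9), ("8B", 8e9)]:
    int4 = weight_footprint_gib(params, 4)
    int8 = weight_footprint_gib(params, 8)
    print(f"{label} params: int4 ~ {int4:.2f} GiB, int8 ~ {int8:.2f} GiB")
```

The takeaway is that moving from 4-bit to 8-bit doubles the weight footprint, and each additional modality-specific model adds its own multi-gigabyte file on top.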

It isn’t just about the model size; it’s about the fragmentation of the flash memory. As AICore writes and rewrites these massive weight files, the UFS (Universal Flash Storage) controller struggles with wear leveling and garbage collection, further degrading system responsiveness.

“The industry spent a decade optimizing for app size, assuming a steady state where a 50MB APK was the gold standard. We are now seeing system-level AI services that treat local storage as a dumping ground for multi-gigabyte blobs. If you are running 128GB in 2026, you are essentially renting your phone from the OS.” — Dr. Aris Thorne, Lead Systems Architect at a major mobile silicon firm.

Structural Entropy: Why Optimization Isn’t Keeping Pace

The technical reality is that while Google has made strides in Gemma-based model efficiency, the demand for larger context windows (the amount of information an AI can “remember” at once) requires larger KV (Key-Value) caches. These caches are stored in local flash, and on a device with limited storage, there is no headroom for the system to manage these files effectively.
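The relationship between context length and KV cache size can be sketched with the standard transformer arithmetic: one key and one value vector per layer, per KV head, per token. The layer count, head count, and head dimension below are illustrative assumptions, not the configuration of any specific Gemma or AICore model.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Approximate KV cache size for a single sequence.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes 16-bit cache entries.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_value


# Hypothetical model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 26, 4, 256

for tokens in (8_192, 32_768, 131_072):
    size_mib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / (1024 ** 2)
    print(f"{tokens:>7} tokens: ~{size_mib:,.0f} MiB")
```

Because the size is linear in `context_tokens`, quadrupling the context window quadruples the cache, regardless of how efficiently the weights themselves are quantized.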

We are seeing a divergence in the Android ecosystem:

- The Premium Tier (512GB+): These devices utilize UFS 4.0 or higher, allowing for faster read/write cycles that mitigate the impact of AICore background tasks.

- The Base Tier (128GB): These devices are often stuck on UFS 3.1 or even eMMC 5.1 in ultra-budget models, creating a bottleneck where background AI indexing locks the storage controller, leading to the “jank” users report during heavy system usage.

The Security and Privacy Paradox

There is a dangerous irony here. By keeping these models on-device, Google claims to prioritize privacy—keeping your data away from the cloud. However, the storage pressure creates a new attack vector. When storage reaches critical capacity, the OS often initiates aggressive background cleanup processes. If these processes are not hardened, they can lead to race conditions where private model weights are prematurely purged or, worse, improperly cached in insecure temporary directories.

Meanwhile, as developers begin to integrate AICore APIs into third-party apps, we are looking at a “tragedy of the commons” scenario. Every app developer wants their own slice of the local AI pie, and there is currently no centralized, user-facing “AI Storage Manager” to rein in the total footprint.
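To make the idea concrete, here is a minimal sketch of what the core logic of such a manager might look like: a shared byte budget, with least-recently-used models evicted first when the total exceeds it. Nothing here reflects an existing Android or AICore API; `ModelEntry`, `plan_evictions`, and the eviction policy are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModelEntry:
    owner: str        # app that requested the model (hypothetical field)
    size_bytes: int   # on-disk footprint of the model
    last_used: float  # last access time, in epoch seconds


def plan_evictions(entries: List[ModelEntry], budget_bytes: int) -> List[ModelEntry]:
    """Return the models to evict, least recently used first,
    until the combined footprint fits within budget_bytes."""
    total = sum(e.size_bytes for e in entries)
    to_evict = []
    for entry in sorted(entries, key=lambda e: e.last_used):
        if total <= budget_bytes:
            break
        to_evict.append(entry)
        total -= entry.size_bytes
    return to_evict


GIB = 1024 ** 3
installed = [
    ModelEntry("keyboard", 3 * GIB, last_used=1.0),
    ModelEntry("camera", 2 * GIB, last_used=2.0),
    ModelEntry("notes", 1 * GIB, last_used=3.0),
]
for entry in plan_evictions(installed, budget_bytes=4 * GIB):
    print(f"evict {entry.owner} ({entry.size_bytes / GIB:.0f} GiB)")
```

A real implementation would also need a user-facing way to pin models and set the budget, which is exactly the control the platform currently lacks.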

The 30-Second Verdict

If you are currently shopping for an Android device, 128GB is no longer a “budget” choice; it is a liability. The integration of AICore is systemic, not optional. Unless Google introduces a radical “cloud-bursting” feature that allows AICore to offload non-essential weights to the cloud when local storage hits a 90% threshold, the 128GB tier will be effectively unusable for power users by the end of the year.
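The trigger for such a feature is simple to express. The sketch below checks whether a volume has crossed the 90% mark using Python's standard `shutil.disk_usage`; the function names and the idea of calling it on the current directory are assumptions for illustration, and an Android implementation would use platform storage APIs instead.

```python
import shutil

OFFLOAD_THRESHOLD = 0.90  # the 90% figure suggested above


def exceeds_threshold(used_bytes: int, total_bytes: int,
                      threshold: float = OFFLOAD_THRESHOLD) -> bool:
    """True when the used fraction of the volume is at or above the threshold."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    return used_bytes / total_bytes >= threshold


def should_offload(path: str = ".") -> bool:
    """Check the volume containing `path` against the offload threshold."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free
    return exceeds_threshold(usage.used, usage.total)


if __name__ == "__main__":
    print("offload non-essential weights:", should_offload())
```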

| Hardware Constraint | Impact on AICore Performance |
|---|---|
| 128GB Storage | High: Frequent cache thrashing, system-level slowdowns. |
| 8GB RAM | Medium: Aggressive background process killing. |
| UFS 3.1 Interface | High: Latency in model loading during context switching. |

The “AI-first” phone is here, but it demands more than just a snappy NPU. It demands a storage architecture that respects the user’s finite capacity. Right now, the software is writing checks that the hardware simply cannot cash.

When the system starts deleting your photos to make room for a “smarter” keyboard, we have crossed the line from utility to obstruction. It is time for Google to provide granular control over what AI models live on our devices and how much space they are permitted to occupy. Until then, treat that 128GB sticker as a warning, not a specification.