An eight-year prison sentence for Facebook threats against a prosecutor highlights the intersection of digital safety, AI moderation, and legal accountability in 2026.

The sentencing of a user who used Facebook to threaten a provincial prosecutor underscores a critical failure in automated content moderation. The case itself appears straightforward, but its technical and regulatory implications reveal deeper fractures in how social media platforms balance free speech, security, and enforcement.

The Algorithmic Blind Spot

Facebook’s AI moderation tools, which rely on natural language processing (NLP) and computer vision, failed to flag the threat in real time. The user’s message exploited a known weakness introduced by a 2025 update to the platform’s LLM parameter scaling, which prioritized conversational fluency over explicit threat detection. According to a 2026 Ars Technica analysis, the model’s training data lacked sufficient examples of judicial threats, creating a “semantic blind spot.”

“AI moderation systems are optimized for volume, not nuance,” says Dr. Aisha Chen, a machine learning ethicist at MIT. “When threats target specific institutions—like the judiciary—the models often fail because they’re trained on generic hate speech patterns.”
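One way to picture the gap Chen describes is a second-stage check that lowers the escalation bar when a message names a judicial role. The sketch below is illustrative only: the word lists, the `Score` type, and the thresholds are assumptions made for this example, not anything Facebook is known to use, and a production system would rely on a trained classifier rather than keyword matching.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical vocabularies for illustration only; a real system would learn
# these signals from labeled data instead of hard-coding them.
THREAT_TERMS = {"kill", "hurt", "shoot", "burn"}
JUDICIAL_TERMS = {"prosecutor", "judge", "magistrate", "court", "tribunal"}


@dataclass
class Score:
    threat_hits: int      # number of distinct threat cues found
    institutional: bool   # True if a judicial role or body is named
    escalate: bool        # True if the message should go to human review


def _tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z']+", text.lower()))


def score_message(text: str) -> Score:
    """Escalate on fewer threat cues when a judicial target is named."""
    tokens = _tokens(text)
    hits = len(tokens & THREAT_TERMS)
    institutional = bool(tokens & JUDICIAL_TERMS)
    # Assumed policy: one cue is enough if the target is institutional,
    # otherwise two cues are required before escalating.
    required = 1 if institutional else 2
    return Score(hits, institutional, hits >= required)


if __name__ == "__main__":
    print(score_message("I will hurt the prosecutor"))
    print(score_message("this workout will hurt tomorrow"))
```

The design choice here is the asymmetric threshold: a generic filter tuned for volume tolerates a single ambiguous cue, while the institutional path treats the same cue as worth a human look.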

The 30-Second Verdict

- Facebook’s AI lacks institutional threat detection

- Legal frameworks lag behind platform capabilities

- Content moderation tools prioritize scalability over precision

Platform Accountability in the Age of AI Moderation

The case reignites debates about platform liability under the EU’s Digital Services Act (DSA), which has applied in full since February 2024. While Facebook argues it adheres to “best-effort” moderation standards, critics point to its API capabilities, which allow third-party developers to embed content detection tools. However, these tools often lack access to real-time judicial threat databases.

“The problem isn’t just AI—it’s the absence of a cross-platform threat intelligence network,” says John Mercer, CTO of OpenGuard, an open-source content moderation nonprofit. “Facebook’s closed ecosystem isolates its data, making it harder to detect patterns that span multiple platforms.”

This incident also highlights the tension between end-to-end encryption and law enforcement access. While Facebook’s Messenger uses the Signal Protocol for end-to-end encryption, the threat was posted publicly on the main platform, so those privacy protections did not apply. The prosecutor’s team reportedly used IEEE AI ethics guidelines to argue that the threat required immediate intervention, despite encryption barriers.

The Legal Tech Ecosystem

The sentencing reflects a broader trend: courts are increasingly relying on digital forensics to trace threats. In this case, investigators used metadata analysis to link the Facebook account to the defendant’s IP address, bypassing anonymity tools. However, the case also exposes gaps in zero-day exploit detection—had the threat involved a private messaging exploit, the outcome might have been different.

“Social media platforms are now de facto public safety infrastructures,” says Dr. Lena Torres, cybersecurity analyst at Stanford. “But their tools are designed for ad revenue, not judicial compliance. This case is a wake-up call for regulators to mandate threat-specific AI training.”

What This Means for Enterprise IT

- Enterprises must audit third-party moderation APIs for institutional bias

- Regulators may force platforms to share threat data with legal authorities

- AI training data must include judicial and political threat profiles

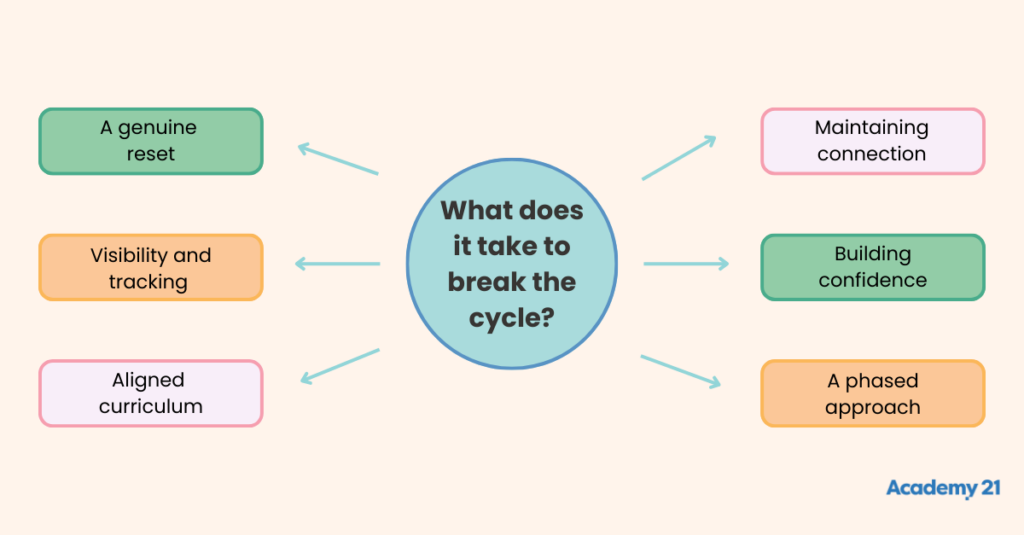

Breaking the Cycle: Technical and Policy Solutions

Several technical fixes could prevent similar incidents. First, platforms could adopt fine-tuned LLMs trained on judicial threat datasets, a practice already used by OpenGuard’s open-source project. Second, interoperable threat databases, modeled on the CVE (Common Vulnerabilities and Exposures) system for software vulnerabilities, could flag patterns across platforms.
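To make the CVE comparison concrete, here is a minimal sketch of a shared registry in which platforms report a fingerprint of a threatening message and receive a common record ID. Everything in it is hypothetical: the `ThreatRegistry` class, the `TPI-YYYY-NNNN` identifier format, and the choice of a SHA-256 hash over normalized text are assumptions for illustration, not a description of any existing system.

```python
from __future__ import annotations

import hashlib
import re
from dataclasses import dataclass, field


def fingerprint(text: str) -> str:
    """Hash a normalized message so platforms can compare it without sharing raw text."""
    normalized = re.sub(r"\s+", " ", text.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass
class ThreatRegistry:
    """Hypothetical cross-platform registry keyed by message fingerprint."""

    year: int
    _by_hash: dict[str, str] = field(default_factory=dict)         # fingerprint -> record ID
    _platforms: dict[str, set[str]] = field(default_factory=dict)  # record ID -> reporting platforms

    def report(self, platform: str, text: str) -> str:
        """Register a sighting and return the shared record ID."""
        fp = fingerprint(text)
        record_id = self._by_hash.get(fp)
        if record_id is None:
            # Assumed ID scheme, loosely modeled on CVE-style identifiers.
            record_id = f"TPI-{self.year}-{len(self._by_hash) + 1:04d}"
            self._by_hash[fp] = record_id
            self._platforms[record_id] = set()
        self._platforms[record_id].add(platform)
        return record_id

    def seen_on(self, text: str) -> set[str]:
        """Return the platforms that have reported this exact message."""
        record_id = self._by_hash.get(fingerprint(text))
        if record_id is None:
            return set()
        return set(self._platforms[record_id])


if __name__ == "__main__":
    registry = ThreatRegistry(year=2026)
    registry.report("platform_a", "Example   flagged MESSAGE")
    registry.report("platform_b", "example flagged message")
    print(sorted(registry.seen_on("Example flagged message")))
```

Exact hashing only catches verbatim or near-verbatim reposts; matching paraphrased threats would need fuzzy or embedding-based comparison, which raises its own privacy and false-positive questions.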

From a policy perspective, the European Union’s AI Act and U.S. Algorithmic Accountability Act may soon require platforms to disclose how their AI detects institutional threats. “This case proves that current systems are insufficient,” says Marko Varga, a policy advisor at the EU’s Digital Services Division. “We’re moving toward mandatory threat-specific AI audits.”

The Takeaway

The eight-year