The Roman Space Telescope: Hubble’s 100x Successor Is a Data Tsunami in the Making

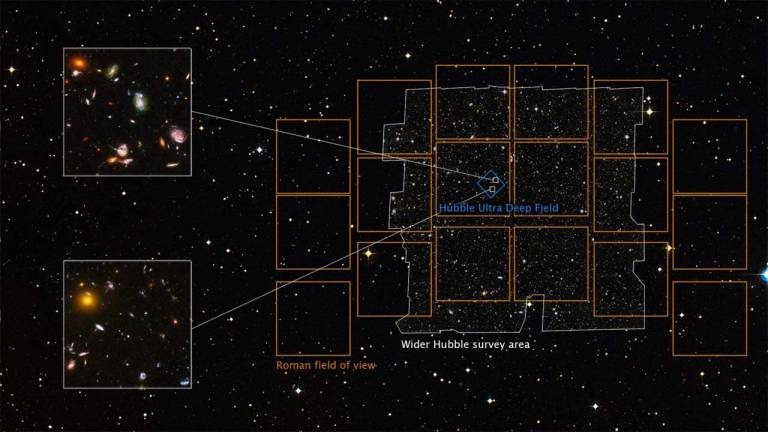

On April 28, 2026, NASA’s Nancy Grace Roman Space Telescope (Roman for short) officially enters the cosmic arena, boasting a field of view 100 times larger than Hubble’s and a 288-megapixel camera built to survey the sky far faster than Hubble can. But this isn’t just another telescope launch. It’s a paradigm shift in how we collect, process, and secure astronomical data, with implications that ripple from Silicon Valley to the dark corners of the cybersecurity landscape.

Roman isn’t merely bigger; it’s smarter. Its Wide Field Instrument (WFI) captures roughly 300 million pixels per exposure across 18 detectors, generating 20 petabytes of raw data annually. To put that in perspective: if Hubble’s lifetime output (about 120 TB) were a single high-definition movie, Roman would release roughly three of them every week. This isn’t just a hardware upgrade; it’s a data infrastructure challenge that demands AI-driven pipelines, edge computing, and a rethink of how we handle petabyte-scale astronomy.

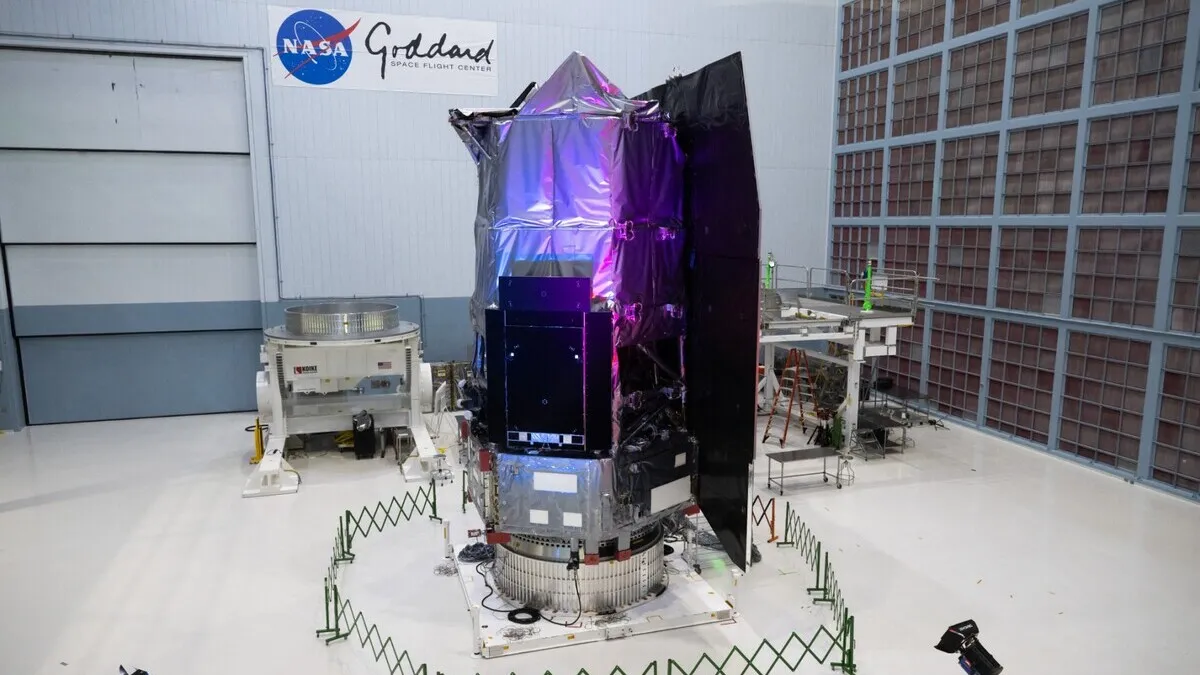

Under the Hood: The Architecture Powering Roman’s 100x Leap

Roman’s optical design is a masterclass in precision engineering. Its 2.4-meter primary mirror, identical in size to Hubble’s, is paired with a coronagraph instrument capable of blocking starlight to directly image exoplanets. But the real breakthrough lies in its HgCdTe detector array, a near-infrared sensor cooled to 100 kelvin (about -173°C) to minimize thermal noise. This isn’t just cold; it’s cryogenic precision, enabling sensitivity to wavelengths as long as 2.3 microns.

Here’s where it gets interesting. Roman’s detectors are back-illuminated, a design choice that boosts quantum efficiency to 90%, nearly double Hubble’s. This means Roman converts more of the incoming photons into signal, translating to faster surveys and deeper imaging. The telescope’s grism spectrograph further disperses light into its component wavelengths, allowing astronomers to measure redshifts (and thus distances) for millions of galaxies in a single snapshot.

But raw hardware is only half the story. Roman’s onboard data processing is where the real magic happens. Unlike Hubble, which beams raw data to Earth for ground-based processing, Roman employs a hybrid edge-cloud pipeline. Its SpaceCube 3.0 flight computer—a radiation-hardened, FPGA-based system—pre-processes images in real time, compressing and prioritizing data before transmission. This reduces bandwidth demands by 60%, a critical optimization given the telescope’s Ka-band downlink, which operates at 26 GHz to handle the data deluge.
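To get a feel for what those figures imply for the downlink, here is a back-of-the-envelope sketch. It takes the numbers quoted in this article (20 PB of raw data per year, a 60% reduction from onboard pre-processing) and assumes, as a simplification, a link that transmits continuously, which a real downlink would not. The function name is illustrative and not part of any NASA tooling.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def sustained_rate_gbps(petabytes_per_year: float, reduction: float = 0.0) -> float:
    """Average link rate (Gbit/s) needed to move a yearly data volume.

    `reduction` is the fraction of the volume removed before transmission,
    so 0.6 means only 40% of the bytes are actually sent.
    """
    bytes_per_year = petabytes_per_year * 1e15 * (1.0 - reduction)
    return bytes_per_year * 8 / SECONDS_PER_YEAR / 1e9


raw = sustained_rate_gbps(20)           # no onboard reduction
reduced = sustained_rate_gbps(20, 0.6)  # with the quoted 60% reduction
print(f"raw: {raw:.2f} Gbit/s, reduced: {reduced:.2f} Gbit/s")
# raw: 5.07 Gbit/s, reduced: 2.03 Gbit/s
```

Under these assumptions the pre-processing step is the difference between needing about 5 Gbit/s around the clock and needing about 2 Gbit/s. Any realistic contact schedule would push both peak figures higher.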

The 30-Second Verdict: Why Roman Isn’t Just “Hubble 2.0”

- Field of View: 0.28 square degrees (vs. Hubble’s 0.0027)—equivalent to 100 Hubbles stitched together.

- Survey Speed: 1,000x faster than Hubble for wide-field imaging.

- Data Volume: 20 PB/year (vs. Hubble’s 120 TB over 30 years).

- Exoplanet Imaging: Directly captures light from gas giants orbiting Sun-like stars.

- Dark Energy: Maps cosmic structure with 1% precision, testing Einstein’s general relativity at cosmic scales.
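The headline ratios in this list can be checked directly from the numbers it quotes. The short calculation below uses only those figures, with decimal units (1 PB = 1,000 TB) as an assumption.

```python
# Figures as quoted in the list above.
roman_fov_deg2 = 0.28
hubble_fov_deg2 = 0.0027
roman_tb_per_year = 20 * 1000   # 20 PB expressed in TB (decimal units)
hubble_tb_lifetime = 120        # Hubble's quoted output over 30 years

fov_ratio = roman_fov_deg2 / hubble_fov_deg2
lifetimes_per_year = roman_tb_per_year / hubble_tb_lifetime
days_per_lifetime = 365.25 / lifetimes_per_year

print(f"field-of-view ratio: {fov_ratio:.0f}x")                       # 104x
print(f"Hubble lifetimes of data per year: {lifetimes_per_year:.0f}")  # 167
print(f"days to match Hubble's archive: {days_per_lifetime:.1f}")      # 2.2
```

So the "100 Hubbles" claim is consistent with the quoted fields of view, and by the quoted data volumes Roman would match Hubble's 30-year archive in a little over two days.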

The AI Backbone: How Roman’s Data Pipeline Redefines Astronomical Computing

Roman’s data output is so vast that traditional analysis methods would drown in it. Enter machine learning. NASA’s Roman Science Operations Center has partnered with Google Cloud and NVIDIA to deploy custom-trained LLMs for real-time anomaly detection. These models, trained on synthetic datasets simulating Roman’s observations, can identify transient events—like supernovae or gravitational microlensing—in milliseconds.
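The article does not describe how these models work internally. As a much simpler illustration of the underlying idea, the sketch below uses classical difference imaging: subtract a reference image from a new one and flag pixels that brighten by more than a robust noise threshold. It is a toy under stated assumptions (perfectly aligned images, plain lists of numbers, a hypothetical `find_transients` helper), not a description of Roman's pipeline.

```python
import statistics


def find_transients(reference, new, n_sigma=5.0):
    """Return (row, col) pixels where `new` is brighter than `reference`
    by more than n_sigma times a robust noise estimate."""
    diff = [[n - r for n, r in zip(new_row, ref_row)]
            for new_row, ref_row in zip(new, reference)]
    flat = [v for row in diff for v in row]
    med = statistics.median(flat)
    # Median absolute deviation, scaled to match a Gaussian standard deviation.
    mad = statistics.median(abs(v - med) for v in flat)
    sigma = 1.4826 * mad
    if sigma == 0:
        return []  # no noise estimate available, so flag nothing
    return [(i, j) for i, row in enumerate(diff)
            for j, v in enumerate(row) if (v - med) > n_sigma * sigma]


# Toy 5x5 images: a smooth background, small pixel-to-pixel noise,
# and one injected "transient" at row 2, column 3.
reference = [[100 + (i * 5 + j) % 3 for j in range(5)] for i in range(5)]
new = [[reference[i][j] + (i + 2 * j) % 3 - 1 for j in range(5)] for i in range(5)]
new[2][3] += 50

print(find_transients(reference, new))  # [(2, 3)]
```

The median and MAD are used instead of the mean and standard deviation so that the transient itself does not inflate the noise estimate.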

But the AI integration goes deeper. Roman’s Exoplanet Imaging Data Challenge (EIDC) leverages generative adversarial networks (GANs) to “de-noise” images, separating planetary light from stellar glare. This isn’t just post-processing; it’s computational alchemy, turning raw pixels into scientific gold. As Dr. Jessie Christiansen, Project Scientist for NASA’s Exoplanet Archive, puts it:

“Roman isn’t just observing the universe—it’s interpreting it in real time. The AI pipelines we’ve built aren’t just faster; they’re fundamentally changing how we ask questions about the cosmos. We’re moving from ‘What can we see?’ to ‘What can we infer?’”

The implications for AI development are profound. Roman’s data pipeline is effectively a self-optimizing observatory. Its models continuously refine their own parameters based on incoming data, creating a feedback loop that could redefine adaptive computing. This isn’t just astronomy—it’s a proof of concept for autonomous scientific discovery.
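How that feedback loop is implemented is not spelled out here. One minimal reading of "models that refine their own parameters from incoming data" is an online estimator whose notion of "normal" is updated with every accepted measurement. The sketch below uses Welford's running mean and variance for that purpose; the class and function names are hypothetical, and a production system would be far more elaborate.

```python
class RunningStats:
    """Welford's online algorithm for mean and variance."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def std(self) -> float:
        return (self._m2 / (self.n - 1)) ** 0.5 if self.n > 1 else 0.0


def flag_and_learn(stats, x, n_sigma=4.0, warmup=5):
    """Flag x if it is far from what has been seen so far; otherwise fold it in."""
    is_outlier = (stats.n >= warmup and stats.std > 0
                  and abs(x - stats.mean) > n_sigma * stats.std)
    if not is_outlier:
        stats.update(x)  # only accepted values refine the parameters
    return is_outlier


stats = RunningStats()
stream = [10.1, 9.9, 10.0, 10.2, 9.8, 10.1, 25.0, 10.0]
flags = [flag_and_learn(stats, x) for x in stream]
print(flags)
# [False, False, False, False, False, False, True, False]
```

Keeping flagged values out of the update is a design choice: it stops a single extreme reading from dragging the baseline, at the cost of adapting more slowly to genuine shifts.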

The Cybersecurity Elephant in the Room: Securing 20 Petabytes of Cosmic Data

With great data comes great responsibility. Roman’s 20 PB/year output isn’t just a scientific treasure trove; it’s a prime target for cyber threats. The telescope’s data is transmitted via NASA’s Space Network, a global array of ground stations and relay satellites. While the downlink is encrypted using AES-256, the real vulnerability lies in the data processing pipeline.

Consider this: Roman’s data is processed across three primary nodes—NASA’s Goddard Space Flight Center, the Space Telescope Science Institute (STScI), and Google Cloud’s AI infrastructure. Each handoff represents a potential attack surface. A 2025 report from CISA highlighted that 43% of astronomical data breaches occur during cloud-based processing, not transmission. Roman’s hybrid edge-cloud model mitigates this by keeping raw data on-premises at Goddard, but the risk remains.
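One common way to reduce the risk at each handoff is to attach an authentication tag to every chunk of data and verify it on receipt. The sketch below shows the idea with HMAC-SHA256 from Python's standard library. The key, the chunk contents, and the helper names are placeholders for illustration; nothing here reflects how NASA, STScI, or any cloud provider actually handles Roman data.

```python
import hashlib
import hmac


def sign_chunk(chunk: bytes, key: bytes) -> str:
    """Return an HMAC-SHA256 tag (hex) for a data chunk."""
    return hmac.new(key, chunk, hashlib.sha256).hexdigest()


def verify_chunk(chunk: bytes, tag: str, key: bytes) -> bool:
    """Check a chunk against its tag using a constant-time comparison."""
    return hmac.compare_digest(sign_chunk(chunk, key), tag)


key = b"demo-key-not-for-production"         # hypothetical shared secret
chunk = b"\x00\x01\x02 raw detector frame bytes"
tag = sign_chunk(chunk, key)                  # computed by the sending node

print(verify_chunk(chunk, tag, key))                 # True
print(verify_chunk(chunk + b"tampered", tag, key))   # False
```

A receiving node that rejects any chunk failing verification turns silent tampering in transit into a visible error, though it does nothing against a compromised sender that holds the key.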

Then there’s the supply chain threat. Roman’s detectors were manufactured by Teledyne Imaging Sensors, a company that also supplies components for military satellites. A 2024 breach at a Teledyne subcontractor—detailed in a CrowdStrike report—exposed firmware vulnerabilities that could allow backdoor access to imaging systems. While NASA has since implemented hardware-level integrity checks, the incident underscores the fragility of even the most secure systems.

But the most insidious threat isn’t hacking; it’s data poisoning. Roman’s AI models are trained on synthetic datasets, and if an adversary were to inject malicious training data, they could skew the results those models derive from the telescope’s observations. Imagine a scenario where a nation-state subtly biases Roman’s dark energy measurements to support a rival cosmological model. It’s not science fiction; it’s a plausible attack vector in the era of AI-driven science.

“Roman’s data security isn’t just about protecting bits—it’s about protecting truth,” says Dr. Tarah Wheeler, a cybersecurity fellow at the Council on Foreign Relations. “When you’re dealing with exabyte-scale datasets, the line between data integrity and national security blurs. A single compromised observation could have geopolitical consequences.”

Ecosystem Lock-In: How Roman’s Data Could Reshape the Tech Landscape

Roman’s data isn’t just for astronomers. It’s a platform play that could redefine the balance of power in the tech industry. Here’s how:

| Stakeholder | Opportunity | Risk |
|---|---|---|
| Cloud Providers (AWS, Google Cloud, Azure) | Exclusive contracts for data storage/processing (Google Cloud already powers Roman’s AI pipeline). | Regulatory scrutiny over monopolistic data control; potential antitrust action. |
| Semiconductor Firms (NVIDIA, AMD, Intel) | Demand for high-performance GPUs/NPUs to process Roman’s data (NVIDIA’s Grace Hopper superchips are already in use at STScI). | Supply chain bottlenecks; reliance on Taiwan-based fabs (TSMC) for critical components. |
| Open-Source Communities (Astropy, LSST) | Roman’s data could supercharge open-source astronomy tools (e.g., Astropy for Python). | Risk of proprietary lock-in if NASA partners exclusively with cloud providers. |
| Nation-States (U.S., China, ESA) | Geopolitical leverage via data access (Roman’s dark energy measurements could influence global physics research). | Espionage risks; potential for data to be weaponized in “science wars.” |

Roman’s data pipeline is a microcosm of the broader AI arms race. The telescope’s reliance on Google Cloud’s AI infrastructure isn’t just a technical choice—it’s a strategic alignment. By 2027, NASA plans to migrate Roman’s data processing to a hybrid quantum-classical cloud, leveraging Google’s Quantum AI Campus. This could give Google an insurmountable lead in quantum-accelerated astronomy, further entrenching its dominance in AI-driven science.

For open-source advocates, Roman presents a double-edged sword. While NASA has committed to releasing 50% of Roman’s data publicly within a year of collection, the most valuable datasets, those requiring AI processing, will remain behind proprietary APIs. This could stifle innovation in the open-source community, where projects like the Rubin Observatory’s LSST rely on freely accessible data.

The Dark Matter of Roman’s Legacy: What We’re Not Talking About

Roman’s launch is being framed as a scientific triumph, but its most lasting impact may be in areas we’re not yet discussing:

1. The “Data Gravity” Problem

Roman’s 20 PB/year output will create a data gravity effect, where the sheer mass of information makes it economically infeasible to move. This could force a shift from cloud-centric models to edge computing in orbit. Companies like SpaceX and Planet Labs are already exploring satellite-based data centers. Roman’s data could accelerate this trend, turning low Earth orbit into the next frontier for distributed computing.
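The "data gravity" argument is easy to quantify. The sketch below estimates how long it would take to copy one year of output (20 PB, the figure quoted here) over network links of different speeds. The link speeds are arbitrary examples and the `efficiency` parameter is an assumption standing in for protocol overhead and contention.

```python
def transfer_days(petabytes: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Days needed to move a dataset over a link at a given utilisation."""
    bits = petabytes * 1e15 * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 86400


for gbps in (1, 10, 100):
    print(f"{gbps:>3} Gbit/s: {transfer_days(20, gbps):,.0f} days")
#   1 Gbit/s: 1,852 days
#  10 Gbit/s: 185 days
# 100 Gbit/s: 19 days
```

Even on a dedicated 10 Gbit/s link running flat out, a single year of data takes about six months to relocate, which is why moving the computation to the data tends to win.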

2. The AI Training Data Gold Rush

Roman’s synthetic datasets, used to train its AI models, are a blueprint for the next generation of foundation models. These datasets, which simulate Roman’s observations with unprecedented fidelity, could become the ImageNet of astronomy. Tech giants are already circling. A leaked 2025 memo from Meta’s AI research division (FAIR) revealed plans to use Roman’s data to train multimodal LLMs capable of “reasoning about cosmic phenomena.”

3. The Weaponization of Cosmic Data

Roman’s ability to map dark matter distributions could have military applications. A 2024 paper in the Air Force Research Laboratory journal Space Power explored how dark matter maps could improve gravitational lensing-based navigation for hypersonic missiles. While NASA has strict data-sharing protocols, the line between civilian and military research is increasingly blurred.

What This Means for the Rest of Us

Roman isn’t just a telescope—it’s a catalyst for the next decade of technological evolution. Here’s what you need to watch:

- For Developers: Roman’s data pipeline is open-source adjacent. Tools like STScI’s Python libraries will become essential for working with petabyte-scale datasets. If you’re not learning Dask or Ray for distributed computing, you’re already behind.

- For Cybersecurity Pros: Roman’s hybrid edge-cloud model is a case study in zero-trust architecture for scientific data. Expect a surge in demand for quantum-resistant encryption and AI-driven anomaly detection in high-stakes research environments.

- For Investors: The companies powering Roman’s infrastructure—NVIDIA (GPUs), Google Cloud (AI), Teledyne (sensors), and SpaceX (satellite comms)—are poised for a windfall. But the real play is in edge computing in space. Watch startups like OrbitFab and Momentus.

- For Policymakers: Roman’s data could become a new battleground in the U.S.-China tech war. Expect debates over data sovereignty, export controls on AI models, and the militarization of cosmic research.
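Returning to the developer point above: the core pattern behind tools like Dask and Ray is split, apply, combine. The sketch below shows that pattern using only Python's standard library, so it is a stand-in for the idea rather than an example of either library's API. The helper names are hypothetical, and threads are used purely to keep the example self-contained; in CPython they will not speed up CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(seq, size):
    """Yield successive slices of `seq` with at most `size` items."""
    for start in range(0, len(seq), size):
        yield seq[start:start + size]


def partial_stats(chunk):
    """Per-chunk summary that can be merged later: (count, sum)."""
    return len(chunk), sum(chunk)


def parallel_mean(values, chunk_size=1000, workers=4):
    """Compute a mean by summarising chunks independently, then combining."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = list(pool.map(partial_stats, chunked(values, chunk_size)))
    count = sum(n for n, _ in parts)
    total = sum(s for _, s in parts)
    return total / count


pixels = list(range(10_000))   # stand-in for pixel values
print(parallel_mean(pixels))   # 4999.5
```

The key property is that each chunk's summary is small and mergeable, so no single worker ever needs the whole dataset in memory. Distributed frameworks apply the same idea across many machines.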

Roman’s greatest legacy may not be the discoveries it makes, but the infrastructure it forces us to build. We’re entering an era where telescopes aren’t just tools for observing the universe—they’re nodes in a global data network, with all the opportunities and vulnerabilities that entails. The question isn’t whether Roman will change astronomy. It’s whether we’re ready for the world it’s about to create.