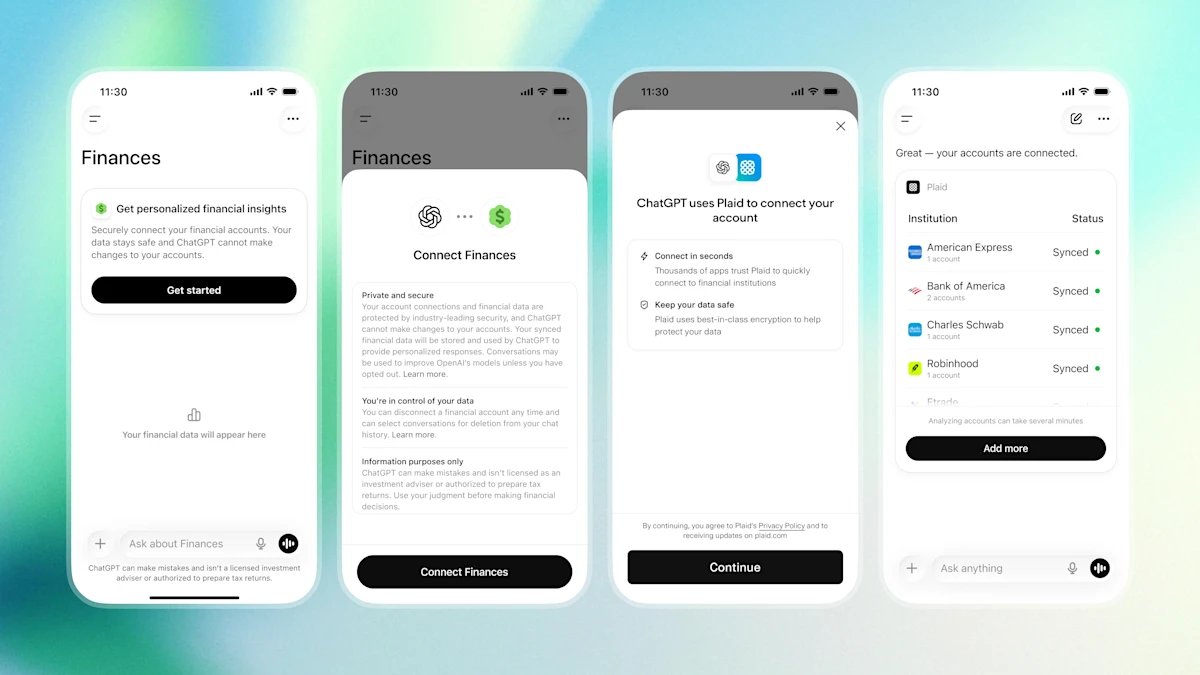

OpenAI’s ChatGPT rolls out a personal finance feature this week, but its data-sharing model raises red flags for privacy advocates and developers alike.

The Algorithmic Ledger: How GPT-4 Navigates Financial Data

ChatGPT’s new personal finance module uses GPT-4 to parse user financial data and offer budgeting advice and investment strategies. The feature encrypts data in transit end to end, but that headline claim masks a more complex architecture. According to internal documentation, the system employs a hybrid NPU (Neural Processing Unit) to handle real-time financial modeling, offloading compute-heavy tasks to cloud-based TPU v5 clusters. This design prioritizes low latency, with API response times under 200ms for basic queries, but introduces a critical dependency on OpenAI’s proprietary FinAI SDK.

“This isn’t just a feature—it’s a strategic move to lock users into a closed-loop ecosystem,” says Dr. Lena Torres, CTO of PrivacyFirst Labs. “By tying financial advice to their API, OpenAI creates a dependency that rivals like Google or Meta would struggle to replicate.”

The 30-Second Verdict

- Pros: Real-time financial insights, seamless integration with existing ChatGPT workflows.

- Cons: Data-sharing terms unclear, potential for algorithmic bias in investment recommendations.

- Risk: Users may inadvertently expose sensitive data to third-party analytics pipelines.

Privacy vs. Personalization: The Trade-Off

The feature’s “catch” lies in its data-handling policies. While OpenAI claims zero-knowledge encryption for user inputs, a Privacy Pass implementation suggests that anonymized transaction patterns are still aggregated for model training. This aligns with OpenAI’s broader LLM parameter scaling strategy, where data diversity drives performance. However, the lack of transparency around data retention policies has sparked concerns among regulators.

“OpenAI’s terms of service are a labyrinth,” says cybersecurity analyst Rajiv Mehta. “They explicitly reserve the right to use user data for ‘product improvement,’ which could include selling anonymized datasets to financial institutions. That’s a violation of GDPR’s data minimization principle.”
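For readers who want to limit what leaves their machine, data minimization can be approximated on the client side by stripping obvious identifiers from a prompt before it is sent anywhere. The sketch below is illustrative only: the two patterns and the `redact` helper are assumptions made for this example, not part of any OpenAI tooling, and simple regular expressions will miss many real-world formats.

```python
import re

# Hypothetical patterns for illustration; real account formats vary widely.
# IBAN-like: two letters, two check digits, then 11-30 alphanumeric characters.
IBAN_LIKE = re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b")
# Card or account numbers: 8-19 digits, optionally separated by spaces or hyphens.
CARD_OR_ACCOUNT = re.compile(r"\b\d(?:[ -]?\d){7,18}\b")


def redact(text: str) -> str:
    """Replace IBAN-like strings and long digit runs with placeholders."""
    # IBANs go first so their digits are not half-matched as account numbers.
    text = IBAN_LIKE.sub("[IBAN]", text)
    return CARD_OR_ACCOUNT.sub("[ACCOUNT]", text)


if __name__ == "__main__":
    print(redact("Card 4111 1111 1111 1111 spent 42 USD"))
    print(redact("Pay DE89370400440532013000 now"))
```

Short amounts such as `42` pass through untouched because the account pattern requires at least eight digits. A filter like this reduces accidental exposure but does not address what a provider does with the remaining text.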

The feature also highlights a growing tension between open-source alternatives and proprietary AI. While Hugging Face offers customizable financial models, they lack the computational muscle of GPT-4. This creates a paradox: users gain precision but sacrifice control.

Ecosystem Lock-In and the Battle for Developer Loyalty

OpenAI’s move mirrors platform lock-in tactics seen in the ARM–x86 chip wars, where ecosystem dominance dictates market outcomes. By embedding financial advice into ChatGPT, OpenAI aims to become the default interface for personal finance, much like Apple did with iOS. This strategy could marginalize third-party developers, as seen in the antitrust debates over app store policies.

A GitHub repository for the FinAI SDK reveals that API keys are tied to user accounts, further entrenching dependency. Developers opting for open-source alternatives like finBERT must contend with lower accuracy and slower inference times—trade-offs that may not justify the privacy benefits.

What This Means for Enterprise IT

- Compliance: Financial institutions must audit how ChatGPT’s data practices align with SEC Regulation S-P.

- Integration: APIs require OAuth 2.0 authentication, complicating legacy system compatibility.

- Cost: OpenAI’s API pricing tiers (0.0015–0.006 USD per token) could strain budgets for high-volume users.
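To see how quickly the cost concern adds up, the per-token range quoted above can be turned into a rough monthly estimate. This is a back-of-the-envelope sketch: only the two per-token prices come from the article, while the workload figures (tokens per request, requests per day) and the `monthly_cost_range` helper are hypothetical.

```python
from decimal import Decimal
from typing import Tuple

# Per-token price range quoted in the article, in USD.
PRICE_LOW_USD = Decimal("0.0015")
PRICE_HIGH_USD = Decimal("0.006")


def monthly_cost_range(
    tokens_per_request: int, requests_per_day: int, days: int = 30
) -> Tuple[Decimal, Decimal]:
    """Return the (low, high) cost in USD for the given workload."""
    total_tokens = tokens_per_request * requests_per_day * days
    return total_tokens * PRICE_LOW_USD, total_tokens * PRICE_HIGH_USD


if __name__ == "__main__":
    # Hypothetical workload: 1,000 requests a day at 500 tokens each.
    low, high = monthly_cost_range(tokens_per_request=500, requests_per_day=1000)
    print(f"Estimated monthly cost: {low:,.2f} to {high:,.2f} USD")
```

Under those assumed volumes, 15 million tokens a month would cost between 22,500 and 90,000 USD, which illustrates why high-volume users would need to model usage before committing.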

The Unspoken Cost of Convenience

At its core, this feature exemplifies the AI economy’s trade-off: convenience for control. While ChatGPT’s financial advice