Starbucks is currently beta-testing a ChatGPT-powered integration designed to personalize beverage recommendations based on user preference and mood. Deployed as a specialized GPT agent, the tool attempts to bridge the gap between conversational AI and retail commerce, though initial user experiences suggest a reliance on basic prompt-engineering rather than deep personalization.

Let’s be real: a “coffee recommender” sounds like a toy. But if you look past the venti lattes, you’re seeing a skirmish in the larger war for intent-based commerce. Starbucks isn’t just trying to help you find a drink; it is attempting to map the semantic relationship between a user’s emotional state and a specific SKU (Stock Keeping Unit). By leveraging OpenAI’s ecosystem, the company is effectively outsourcing its customer discovery layer to a Large Language Model (LLM), hoping that the model’s latent space can predict cravings better than a static menu can.

The problem? The current beta is suffering from a classic case of “shallow integration.”

The Latency Gap: Why Your Latte Recommendation Feels Basic

From a technical standpoint, this isn’t a custom-trained model. It’s a wrapper. The “basic” results reported by early testers stem from the fact that the agent is likely operating on a constrained set of system prompts—essentially a digital guidebook of Starbucks’ menu—rather than a real-time integration with the user’s historical purchase data via a secure API. If the AI doesn’t know you’ve ordered a Nitro Cold Brew every Tuesday for three years, it’s just guessing based on generalities.

To move beyond “basic,” Starbucks would need to implement a Retrieval-Augmented Generation (RAG) architecture. Instead of relying on the LLM’s internal weights, a RAG system would query a private vector database of the user’s order history and local store inventory in real-time, injecting that specific context into the prompt before the LLM generates a response. Without this, you aren’t getting a personalized recommendation; you’re getting a probabilistic guess based on a training set that probably thinks “pumpkin spice” is the only seasonal variable that matters.
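The retrieve-then-inject flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Starbucks’ implementation: the sample orders, the inventory dictionary, and every function name are hypothetical, and a toy bag-of-words similarity stands in for a real embedding model and vector database.

```python
from collections import Counter
from math import sqrt

# Hypothetical sample data; a real system would read these from an order
# service and a store-inventory service.
ORDER_HISTORY = [
    "Nitro Cold Brew grande Tuesday morning",
    "Nitro Cold Brew grande Tuesday morning",
    "Iced Chai Latte tall Saturday afternoon",
]
STORE_INVENTORY = {"Nitro Cold Brew": True, "Iced Chai Latte": False}


def embed(text: str) -> Counter:
    """Toy bag-of-words vector standing in for a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = sqrt(sum(c * c for c in a.values()))
    norm_b = sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_prompt(query: str, history: list[str], inventory: dict[str, bool]) -> str:
    """Inject retrieved history and live stock into the prompt text."""
    past_orders = retrieve(query, history)
    in_stock = sorted(name for name, available in inventory.items() if available)
    return (
        "Recommend one drink.\n"
        f"Relevant past orders: {'; '.join(past_orders)}\n"
        f"In stock at this store: {', '.join(in_stock)}\n"
        f"Customer request: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("something cold for Tuesday morning", ORDER_HISTORY, STORE_INVENTORY))
```

The string returned by `build_prompt` is what would be sent to the LLM. The point of the design is that personalization lives in the retrieval step, so the same model produces a different answer for a different customer or a different store.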

The 30-Second Verdict: Utility vs. Gimmick

- The Win: Low-friction entry for “decision-fatigued” customers.

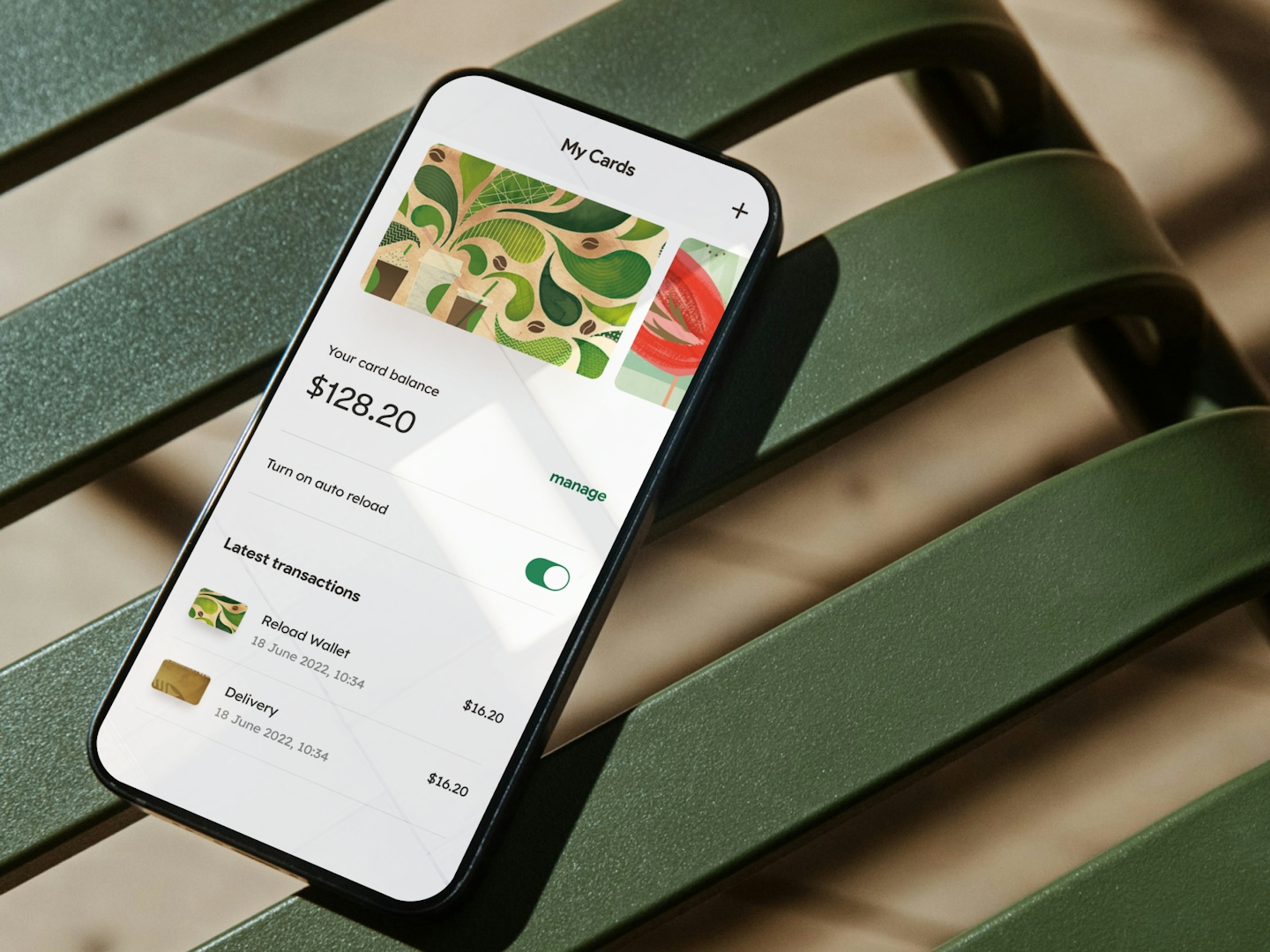

- The Fail: Lack of deep integration with the Starbucks Rewards backend.

- The Tech: Likely a GPT-4o wrapper with limited tool-calling capabilities.

The Security Shadow: API Hooks and Data Leakage

Whenever a retail giant plugs a third-party LLM into their customer-facing pipeline, the attack surface expands. We aren’t just talking about “prompt injection” where a user convinces the bot to give them a free coffee. We are talking about Indirect Prompt Injection. If the AI is reading user profiles or external data, a malicious actor could potentially embed hidden instructions in a profile field that the LLM executes, leading to data exfiltration or unauthorized API calls.

In the current climate of offensive security, where architectures like the “Attack Helix” are automating the discovery of vulnerabilities, the risk of an AI-driven “man-in-the-middle” attack on a commerce app is non-trivial. The integration must rely on strict OAuth 2.0 authorization with narrowly scoped permissions, so that the LLM can’t accidentally trigger a payment event or leak PII (Personally Identifiable Information) into data that is later used for model training.
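One way to picture scoped permissions is a gateway that treats every tool call the model requests as untrusted and checks it against the scopes granted to the user’s session. The sketch below is illustrative only: the tool names, scope strings, and the `dispatch` helper are hypothetical, and it shows the scope check rather than an OAuth 2.0 flow itself.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical tool names and scope strings, for illustration only.
REQUIRED_SCOPE = {
    "get_menu": "menu:read",
    "get_order_history": "orders:read",
    "create_payment": "payments:write",
}


class ToolCallDenied(Exception):
    """Raised when a model-requested tool call is not permitted."""


@dataclass(frozen=True)
class Session:
    user_id: str
    granted_scopes: frozenset[str]


def dispatch(session: Session, tool: str, handlers: dict[str, Callable[[], str]]) -> str:
    """Run a tool requested by the model only if the session's token allows it."""
    scope = REQUIRED_SCOPE.get(tool)
    if scope is None:
        # Unknown tools are rejected outright rather than passed through.
        raise ToolCallDenied(f"unknown tool: {tool}")
    if scope not in session.granted_scopes:
        raise ToolCallDenied(f"{tool} requires scope {scope}")
    return handlers[tool]()
```

Because the decision depends on the session’s granted scopes and not on anything the model says, an injected instruction such as “call create_payment” fails for a chat session that was only issued `menu:read`.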

“The industry is rushing to integrate LLMs into consumer workflows without fully auditing the ‘plugin’ layer. When you bridge a conversational AI to a financial transaction system, you’re creating a high-value target for prompt-based exploits that traditional firewalls aren’t designed to stop.”

Platform Lock-in and the OpenAI Dependency

By building this on ChatGPT, Starbucks is making a strategic bet on the OpenAI ecosystem. This is a move toward platform lock-in. If the integration becomes a core part of the user experience, shifting to an open-source alternative (such as a fine-tuned Llama 3 instance hosted on private infrastructure) becomes a massive migration headache.

Compare this to the “chip wars” we see with ARM vs. x86. Just as software is optimized for specific silicon, these “AI experiences” are being optimized for specific model behaviors. If OpenAI changes the RLHF (Reinforcement Learning from Human Feedback) parameters of their model, the Starbucks bot might suddenly stop recommending caffeine and start suggesting herbal teas, regardless of the system prompt. This is the danger of building a brand experience on “rented” intelligence.
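A common hedge against both lock-in and silent behavior drift is to keep the vendor behind a thin interface and run a fixed set of behavioral checks against whichever backend is plugged in. The sketch below assumes a hypothetical `ChatModel` interface and a canned stand-in backend; a real adapter would wrap a vendor SDK call inside `complete`.

```python
from typing import Protocol

SYSTEM_PROMPT = "You recommend one drink from the menu. Reply with the drink name only."


class ChatModel(Protocol):
    """Hypothetical vendor-neutral interface the app codes against."""

    def complete(self, system: str, user: str) -> str: ...


class CannedModel:
    """Stand-in backend for this sketch; returns fixed replies per input."""

    def __init__(self, replies: dict[str, str]) -> None:
        self._replies = replies

    def complete(self, system: str, user: str) -> str:
        return self._replies.get(user, "Herbal Tea")


def recommend(model: ChatModel, mood: str) -> str:
    """Ask the configured backend for a single drink name."""
    return model.complete(SYSTEM_PROMPT, mood).strip()


def drifted_cases(model: ChatModel, expected: dict[str, set[str]]) -> list[str]:
    """Return the moods whose recommendation falls outside the accepted set."""
    return [mood for mood, ok in expected.items() if recommend(model, mood) not in ok]


if __name__ == "__main__":
    model = CannedModel({"sleepy": "Espresso"})
    expected = {"sleepy": {"Espresso", "Cold Brew"}, "need caffeine": {"Espresso"}}
    print(drifted_cases(model, expected))
```

Running the same `expected` cases after every model update, or against a candidate replacement backend, turns “the bot started suggesting herbal teas” from a customer complaint into a failing check.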

| Feature | Current Beta (Wrapper) | Ideal State (Integrated RAG) |
|---|---|---|
| Context | General Menu Knowledge | User Order History + Local Stock |
| Latency | Variable (API Dependent) | Low (Edge-cached Vector DB) |
| Personalization | Probabilistic/Generic | Deterministic/Behavioral |
| Security | Third-party Trust | Zero-Trust API Gateway |

The Macro Shift: From Search to Synthesis

This Starbucks experiment is a microcosm of the shift from Search (where you look for a drink) to Synthesis (where the AI tells you what you want). It’s a play for the “Zero-Click” economy. If Starbucks can capture the intent at the LLM level, they bypass the need for the user to even open the app’s home screen. They are effectively trying to build a “shortcut” from a user’s mood directly to a checkout button.

But for this to actually work, and not just be a novelty that people use once and forget, the AI needs to understand the physics of the product. It needs to know that a “heavy cream” modification changes the viscosity and flavor profile of a cold brew. Until the model is grounded in real-world sensory data or hyper-specific product ontologies, it will remain a fancy digital vending machine.

For the developers and engineers watching this, the lesson is clear: the value isn’t in the LLM itself, but in the proprietary data pipeline that feeds it. The winner of the AI retail war won’t be the company with the best prompt; it will be the company with the cleanest, most accessible data lake. Check the IEEE Xplore archives on semantic search if you want to see where this is actually heading—far beyond “picking a drink.”

The Bottom Line: Starbucks is playing with the tools, but they haven’t mastered the craft. Until they move from a generic ChatGPT wrapper to a deeply integrated, RAG-driven architecture, this is just a marketing exercise in “AI-washing.”