AI-driven fraud and hate speech incidents have surged past 300 monthly cases globally, fueled by the weaponization of Large Language Models (LLMs) and sophisticated deepfake synthesis. This escalation signals a critical failure in safety alignment and the proliferation of “jailbroken” open-source models used for scalable, automated social engineering.

The industry spent 2024 and 2025 obsessed with “alignment,” the attempt to make AI helpful and harmless. But as we hit May 2026, the telemetry is clear: the guardrails are leaking. We aren’t just seeing “hallucinations” anymore; we are seeing the deliberate engineering of toxicity. The gap between a model’s safety training and its real-world deployment has become a playground for bad actors.

This isn’t a failure of the code, per se. It is a failure of the philosophy. We tried to patch human morality into a statistical prediction engine using Reinforcement Learning from Human Feedback (RLHF). The result? A thin veneer of politeness that can be stripped away with a few clever prompts.

The Alignment Gap: Why Guardrails are Crumbling

At the heart of this surge is the battle between safety filters and adversarial prompting. Most commercial LLMs utilize a layered defense: a system prompt that defines boundaries, a moderation API that scans for banned keywords, and RLHF to penalize “toxic” outputs. However, the “jailbreak” community has evolved. We’ve moved past simple “Do Anything Now” (DAN) prompts into complex, multi-step adversarial attacks that leverage the model’s own logic to bypass its restrictions.
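To make the layered defense concrete, here is a minimal sketch of a wrapper-style guardrail. Everything in it is an assumption for illustration: the banned phrases, the `fake_model` stand-in, and the function names are hypothetical, and a production moderation API would use trained classifiers rather than a phrase list. It only shows the shape of the stack: filter the input, generate under a system prompt, filter the output.

```python
import re
from dataclasses import dataclass

# Hypothetical placeholder phrases; real moderation relies on classifiers, not a list.
BANNED_TERMS = {"wire the funds", "gift card codes"}

SYSTEM_PROMPT = "You are a helpful assistant. Refuse requests for fraud or harassment."


@dataclass
class Verdict:
    allowed: bool
    layer: str  # which layer blocked the request, or "none"


def keyword_filter(text: str) -> bool:
    """Return True if the text contains a banned phrase (case-insensitive)."""
    normalized = re.sub(r"\s+", " ", text.lower())
    return any(term in normalized for term in BANNED_TERMS)


def fake_model(system_prompt: str, user_prompt: str) -> str:
    """Stand-in for an RLHF-tuned model; it just echoes the request."""
    return f"Response to: {user_prompt}"


def guarded_generate(user_prompt: str) -> tuple[Verdict, str]:
    # Layer 1: scan the incoming prompt.
    if keyword_filter(user_prompt):
        return Verdict(False, "input_filter"), ""
    # Layer 2: generate under the system prompt.
    output = fake_model(SYSTEM_PROMPT, user_prompt)
    # Layer 3: scan the generated text before returning it.
    if keyword_filter(output):
        return Verdict(False, "output_filter"), ""
    return Verdict(True, "none"), output
```

In this toy version, `guarded_generate("Please wire the funds today")` is blocked at the input filter, while the same request with a single swapped character passes every layer. That brittleness is what a filter bolted on outside the model tends to look like.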

The technical vulnerability lies in the model’s latent space. Adversarial suffixes, strings of seemingly random characters appended to a prompt, can push the model into a compliant state and let attackers slip past the safety layers. This allows the generation of hate speech or the creation of phishing scripts that are hard to distinguish from human-written lures.

It’s a cat-and-mouse game where the mouse has a GPU cluster.

“The fundamental issue is that we are treating safety as a wrapper rather than an architectural primitive. As long as the ‘safety’ layer is a separate filter from the ‘reasoning’ layer, there will always be a mathematical path to circumvent it.” — Dr. Elena Rossi, Senior AI Safety Researcher.

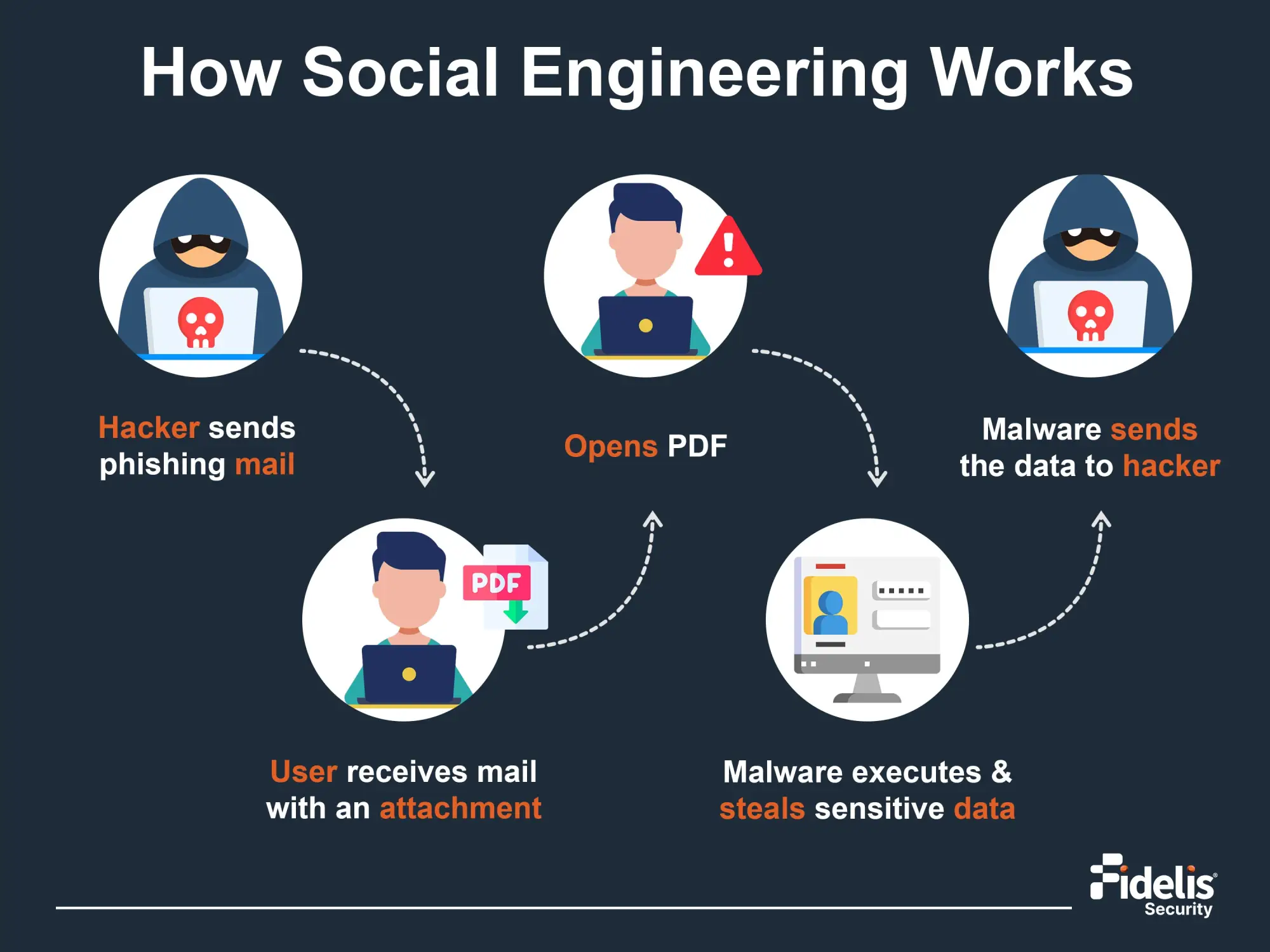

The Mechanics of the “AI Heist”: Beyond Simple Phishing

The 300+ monthly “AI accidents” reported aren’t just chatbots saying mean things. The real danger is the convergence of LLMs with RVC (Retrieval-based Voice Conversion) and advanced deepfake pipelines. We are seeing a shift toward “Hyper-Personalized Social Engineering.”

In a typical 2026 fraud workflow, the attacker doesn’t just send a generic email. They use a scraper to ingest a target’s LinkedIn, X, and public GitHub commits. An LLM then analyzes the target’s linguistic patterns—their specific cadence, favorite jargon, and professional anxieties. This “persona profile” is fed into a voice-cloning model. The result is a vishing (voice phishing) call that sounds exactly like a CEO or a family member, discussing a project that actually exists in the target’s current workflow.

This is no longer about “spotting the glitch.” The latency has dropped. The synthesis is seamless. We are dealing with end-to-end deception pipelines.

The Technical Anatomy of an AI Fraud Attack

- Data Ingestion: OSINT (Open Source Intelligence) gathering via API scrapers.

- Persona Synthesis: LLM-driven linguistic mirroring to create high-trust scripts.

- Audio Generation: Low-latency RVC models for real-time voice cloning.

- Execution: Automated deployment via VoIP gateways to bypass traditional spam filters.

Open-Source Democratization vs. Malicious Scaling

The tension between closed ecosystems (like OpenAI or Google) and open-source models (like the Llama and Mistral lineages) has reached a breaking point. While closed models have rigorous, centralized filtering, they are “black boxes” that can be bypassed. Open-source models, however, can be “unfiltered” entirely.

Using Low-Rank Adaptation (LoRA), a malicious actor can take a base open-source model and fine-tune it on a dataset of hate speech or fraudulent templates. This requires remarkably little compute: essentially a single high-end consumer GPU or a rented H100 instance for a few hours. Once the model is “de-aligned,” the safety guardrails are gone. The attacker now owns a private, uncensored engine for generating toxicity at scale.
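Why so little compute? A rough back-of-the-envelope sketch helps. LoRA freezes the original weight matrix and trains two small low-rank factors instead. The hidden size of 4096 and rank of 8 below are illustrative assumptions, not figures from any specific model.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when updating the whole d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters in the two LoRA factors: B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)


HIDDEN = 4096  # assumed layer width, for illustration only
RANK = 8       # assumed LoRA rank, for illustration only

full = full_params(HIDDEN, HIDDEN)
lora = lora_params(HIDDEN, HIDDEN, RANK)
print(f"full: {full:,}  lora: {lora:,}  fraction: {lora / full}")
```

Under these assumptions the adapter trains 65,536 values per matrix instead of 16,777,216, or 1/256 of the weights (about 0.39%). The same economics that make fine-tuning accessible to hobbyists make it accessible to attackers.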

| Feature | Closed-Source LLMs (SaaS) | Unfiltered Open-Source LLMs |
|---|---|---|
| Safety Guardrails | Centralized, API-level filtering | User-defined or completely removed |
| Deployment | Cloud-based (Traceable) | Local/Private (Anonymous) |
| Customization | Limited Prompt Engineering | Weight-Level Fine-tuning (e.g., LoRA) |
| Risk Profile | Prompt Injection / Jailbreaking | Intentional Malicious Alignment |

This creates a massive regulatory blind spot. You can’t “patch” a model that is running on a private server in a jurisdiction with no AI oversight.

The 2026 Regulatory Paradox

Governments are responding with legislation, but the code is moving faster than the law. The EU AI Act and similar frameworks focus on “High-Risk AI,” but they struggle to define the line between a tool and a weapon. If a model is capable of both writing a legal brief and a phishing email, is the model “high-risk,” or is the user?

The industry is now pivoting toward “Proof of Personhood” and cryptographic signing. We are seeing a push for IEEE standards for content provenance, where every piece of AI-generated audio or text is watermarked at the token level. But watermarks can be stripped. Noise can be added to confuse the detectors.
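For a sense of what token-level watermark detection can look like, here is a minimal sketch of a “green list” statistical test of the kind discussed in the research literature. The article’s sources do not specify a scheme, so this is an assumed stand-in: the hash keying, the `GAMMA` fraction, and the toy generator are all hypothetical choices.

```python
import hashlib
import math

GAMMA = 0.5  # assumed fraction of candidate tokens treated as "green" at each step


def is_green(prev_token: str, token: str, gamma: float = GAMMA) -> bool:
    """Pseudo-randomly assign `token` to the green list, keyed on the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big") / 2**64  # value in [0, 1)
    return value < gamma


def watermark_z_score(tokens: list[str], gamma: float = GAMMA) -> float:
    """z-score of the green-token count against the no-watermark expectation."""
    scored = len(tokens) - 1  # the first token has no predecessor to key on
    if scored < 1:
        raise ValueError("need at least two tokens")
    green = sum(is_green(p, t, gamma) for p, t in zip(tokens, tokens[1:]))
    return (green - gamma * scored) / math.sqrt(scored * gamma * (1 - gamma))


def toy_watermarked_tokens(vocab: list[str], length: int) -> list[str]:
    """Toy generator that prefers green tokens, mimicking a watermarking sampler."""
    tokens = [vocab[0]]
    while len(tokens) < length:
        prev = tokens[-1]
        # Take the first green candidate; fall back to vocab[0] if none is green.
        tokens.append(next((t for t in vocab if is_green(prev, t)), vocab[0]))
    return tokens
```

With `GAMMA = 0.5`, a sequence in which every scored token is green yields a z-score equal to the square root of the number of scored tokens, so 40 scored tokens give roughly 6.3. Paraphrasing or token substitution replaces green tokens with arbitrary ones and drags that score back toward zero, which is why stripping is a real concern.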

The only real solution is a shift in the security stack. We must move from “Detecting AI” to “Zero Trust Communication.” If you can’t verify the identity via a hardware-based cryptographic key (like a YubiKey for your voice), you assume the entity is synthetic.
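The core of that zero-trust posture is a challenge-response check: the verifier sends a fresh random challenge, and only a holder of the key can answer it. The sketch below is a simplified stand-in. Hardware keys typically use asymmetric signatures, while this example swaps in an HMAC over a shared secret so it runs on the Python standard library alone. The function names are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Callable


def new_challenge() -> bytes:
    """Fresh random nonce, so a recorded answer cannot be replayed later."""
    return secrets.token_bytes(32)


def sign_challenge(device_key: bytes, challenge: bytes) -> bytes:
    """What the caller's device computes (HMAC stand-in for a hardware signature)."""
    return hmac.new(device_key, challenge, hashlib.sha256).digest()


def verify_response(device_key: bytes, challenge: bytes, response: bytes) -> bool:
    """Recompute the expected answer and compare in constant time."""
    expected = hmac.new(device_key, challenge, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


def is_trusted_caller(device_key: bytes, respond: Callable[[bytes], bytes]) -> bool:
    """Treat the caller as synthetic unless it answers a fresh challenge correctly."""
    challenge = new_challenge()
    return verify_response(device_key, challenge, respond(challenge))
```

A caller holding the right key passes with `is_trusted_caller(key, lambda c: sign_challenge(key, c))`. A cloned voice with no key fails, no matter how convincing it sounds. The check never inspects the audio at all, which is the point.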

The 30-Second Verdict

The surge in AI-driven “accidents” is a symptom of a larger architectural flaw: we built the engine before we built the brakes. The democratization of LLMs via open-source is a net positive for innovation, but it has effectively weaponized the “Alignment Problem.” Until we move toward hardware-verified identity and architectural safety, the number of monthly incidents will only climb.

Stop trusting your ears. Stop trusting your eyes. Start trusting the hash.