A German clinic project is using high-impact trauma education to warn students about the lethal consequences of drunk driving. While psychological deterrence is a baseline, the industry is pivoting toward AI-driven Driver Monitoring Systems (DMS) that use edge-computing NPUs to detect impairment and trigger vehicle lockouts in real time.

Awareness campaigns are a legacy solution for a systemic failure. Warning a teenager about the visceral reality of a crash is a necessary social lubricant, but it doesn’t solve the latency problem of human decision-making under the influence. In the silicon valley of automotive safety, we are moving past “don’t do it” and toward “the car won’t let you.”

The gap between a student’s understanding of risk and their actual behavior is where awareness campaigns fall short. To bridge this, the industry is integrating sophisticated computer vision (CV) and biometric sensors directly into the cockpit. We aren’t talking about simple cameras; we are talking about infrared (IR) arrays capable of tracking saccadic eye movements and pupillary dilation, biomarkers that are nearly impossible to fake when blood alcohol concentration (BAC) rises.
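To make the saccade signal concrete, here is a minimal sketch of velocity-threshold saccade detection (often called I-VT) on a one-dimensional gaze trace. The function name, the 30 deg/s threshold, and the sample values are illustrative assumptions, not parameters from any production DMS. Spotting saccades is only a building block; it says nothing about BAC on its own.

```python
def saccade_mask(angles_deg, sample_rate_hz, velocity_threshold_deg_s=30.0):
    """Flag each inter-sample step whose angular velocity exceeds the threshold.

    angles_deg: horizontal gaze angle per sample, in degrees (assumed input).
    Returns one boolean per pair of consecutive samples.
    """
    dt = 1.0 / sample_rate_hz
    velocities = [abs(b - a) / dt for a, b in zip(angles_deg, angles_deg[1:])]
    return [v > velocity_threshold_deg_s for v in velocities]


# Made-up trace sampled at 100 Hz: slow drift, one fast jump, slow drift again.
trace = [0.0, 0.1, 0.2, 5.2, 5.3]
print(saccade_mask(trace, sample_rate_hz=100.0))
```

Only the jump from 0.2 to 5.2 degrees crosses the threshold here. A real pipeline would work on two-dimensional gaze vectors and filter sensor noise before thresholding.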

The Silicon Shield: Why NPUs are Replacing the Breathalyzer

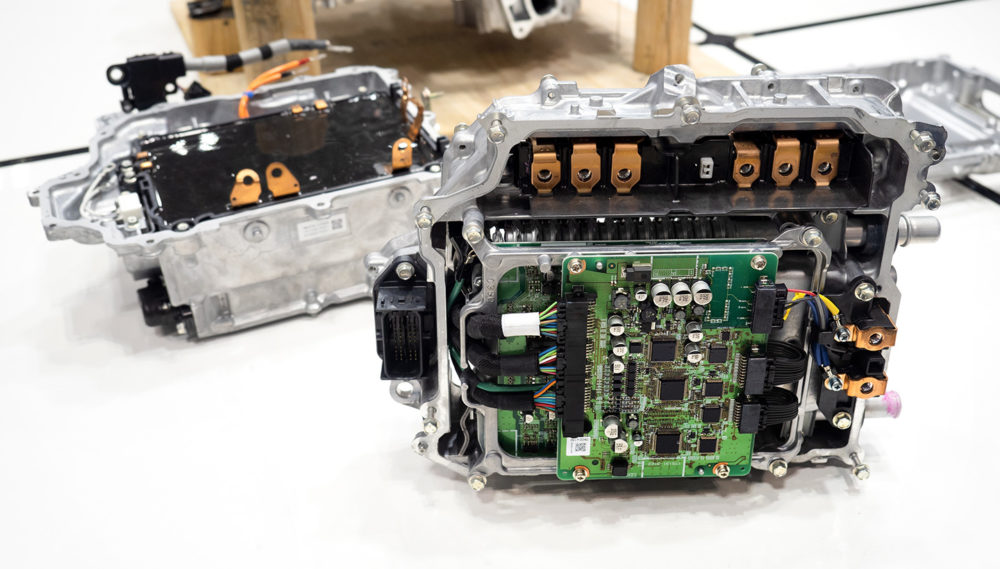

The traditional breathalyzer is a reactive tool. Modern safety architecture demands a proactive, always-on sentinel. This is where the Neural Processing Unit (NPU) comes into play. By offloading the heavy lifting of gaze tracking and facial expression analysis from the main CPU to a dedicated NPU, vehicles can achieve sub-millisecond latency in impairment detection.
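Latency is easier to reason about as distance. The sketch below converts a decision delay into metres travelled at a given speed. The scenario names and latency values are assumed for illustration and are not measurements of any real system.

```python
def frame_interval_ms(fps: float) -> float:
    """Time between consecutive camera frames, in milliseconds."""
    return 1000.0 / fps


def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres covered while a decision is still in flight."""
    metres_per_second = speed_kmh * 1000.0 / 3600.0
    return metres_per_second * latency_ms / 1000.0


# Assumed, illustrative latencies (not benchmarks).
scenarios = {
    "on-device inference": 5.0,
    "one frame at 30 fps": frame_interval_ms(30.0),
    "remote round trip": 150.0,
}

for name, latency_ms in scenarios.items():
    metres = distance_travelled_m(120.0, latency_ms)
    print(f"{name}: {latency_ms:.1f} ms -> {metres:.2f} m at 120 km/h")
```

At 120 km/h the car covers about 5 m during a 150 ms delay. Note that the camera's own frame interval (roughly 33 ms at 30 fps) also bounds how quickly any detector can react, however fast the accelerator is.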

When a driver’s cognitive load increases or their reaction time lags (typical markers of alcohol consumption), the DMS identifies the pattern via a pre-trained model optimized for edge deployment. This isn’t cloud-based; the processing happens locally to avoid the catastrophic latency of a 5G handshake during a critical braking event. If the system detects a “high-probability impairment” state, it doesn’t just beep; it interfaces with the vehicle’s control logic.
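One plausible way to turn noisy per-frame model outputs into a stable “high-probability impairment” state is exponential smoothing plus hysteresis. The sketch below is an assumption about how such a gate could look, not a description of any shipping DMS. The class name, the smoothing factor, and both thresholds are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ImpairmentGate:
    alpha: float = 0.1      # smoothing factor (assumed)
    flag_at: float = 0.8    # smoothed score needed to raise the flag (assumed)
    clear_at: float = 0.6   # smoothed score needed to drop it again (assumed)
    score: float = 0.0
    flagged: bool = False

    def update(self, frame_probability: float) -> bool:
        """Fold one per-frame probability into the running score."""
        self.score = (self.alpha * frame_probability
                      + (1.0 - self.alpha) * self.score)
        if not self.flagged and self.score >= self.flag_at:
            self.flagged = True
        elif self.flagged and self.score <= self.clear_at:
            self.flagged = False
        return self.flagged


gate = ImpairmentGate()
glare = [gate.update(p) for p in [0.0, 1.0, 0.0, 0.0]]   # one spurious frame
sustained = [gate.update(1.0) for _ in range(30)]        # persistent signal
print(any(glare), sustained[-1])  # False True
```

A single outlier frame never raises the flag, while a sustained signal does. The gap between the two thresholds keeps the state from flickering when the score hovers near the boundary.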

The 30-Second Verdict on DMS Hardware

- Sensor Suite: Near-infrared (NIR) cameras for night vision and occlusion-tolerant tracking.

- Processing: Specialized AI accelerators (like the NVIDIA DRIVE Orin) whose throughput is rated in tera-operations per second (TOPS), meaning trillions of operations per second.

- Action: Direct integration with the Electronic Control Unit (ECU) to limit speed or prevent ignition.

The engineering challenge here is the “False Positive” paradox. If a system locks a driver out because they are simply tired or squinting in the sun, the user experience (UX) collapses, and the driver will find a way to bypass the system. This is why functional safety standards are under pressure to include more nuanced biometric validation.
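The false-positive problem is largely a base-rate problem, and a few lines of arithmetic show why. The sketch below applies Bayes' rule to compute how many lockouts would actually involve an impaired driver. The prevalence, sensitivity, and specificity figures are assumptions chosen for illustration, not data about any real system.

```python
def positive_predictive_value(prevalence: float,
                              sensitivity: float,
                              specificity: float) -> float:
    """Probability that a flagged trip really involves an impaired driver."""
    true_positives = prevalence * sensitivity
    false_positives = (1.0 - prevalence) * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


# Assumed numbers: 1% of trips impaired, 95% sensitivity, 99% specificity.
ppv = positive_predictive_value(prevalence=0.01,
                                sensitivity=0.95,
                                specificity=0.99)
print(f"Share of lockouts that are justified: {ppv:.1%}")
```

Under these assumptions just under half of all lockouts hit an impaired driver; the rest hit sober ones. Even a detector that looks excellent on paper produces a steady stream of wrongful lockouts when impairment is rare.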

Interrupting the CAN Bus: The Mechanics of the Lockout

To understand how a clinic’s warning becomes a technical reality, we have to look at the Controller Area Network (CAN bus). The CAN bus is the nervous system of the car, allowing microcontrollers to communicate without a host computer. A sophisticated impairment system doesn’t just send a warning to the dashboard; it places a high-priority frame on the CAN bus (on CAN, the frame with the lowest identifier wins arbitration) so that the interlock message takes precedence over driver input.

By utilizing an “Interlock” architecture, the software can physically prevent the transmission from shifting out of ‘Park’ if the biometric threshold for sobriety isn’t met. This creates a hard-coded barrier that renders the driver’s intent irrelevant.
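The interplay of bus priority and interlock state can be sketched as a toy simulation in pure Python. It models only one real CAN property: when several frames contend, the lowest identifier wins arbitration. The message IDs, payload encoding, and `Transmission` class are hypothetical; real vehicles use OEM-specific message definitions.

```python
from dataclasses import dataclass

INTERLOCK_ID = 0x010       # hypothetical ID: low value, so high priority
SHIFT_REQUEST_ID = 0x1A0   # hypothetical ID for the driver's shift request


@dataclass(frozen=True)
class Frame:
    can_id: int   # lower identifier wins arbitration
    data: bytes


def arbitrate(pending):
    """Return the frame that would win the bus among contending frames."""
    return min(pending, key=lambda frame: frame.can_id)


class Transmission:
    def __init__(self):
        self.gear = "P"
        self.interlock_engaged = True   # locked until explicitly released

    def handle(self, frame):
        if frame.can_id == INTERLOCK_ID:
            # Fail closed: only an explicit 0x00 payload releases the lock.
            self.interlock_engaged = frame.data[:1] != b"\x00"
        elif frame.can_id == SHIFT_REQUEST_ID:
            if self.gear == "P" and self.interlock_engaged:
                return   # request ignored, the car stays in Park
            self.gear = frame.data.decode("ascii")


def deliver(pending, node):
    """Hand contending frames to a node in arbitration order."""
    queue = list(pending)
    while queue:
        winner = arbitrate(queue)
        queue.remove(winner)
        node.handle(winner)


car = Transmission()
deliver([Frame(SHIFT_REQUEST_ID, b"D"), Frame(INTERLOCK_ID, b"\x01")], car)
print(car.gear)   # P: the interlock frame arrived first and blocked the shift
deliver([Frame(SHIFT_REQUEST_ID, b"D"), Frame(INTERLOCK_ID, b"\x00")], car)
print(car.gear)   # D: the interlock was released before the shift request
```

The design choice worth noting is the fail-closed default: the transmission starts locked and treats any payload other than an explicit release as “stay locked”.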

“The shift from passive safety—like airbags—to active intervention requires a fundamental rewrite of the vehicle’s trust model. We are moving toward a ‘Zero Trust’ architecture where the vehicle continuously verifies the operator’s fitness to drive.”

This architectural shift is not without its rivals. While proprietary stacks from OEMs (Original Equipment Manufacturers) dominate, there is a growing movement toward open-source safety frameworks. Developers on GitHub are experimenting with open-source computer vision libraries that could democratize these safety features for older vehicle retrofits, potentially saving thousands of lives in markets where new, AI-integrated cars are unaffordable.

The Privacy Paradox and the Biometric Black Box

Here is where the “geek-chic” optimism hits the wall of regulation. To save a student from a fatal crash, the car must essentially surveil them. The system requires a constant stream of high-resolution biometric data: where you are looking, how your pupils are reacting, and even the micro-tremors in your facial muscles.

In the EU, this triggers a massive GDPR collision. Is the data stored? Is it hashed locally, or does it leak to the cloud? If a car detects impairment, does that data automatically trigger a police report? The “Black Box” problem becomes an ethical minefield. If the AI decides you are too drunk to drive, but you are in a medical emergency, the system becomes a liability rather than a lifesaver.

We are seeing a tension between “Closed Ecosystems” (like Tesla’s vertical integration) and “Open Standards” (like those pushed by Mobileye). Closed systems can iterate faster, but open standards provide the transparency needed for legal and ethical audits.

From Classroom Warnings to Code-Based Enforcement

The clinic project’s approach of showing students the “deadly consequences” is a vital psychological primer, but it is an analog solution in a digital age. The future of road safety isn’t found in a lecture hall; it’s found in the weights and biases of a neural network.

As we roll out these updates in the coming beta cycles of 2026, the goal is a seamless transition from education to automation. We are moving toward a world where the “choice” to drive under the influence is removed from the equation entirely. The code becomes the conscience.

For the developers and engineers building these systems, the mandate is clear: minimize latency, maximize biometric accuracy, and ensure that the lockout mechanism is as immutable as the laws of physics. Because when the alternative is a coffin, “user friction” is a price we are more than willing to pay.