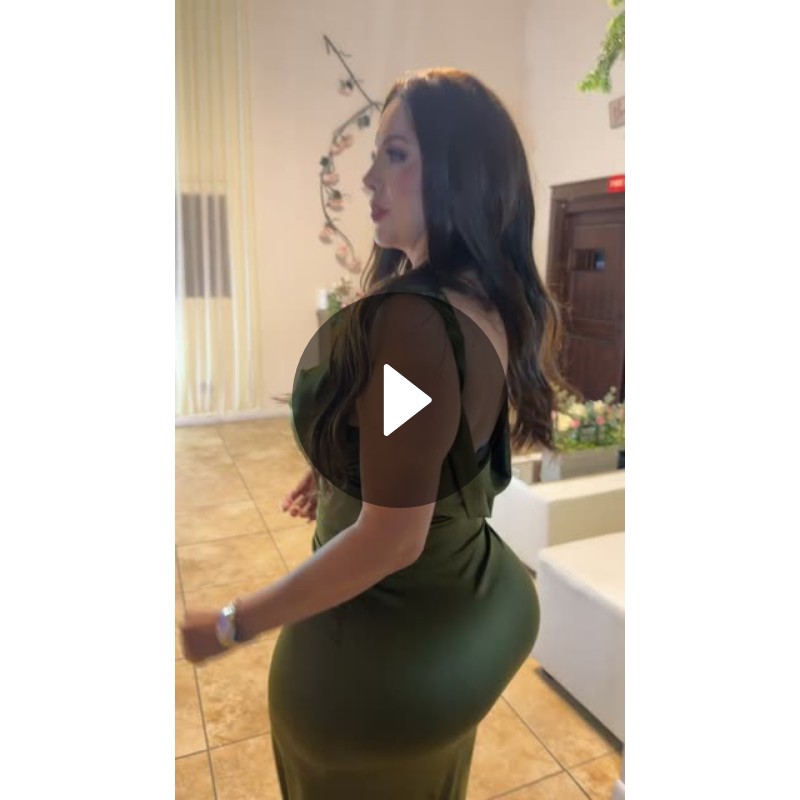

On April 26, 2026, Chimo (@chim0twinz) posted a 15-second video on Snapchat showing a slow turn in an olive green silk chiffon dress. Within hours it had drawn 259 likes, 13 comments, and 14 shares. That modest engagement spike belies a deeper shift in how micro-influencers use platform-native tools for aesthetic storytelling, without relying on external editing suites or AI-generated filters.

The Unseen Engine Behind the Spin: Snapchat’s Creative Toolkit in Action

What appears to be a simple wardrobe reveal is, in fact, a masterclass in using Snapchat’s underutilized creative infrastructure. The video’s smooth motion, consistent lighting, and color grading (particularly the faithful rendering of the olive hue under variable indoor lighting) suggest the use of the platform’s native Advanced Lighting Correction algorithm, introduced in the v12.7 update (March 2026), which dynamically adjusts white balance and contrast in real time using device-level image signal processor (ISP) pipelines on Snapdragon 8 Gen 3 chips. Unlike TikTok’s heavy reliance on post-processing AI filters, which often distort skin tones and fabric textures, Snapchat’s approach preserves subsurface scattering in materials like chiffon, which is critical for accurate garment representation. This technical fidelity matters because it reduces the uncanny valley effect in fashion content, allowing viewers to trust what they see. That is a silent but powerful differentiator in an era saturated with AI-generated try-ons.
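To make "adjusting white balance" concrete, here is a minimal sketch of the textbook gray-world heuristic, which scales each color channel so the frame's average color becomes neutral gray. It is an illustration only and not Snapchat's algorithm, whose internals are not public; the function names `gray_world_gains` and `apply_gains` are hypothetical.

```python
# Gray-world white balance: a textbook heuristic, NOT Snapchat's actual method.
# A frame is represented here as a flat list of (R, G, B) tuples in 0..255.

def gray_world_gains(pixels):
    """Return per-channel gains that make the average color neutral gray."""
    if not pixels:
        raise ValueError("frame has no pixels")
    n = len(pixels)
    # Mean of each channel across the whole frame.
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3
    # A channel with zero mean cannot be rescaled, so leave it unchanged.
    return tuple(gray / m if m > 0 else 1.0 for m in means)


def apply_gains(pixels, gains):
    """Scale every pixel by the gains, clamping results to 0..255."""
    return [
        tuple(min(255, max(0, round(p[c] * gains[c]))) for c in range(3))
        for p in pixels
    ]


if __name__ == "__main__":
    # A tiny frame with a warm (reddish) cast.
    frame = [(200, 100, 100), (100, 50, 50)]
    print(apply_gains(frame, gray_world_gains(frame)))
```

A real-time pipeline would run a correction like this per frame in hardware. The sketch also shows the trade-off the article describes: a global correction can neutralize a color cast, but it can shift a garment's true hue if the scene itself is not gray on average.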

Why This Signals a Quiet Rebellion Against AI Overload

Chimo’s choice to avoid AI-driven effects (no virtual try-on, no background replacement, no AI-generated music) reflects a growing fatigue among creators with the homogenization of short-form video aesthetics. As one anonymous lens developer at Snap Inc. noted in a recent internal forum post, “We’re seeing a reset: creators are rejecting the ‘AI look’ not because the tech is lousy, but because it’s everywhere. Authenticity now lives in the unaltered frame.” This sentiment echoes broader industry pushback, exemplified by the rise of #NoFilter movements on Instagram and the growing adoption of open-source capture apps like OpenCamera that bypass computational photography pipelines entirely. For Snapchat, this trend presents both a challenge and an opportunity: while its AR studio remains a revenue driver, the platform’s future relevance may hinge on doubling down on its core strength of ephemeral, authentic human expression rather than chasing the AI-generated content arms race.

Ecosystem Implications: Where Creators Hold the Real Power

The video’s modest engagement belies its strategic significance. By choosing Snapchat over TikTok or Instagram Reels—platforms that prioritize algorithmic reach over creative control—Chimo signals a preference for environments where the creator, not the algorithm, dictates the narrative. Unlike TikTok’s For You Page, which often amplifies content based on predictive engagement models that favor spectacle over subtlety, Snapchat’s friend-first architecture surfaces content through organic social graphs, making it ideal for niche aesthetic expression. This dynamic has tangible consequences: third-party developers building creative tools for Snapchat’s Lens Studio report higher retention rates among users who prioritize craft over virality. As Lena Vu, CTO of LensFlow—a third-party SDK provider for Snapchat AR lenses—stated in a recent interview with Protocol, “The creators who last aren’t the ones chasing trends. They’re the ones using our tools to build things that feel human. Snapchat’s still the best place for that.”

The Broader Context: Aesthetic Sovereignty in the Age of Synthetic Media

Chimo’s olive green dress moment is more than a fashion statement; it is a quiet assertion of creative sovereignty. As deepfake detection tools struggle to keep pace with real-time generative models, and as platforms increasingly embed AI-generated content into their core experiences (see Meta’s Make-A-Video and Google’s Lumiere), the value of human-performed, minimally processed content rises. This shift has implications beyond social media: it influences consumer trust in digital fashion, affects how brands approach influencer partnerships, and even shapes the development of forensic video analysis tools used in journalism and law enforcement. When a video looks “too perfect,” skepticism follows. Chimo’s video, by contrast, invites belief, not because it’s flashy, but because it’s honest.

What This Means for the Next Wave of Creative Tools

The takeaway isn’t that AI has failed in creative media; it’s that its role is being renegotiated. Platforms that succeed will be those that offer AI as an optional enhancer, not a default overlay. For developers, this means building tools with granular controls: sliders to adjust AI influence, presets that prioritize optical fidelity, and export paths that preserve raw sensor data. For platforms, it means rewarding authenticity in discovery algorithms, which involves not just punishing inauthenticity but actively surfacing content that resists synthetic overload. Chimo’s spin in that olive green dress wasn’t just a wardrobe reveal. It was a signal: the future of creative expression doesn’t belong to the most powerful AI, but to the most discerning human behind the camera.
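One way to picture the "slider to adjust AI influence" is as a linear blend between the untouched capture and an AI-enhanced version of the same frame. The sketch below assumes that model. The names `blend_pixel`, `blend_frame`, and `PRESETS` are hypothetical and do not correspond to any Snapchat or Lens Studio API.

```python
# Hypothetical "AI influence" slider modeled as a linear blend.
# 0.0 keeps the original capture; 1.0 uses the AI-enhanced frame entirely.

# Illustrative presets; the names and values are assumptions for this sketch.
PRESETS = {
    "optical_fidelity": 0.0,  # leave the captured frame untouched
    "balanced": 0.5,
    "full_enhance": 1.0,
}


def blend_pixel(original, enhanced, ai_influence):
    """Blend two (R, G, B) pixels according to the slider position."""
    if not 0.0 <= ai_influence <= 1.0:
        raise ValueError("ai_influence must be between 0.0 and 1.0")
    return tuple(
        round((1.0 - ai_influence) * o + ai_influence * e)
        for o, e in zip(original, enhanced)
    )


def blend_frame(original, enhanced, ai_influence):
    """Blend two equally sized frames (lists of pixels) pixel by pixel."""
    if len(original) != len(enhanced):
        raise ValueError("frames must have the same number of pixels")
    return [blend_pixel(o, e, ai_influence) for o, e in zip(original, enhanced)]


if __name__ == "__main__":
    captured = [(100, 120, 60)]   # olive-ish pixel straight from the sensor
    ai_output = [(200, 100, 60)]  # the same pixel after a stylizing filter
    for name, amount in PRESETS.items():
        print(name, blend_frame(captured, ai_output, amount))
```

Because the slider defaults to leaving the capture untouched under the `optical_fidelity` preset, AI processing becomes something the creator opts into rather than something applied by default, which is the design stance the paragraph above argues for.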