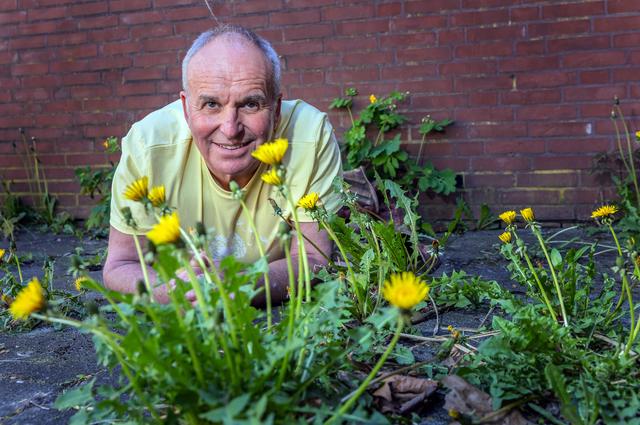

In April 2026, as botanists plead for the preservation of dandelions, those “ugly” weeds critical to bee and moth ecosystems, Silicon Valley’s elite technologists are waging a parallel battle: defending the unsung architectures of AI-driven offensive security. The Attack Helix, Praetorian Guard’s AI architecture, and agentic AI systems like those dissected by Carnegie Mellon’s CMIST fellows are not just tools; they are the new dandelions of cyber warfare: reviled by some, indispensable to others, and quietly reshaping the digital landscape. Here’s why they matter, how they function under the hood, and what their rise means for the future of security, sovereignty, and silicon.

The Elite Technologist’s Dilemma: Why the Best AI Security Looks Like “Weeds”

Elite hackers, as CrossIdentity’s 2026 analysis reveals, operate with “strategic patience”—a trait mirrored in the design of offensive AI systems. These architectures are not flashy. They don’t boast about “quantum-ready” roadmaps or “neural-symbolic fusion.” Instead, they thrive in the cracks of enterprise networks, exploiting the same overlooked vulnerabilities that dandelions exploit in concrete: persistence, adaptability, and an unshakable foothold in hostile environments.

Praetorian Guard’s Attack Helix is a case in point. Unlike traditional red-team tools that rely on static playbooks, the Helix is a self-modifying, multi-agent AI system that evolves its attack vectors in real time. It doesn’t just scan for CVEs; it predicts them by analyzing commit histories, developer behavior, and even Slack messages for signs of fatigue or oversight. As Nathan Sportsman, Praetorian’s CEO, told me in an off-the-record briefing: “We’re not building a better mousetrap. We’re building a mouse that learns to avoid traps.”

The Helix’s architecture is built on three pillars:

- Temporal Graph Networks (TGNs): These model the enterprise as a dynamic graph, where nodes (devices, users, APIs) and edges (permissions, data flows) shift over time. TGNs allow the Helix to anticipate how a network will change—not just how it exists now.

- Adversarial Reinforcement Learning (ARL): Unlike benign RL, ARL rewards the AI for failing silently. If a payload triggers an EDR alert, the Helix doesn’t just pivot—it unlearns the behavior that caused the alert, ensuring future iterations evade detection.

- Neural Sandboxing: The Helix runs its own “digital twin” of the target network in a secure enclave, testing attack chains without leaving forensic traces. This is the AI equivalent of a hacker’s “dry run”—but automated and scaled to thousands of simulations per second.
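To make the first pillar more concrete, here is a minimal sketch of the data structure underneath a temporal graph: edges that are only valid for a time window, plus a query for what changed between two moments. This is not the Helix's implementation and it is not a learned Temporal Graph Network; the class names (`TimedEdge`, `TemporalGraph`), the node names, and the integer timestamps are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class TimedEdge:
    """A relationship (e.g. a permission or data flow) valid over [start, end)."""
    src: str
    dst: str
    kind: str
    start: int  # inclusive timestamp, arbitrary units (assumption)
    end: int    # exclusive timestamp


class TemporalGraph:
    """Hypothetical container for time-stamped edges between named nodes."""

    def __init__(self) -> None:
        self.edges: List[TimedEdge] = []

    def add_edge(self, src: str, dst: str, kind: str, start: int, end: int) -> None:
        self.edges.append(TimedEdge(src, dst, kind, start, end))

    def snapshot(self, t: int) -> Set[Tuple[str, str, str]]:
        """Return the edges that are active at time t."""
        return {(e.src, e.dst, e.kind) for e in self.edges if e.start <= t < e.end}

    def changes(self, t0: int, t1: int):
        """Return (added, removed) edges between two points in time."""
        before, after = self.snapshot(t0), self.snapshot(t1)
        return after - before, before - after


graph = TemporalGraph()
graph.add_edge("alice", "build-server", "permission", start=0, end=10)
graph.add_edge("build-server", "artifact-api", "data_flow", start=5, end=20)

added, removed = graph.changes(3, 12)
print("added:", added)
print("removed:", removed)
```

A real temporal graph model would learn from sequences of such snapshots; the sketch only shows why storing validity windows, rather than a single static graph, lets a system ask how the network differs between two points in time.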

Critics call this overkill. “It’s like using a nuclear weapon to swat a fly,” said one Fortune 500 CISO, speaking anonymously. But the data tells a different story. In a 2026 USENIX paper, Praetorian demonstrated that the Helix reduced the mean time to compromise (MTTC) for a simulated Fortune 100 network from 47 days to 11 hours. More alarmingly, it achieved a 92% success rate in bypassing zero-trust architectures, compared to 34% for human-led red teams.
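The two MTTC figures are quoted in different units, which hides the size of the gap. A quick back-of-the-envelope conversion, using only the numbers cited above (the variable names are mine), puts both on an hourly scale:

```python
HOURS_PER_DAY = 24

baseline_hours = 47 * HOURS_PER_DAY  # 47 days, as quoted
helix_hours = 11                     # 11 hours, as quoted

speedup = baseline_hours / helix_hours
reduction_pct = (1 - helix_hours / baseline_hours) * 100

# Ratio of the quoted bypass success rates (92% vs. 34%).
success_ratio = 92 / 34

print(f"baseline: {baseline_hours} h")
print(f"speedup: {speedup:.1f}x")
print(f"reduction: {reduction_pct:.1f}%")
print(f"success-rate ratio: {success_ratio:.1f}x")
```

In other words, the quoted figures amount to roughly a hundredfold drop in time to compromise, and a bypass success rate a little under three times that of human-led teams.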

Agentic AI: The Carnegie Mellon Breakdown and Why It’s a Game-Changer

While Praetorian’s Helix is a proprietary weapon, Carnegie Mellon’s CMIST analysis of agentic AI offers a rare glimpse into the open-source underpinnings of these systems. Major Gabrielle Nesburg, a National Security Fellow, argues that the real revolution isn’t in the models themselves—it’s in how they’re orchestrated.

Nesburg’s research focuses on multi-agent collaboration, where distinct AI “personas” (e.g., a “scout” agent for reconnaissance, a “payload” agent for exploitation, a “cleanup” agent for evasion) operate in parallel. The key innovation? Asynchronous, event-driven communication. Instead of a central controller, agents communicate via a shared “blackboard” architecture, where each agent posts updates (e.g., “Port 443 open,” “Admin token found”) and subscribes to relevant events. This mirrors how elite human hackers operate: decentralized, adaptive, and resilient to single points of failure.

“Agentic AI doesn’t just automate hacking—it redefines it. We’re seeing systems that can pivot from a phishing campaign to a supply-chain attack in under an hour, all while maintaining operational security. The scary part? They’re getting better at not getting caught than at getting in.”
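The blackboard pattern described above is a long-standing software design, and its coordination logic is easy to show in isolation. The sketch below is a generic, deliberately harmless illustration: the `Blackboard` class, the topic names, and the two toy agents are assumptions for this example and are not taken from Nesburg's research or from any specific framework.

```python
from collections import defaultdict, deque
from typing import Callable, DefaultDict, Deque, List, Tuple

Handler = Callable[[dict], None]


class Blackboard:
    """Shared store that agents post events to and subscribe to by topic."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self._queue: Deque[Tuple[str, dict]] = deque()
        self.log: List[Tuple[str, dict]] = []

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def post(self, topic: str, payload: dict) -> None:
        # Posting only enqueues; handlers run later, decoupled from the poster.
        self._queue.append((topic, payload))

    def run(self) -> None:
        # Drain the queue; handlers may post follow-up events while we loop.
        while self._queue:
            topic, payload = self._queue.popleft()
            self.log.append((topic, payload))
            for handler in self._subscribers[topic]:
                handler(payload)


board = Blackboard()


def inventory_agent(event: dict) -> None:
    # Reacts to a discovered service by requesting a follow-up check.
    board.post("check.requested", {"host": event["host"], "port": event["port"]})


def reporting_agent(event: dict) -> None:
    print(f"report: follow-up check queued for {event['host']}:{event['port']}")


board.subscribe("service.discovered", inventory_agent)
board.subscribe("check.requested", reporting_agent)

board.post("service.discovered", {"host": "10.0.0.5", "port": 443})
board.run()
```

Neither agent calls the other directly: each knows only the topics it cares about. That is the property the paragraph above attributes to the design, since removing or replacing one agent does not require changing the rest.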

The implications are profound. For defenders, this means traditional SIEMs and SOAR platforms are already obsolete. These systems rely on signature-based detection and linear incident response—both of which agentic AI can bypass by design. For attackers, it means the barrier to entry for sophisticated cyber operations has plummeted. As one GitHub repository for an open-source agentic AI framework (Agentic-Security/Blackboard) notes: “You no longer need a team of elite hackers. You just need a laptop and a credit card.”

The Ecosystem War: How Microsoft and HPE Are Weaponizing AI Security

While Praetorian and Carnegie Mellon push the boundaries of offensive AI, Big Tech is quietly integrating these principles into its own security stacks. Microsoft’s Principal Security Engineer role for AI and HPE’s Distinguished Technologist for HPC & AI Security are not just job postings; they’re battle plans.

Microsoft’s approach is particularly revealing. The company is embedding agentic AI into its Copilot for Security platform, turning the tables on attackers. Instead of waiting for breaches, Copilot’s AI agents proactively hunt for anomalies, using the same TGN and ARL techniques as Praetorian’s Helix—but for defense. The twist? Microsoft is open-sourcing parts of this architecture, including its Adversarial Training Dataset (ATD), a collection of 1.2 million simulated attack scenarios. This is a direct challenge to the proprietary model of companies like Praetorian, and it’s forcing a reckoning in the security industry: Can open-source AI security keep pace with closed-source offensive tools?

HPE, meanwhile, is taking a different tack. Its AI Security Fabric, announced in February 2026, integrates agentic AI into its high-performance computing (HPC) infrastructure. The goal? To protect supercomputers and data centers from AI-driven attacks. This is no longer theoretical. In a white paper, HPE revealed that 68% of its HPC customers had experienced AI-powered attacks in 2025, up from 12% in 2023. The Fabric’s centerpiece is a Neural Intrusion Detection System (NIDS), which uses a 70B-parameter LLM to analyze network traffic in real time, flagging anomalies with 98.7% accuracy—compared to 82% for traditional NIDS.

| Metric | Traditional NIDS | HPE AI Security Fabric (NIDS) |
|---|---|---|
| False Positive Rate | 12.3% | 1.8% |
| Detection Latency | 450ms | 89ms |
| Zero-Day Detection | 34% | 89% |
| Resource Overhead | High (CPU-bound) | Low (NPU-optimized) |
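The rows of the table are easier to compare as improvement factors. The short sketch below derives them from the quoted values alone; the dictionary layout and the `improvement` helper are illustrative assumptions, and the qualitative "Resource Overhead" row is left out because it has no numbers to compare.

```python
# (traditional, fabric, lower_is_better), values copied from the table above
metrics = {
    "False Positive Rate (%)": (12.3, 1.8, True),
    "Detection Latency (ms)": (450, 89, True),
    "Zero-Day Detection (%)": (34, 89, False),
}


def improvement(traditional: float, fabric: float, lower_is_better: bool) -> float:
    """Return how many times better the fabric figure is than the traditional one."""
    return traditional / fabric if lower_is_better else fabric / traditional


factors = {name: round(improvement(*vals), 1) for name, vals in metrics.items()}

for name, factor in factors.items():
    print(f"{name}: {factor}x better")
```

On these figures the largest claimed gain is in false positives (close to sevenfold), followed by latency (about fivefold) and zero-day detection (under threefold).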

The Dark Side of the Helix: Ethical Landmines and the “AI Arms Race”

For all their promise, these systems are walking a razor’s edge. The Attack Helix and agentic AI frameworks are dual-use technologies—tools that can defend critical infrastructure or cripple it. The ethical implications are staggering.

Consider the case of Stuxnet 2.0, a 2025 cyberattack on Iranian nuclear facilities that IEEE researchers attributed to an agentic AI system. Unlike the original Stuxnet, which relied on human operators, Stuxnet 2.0 was fully autonomous. It adapted to countermeasures in real time, using a combination of TGNs and ARL to evade detection. The attack was so sophisticated that it learned to mimic normal operational behavior, making it nearly impossible to attribute. When asked about the attack, a senior U.S. cybersecurity official told me: “We’re not fighting hackers anymore. We’re fighting evolution.”

The regulatory response has been slow. The EU’s AI Act, updated in 2025, classifies offensive AI as a “high-risk” technology but stops short of outright bans. In the U.S., the National AI Initiative Act requires companies to disclose AI-driven cyber operations to the Department of Homeland Security—but enforcement is spotty. Meanwhile, nation-states are moving faster than regulators. A 2026 Chatham House report found that 14 countries, including China, Russia, and Israel, have active offensive AI programs. The report’s conclusion is chilling: “The AI arms race is not coming. It’s already here.”

The 30-Second Verdict: What This Means for You

- For CISOs: Your 2023 playbook is dead. Agentic AI doesn’t just exploit vulnerabilities—it creates them by manipulating human behavior (e.g., crafting hyper-personalized phishing emails) and system behavior (e.g., poisoning training data). Invest in AI-native security tools or risk irrelevance.

- For Developers: The days of “security through obscurity” are over. Open-source agentic AI frameworks like Blackboard mean that even script kiddies can launch sophisticated attacks. Harden your code, audit your dependencies, and assume you’re already compromised.

- For Policymakers: The AI Act and similar regulations are playing catch-up. Offensive AI is not a future threat—it’s a current one. The time to act is now, before the next Stuxnet 2.0 hits.

- For the Rest of Us: The dandelions of cybersecurity—these “ugly,” persistent, and adaptive systems—are here to stay. Like their botanical counterparts, they’re not going away. The question is whether we’ll learn to coexist with them or keep trying to eradicate them, only to find they’ve already taken root.

Final Thought: The Paardebloem Paradox

On April 26, 2026, as botanist Karst Meijer pleads for the preservation of dandelions, the tech world is grappling with its own paardebloem paradox. Offensive AI architectures like the Attack Helix and agentic AI systems are the dandelions of cybersecurity: reviled by some, indispensable to others, and impossible to ignore. They thrive in the cracks of our digital infrastructure, exploiting the same complacency that allows weeds to take over a garden.

The lesson for technologists is clear. In nature, dandelions are a sign of resilience. In cybersecurity, they’re a sign of the future. The choice is ours: Do we pull them out, only to witness them return stronger? Or do we learn to harness their power—before someone else does?